Very few things happen in the IT vendor community without orchestration. So it is no coincidence that Intel, which rules computing in the servers and storage arrays of the world and wants to take a big bite out of networking, is launching a considerably expanded range of Xeon D processors ahead of the ARM TechCon conference that is getting underway in its hometown of Santa Clara this week.

The Xeon D processors are the first of Intel’s server-class chips to be based on the “Broadwell” cores that have been used in its laptop and desktop processors for more than a year and, like them, are manufactured using its most advanced 14 nanometer processes. They set the stage for the Xeon E5 v4 Broadwell processors, which are widely expected in the first quarter of next year from Intel for its workhorse two-socket server platforms.

The updated Xeon D chips, which are aimed at storage and network function virtualization workloads, augment the initial two processors that the company debuted back at the Open Compute Summit in March. Those were aimed mostly at single-socket microservers and at the hyperscalers – Google, Microsoft, Amazon, Facebook, Baidu, Tencent, and Alibaba – that might be tempted to move portions of their workloads to 64-bit ARM processors as chips from AMD, Applied Micro, and Cavium Networks became available in production quantities this year.

In years gone by, blunting the ARM assault was a task given to the server-class Atom processors, but as ARM server chips have gotten beefier, Intel has changed tactics with a single-socket Xeon chip that is geared down, in terms of its price and performance, and implemented in system on chip packaging like many of the ARM chips and unlike the Xeon E3. (Well, technically speaking, the Xeon D is a system on package, since the southbridge chipset that provides support for legacy I/O devices is not etched onto the die but is rather a separate chip hooked to the Xeon D processor and integrated in the same package.) The distinction is not just a matter of semantics, but the bigger point is that the Xeon D has more oomph than the Atom C series. Practically speaking, Intel is providing the kind of integrated functionality that hyperscalers as well as makers of storage and networking devices want in some of their processors – and, by the way, many hyperscalers make their own storage and networking devices and will be keen on cheap, low-power Xeon compute for them, too. It is again no coincidence that these are precisely the avenues of attack that many of the ARM chip suppliers believe they can take as they try to break their way into the datacenters of the world.

Thus far, thanks to slower than expected rollouts of 64-bit, server-class ARM processors and of the commercial-grade operating systems that enterprises typically deploy, Intel has not had much trouble keeping ARM out of the datacenter. That does not mean this will always be the case, particularly when a price war starts among ARM chip makers, which we think is not just possible, but inevitable. (Whether or not this is desirable for anybody but the customers is a debatable point.) As we have pointed out many times before here at The Next Platform, the hyperscalers control their entire software stacks, including rolling their own Linuxes, and have the ability to switch processing architectures on a dime. They buy in huge volumes, too. So winning just one of them for even a piece of their infrastructure is a very big win. Intel has done everything in its engineering and marketing power to prevent this from happening, and the Xeon is a big part of its offense and defense against ARM.

While the new Xeon D processors are, technically speaking, aimed at storage and networking jobs, we think hyperscalers, telecommunications companies, cloud builders, and other service providers might be tempted to deploy these new chips inside of general-purpose systems, too. Intel is splitting the Xeon D line into three parts – one strictly for serving, one for storage, and one for networking – but it would not be surprising at all to see a hyperscaler want a Xeon D with all of these functions activated on a single chip, which could then be deployed massively across its infrastructure and given different jobs over time as the need arises. IT vendors who want to use Xeon D chips in various kinds of appliances will pick one variant or another, depending on what they are building, and be fine with the SKUs as Intel delivers them; then again, they may want to turn on all of the functions to, for example, build a server storage appliance with zippy network functions. That is what we would do, provided Intel did not charge too much for a Xeon D with all of the bells and whistles.

Intel has not said very much about its successor to the “Avoton” Atom C series server chips, code-named “Denverton,” which was expected to be launched sometime after 2014 using the “Goldmont” core designs aimed at consumer devices. (Goldmont is the successor to the “Silvermont” Atom cores, which are only interesting in that they were used in the Avoton Atom server chips and also formed the basis of the core that is used in the impending “Knights Landing” Xeon Phi parallel processor from Intel. That Xeon Phi variant of the Silvermont is so different from the one used in the Atoms that it probably should be considered distinct.) The thing is, between the Xeon D and the Atom server-class chips, Intel will pepper the datacenter skies with server-class chips, hoping to shoot down the dreams of ARM suppliers with a barrage of silicon shrapnel.

Doubling Up The D Cores In 2016

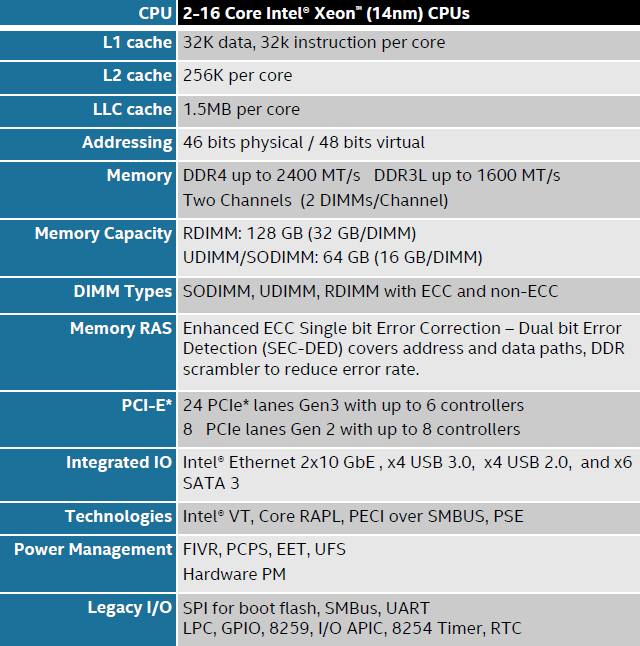

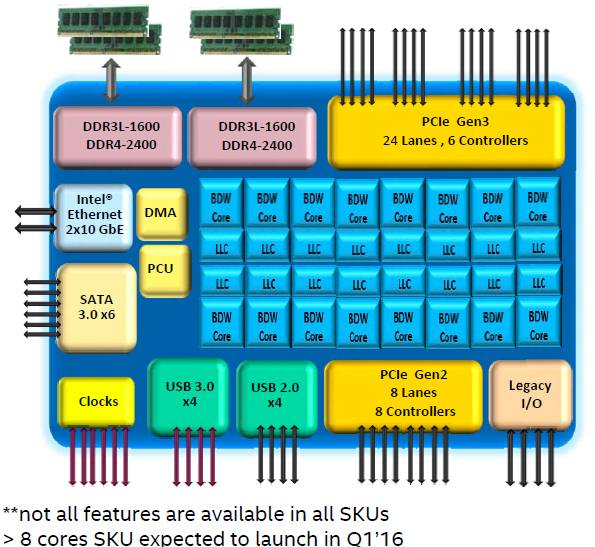

One interesting bit that was divulged as the Xeon D line was expanded is that this processor family was architected to span from two to sixteen cores, not just the eight cores of the two Xeon Ds announced back in March. The Xeon D processors have 32 KB of L1 data cache and 32 KB of L1 instruction cache per Broadwell core; each core has 256 KB of L2 cache as well, and an L3 cache that spans all of the cores is segmented into 1.5 MB chunks, with one per core for a maximum of 24 MB for a fully loaded Xeon D chip. By any measure, a sixteen core Xeon D with 24 MB of cache is going to be a pretty powerful processor, and Intel will probably have a tricky time balancing it against the Xeon E3 v4 processors, launched in June this year, and the future Xeon E5 v4 chips, also based on Broadwell cores. It is reasonable to wonder how Intel can afford to maintain so many different chip development efforts, but its gross margins clearly show that it can. That is convenient, because to compete with suppliers of alternative CPU, GPU, FPGA, and DSP compute engines, it must.
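To make the cache arithmetic concrete, here is a minimal Python sketch that totals the per-core figures quoted above for a range of core counts. It assumes, for illustration, that every enabled core brings its full 1.5 MB L3 slice; an actual SKU could ship with some cache disabled, so treat the results as a ceiling rather than a spec sheet.

```python
# Per-core cache sizes quoted for the Broadwell-based Xeon D.
L1D_KB = 32          # L1 data cache per core
L1I_KB = 32          # L1 instruction cache per core
L2_KB = 256          # private L2 per core
L3_SLICE_MB = 1.5    # one shared-L3 slice per core (assumed fully enabled)


def cache_totals(cores: int) -> dict:
    """Return total L1 and L2 (in KB) and L3 (in MB) for a chip with `cores` cores."""
    return {
        "cores": cores,
        "l1_kb": cores * (L1D_KB + L1I_KB),
        "l2_kb": cores * L2_KB,
        "l3_mb": cores * L3_SLICE_MB,
    }


if __name__ == "__main__":
    # Core counts spanning the two-to-sixteen-core range the family was designed for.
    for n in (2, 4, 6, 8, 12, 16):
        t = cache_totals(n)
        print(f"{n:2d} cores: L1 {t['l1_kb']:4d} KB, L2 {t['l2_kb']:5d} KB, L3 {t['l3_mb']:4.1f} MB")
```

The sixteen-core case reproduces the 24 MB L3 maximum cited above, and the eight-core case gives the 12 MB of the original March parts under the same assumption.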

The Xeon D chip has 46-bit physical addressing and 48-bit virtual addressing, like the other members of the Xeon family, which means it can in theory address up to 64 TB of main memory. But the current single-socket Xeon D processors top out at 128 GB of memory. The processors can support low-voltage DDR3 memory running at up to 1.6 GHz and DDR4 memory (which runs at a lower voltage than regular DDR3 memory by default, and therefore chews less juice) running at up to 2.4 GHz. The Xeon D processor has two on-die memory controllers and supports two memory slots per channel for a total of four memory slots, the same limitation as on the Xeon E3 chips that most resemble the Xeon Ds. Using 32 GB registered DIMMs, the main memory of the Xeon D tops out at 128 GB; using UDIMM or SODIMM form factors, the capacity is cut back to 64 GB max.
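The addressing and capacity limits above fall out of simple arithmetic, sketched below in Python. The sketch assumes the four slots are arranged as two channels of two slots each, as the paragraph implies, and it infers a 16 GB per-module ceiling for UDIMMs and SODIMMs from the 64 GB cap; neither assumption comes from an Intel data sheet. The "64 TB" figure is in binary units, that is, 2^46 bytes.

```python
PHYS_ADDR_BITS = 46   # physical address width quoted for the Xeon D
VIRT_ADDR_BITS = 48   # virtual address width quoted for the Xeon D
TIB = 2**40           # one binary terabyte (TiB) in bytes

# Assumed memory topology: two channels, two slots per channel (four slots total).
CHANNELS = 2
SLOTS_PER_CHANNEL = 2


def addressable_tib(bits: int) -> int:
    """Bytes reachable with `bits` address bits, expressed in TiB."""
    return 2**bits // TIB


def max_memory_gb(dimm_gb: int) -> int:
    """Total capacity with every slot populated by a `dimm_gb` module."""
    return CHANNELS * SLOTS_PER_CHANNEL * dimm_gb


if __name__ == "__main__":
    print(addressable_tib(PHYS_ADDR_BITS))  # 64
    print(addressable_tib(VIRT_ADDR_BITS))  # 256
    print(max_memory_gb(32))                # 128, with 32 GB registered DIMMs
    print(max_memory_gb(16))                # 64, with the inferred 16 GB UDIMM/SODIMM limit
```

The gap between the 64 TB the address bits allow and the 128 GB the four slots allow shows that the slot count, not the addressing, is what caps Xeon D memory.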

Because the Xeon D is based on true Broadwell cores, it supports Intel’s HyperThreading (HT) implementation of simultaneous multithreading, which makes each core look like two virtual cores as far as operating systems are concerned and which can boost throughput a bit for workloads that like threads. The Xeon D also supports Turbo Boost, which allows clock speeds to crank up above their base levels when the workloads running on a machine are not stressing all of the components on the chip and generating a lot of heat. The chips also sport Intel’s VT set of hardware-assisted virtualization circuits. Each Xeon D also has two integrated Ethernet ports running at 10 Gb/sec and a block of six PCI-Express 3.0 controllers with up to 24 lanes. The southbridge, a separate chip integrated onto the Xeon D package, provides a block of PCI-Express 2.0 controllers with up to eight lanes of traffic plus six SATA 3.0 ports running at 6 Gb/sec and a bunch of USB ports.

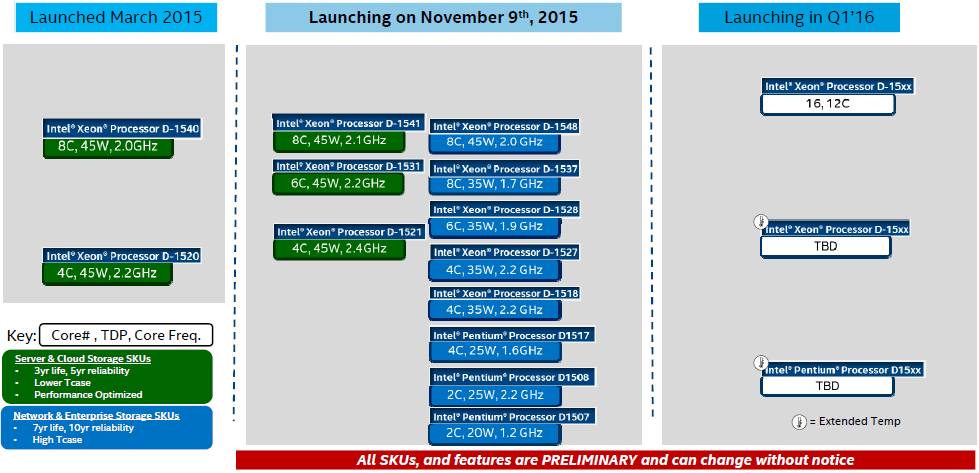

With the expanded Xeon D lineup launched today, Intel is adding three new SKUs aimed at servers and cloud storage workloads, two of them with slightly higher clock speeds than the eight-core Xeon D-1540 and four-core D-1520 chips that came out in March. There is also a new six-core Xeon D-1531 chip that slides in between these two. The workloads that Intel is targeting with the Xeon D chips for servers are dedicated hosting (where customers want to have a whole machine to themselves for the sake of hardware isolation, but have relatively modest processing needs), generic web hosting and web application serving (which is what Facebook plans to run on its “Yosemite” microservers based on the Xeon D chips it pushed Intel to create) and web caching programs such as Memcached (which Facebook also uses to accelerate its eponymous social network service).

As Intel has promised and as it has done with the server-class Atom chips, the Xeon D lineup now includes special variants that are aimed at network and enterprise storage workloads, and these chips have special functions enabled that help accelerate algorithms commonly used for this work. The targets for these versions of the chips are warm cloud storage, entry NAS arrays, and midrange NAS and SAN arrays where the compute needs are modest, as well as edge routing, edge security, wireless base stations, and wireless access points on the network front. We also think the Xeon D could see some play in homegrown cold storage at the hyperscalers and even in scale-out cloud storage (such as Ceph and Cinder) and NoSQL and NewSQL data stores where the compute requirements can be modest. ARM server chip makers are certainly targeting these workloads for the same reasons. Given this, it is interesting to contemplate a Xeon D that would have a dozen SATA ports instead of six, allowing for a disk-heavy storage server. But there may not be enough bandwidth between that southbridge and the Xeon D chip.

The point is that by having different SKUs across these four workloads – servers, cloud storage, enterprise storage, and networking – Intel can offer a wide variety of choices and price points, much as Cavium has said it will do with its ThunderX line of ARM server chips. We suspect that other ARM server chip makers will follow suit with their devices at some point.

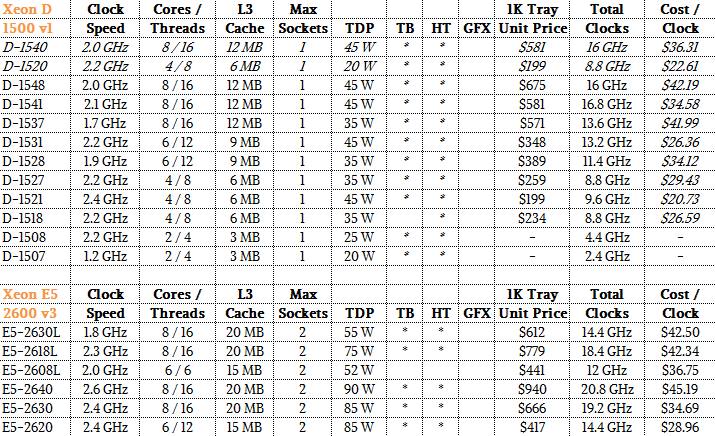

As you can see in the SKU chart above, Intel is offering a wider selection of core counts, wattages, and clock speeds in the line of Xeon D chips that are aimed at networking devices and enterprise storage arrays. Some of the chips shown in the chart above are not in all of the Intel materials describing the fleshed-out Xeon D line – the two-core D-1508 and D-1507, to be precise. A two-core chip sounds so last decade, but not every workload needs a behemoth with a dozen or two cores.

The roadmap above shows that Intel is planning to deliver future Xeon D chips with 12 or 16 cores in the first quarter of 2016, and that it is also working on variants that can function in an extended temperature range – which means both hotter and colder than its standard Xeon D parts can tolerate. Part of the reason Intel is offering such chips is that it wants them to be employed in cellular network base stations and wireless access points, where network providers are keen on putting some compute closer to the client devices to improve the service levels they can offer on their networks. (Compute is always pushing out to the edges even as we try to consolidate it back in the datacenter.)

What is interesting to contemplate is that companies might be able to get their hands on Xeon D chips that can run a little hotter than normal and therefore lower their datacenter power consumption. Up until now, you had to engage with Intel on a special bid basis to get such custom SKUs on any of the Xeon line. It stands to reason that Intel might formalize this and do the same for the future Broadwell Xeon E5 processors coming early next year, too.

Where The Rubber Hits The Road

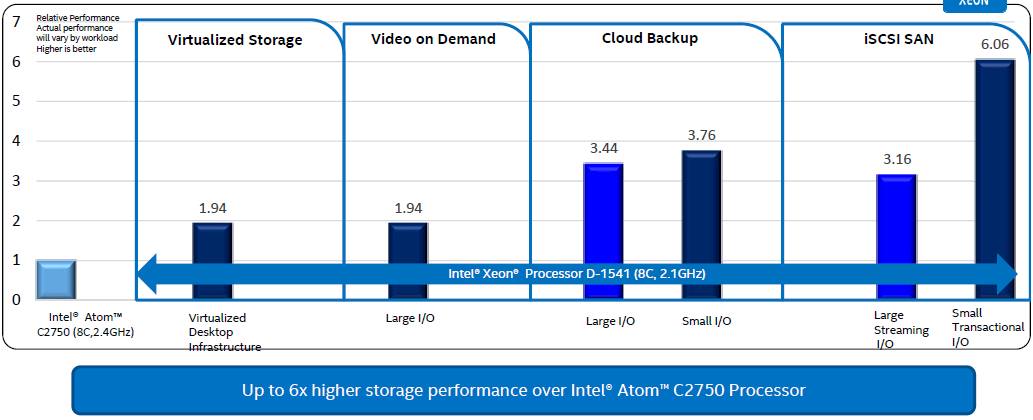

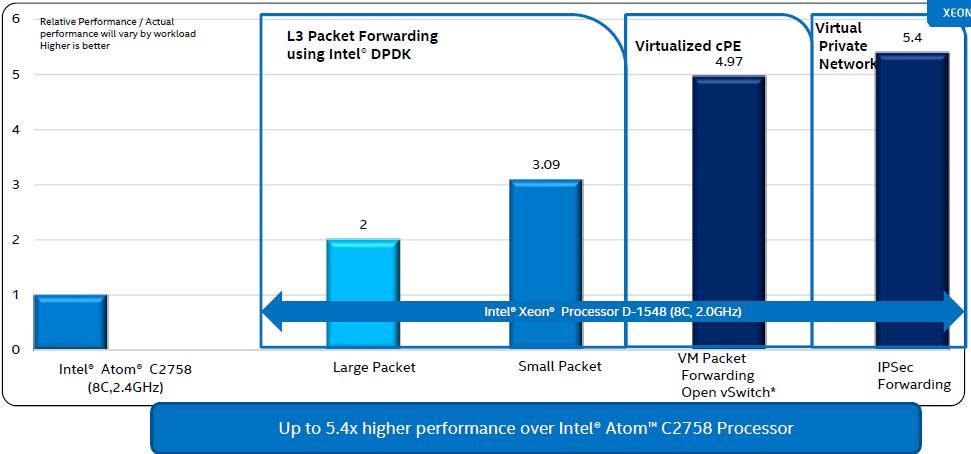

While the competitive target that the Xeon D is aiming at is without question ARM server chips and their network and storage variants, Intel’s own comparisons (at least the ones that it is publicizing as part of the expanded Xeon D line rollout) pit the Xeon D against the Atom C series based on the Silvermont cores.

Intel has not published a lot of performance specs on the Xeon D chips running generic server benchmarks, but reiterates that an eight-core Xeon D test chip from last March running at 2 GHz was able to deliver about 3.7X the performance of an eight-core Atom C2750 running at 2.4 GHz on dynamic web serving, and delivered 1.8X better performance per watt.
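Those two ratios also say something about power draw, since performance per watt is simply performance divided by power. Assuming both figures come from the same test runs with the same power measurement (Intel does not say), the implied power ratio can be backed out as in this small Python sketch; the function name is purely illustrative.

```python
def implied_power_ratio(perf_ratio: float, perf_per_watt_ratio: float) -> float:
    """Back out the power ratio from a performance ratio and a perf-per-watt ratio.

    perf/W ratio = perf ratio / power ratio, so power ratio = perf ratio / (perf/W ratio).
    Only meaningful if both ratios were measured on the same runs.
    """
    return perf_ratio / perf_per_watt_ratio


if __name__ == "__main__":
    # 3.7X dynamic web serving performance, 1.8X performance per watt (Xeon D test chip vs. Atom C2750).
    ratio = implied_power_ratio(3.7, 1.8)
    print(f"Implied power draw of the Xeon D relative to the Atom C2750: {ratio:.2f}X")
```

Under that assumption, the Xeon D test chip burned roughly twice the power of the Atom C2750 to deliver 3.7X the throughput.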

On a variety of storage benchmarks, here is how an eight-core Xeon D-1541 running at 2.1 GHz stacked up against an eight-core Atom C2750 running at 2.4 GHz:

Both of those processors are the top bin parts in their lines, at least until the Denverton Atoms (widely expected early next year with up to 16 cores) come out. Our guess is that this will happen at about the same time as the 16-core Xeon Ds arrive, and that the gaps will stay about the same. The Xeon E3-1265L v3 chip, with four cores running at 2.5 GHz, was also tested on storage workloads, and in most cases it offered performance that was better than the Atom C2750 but worse than the Xeon D-1541.

With the Intelligent Storage Acceleration Library (ISA-L), a software library that Intel tunes to exploit the instructions in its Broadwell Xeon chips, the Xeon D has some big advantages over the Atoms, too. The Xeon D-1541 bests the C2750 by a factor of 2.4X on data compression, by 2.4X running erasure codes that are commonly used on object storage, by 6.4X on cryptographic hashing techniques, by 8.9X on encryption algorithms, and by 12.5X on software RAID implementations.

Importantly, the Storage Performance Development Kit (SPDK) that Intel has created to help storage makers better run their wares on Atom and Xeon chips includes a user-space Linux driver for NVM-Express devices, which link flash drives more directly to the compute complex. This driver scales across more ports and delivers higher performance than the out-of-the-box NVM-Express driver, which apparently does not scale well. With four NVM-Express devices fired up, the Intel driver is able to deliver about five times the performance – although what metric Intel is measuring here is a bit vague.

On networking jobs, the Xeon D-1548 was pitted against the Atom C2758; both are networking variants of the top-bin eight-core parts, and as the chart above shows, the D-1548 yields significantly better performance on Layer 3 packet forwarding for both small (64 byte) and large (1,518 byte) packet sizes. (This is a job that is commonly done in routers and some switches and increasingly in network appliances of various kinds, linking the internal corporate network with the wide area network outside the firewall.) This particular L3 packet forwarding test was done with Intel’s own Data Plane Development Kit (DPDK), which accelerates various network algorithms. For the IPSec security protocol, the Xeon D-1548 has a 5.4X improvement over its Atom C series equivalent, and on packet forwarding over the Open vSwitch virtual switch commonly embedded in hypervisors to provide switching for virtual machines, the performance boost (again using Intel’s DPDK middleware) is just a hair under 5X.
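Why small packets make for the harder test comes down to arithmetic: at a fixed link speed, shrinking the frame multiplies the number of packets the processor must handle every second. The Python sketch below computes theoretical line rate for one of the Xeon D's 10 Gb/sec ports, assuming standard Ethernet wire overhead of 20 bytes per frame (7-byte preamble, 1-byte start-of-frame delimiter, and 12-byte inter-frame gap) on top of the 64-byte and 1,518-byte frame sizes used in Intel's test. It says nothing about what either chip actually achieved.

```python
# Per-frame wire overhead: 7-byte preamble + 1-byte start-of-frame delimiter + 12-byte inter-frame gap.
WIRE_OVERHEAD_BYTES = 20


def line_rate_pps(frame_bytes: int, link_bps: float) -> float:
    """Theoretical maximum frames per second for a given frame size and link speed."""
    bits_per_frame = (frame_bytes + WIRE_OVERHEAD_BYTES) * 8
    return link_bps / bits_per_frame


TEN_GBE = 10e9  # one 10 Gb/sec Ethernet port

if __name__ == "__main__":
    for size in (64, 1518):
        mpps = line_rate_pps(size, TEN_GBE) / 1e6
        print(f"{size:5d}-byte frames: {mpps:.2f} million packets/sec per 10 Gb/sec port")
```

At 64 bytes that works out to roughly 14.88 million packets per second per port, about 18X the rate at 1,518 bytes, so the per-packet work of an L3 lookup dominates in the small-packet case.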

All of this raises the question of why Intel is continuing to invest in the server-class Atom chips, and we presume the answer has to do with different manufacturing costs and different levels of price/performance.

We also assume that hyperscalers and cloud builders – and perhaps even intrepid large enterprises that spec out their own gear – will be interested in deploying Xeon D iron that is capable of being used for serving, storage, or network function virtualization workloads. In the end, these are all just servers, really, and if the hyperscalers teach the world anything, it is to scale up infrastructure by having as few SKUs as possible and keep the management complexity down to a dull roar.

The obvious question, and one that is a bit harder to answer, is how the Xeon D line compares to the Xeon E3 and Xeon E5 lines – the two processor families that have the highest likelihood of being employed in the datacenter today. (You don’t see a lot of Atoms in the enterprise datacenter.) We have a fairly simplistic comparison below as a starting point, which compares the Broadwell Xeon D v1s to the Haswell Xeon E5 v3s:

This is admittedly a very rudimentary comparison, ignoring the instructions per clock (IPC) and other architectural differences between the various entry Xeon processors. But it gives you a ballpark reckoning of the basic features of these chips and where to start making your own comparisons and doing your own tests. In the end, it always comes down to testing your own workloads before committing to any hardware platform.

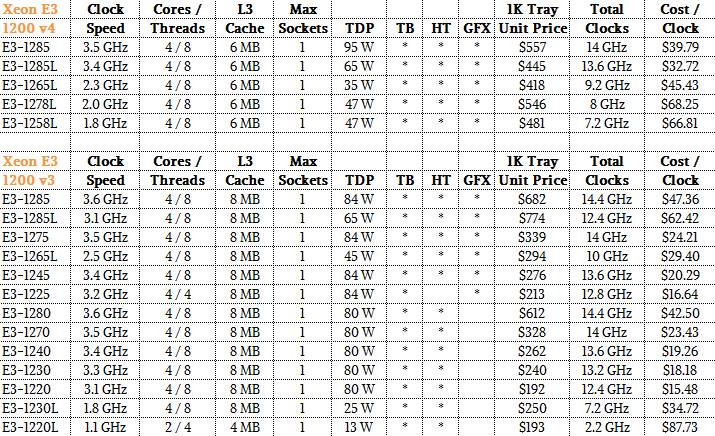

And just for fun and further comparison, here is a chart with the two generations of Xeon E3 chips:

Let the comparisons begin.

One last thing. In addition to the Xeon D processors, Intel is also unveiling its “Red Rock Canyon” multihost server adapters, which will compete against the multihost ConnectX-4 adapters that Mellanox Technologies has been shipping since the Open Compute Summit back in March. The multihost ConnectX-4 adapters allow up to four independent server nodes in an enclosure to share a single, virtualized adapter card that divvies up the bandwidth between the nodes and across the switch port it plugs into. Intel’s FM10000 multihost adapters, as the Red Rock Canyon adapters are named in the Intel product catalog, have two 100 Gb/sec Ethernet ports, can aggregate the network interfaces for up to eight server nodes, and can process up to 960 million packets per second.
