If money were no object, and the laws of physics were a lot more yielding, all of the data in the world might be stored on a single medium and system architecture would be a lot simpler. But money always matters, and so do the performance characteristics of various kinds of media, which is why we see the storage hierarchy in systems and in the datacenter getting deeper and deeper.

In many organizations, it is not as simple as storing some data on disk or hybrid disk-flash arrays and then pushing it out to tape when it gets cold. Data starts hot, sometimes cools slowly, and gets hot again before going more or less permanently cold. To make matters more complex, companies want a mix of file and object access, formats that are driven by the applications because of their own needs for scale and performance. This dichotomy of storage needs is something that Spectra Logic, known best for its massively scaled tape libraries, has been focused on for years, and which has turned it into a disk array and object storage gateway manufacturer, too.

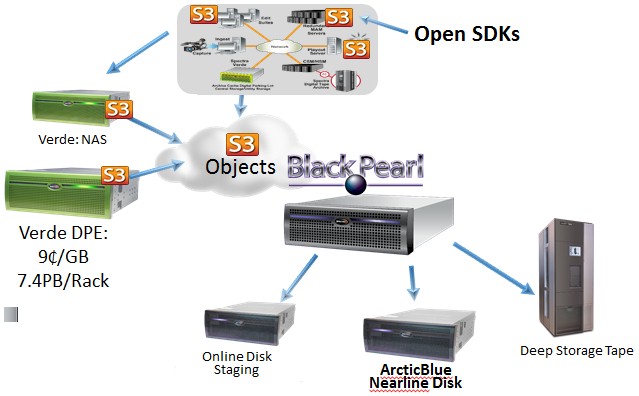

At the Spectra Summit storage conference this week in Boulder, Colorado, the company rolled out a new nearline storage array, called ArcticBlue, that sits behind its BlackPearl S3 object storage gateway and alongside its T series of tape libraries and Verde NAS arrays. The BlackPearl gateway was itself launched two years ago and acts as a fast cache: it takes in streams of objects, compatible with Amazon Web Services’ S3 format, that arrive randomly at the server appliance and organizes them so they can be written out sequentially, and reasonably quickly, to LTO tape drives in a library. BlackPearl is not just a write cache, but a read cache, too, staging sequentially stored data pulled off tapes so it can be accessed in a more random fashion than is possible at respectable speed by tape drives.

Don’t get the wrong idea. Spectra Logic is not new to disk arrays, and the Verde NAS arrays that it also launched back in 2013 were its fourth generation of machines. But the company is clever about how it engineers its arrays and how it integrates them as a whole into a hierarchical storage system that can adapt to different workflows and data storage needs.

The new Verde DPE NAS arrays – short for Digital Preservation Enterprise – and the ArcticBlue nearline arrays are the company’s fifth generation of disk-based products. They are not intended to be tier one storage but rather fast access NAS for replicated data from production systems that has not yet been offloaded to cold storage. (Major League Baseball, for instance, currently uses NAS to keep two years’ worth of games close to editors for quick retrieval, but pushes colder video data out to tape.) Both Verde DPE and ArcticBlue are interesting in that they make use of disk drives that employ Shingled Magnetic Recording (SMR) techniques to cram about 25 percent more data onto a disk drive. They also use a customized variant of the Zettabyte File System (ZFS) to organize data and the triple parity RAID Z3 encoding to distribute data across bands of drives inside of the array. In a sense, the Verde DPE and ArcticBlue arrays are implementations of the same basic hardware with slightly different software stacks and positions in the storage workflow.

Customers that have the dough can use ArcticBlue nearline arrays instead of tape, of course, for their archiving needs, but Spectra Logic expects most customers will want to have a mix of tape libraries and nearline storage for their archiving, with access managed through the BlackPearl gateway. The reason is simple: storing data on tape costs about 2 cents to 2.5 cents per GB compared to around 10 cents per GB for the ArcticBlue nearline array. If you have lots of data and a budget that is not infinite, then a mix of nearline disk and tape libraries is the best use of budget for a mix of warm and cold data.
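To make that trade-off concrete, here is a minimal sketch of the blended media cost for an archive split between nearline disk and tape. It uses only the per-GB figures quoted above; the midpoint of the tape range, the function names, and the 5 PB example archive are assumptions for illustration, not Spectra Logic pricing tools.

```python
# Per-GB media costs quoted in the article. Taking the midpoint of the
# 2 to 2.5 cent tape range is an assumption made for this sketch.
TAPE_COST_PER_GB = 0.0225
NEARLINE_COST_PER_GB = 0.10  # ArcticBlue, roughly 10 cents per GB


def blended_cost_per_gb(nearline_fraction: float) -> float:
    """Cost per GB when `nearline_fraction` of the archive sits on disk."""
    if not 0.0 <= nearline_fraction <= 1.0:
        raise ValueError("nearline_fraction must be between 0 and 1")
    tape_fraction = 1.0 - nearline_fraction
    return (nearline_fraction * NEARLINE_COST_PER_GB
            + tape_fraction * TAPE_COST_PER_GB)


def archive_cost(total_pb: float, nearline_fraction: float) -> float:
    """Media cost in dollars for `total_pb` decimal petabytes of data."""
    total_gb = total_pb * 1_000_000
    return total_gb * blended_cost_per_gb(nearline_fraction)


if __name__ == "__main__":
    # Hypothetical 5 PB archive with different disk/tape splits.
    for fraction in (0.0, 0.2, 0.5, 1.0):
        cost = archive_cost(5.0, fraction)
        print(f"{fraction:.0%} nearline: ${cost:,.0f}")
```

The sketch ignores drives, libraries, power, and media refresh cycles, so it only shows how the per-GB gap scales with the amount of warm data kept on disk.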

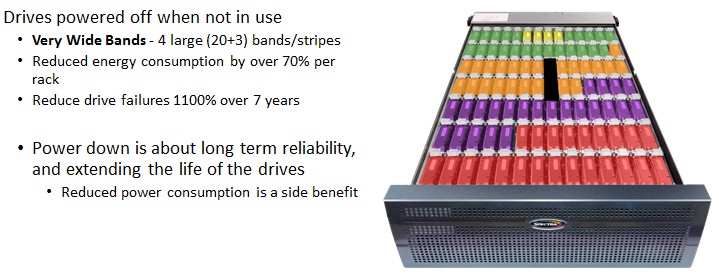

The big difference between the Verde DPE NAS arrays that came out in August and the ArcticBlue nearline storage arrays that debuted this week is that the latter uses a mix of powering down the drives and read/write controls to extend the life of a disk drive from three years to seven years. The idea, explains David Feller, senior director of product management at Spectra Logic, is to create a disk array that has some of the longevity and low cost of tape. Feller is not revealing all the secrets of the ArcticBlue system and how it increases the longevity of the drives, but says that the company is working with disk manufacturers to ensure that its seven-year guarantee is valid. Even a seven-year guarantee on a disk drive is nothing compared to the 25 to 35 years that an LTO tape cartridge has, mind you, but this is still more than twice as long as the three-year lifespan of an average disk array.

Taking Inspiration From Copan, Facebook, And Microsoft

“We can offer incredibly low power consumption, and incredible density,” said Feller, and he referenced Microsoft’s “Pelican” storage array as an example of the dense storage that cloud providers are building themselves. Microsoft’s Pelican cold storage is comprised of storage servers and associated enclosures that cram 1,152 drives and multiple controllers into a 52U rack, delivering 5 PB of capacity and 1 GB/sec of peak sustained read rates per PB out of the rack.

“This is basically at the same density as a Pelican system,” Feller said referring to ArcticBlue. “This provides that kind of capability to the general datacenter. You don’t have to be a Microsoft or a Facebook and invest billions of dollars in your IT infrastructure to get to this kind of density.”

Spectra Logic is not the first vendor to offer storage that spins down disks to increase their longevity, making nearline storage more like tape and less like a production disk array. Facebook uses a mix of erasure coding across striped disks and power down in its homegrown arrays, and Microsoft’s Pelican storage only allows for 8 percent of the drives in the array to be powered up at any time. Copan Systems, a pioneer in Massive Array of Idle Disks (MAID) systems until it went bust and its assets were acquired by SGI in 2010, built systems that had some restrictions that limited their appeal. The Copan arrays, like those from Spectra Logic, put data into different bands, or groups of disk drives, within an array. But how they use the bands is different.

“Copan was a great company, just down the road from here, but one of the big drawbacks was the assumption that the primary motivation for adopting MAID for datacenters was power consumption,” Feller explained. “And because of that, customers are never going to bring all of the drives alive at the same time. So they guaranteed they would never get above a certain power consumption, but you have to trickle your data back and bring drives up in different sequences to get all the data back.”

With the ArcticBlue nearline array, customers can fire up all of the drives in the enclosure all at once, and keep them on forever, and everything will work fine. But if data is at rest, the bands can go to sleep and not only save power, but extend the life of the disk drives by a factor of 2.3X. That means that companies using ArcticBlue arrays can basically skip every other array upgrade they would normally do for disk-based nearline storage, and get a leap year of extra time if they want to push it to the hilt.

To build an ArcticBlue system, customers buy a BlackPearl S3 gateway, which has SAS drives for caching objects and SSD flash drives holding the metadata database that describes where all of the data is stored on nearline disk or tape that is sitting behind the BlackPearl. The ArcticBlue chassis hangs off this, and has 96 disk drives in total. Spectra Logic is using 3.5-inch, enterprise SAS drives with SMR recording and with 8 TB capacity each.

The SMR technique partially overwrites data on tracks, much like shingles on a roof overlap, which allows for 25 percent more data to get onto the drive. But there are some funny kinks to SMR. These drives have to move overlaying tracks to lay down new data, and they like to write data in large blocks in a sequential fashion – just like a tape drive pushing data to a tape cartridge, which is something Spectra Logic knows a thing or two about. So the BlackPearl gateway can organize data and blast it out to ArcticBlue nearline storage as it has already been doing for T series tape libraries and make the best use of SMR drives.

As for banding, a base 4U chassis has 48 drives (half full) and two bands for a total of 384 TB of raw capacity and 320 TB of usable capacity after ZFS Z3 is done with it. The bands are there so collections of 24 drives (20 drives for data, three for parity, plus one hot spare for failure) can be powered up and down as a unit as data is requested and delivered. Add another 48 drives to the ArcticBlue chassis, and you have four bands. The ArcticBlue system maxes out at eight enclosures with 32 bands delivering 6.14 PB of raw and 5.12 PB of usable capacity – all for about 10 cents per GB. That’s about four to five times as expensive as tape, but considerably less expensive than NAS arrays – even those from Spectra Logic. (To be precise, a loaded ArcticBlue chassis has a list price of $76,800.) The ArcticBlue array has data compression on by default and built in, but many media types are not amenable to compression, so Spectra Logic doesn’t count that in its money math. If your data does compress, good for you.
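The banding arithmetic can be checked with a short sketch. The drive size, band layout, and list price come from the figures above; the function and constant names are made up for illustration, and the per-usable-GB price is simply derived from those numbers rather than quoted by Spectra Logic.

```python
DRIVE_TB = 8                 # 8 TB SMR SAS drives
DRIVES_PER_BAND = 24         # 20 data + 3 parity + 1 hot spare
DATA_DRIVES_PER_BAND = 20
BANDS_PER_FULL_CHASSIS = 4   # 96 drives in a loaded chassis
MAX_CHASSIS = 8
LIST_PRICE_FULL_CHASSIS = 76_800  # dollars


def raw_tb(bands: int) -> int:
    """Raw capacity: every drive in every band counts."""
    return bands * DRIVES_PER_BAND * DRIVE_TB


def usable_tb(bands: int) -> int:
    """Usable capacity: only the data drives in each band count."""
    return bands * DATA_DRIVES_PER_BAND * DRIVE_TB


def price_per_gb(price: float, capacity_tb: int) -> float:
    """Dollars per decimal GB for a given capacity in TB."""
    return price / (capacity_tb * 1000)


if __name__ == "__main__":
    max_bands = MAX_CHASSIS * BANDS_PER_FULL_CHASSIS
    print("base chassis:", raw_tb(2), "TB raw,", usable_tb(2), "TB usable")
    print("max system:  ", raw_tb(max_bands), "TB raw,",
          usable_tb(max_bands), "TB usable")
    full = BANDS_PER_FULL_CHASSIS
    print("per raw GB:    $%.3f"
          % price_per_gb(LIST_PRICE_FULL_CHASSIS, raw_tb(full)))
    print("per usable GB: $%.3f"
          % price_per_gb(LIST_PRICE_FULL_CHASSIS, usable_tb(full)))
```

Run this way, the list price works out to 10 cents per GB against the 768 TB of raw capacity in a loaded chassis, which suggests the headline figure is a raw-capacity number; against the 640 TB of usable capacity it comes to about 12 cents per GB.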

The ArcticBlue system can handle 1 GB/sec of random reads (that is presumably for the entire system, based on one controller node) and can push data out to tape arrays through the BlackPearl gateway at 70 TB per day, too. Microsoft was getting considerably more oomph than that through its Pelican system at 1 GB/sec per PB, but it also was moving data in 1 GB chunks. That is not necessarily an assumption Spectra Logic can make for its customers.
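For a sense of scale, the quoted rates can be put on a common footing with a little arithmetic. This is a rough sketch using decimal units and the figures cited in this article; the helper names are hypothetical, and it assumes the rates are sustained around the clock, which real workloads rarely manage.

```python
SECONDS_PER_DAY = 86_400


def tb_per_day_to_gb_per_sec(tb_per_day: float) -> float:
    """Convert a daily transfer volume in TB to a sustained GB/sec rate."""
    return tb_per_day * 1000 / SECONDS_PER_DAY


def days_to_move(capacity_tb: float, tb_per_day: float) -> float:
    """Days needed to move `capacity_tb` at a steady `tb_per_day`."""
    return capacity_tb / tb_per_day


if __name__ == "__main__":
    tape_rate = tb_per_day_to_gb_per_sec(70)
    print(f"70 TB/day to tape is about {tape_rate:.2f} GB/sec sustained")

    # Draining a maxed-out ArcticBlue system (5.12 PB usable) to tape.
    print(f"5.12 PB at 70 TB/day takes about {days_to_move(5120, 70):.0f} days")

    # Pelican, per the figures above: 1 GB/sec per PB across a 5 PB rack.
    pelican_rate = 1.0 * 5
    print(f"Pelican rack peak read rate: {pelican_rate:.0f} GB/sec")
```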

By the way, the Verde NAS arrays from two years ago scaled up to 1.7 PB and were priced at about 45 cents per GB, and at that time the competition was charging somewhere between $1 and $3 per GB. Today, using 8 TB SAS drives, Spectra Logic can get that price down to 21 cents per GB on the original Verde array, which it now pitches as its “performance NAS.” If you just want bulk NAS storage, which means higher capacity but lower performance, then the Verde DPE is the ticket. (It is essentially the same iron as used in the ArcticBlue nearline storage.) This Verde DPE system has one master node and nine expansion nodes, delivering up to 7.4 PB of raw capacity in a full configuration for around 9 cents per GB. Yes, the Verde DPE machines are cheaper than the nearline storage at the moment, but they are not supported as a target archive device by the BlackPearl gateway and only have a three-year warranty and expected lifetime in the field. (So don’t get any ideas.)

The interesting bit about this setup using Verde NAS, ArcticBlue nearline, and T series tape library storage is that customers do not have to spend money on hierarchical management system software, since the BlackPearl gateway will handle all of that. (Feller says that this software costs on the order of dollars per GB of the capacity it manages.) Using the RESTful interface, data can be PUT into the BlackPearl’s buckets (to use the S3 nomenclature), and then user policies about how long an object needs to persist and where it needs to be replicated will determine how it is moved across the devices through the gateway. When an application or user asks for an object, the GET command goes into the BlackPearl, which finds it on the fastest media that holds it and rips it out to the application or user. If it goes into deep cold storage, it may require someone to retrieve it from an offsite location. That is when the latency is beyond the control of BlackPearl, of course.
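The PUT and GET flow described above can be illustrated with a toy in-memory model. To be clear, this is not the BlackPearl API or its policy engine: the `ToyGateway` class, the tier names, and the `copies` argument are all hypothetical, and the sketch only shows the idea of serving a GET from the fastest tier that still holds a copy.

```python
from dataclasses import dataclass, field

# Tiers ordered fastest to slowest. The names are illustrative only and
# are not identifiers from any Spectra Logic product.
TIERS = ("cache", "nearline", "tape")


@dataclass
class ToyGateway:
    # Maps tier name -> {(bucket, key): object bytes}
    stores: dict = field(default_factory=lambda: {t: {} for t in TIERS})

    def put(self, bucket, key, data, copies=("cache",)):
        """Store an object on every tier named by the (made-up) policy."""
        for tier in copies:
            if tier not in self.stores:
                raise ValueError(f"unknown tier: {tier}")
            self.stores[tier][(bucket, key)] = data

    def evict(self, tier, bucket, key):
        """Drop one tier's copy, as a cache would when data goes cold."""
        self.stores[tier].pop((bucket, key), None)

    def get(self, bucket, key):
        """Return (tier, data) from the fastest tier holding the object."""
        for tier in TIERS:
            data = self.stores[tier].get((bucket, key))
            if data is not None:
                return tier, data
        raise KeyError(f"{bucket}/{key} not found on any tier")


if __name__ == "__main__":
    gateway = ToyGateway()
    gateway.put("games", "2015-10-14.mxf", b"video bytes",
                copies=("cache", "nearline", "tape"))
    print(gateway.get("games", "2015-10-14.mxf")[0])  # served from cache
    gateway.evict("cache", "games", "2015-10-14.mxf")
    print(gateway.get("games", "2015-10-14.mxf")[0])  # falls back to nearline
```

A real gateway would also have to model retrieval latency per tier, including the offsite case where a person has to fetch a cartridge, which this sketch leaves out entirely.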
