The very first disk drive in the world, the IBM 305 RAMAC, was a vertical rotating drum coated with magnetic material. It still used vacuum tubes as its compute elements and weighed about a ton. It was invented in 1956 and commercialized two years later, with IBM selling more than 1,000 of the units, which could store 5 million 8-bit characters and had an average seek time of 600 milliseconds, not bad for a 1,200 RPM device. Fast forward 70 years, and if the forecasts by the analysts at Wikibon prove true, then the venerable disk drive will finally be vanquished from tier one storage in the datacenter.

This will be the last electromechanical device at the heart of computing to go, leaving us finally with the solid state systems that the chip industry and IT consumers alike have been craving for decades.

The reason is simple: Disks may get more capacious with every new and clever materials engineering trick, but they are mechanical devices with very physical limits and they can’t get any faster. And in today’s world, where there is an increasing focus on speed and bandwidth over storage capacity, the days of the disk drive are numbered. It looks like they will last until about 2026, so call it 3,600 days for sure.

The fattest disk drive, HGST’s shiny new 3.5-inch Ultrastar Ha10, weighs in at 10 TB thanks to the fact that its disks rotate at 7,200 RPM in helium gas (less resistance than air but still allowing for disk heads to literally fly over top of data) and use shingled magnetic recording techniques to store data. But the average access time is on the order of 12.7 milliseconds. (That’s a seek time of 8.5 milliseconds on reads plus a latency of 4.2 milliseconds to line the head up with a specific spot on the disk platter.) That’s a 71X improvement in seek time over the 305 RAMAC – arguably not an apples-to-apples comparison, we know, but we don’t have the access time on the unit. The 10 TB disk has a 2,000,000X increase in capacity.

If you want to go for performance with disks, HGST will be happy to sell you an Ultrastar C15K600, which comes in a 2.5-inch form factor, weighs in at a mere 600 GB, but has a seek time of about 2.9 milliseconds and a latency of under 2 milliseconds for an average access time of under 4.9 milliseconds. Depending on the model of the C15K600, you can get sustained transfer rates of between 175 MB/sec and 271 MB/sec, and if you wanted to do some short stroking (only storing data on the outside of the platter where it spins the fastest relative to the disk heads) you could sacrifice capacity for a little more performance. But that is still only a 207X improvement in seek time (without short stroking) over the 305 RAMAC and a 120,000X improvement in capacity. As you can see, balancing capacity and performance in storage is a tricky business. Well, it is not all that tricky. Access times stopped improving a long time ago, even if bandwidth in and out of disks did improve thanks to buffering and improved protocols.
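For those who want to check the arithmetic, here is a minimal Python sketch of the comparison against the 305 RAMAC. It uses only the figures quoted above; treating one RAMAC character as one byte and taking the C15K600 latency as its 2 millisecond upper bound are our assumptions, and the dictionary layout and function name are purely illustrative.

```python
# Figures as quoted in the article. Assumption: one RAMAC character = one byte.
RAMAC_SEEK_MS = 600.0
RAMAC_CAPACITY_BYTES = 5_000_000

drives = {
    "Ultrastar Ha10": {"seek_ms": 8.5, "latency_ms": 4.2, "capacity_bytes": 10e12},
    # Latency is quoted as "under 2 ms"; 2.0 is used here as an upper bound.
    "Ultrastar C15K600": {"seek_ms": 2.9, "latency_ms": 2.0, "capacity_bytes": 600e9},
}

def improvement(drive):
    """Return average access time and improvement factors over the RAMAC."""
    return {
        "access_ms": drive["seek_ms"] + drive["latency_ms"],
        "seek_factor": RAMAC_SEEK_MS / drive["seek_ms"],
        "capacity_factor": drive["capacity_bytes"] / RAMAC_CAPACITY_BYTES,
    }

for name, spec in drives.items():
    result = improvement(spec)
    print(
        f"{name}: access {result['access_ms']:.1f} ms, "
        f"seek {result['seek_factor']:.0f}X, "
        f"capacity {result['capacity_factor']:,.0f}X"
    )
```

The gap between the two columns is the whole story: capacity has improved by five or six orders of magnitude, seek time by barely two.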

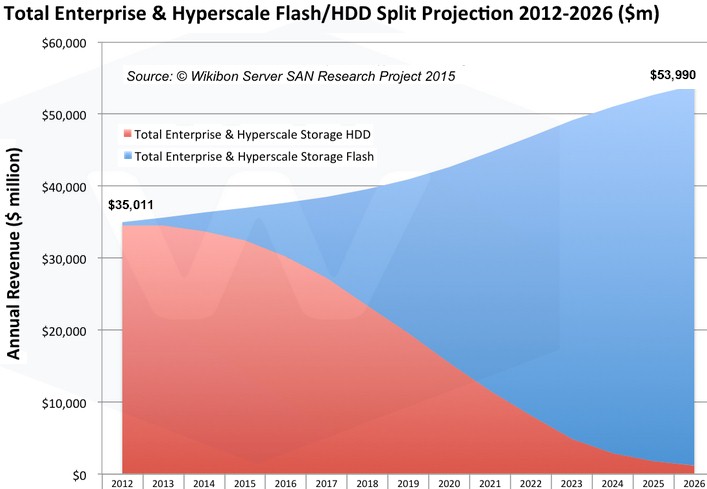

This is why flash has taken the datacenter by storm, and it is also why Wikibon CTO David Floyer thinks that disk drives are not going to be as prevalent in the datacenter of the future as many think. Floyer’s thinking is outlined in a new report, Enterprise Flash vs. HDD Projections 2012-2026, which just came out, and it is unabashedly aggressive in forecasting the demise of the disk drive in the datacenter for tier one (or primary) storage. Even as incumbent disk array makers peddle hybrid machines mixing disk and flash and trot out all-flash arrays to compete with the myriad – and often well-funded – upstarts, most of the vendors say again and again that they expect to see disk drives in the datacenter for the foreseeable future. To his credit, Floyer put a stake in the ground – and into the disk drive.

There is a lot going on underneath that seemingly simple chart above. The blue area shows the spending on flash storage among both enterprises and hyperscalers added together over the forecast period, and it shows it rising from a mere $490 million in 2012 to $2.54 billion last year, to $4.55 billion this year and $7.54 billion in 2016. The growth doesn’t just stop there, though – it accelerates as the cost per TB of flash storage is predicted to come way down over the next decade. At the array level, flash cost something on the order of $20,000 per TB three years ago, according to Floyer’s model, and will drop to $4,320 per TB this year. The cost of disk capacity in all-disk or hybrid arrays is still coming down, oddly enough, dropping from $2,268 per TB in 2012 to $1,070 per TB this year. So, flash is still around 4X the cost of disk, give or take. But that is only on traditional arrays. When you weave enterprise server SANs and hyperscale server SANs into the model – inexpensive and often clustered servers with beefed up storage capability – prices really come down. An all-flash server SAN costs about $672 per TB this year, says Floyer, and an all-disk server SAN will run a piddling $87 per TB.
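A quick sketch of the flash premium implied by those prices, in Python. The numbers are the per-TB costs quoted above for this year; the variable and function names are ours and are only for illustration.

```python
# Cost per TB in dollars for "this year", as quoted from Floyer's model.
cost_per_tb = {
    "traditional array": {"flash": 4320, "disk": 1070},
    "server SAN": {"flash": 672, "disk": 87},
}

def flash_premium(prices):
    """How many times more flash costs than disk, per TB."""
    return prices["flash"] / prices["disk"]

for platform, prices in cost_per_tb.items():
    print(f"{platform}: flash costs {flash_premium(prices):.1f}x disk per TB")
```

Note that the premium is wider on server SANs than on arrays, even though both media are far cheaper there in absolute terms.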

Can you say open source software?

By the end of the forecast period in 2026, the cost of flash in either hybrid or all-flash arrays will be about $16 per TB, compared to $26 per TB for disks in all-disk or hybrid arrays. You read that right, disks will be more expensive than flash, and the crossover will come in 2023 in Floyer’s model within hybrid or all-media arrays. Server SANs, whether based on disk or flash, will continue to be much cheaper than arrays with their fancy controllers and architectures, although the gap gets pretty small by the time 2026 rolls around. Disks will be slightly cheaper in Server SANs than flash for a reason that we wish Floyer would explain.
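As a sanity check on that crossover, here is a rough Python sketch that interpolates the array prices between this year and 2026. It assumes a constant percentage price decline each year for both media, which is our simplification and not necessarily how Floyer’s model works, and it assumes the “this year” prices refer to 2015. The function names are hypothetical.

```python
import math

BASE_YEAR = 2015  # assumption: "this year" in the article
END_YEAR = 2026   # end of the forecast period

def decay_rate(start_price, end_price, years):
    """Continuous annual decline rate implied by two price points."""
    return math.log(start_price / end_price) / years

def crossover_year(flash_start, flash_end, disk_start, disk_end):
    """Year in which flash and disk cost the same per TB, assuming
    both prices fall at a constant exponential rate."""
    years = END_YEAR - BASE_YEAR
    flash_rate = decay_rate(flash_start, flash_end, years)
    disk_rate = decay_rate(disk_start, disk_end, years)
    # Solve flash_start * exp(-flash_rate * t) == disk_start * exp(-disk_rate * t)
    t = math.log(flash_start / disk_start) / (flash_rate - disk_rate)
    return BASE_YEAR + t

# Array-level prices per TB quoted in the article: $4,320 -> $16 for flash,
# $1,070 -> $26 for disk.
print(f"Estimated crossover: {crossover_year(4320, 16, 1070, 26):.1f}")
```

Under those assumptions the curves cross during 2023, which is consistent with the crossover year in the Wikibon model.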

When Floyer is saying “flash,” he means NAND flash, not any and all kinds of non-volatile memory in what could be its many forms. NAND flash is certainly the best contender for future tier one storage, as many have pointed out in recent weeks to The Next Platform. It will come down to economics as much as any other factor, as Micron Technology has pointed out. Disk will still have a place, mostly in archival storage, so don’t write off Seagate Technology, Western Digital, and Toshiba just yet. (It is amazing that over 200 disk drive manufacturers in the past sixty years have consolidated down to three. There are only three DRAM memory makers, too.)

What is happening in the enterprise storage market is not just the clash of flash and disk, but traditional storage arrays getting caught in the vise grip between hyperscale server SANs at the high end and enterprise server SANs at the low end. Over the forecast period, Floyer is projecting that traditional enterprise storage arrays (SAN, NAS, and DAS) sold by the biggies in the business will fall from $33.6 billion in 2012 to a mere $3 billion in 2026. Enterprise server SANs will rise on a nice S-curve from a puny $149 million in 2012 to $26.3 billion in 2026. (It would be interesting to see a chart showing capacity curves plotted against revenues.) Hyperscale server SANs, used by hyperscalers and cloud builders who will soon comprise more than 50 percent of server spending and could account for more than that in a decade, will rise from $1.3 billion in revenues in 2012 to $24.7 billion in 2026. That’s a 17.9 percent compound annual decline rate for traditional enterprise storage, and compound annual growth rates of 19.4 percent and 30.7 percent for hyperscale and enterprise server SANs, respectively, over the forecast period.
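For reference, a compound annual rate is just the constant yearly percentage change that turns a starting value into an ending value. Here is a minimal Python sketch of that formula applied to the revenue endpoints quoted above. Counting 14 annual steps from 2012 to 2026 is our assumption, and because the endpoints are rounded and the report may use a different base year or unrounded data, the output will not necessarily reproduce the percentages Wikibon cites.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate; negative values mean decline."""
    return (end_value / start_value) ** (1.0 / years) - 1.0

YEARS = 2026 - 2012  # assumption: 14 annual compounding steps

# Revenue endpoints in billions of dollars, as quoted in the article.
segments = {
    "traditional arrays": (33.6, 3.0),
    "enterprise server SAN": (0.149, 26.3),
    "hyperscale server SAN": (1.3, 24.7),
}

for name, (start, end) in segments.items():
    print(f"{name}: {cagr(start, end, YEARS):+.1%} per year")
```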

Within the hyperscalers, spending on flash and disk will reach parity sometime in late 2018 or early 2019, with disk spending declining steadily to near zero by the end of the forecast period. Enterprise server SAN spending for flash and disks looks very similar, although the curves are taller for both disk and flash spending. With traditional enterprise storage arrays, spending on flash, which is a tiny slice of the revenue pie today, will help buoy vendors of these arrays as disk revenues plummet (due to a combination of capacity and price declines, we presume).

The Wikibon report shows that annual disk capacity in storage arrays of all kinds will rise from a few tens of millions of terabytes in 2012 to peak at a few hundreds of millions of terabytes in 2020, followed by the inevitable capacity decline. Logical flash capacity sold into the datacenters of the world will reach parity with disk in 2018 or so and will hit 10 billion terabytes in 2025 and continue growing from there. This is capacity shipped per year, not the installed base, which will look a bit older than the shipment data (and have quite a bit more capacity, too).

The one thing that this chart does not show is a distribution of flash and disk across different customer segments, which for the sake of simplification we will call the rich and the poor. We have heard from a number of vendors, most recently Hewlett-Packard, that some large and well-off customers are going for all-flash datacenters because of the space constraints they have. In dense urban areas, it is cheaper to go to all-flash than to build a new datacenter. This will certainly not be the case for smaller companies not operating in dense urban areas, which is why others have told us that they expect to see hybrid arrays in the datacenter for many years to come and why this makes technical and economic sense.

The future is undecided until we live it. We shall see.

When we talk about all-flash datacenters, we never meant to imply that all datacenters will be all-flash. That would be silly and when and if it does happen, it will take a long time. But if the cost of flash keeps coming down and flash suppliers can supply enough of the chips – not something anyone can be sure of, but the Wikibon model seems to assume that – there are so many compelling reasons to move to flash that the richest datacenters in the world, which want advantages in terms of performance and storage per floor space, will jump first. The rest of the world will be on slower disk with a splash of flash for a longer time because the economics will work that way, unless they pay for a higher tier of service in the public cloud or build or buy their own all-flash storage.

We also wonder what other non-volatile memory could take a bite out of NAND flash in the coming decade, but even if flash doesn’t eat all of the capacity, as Floyer’s model shows, the fate of disk will probably be the same. There will still be lots of disk capacity sold, but nothing like the kind of money disk arrays used to generate in the past or even today.

“and the crossover will come in 2013 in Floyer’s model within hybrid or all-media arrays”

oy 2023 you mean

Yes indeed. Thanks for that catch. Dyslexics of the world, untie…

Since I still do not completely trust NAND for long term storage, I am hoping NAND will be displaced sufficiently by CrossBar, and similar technologies, for the SLC NAND’s price point to be driven down by competition enough that SLC NAND cells will become more affordable in SSDs. I would much rather have a hybrid SSD/HDD drive consisting of equal parts NAND storage/cache to HDD capacity, and the Hybrid drive having some form of built-in tiered storage management software/firmware with the hybrid drive’s controller able to in the background copy/mirror the NAND portion to the HDD without any OS intervention and without requiring any main system BUS bandwidth. Or even if the HDD was larger than the NAND cache in a 2 to one ratio.

HDDs will still have uses that will make them good for longer term storage, before Tapes become more advantageous for cold/archival storage. As the main tier in the hierarchical storage pecking order, NAND will quickly be supplanted by CrossBar/other NVM technologies while that Good old spinning rust will be pushed into mostly the longer term storage of data, and Flash will take its place for the hot data pool availability on server systems. A nice affordable Hybrid drive with a good amount of NAND/Cache storage for laptops with all the mirroring done in the background, NAND to HDD platter, on the device’s intelligent controller and channels. This would be nice and the OS and paging files on the SLC NAND and some background BUS bandwidth to and from the platters.

That Intel CrossBar looks like it would make a nice addition to a consumer device’s motherboard in a more directly connected to the CPU channel/module to play host to the OS and paging files while the faster regular volatile DRAM hosts the running code and data.