With the growing adoption of custom servers tailored to specific workloads, a wide variety of Xeon processors available from Intel, and the quickening commercial ramp of machinery that is compliant with Open Compute designs, you might think the last thing that Rackspace Hosting, one of the largest cloud providers in the world, would do is strike out on its own and create a new line of servers that will be based on IBM’s Power processors. Homogeneity has ruled in the cloud datacenter, or at least it did until the engineers at Rackspace started looking at the CPU as but one element in a modern system that can be tailored even more tightly to specific applications.

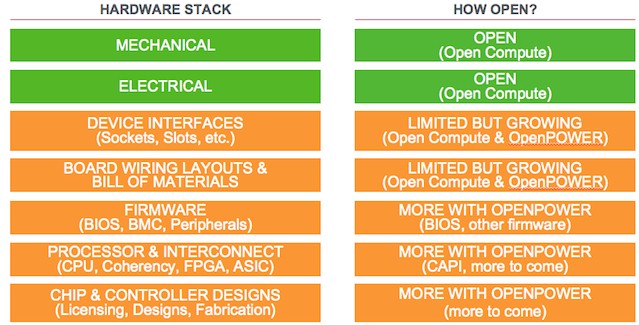

But some things trump homogeneity, and control is one of them. Like many cloud providers, Rackspace wants to have a completely open set of hardware and software, from the processor up through the firmware to the OpenStack cloud controller that orchestrates hypervisors and virtual machines and application placement on its public cloud. OpenStack is, of course, a key product that Rackspace has championed since it founded the cloud controller project with NASA five years ago, and Rackspace was an early proponent of the Open Compute open source hardware effort, too.

Rackspace, which has a fleet of over 112,000 servers supporting its more than 300,000 cloud and hosting customers as 2014 came to a close, joined the OpenPower Foundation and announced that it would be building a server of its own based on IBM’s Power8 chips and deployed in a form factor that is compatible with the Facebook Open Rack that is one of the racks that meets the Open Compute specifications.

The impetus to build an Power-based Open Compute machine came from Rackspace’s experience in launching its OnMetal bare metal cloud offering, which provides high speed networking and rapid provisioning of systems akin to that offered by hypervisor-based clouds but without the hypervisor performance overhead. Aaron Sullivan, senior director and distinguished engineer at Rackspace, has been driving the company’s Open Compute server effort for the past several years and spoke about the future “Barreleye” system at the recent Open Compute Summit and sat down to talk with The Next Platform about the challenges that Rackspace is addressing with its Power-in-Open Compute efforts.

“Getting into OCP and having those direct relationships and getting more control over the design of the server, we were able to dramatically remove our costs,” Sullivan explains, adding that customers get excited with OCP machines if they can cut capital costs by 20 percent to 30 percent. “But let’s just say that is just removing overhead. That’s not really changing the system. That is not an indicator that the core components of the system are that much more efficient. You are integrating in a way to get more out of them. This is an exercise that now needs to be repeated down at The Next Platform level and down at the chip level.”

The problem that Rackspace ran into is adding security and control to a bare metal service like OnMetal. If you don’t have a hypervisor, as the service does not, then this security and operational control has to be embedded in the system firmware, which Rackspace did with its server partners. But when it wanted to then contribute the tweaks it make to that firmware back into the open source community, it was not possible because of licensing and non-disclosure issues. But Google and IBM were partnering to create an open firmware stack for the Power8 processors as part of the OpenPower Foundations, so Rackspace took a look at this non-X86 processor. And as it turns out, it got more than it bargained for in an open server stack.

“The Power8 chip is just really powerful for certain applications, especially virtual machine farms,” says Sullivan. “It has really high performance cores, a hardware thread count that is significant, and a memory footprint that is so large that we can make huge improvements in the cost per virtual machine or we can make huge improvements in the performance of a virtual machine at an existing cost. There is a rubber band we can stretch there. It is a sandbox with a lot of ROI, just on its own, without doing anything else. Then we can start plugging in additional innovations to the system.”

Sullivan says that Samsung, Avago Technology, Mellanox Technologies, PMC, IBM and a few others are working on the Barreleye system design with Rackspace, adding that the resulting machine will be much more efficient for Rackspace applications than anything it has in its datacenters today. “We are taking it seriously and we expect it to be as impactful as our original Open Compute platforms,” Sullivan adds. And because Rackspace will be contributing the designs back to the Open Compute community, others will be able to make use of the Barreleye system, which could have applicability in the enterprise, hyperscale, HPC, and cloud segments.

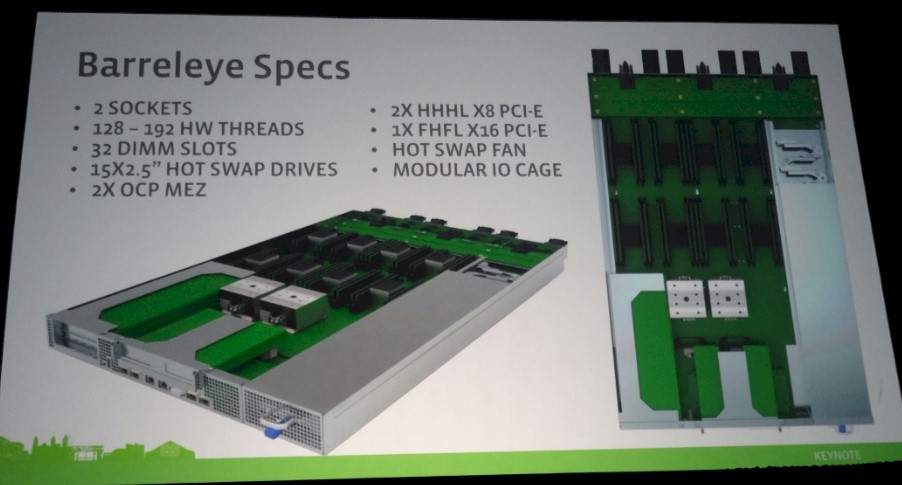

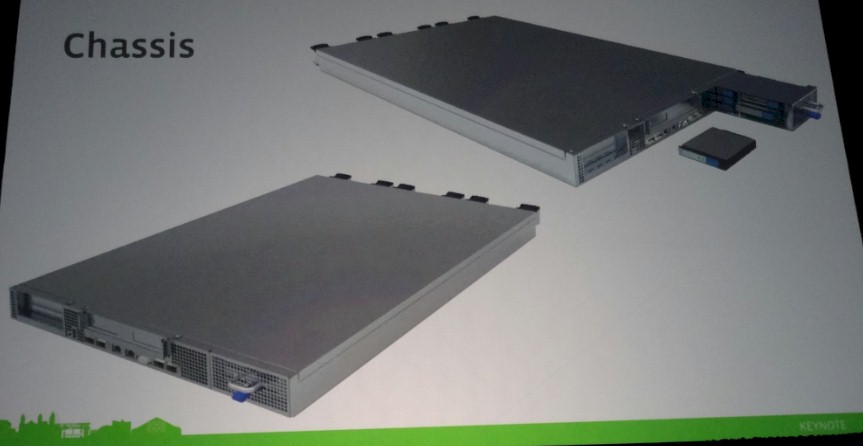

Rackspace is still forging the Barreleye system board but showed off the mechanical design at the recent Open Compute Summit and OpenPower Summits that were held a week apart in San Jose this month. The Barreleye system will have two Power8 processors, like many of the OpenPower designs do at this point even though the Power8 chip can scale up to sixteen sockets. (Rackspace could do a NUMA interconnect lashing multiple rack servers together in a single system image, much as IBM has been doing with its high-end machines since the Power5 generation was launched a decade ago.) The Barreleye will support Power8 chips with either eight or twelve cores, for a total of 64 or 128 threads per socket, or 128 to 192 threads per system. The machine has eight of IBM’s Centaur memory interface chips, which implement L4 cache as well as connectivity from the generic Power8 memory controller out to the memory riser cards in the system, which can employ DDR3 or DDR4 main memory. Using 32 GB DDR3 memory sticks, the system will be able to support 1 TB of main memory, and importantly, each Power8 chip has 288 GB/sec of memory bandwidth, about three times that which you can get from a Xeon processor from Intel used in a two-socket machine.

Rackspace is not customizing Power8 processors for this future Open Compute machine, much as Google, which also has a two-socket Power8 system under development, has told The Next Platform that it has also not licensed the Power8 chip. (Chinese chip maker Suzhou PowerCore has licensed the chip and has kicked out an early version of its CP1 variant of the Power processor, which was unveiled along with a bunch of OpenPower systems at the summit late last week.)

“We are using merchant Power8 SKUs and Centaur memory buffer chips,” Sullivan tells The Next Platform. “With those, motherboard layout is a little more complicated, and we think about mechanical and thermal design a little differently. The Barreleye server is considerably larger than a typical OCP design. The emphasis is less on trying to pack multiple servers per OU, and more on maximizing resources connected to the processors. Don’t think of these as microservers or standard servers, think of them as megaservers. More I/O, more DRAM, more cores/threads, more storage, more network, and so forth. The server is designed larger in proportion to all of the extra processing and memory resources it has.”

One of the things that is making the Barreleye machine a bit beefier than other Open Compute machines, as you can see from the chassis above, is the storage sled, which is on the right side of the chassis. This modular I/O cage has five columns of 2.5-inch drives, which presumably will include a variety of disk and flash storage options. The Barreleye machine has one PCI-Express 3.0 x16 slot and two x8 slots for peripheral expansion, which can include a variety of accelerators and I/O options.

As it turns out, Rackspace has its own business selling its ObjectRocket Redis NoSQL database as a service, and one that it wants to accelerate dramatically.

“When we go changing some of those underlying pieces and tweak the software a little to understand how to use the new memory system or better leverage the coprocessing capabilities, we can take some applications that today get bound up on the cost of DRAM or the cost of flash or the cost of a core and we can change that equation,” Sullivan explains. “Redis is an example of an application that just spends a lot of time moving data between I/O devices and the network. If you think of the threading model of some of these web servers and NoSQL platforms, they spend a ton of time in I/O threads. That is all the overhead of context switching, that is all of the overhead of I/O thread processing, just to get it in and tell it to move it to another I/O thread. If you could just get in on a memory model there and say to the application that it is no longer dealing with flash as block storage but rather as memory, that we put something into the system like CAPI that safely abstracts that, now you can go into your Redis application and tell it to treat all of this data that is normally stored in a file that it is stored in memory. Now you have got Redis out of all of this context switching and all of this waste and those processor cores are spending more of their time doing the really productive work and not just flipping modes just to move data around.”

“You don’t fit as many of these megaservers into a rack, but what you do fit in is two to three times faster. We are trying to create something that allows for enterprise adoption faster. So we are doing work that allows for modularity in the I/O system, so people can change that part without having to spin the whole board.”

This is not just a science project. IBM has already done this. IBM launched a hybrid system last year called the Data Engine for NoSQL, and it uses an FPGA card to implement a connection to CAPI from a FlashSystem V840 all-flash array to tightly link the main memory and flash memory together into a single address space. In IBM’s tests on a 12 TB Redis NoSQL database, IBM found that it would need 24 servers to host this database in memory. But, as it turns out, only about 10 to 15 percent of the data in a Redis database is hot at any given time, so the remaining 85 to 90 percent can be dumped onto flash – so long as there is a fast and coherent link to it as CAPI provides. IBM was able to consolidate those servers from 24 down to 1. The resulting system cost about a third the price of the 24 servers, too. So there was a dramatic reduction in server nodes and costs by tightly coupling the server and the flash array. (Those prices do not include licenses for Redis, but rather just the hardware.)

Sullivan is not making any promises on future products from Rackspace regarding the Redis service or indeed any specific infrastructure or platform clouds. But he does say that in tests on early Power8 machines, system admins loading up Linux software had no idea they were on a Power-based machine, they just saw a box with a lot more threads than they were used to on a two-socket X86 machine.

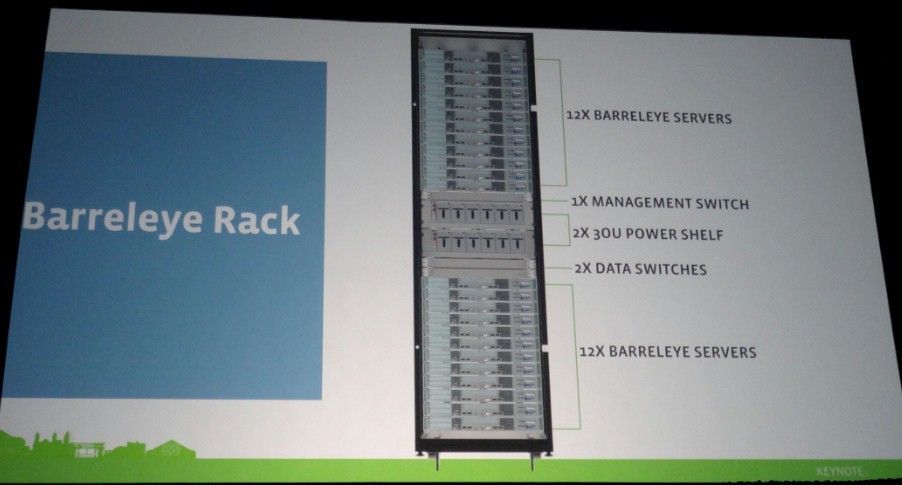

Barreleye will fit in an Open Rack, it will use rack-based switching and use standard Open Rack power shelves. The parts that Rackspace is changing are inside the server and will take advantage of all of the firmware and systems software work that Rackspace has done already with X86-based systems and will also leverage the open firmware that IBM and Google have created for Power8 systems, which weighs in at around 420,000 lines of code.

“You don’t fit as many of these megaservers into a rack, but what you do fit in is two to three times faster,” says Sullivan. “We are trying to create something that allows for enterprise adoption faster. So we are doing work that allows for modularity in the I/O system, so people can change that part without having to spin the whole board. We will bring in hot swap drives and other things that enterprises are used to and that we have done for other Open Compute systems we run at Rackspace.”

There is also an HPC angle to this OpenPower-Open Compute platform, too.

“We link Barreleye to what we learned from our OnMetal service, which was one of the first cases of Rackspace supporting customers who wanted to do HPC,” Sullivan explains. “OnMetal gives them bare metal access, and everybody in HPC wants that, and the way we built OnMetal, it would not be hard to turn on shared memory functions like RDMA or MPI. We won’t build fabrics that are as esoteric as some HPC companies can do, but we can build a fabric that works for a lot of customers. Just like companies adopted cloud an app at a time, I think HPC shops can start to dabble there and rethink their application models. But we didn’t get into this thinking: ‘Man, how do we go get HPC?’ HPC is a benefit in the cart that comes from the rest of the effort.”

Be the first to comment