Paid Feature

We expect software development teams to move fast and at scale these days. But can you be sure your datacenter and network ops teams are able to keep up?

Raw network speed and datacenter capacity are just part of the equation, Jon Lundstrom, director of business development for Nokia’s webscale organization, says. Networks need to be able to support cloud native architectures for cloud native applications which “live not in one place, they live as containers and they are potentially short lived.”

At the same time, DevOps teams have a ton of automation at their disposal. “They want to consume the network, whether that’s private cloud or public cloud, in the same way.” They want the network to be a black box, out of which they can pull high or low level information covering security or connectivity, via an API, and “then have the network just happen.”

Delivering this represents a cultural and technical challenge for the NetOps team running the network, given their focus has traditionally been on stability and minimizing risk, says Lundstrom. “They can absorb technology at a certain pace. But it’s been challenging for them to keep up with the DevOps side of the equation.”

The problem is that traditional network planning practices don’t match this new world, according to Lundstrom. Typically, modeling was revenue driven, with datacenter operators focusing on the “new revenue” that was, theoretically, on the table. Planners would make assumptions based around new services they could offer, calculate the capex costs needed to deliver them, and work out the payback period.

However, this approach glosses over the internal operating costs of running the network over the long term and is increasingly out of kilter with both the new drivers and the possibilities presented by modern architectures in terms of optimization and automation.

In reality, capex is “the smaller portion of the bigger business case, which is really the optimization or cost side of the equation. The cost side business cases are more complicated, because it actually takes an introspective view of where they are spending money today.”

The adoption of newer technologies by Nokia customers has already delivered a fair degree of automation, Lundstrom explains. “But there are still a lot of areas we believe can be optimized. And again, it’s all to try to meet the needs of the DevOps team, who need to move faster and have more scale without sacrificing that reliability.”

Sometimes introspection is a good thing

Nokia’s response has been to develop an online datacenter fabric Business Case Analysis tool that takes a much broader view of the network lifecycle, encompassing both the Day-0 design and Day-1 deployment stages, and the much lengthier, ongoing Day-2+ operating and management phase.

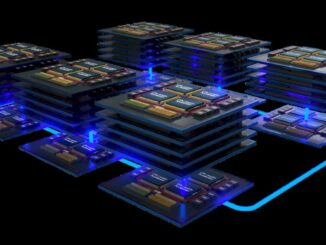

This allows network and datacenter planners to drill down into their current setup and compare it to different future scenarios, including switching to Nokia’s Datacenter Switching Fabric, which encompasses its Fabric Services System, Service Router Linux, and Nokia’s own switching hardware platforms, and which is explicitly geared to allowing NetOps teams to develop their own optimizations.

Lundstrom says it’s important to remember that with any datacenter project, whether it’s a green field effort or reworking existing on-prem or colocation space, “The actual network portion of the investment doesn’t necessarily end up being the big part. It’s either servers or bricks and mortar.”

At the same time, adding in modern hardware does mean a cost for the NetOps team in “learning that new technology, understanding how they can get gains, and then effectively, becoming efficient, and actually, realizing those gains.”

“So what we’ve done is we’ve really just focused on the operational costs, and what effort in total person hours is there going to be between the two different solutions? And that’s really the combination of the automations and the optimization of the environments.”

As for the underlying data and assumptions of the model, there is an element of “tribal knowledge” with the vendor’s architects and product managers alike contributing. The model also draws on the experience of dozens of customers across multiple continents, ranging from enterprises to service providers and tier two webscalers and operators.

It also tapped the experience of the engineers within Nokia’s Bell Labs organization, who “have a vast amount of engineering knowledge as well as experience with customers.”

The model covers dozens of potential job functions that may be affected by the implementation of new technologies, many of which might not be apparent in traditional scenario planning.

“So it’s a little bit of an education as to, ‘Hey, here’s the 50 job functions that you have. Which ones are going to be impacted by this new technology? Some of them are going to be positive, but for example, a new integration is going to cost effort, right?’”

Likewise, Nokia has “made sure that our business case reflects the fact that new integrations are something that wouldn’t necessarily be required if you just use the same technology.”

Naturally, power density and sustainability have been worked into the tool, though realizing benefits around space and power efficiency is predicated on using new hardware, Lundstrom says. “In the tool, we compare the power or a space per gigabit of throughput needs between the existing and the new Nokia solution. And those savings are really driven by the evolution to the next generation of silicon.”

He points to two practical examples of how the Nokia platform and the business case tool can highlight benefits that traditional planning exercises might miss.

Unboxing, optimized

In the design phase, for example, customers at some point will have to get hands on with potential tech purchases in their own labs. But “Lab environments are big and costly, and they are a challenge to manage.” Just setting up a lab with the proposed kit can take months, with each round of tests followed by another bout of time-consuming reconfiguration.

“If you can optimize that test scenario and evaluation of the technology, maybe in a virtualized environment, instead of a physical environment, like we have done with our Digital Sandbox, we believe that that can potentially have significant gains, in the amount of time it takes to go through the design process.” (See Making Datacenter Networking As Consumable As Compute.)

Likewise, at the deployment stage, working out the “effort” of an individual unboxing a switch, adding it to a rack, screwing it in, and powering it up is relatively straightforward.

“But then how do they know what cables and what fibers to plug into what ports? It actually ends up being a very manual process of generating what’s called a cable map,” Lundstrom explains. But this could potentially be partially automated, using a design tool to generate a picture of which cables should connect which devices and ports. This would make life easier for the installer and mean a big reduction in effort and cost.
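To make the cable map idea concrete, here is a minimal Python sketch of how such a map might be generated from a design. It is not Nokia's tool or method. It assumes a simple leaf-spine fabric in which every leaf has one uplink to every spine, and the device names and port labels (`uplink-N`, `port-N`) are hypothetical placeholders chosen for illustration.

```python
from itertools import product


def build_cable_map(leaves, spines):
    """Return one row per cable for a full-mesh leaf-spine fabric.

    Assumption: each leaf connects to every spine exactly once.
    Port labels are hypothetical, not real hardware port names.
    """
    rows = []
    for leaf_idx, spine_idx in product(range(len(leaves)), range(len(spines))):
        rows.append({
            "leaf": leaves[leaf_idx],
            # The Nth uplink on a leaf goes to the Nth spine.
            "leaf_port": f"uplink-{spine_idx + 1}",
            "spine": spines[spine_idx],
            # The Nth port on a spine goes to the Nth leaf.
            "spine_port": f"port-{leaf_idx + 1}",
        })
    return rows


# Hypothetical example fabric: three leaves, two spines.
leaves = ["leaf1", "leaf2", "leaf3"]
spines = ["spine1", "spine2"]
cable_map = build_cable_map(leaves, spines)

for row in cable_map:
    print(f'{row["leaf"]}:{row["leaf_port"]} <-> {row["spine"]}:{row["spine_port"]}')
```

An installer could work from a printed list like this rather than a hand-built spreadsheet. A real design tool would also need to account for cable types, lengths, and rack positions, which this sketch leaves out.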

The overall results can be eye opening. For a “medium” installation of 3,200 servers across two datacenters, with 10GE/25GE access for servers and 40GE/100GE uplinks for spines, and 32 full-time employees, the tool uncovers total “effort” of 104,217 hours over four years across all the job functions and tasks. Adopting Nokia’s SR Linux and Fabric Services System, with new silicon delivering 10GE/25GE/100GE access for servers and 100GE/400GE uplinks, would reduce total effort to 62,728 hours, the tool predicts, a saving of roughly 40 percent. Day-2+ savings would be around 55 percent.
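The headline saving can be checked from the two totals quoted above. The short Python sketch below only reworks the article's own figures. The function name is ours, and nothing here reflects how the Business Case Analysis tool itself calculates its results.

```python
def percent_saving(baseline_hours: float, new_hours: float) -> float:
    """Return the reduction from baseline to new, as a percentage of baseline."""
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    return (baseline_hours - new_hours) / baseline_hours * 100


# Four-year effort totals quoted in the article, in person hours.
baseline = 104_217  # existing setup
proposed = 62_728   # predicted total for the SR Linux / Fabric Services System scenario

hours_saved = baseline - proposed
saving = percent_saving(baseline, proposed)

print(f"Hours saved: {hours_saved:,}")
print(f"Saving: {saving:.1f}%")
```

The difference is 41,489 hours, which rounds to the 40 percent saving the tool reports.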

Nokia said it will update the Business Case Analysis tool as it continues to pull together more data points and feedback from customers. At the same time, anyone using the tool can tailor the “100 and 200 level” underlying assumptions to reflect their own starting point.

“But the 300 level assumptions are the ones that are kind of the core of the system. Those are the ones where you would come to us,” Lundstrom says. “Then we would work on a custom version of the analysis.”

The one thing that is perhaps most difficult to model is the biggest barrier to change – the potential inertia that comes in part from the NetOps world’s traditional aversion to risk and disruptive change.

As Lundstrom notes, “culture is one of those things that takes a long time to change, and that’s nothing that you can buy with technology.”

Sponsored by Nokia.