The Next AI Platform is going virtual for 2020.

This was, of course, not the plan. We had been looking forward to another packed event in San Jose back in March, but as everyone is aware, the situation changed dramatically.

But here’s a bright spot. We discovered that our all-technical, interview-based, no-PowerPoint philosophy lends itself well to a virtual platform. After testing the idea since March, we found that this is actually a great way to deliver the exclusive, in-depth interviews you expect from these events.

With all of that in mind, we invite you to register now for The Next AI Platform: a full day of exclusive interviews and panels, delivered remotely and available to registrants only.

REGISTER FOR THE NEXT AI PLATFORM

The full agenda is below, but here is a quick peek at just a few of the speakers/interviewees/panelists for the day…

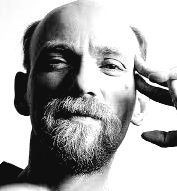

Timothy Prickett Morgan (Host/Interviewer)

Timothy Prickett Morgan is Co-Founder and Co-Editor of The Next Platform and co-host of The Next AI Platform event on March 10, 2020, in San Jose. He brings 25 years of experience as a publisher, IT industry analyst, editor, and journalist for some of the world’s most widely read high-tech and business publications, including The Register, BusinessWeek, Midrange Computing, IT Jungle, Unigram, The Four Hundred, ComputerWire, Computer Business Review, Computer System News and IBM Systems User. Most recently, he was the Editor in Chief of EnterpriseTech.

Nicole Hemsoth (Host/Interviewer)

Nicole Hemsoth is Co-Founder and Co-Editor of The Next Platform and co-host of The Next AI Platform event. In addition to her role analyzing the AI and semiconductor space, she brings insight from the world of high performance computing, having most recently covered supercomputing hardware and software as Editor in Chief of the long-standing supercomputing magazine HPCwire. She was founding editor and conceptual creator of the data-intensive computing magazine Datanami, as well as the conceptual creator and founding Senior Editor for the large-scale, infrastructure-focused EnterpriseTech.

Karl Freund (Guest Host/Interviewer)

Karl Freund is Moor Insights & Strategy’s lead analyst for HPC and deep learning. His recent experience as the VP of Marketing at AMD and Calxeda, as well as his previous positions at Cray and IBM, position him as a leading expert in these rapidly evolving industries. Karl works with investment and technology customers to help them understand the emerging deep learning opportunity in datacenters, from competitive landscape to ecosystem to strategy. Freund is also a Forbes contributing author.

David Kanter (Guest Host/Interviewer)

David Kanter is Principal Analyst and Editor-in-Chief at Real World Tech, which focuses on microprocessors, servers, graphics, computers, and semiconductors. He is also a consultant specializing in intellectual property evaluation/development and technical/competitive analysis. Mr. Kanter has been quoted in the New York Times, CNN, Reuters, IEEE Spectrum, and many more over the last 10 years. Mr. Kanter was a Founder of Strandera, a startup commercializing advanced multi-core hardware and software technologies. Prior to Strandera, he was an early employee at Aster Data Systems (acquired by Teradata), a leader in data warehousing and analytic databases. He also was an Economic Analyst at the Huron Consulting Group.

Schedule for Select Virtual Sessions, Guests Include:

Ramine Roane – Keynote Interview

“Why Reconfigurability Will Be Key for Inference at Scale”

Ramine Roane is VP of AI and Software at Xilinx, where he focuses on AI and machine learning acceleration, IP libraries and the ecosystem from datacenter to the edge. His long career includes engineering roles at Abound Logic, Mentor Graphics, and Synopsys, among others. His focus at the event will be on the roles FPGAs will play in both datacenter and edge inference, with an emphasis on the flexibility of a reconfigurable architecture over a static ASIC.

Chandra Khatri

“Building Vast, Scalable Conversational AI Platforms: Pain Points in Software, Hardware”

Chandra Khatri is a Senior AI Research Scientist at Uber AI, driving Conversational and Multi-modal products and research at Uber. He has wide experience building scalable AI systems and taking state-of-the-art research to production. Prior to Uber, he was the Lead AI Scientist at Alexa and drove the Science for the Alexa Prize Competition, a $3.5 million university competition for advancing the state of Conversational AI. Some of his recent work involves Multi-modal and Embodied Understanding, Common sense and Semantic Understanding, Natural Language and Speech Processing, Open-domain Dialog Systems, and Deep Learning.

Prior to Alexa, Chandra was a Research Scientist at eBay, where he led various Deep Learning and NLP initiatives such as Automatic Text Summarization and Automatic Content Generation within the eCommerce domain, which have led to significant gains for eBay. He holds degrees in Machine Learning and Computational Science & Engineering from Georgia Tech and BITS Pilani.

Bill Dally

“Architectural Considerations for Next Generation Deep Learning”

Bill Dally joined NVIDIA in January 2009 as chief scientist, after spending 12 years at Stanford University, where he was chairman of the computer science department. Dally and his Stanford team developed the system architecture, network architecture, signaling, routing and synchronization technology that is found in most large parallel computers today. Dally was previously at the Massachusetts Institute of Technology from 1986 to 1997, where he and his team built the J-Machine and the M-Machine, experimental parallel computer systems that pioneered the separation of mechanism from programming models and demonstrated very low overhead synchronization and communication mechanisms. From 1983 to 1986, he was at the California Institute of Technology (Caltech), where he designed the MOSSIM Simulation Engine and the Torus Routing chip, which pioneered “wormhole” routing and virtual-channel flow control.

Kanu Gulati, Khosla Ventures

Venture Capital/Market Perspectives

Kanu Gulati is a Principal at Khosla Ventures, focused on investments in data and ML-enabled enterprise applications. Kanu has over 10 years of operating experience as an engineer, scientist, and strategist. She developed advanced parallel CAD solutions and owned Intel’s multicore CAD algorithms research roadmap. Kanu has led early-stage investments in high performance computing (HPC), distributed systems and ML-enabled systems at Intel Capital and Zetta Venture Partners. Kanu was Employee #2 at MapD (hardware-accelerated data visualization) and has also held engineering roles at Nascentric (fast-circuit simulation tool, acquired by Cadence) and Atrenta (predictive analytics for design verification and optimization, acquired by Synopsys), among other startups.

Vijay Reddy, Intel Capital

Venture Capital/Market Perspectives

Vijay Reddy leads investments in Artificial Intelligence infrastructure, as well as applications of AI in several domains. Vijay is a board observer and/or has responsibility for Matroid, SambaNova, AEYE, DataRobot, Mesmer, Zumigo, and Paperspace, among others. Previously, Vijay sourced and led the Nervana engagement that ultimately led to the acquisition to form the AI products group. Prior to joining Intel Capital, Vijay held leadership business development and product management positions in communications, software and wireless domains. He began his career as a researcher and an entrepreneur in wireless and software engineering. Vijay is a Kauffman Fellow, received his MBA from Chicago Booth and has a BS & MS in Electrical and Computer Engineering.

Michael Stewart, Applied Ventures

Venture Capital/Market Perspectives

Michael Stewart joined Applied Ventures in 2015 after working for more than 12 years in advanced technology development at Applied Materials and Intel. Michael’s most recent investment was with portfolio company Electroninks where he serves as a board observer. Prior to joining Applied Ventures, Michael Stewart was co-founder of JUSE LLC, a consumer electronics focused startup, and the inventor of the low cost CRAFT Cell for silicon photovoltaics. He has developed high volume manufacturing products for crystalline Si solar and semiconductor device fabrication. He is an expert in silicon materials science, surface chemistry, and post-CMOS electronics, as well as chemicals and materials for electronics and biotechnology applications. Michael Stewart holds a Ph.D. in Chemistry from Purdue University and an MBA from the University of California at Berkeley (Haas School of Business), and is an inventor on over 40 US and world patent applications.

Jason Gauci

“Refining Machine Reasoning and the Hardware/Systems Impact”

Jason Gauci is currently a software engineering lead in Facebook AI and developer of a scalable reinforcement learning platform called ReAgent, which is used internally to improve Facebook products and services. He was previously a software engineer at Apple, Google, and Lockheed Martin. The focus of his segment will be on the emerging area of machine reasoning and the impact of these workloads over more traditional reinforcement learning approaches on hardware efficiency and overall performance.

Andy Hock

“Going Big on Inference Architecture: Chip Deep Dive/Use Cases”

Andy Hock is Director of Product at Cerebras Systems. Andy was the Senior Director of Advanced Technologies at Skybox Imaging, makers of high-resolution satellites, when the company was purchased by Google in 2014 for $500M. After the acquisition, he continued on as Product Manager at Google before joining the team at Cerebras. Prior to Skybox, Andy was the Senior Program Manager, business development lead and a Senior Scientist for Arete Associates. He holds a PhD in Geophysics and Space Physics from UCLA.

Rodrigo Liang

“Scalability, Efficiency, and Architectural Balance: A Chat with SambaNova”

Rodrigo Liang is the CEO and co-founder of SambaNova Systems. Prior to SambaNova Systems, Rodrigo was senior vice-president at Oracle Corporation where he was responsible for SPARC Processor and ASIC development. During his tenure at Oracle, he led one of the industry’s largest engineering organizations in developing high-performance microprocessors and releasing 12 major SPARC processors and ASICs for enterprise servers over the past 15 years. SPARC processor performance achieved numerous world records, and it continues to be a performance leader for enterprise applications.

Before joining Oracle via the Sun acquisition in 2010, Rodrigo was vice-president at Sun Microsystems where he worked on the development of the Niagara line of multi-core processors. Rodrigo holds master’s and bachelor’s degrees in electrical engineering from Stanford University.

Elias Fallon

“Using AI to Design AI Chips: EDA Next Area for Deep Learning Growth”

Elias is currently Engineering Group Director at Cadence Design Systems, a leading Electronic Design Automation company. He has been involved in EDA for more than 20 years from the founding of Neolinear, Inc, which was acquired by Cadence in 2004. Elias is currently co-Primary Investigator on the MAGESTIC project, funded by DARPA to investigate the application of Machine Learning to EDA for Package/PCB and Analog IC. Elias also leads an innovation incubation team within the Custom IC R&D group as well as other traditional EDA product teams. Beyond his work developing electronic design automation tools, he has led software quality improvement initiatives within Cadence, partnering with the Carnegie Mellon Software Engineering Institute. Elias graduated from Carnegie Mellon University with an M.S. and B.S. in Electrical and Computer Engineering.

Raymond Nijssen

“High Performance, Low Power: The FPGA Way to Consider Datacenter Inference”

Mr. Nijssen has over 20 years of experience in the FPGA and EDA industries in various technical and management positions. Mr. Nijssen joined Achronix as Chief Software Architect to manage the software development group, define the foundations and algorithms of the software system, and architect key aspects of the company’s FPGA architectures. In his current role, he is responsible for the productization of the company’s current products and R&D for new technologies for future products. Prior to Achronix, Mr. Nijssen was at Tabula, where he was responsible for placement and timing analysis of a time-multiplexed FPGA technology. Prior to Tabula, he was one of the first engineers at Magma Design Automation, and held multiple leadership positions in charge of routing and placement, data models and customer deployment of Magma’s Blast Plan Pro hierarchical virtual prototyping and floorplanning products for very large ASIC designs. Mr. Nijssen received his MSEE degree from Eindhoven University of Technology in The Netherlands, and then continued in its postgraduate program studying EDA for VLSI. He holds several patents related to P&R and asynchronous circuit technologies.

Krishna Rangasayee

“Architectural Differentiation from the Ground Up”

Krishna Rangasayee is founder and CEO of SiMa.ai. He is also a member of the board of directors of Lattice Semiconductor.

Previously, Krishna was the COO of Groq. He was with Xilinx for 18 years, where he held multiple senior leadership roles including senior vice president and GM, responsible for driving the strategy, growth, and execution across all markets. During this timeframe, Krishna grew the business to $2.5B in revenue at 70 percent gross margin. As the executive vice president of Global Sales, he created the foundation for 10+ quarters of sustained sequential growth and market share expansion.

Prior to Xilinx, he held various engineering and marketing roles at Altera Corporation and Cypress Semiconductor. He holds 25+ international patents.

Peter Mattson

“Performance Benchmarking and Device Evaluation”

Peter Mattson is a staff engineer at Google Brain, where he originated and coordinates the multi-organization MLPerf benchmarking effort. Previously, he led the Programming Systems and Applications Group at NVIDIA Research, was VP of software infrastructure for Stream Processors Inc (SPI), and was a managing engineer at Reservoir Labs. He has authored more than a dozen technical papers as well as four patents. His research focuses on accelerating and understanding the behavior of machine learning systems by applying novel benchmarks and analysis tools. Peter holds a PhD and MS from Stanford University and a BS from the University of Washington.

Debo Dutta

“AI Hardware Evaluation from a User Standpoint”

Debo Dutta is a Distinguished Engineer at Cisco, where he leads a technology group at the intersection of algorithms, systems and machine learning. During his tenure at Cisco, he has built an AI team to create consistent AI in enterprise and a massive-scale data ingestion product called Zeus from scratch. In addition to his role at Cisco, he has also been a visitor at the Department of Management Sciences and Engineering, Stanford. He is one of the founding members of the MLPerf benchmarking effort.
