One of the biggest storage decisions customers face today is how to manage their data: as cloud-like objects or as traditional files.

For some, that decision inevitably leads to compromises, since the same data can be employed differently by different users or even by the same user at different times. Objects offer the simplicity and scalability of a global namespace, along with the cost-effectiveness inherent in cloud-based storage, while files provide high-performance access, along with compatibility with most legacy applications. These two access approaches have tended to split storage vendors and users into two camps.

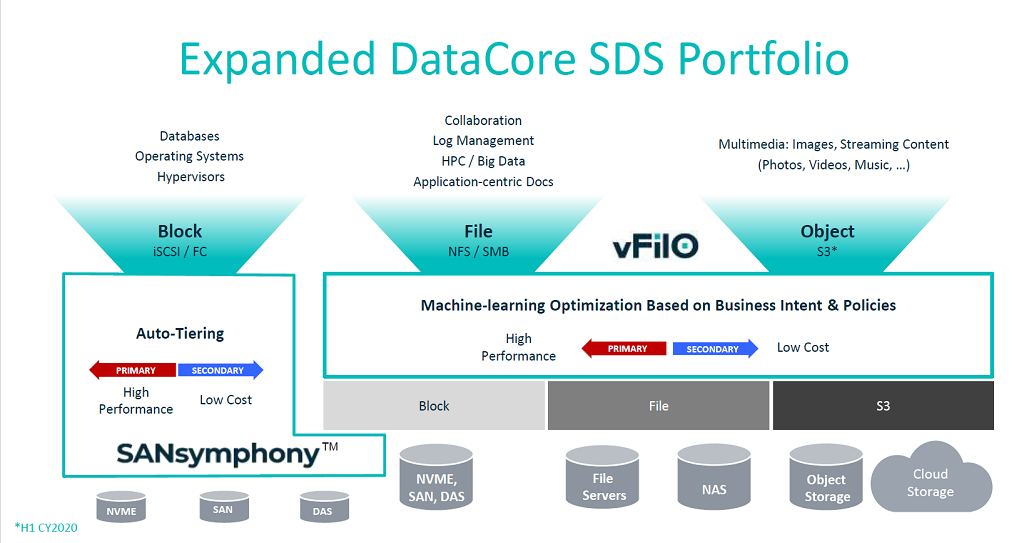

But a new offering from DataCore Software is designed to unify the file-object duality, giving users the ability to view their data from either perspective. The product, known as vFiIO, uses software-defined storage technology to enable data to be stored, organized and managed not only independently of the underlying hardware, but also independently of the access method. It can be used in conjunction with the company’s SANsymphony product, which provides the same kind of storage virtualization that vFiIO does, but at a block level.

The generic use case is for organizations that need high-performance file access for on-premises workloads, plus the ability to migrate that data to cheaper cloud storage as it ages, where it can become fodder for less I/O-intensive applications. For files, vFiIO supports NFS and SMB, while for objects, it uses the S3 protocol.

An illustration of this dual-use accessibility can be found with medical imaging data. In this case, a hospital or clinic would typically use on-premises analytics or machine learning on file-based imagery in order to make timely healthcare decisions. Later, those images could be moved to cloud object storage, where the data would be globally available across multiple medical institutions for less time-sensitive applications or even for archiving.

In other cases, where enterprises have multiple datacenters filled with legacy files of unstructured data that would best be stored as objects in a global namespace, the software can act as a transition platform to slowly migrate that data to the cloud. While such a process could take months or even years, vFiIO would enable the data to be accessible throughout the transition.

Storing the metadata and data separately is key to the ambidextrous nature of this file-object duality. The model involves storing the metadata on fast local media (NVMe or other flash-based storage), while the actual data is stored locally or remotely or both. As with most SDS offerings, vFiIO is designed to scale, either across physical clusters or across virtual machines.
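As a rough illustration of why separating metadata from data makes the dual view possible, here is a toy sketch. It is not DataCore's implementation: the class name, method names, and location labels (`local-nvme`, `cloud-object`) are all hypothetical, and real storage is replaced by an in-memory dictionary. The point is only that a single metadata catalog can answer both a file-style lookup and an object-style lookup, and that the bytes can move without either view changing.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    """Metadata record; in the article's model this would sit on fast local media."""
    size: int
    location: str  # where the bytes currently live (hypothetical labels)


class UnifiedNamespace:
    """Toy catalog: one metadata record per item, reachable by file path or object key."""

    def __init__(self):
        self._catalog = {}  # canonical path -> Entry
        self._blobs = {}    # (location, canonical path) -> bytes, standing in for real storage

    def write_file(self, path: str, data: bytes, location: str = "local-nvme") -> None:
        key = path.strip("/")
        self._catalog[key] = Entry(size=len(data), location=location)
        self._blobs[(location, key)] = data

    def read_file(self, path: str) -> bytes:
        # File view: look up the metadata first, then fetch from wherever it points.
        key = path.strip("/")
        entry = self._catalog[key]
        return self._blobs[(entry.location, key)]

    def get_object(self, bucket: str, key: str) -> bytes:
        # Object view: bucket + key maps onto the same canonical path.
        return self.read_file(f"/{bucket}/{key}")

    def move(self, path: str, new_location: str) -> None:
        # Relocate the bytes and update only the metadata pointer.
        key = path.strip("/")
        entry = self._catalog[key]
        data = self._blobs.pop((entry.location, key))
        self._blobs[(new_location, key)] = data
        entry.location = new_location
```

In this sketch, writing `/scans/patient42.dcm` as a file, moving it to `cloud-object`, and then calling `get_object("scans", "patient42.dcm")` returns the same bytes, because both views resolve through the same catalog entry.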

Underlying this is Parallel NFS technology, which is used across all the supported protocols: NFS, SMB, and S3. To further boost performance, the software employs load balancing and parallelization of I/O requests.

On top of all this, vFiIO provides a series of data services including deduplication/compression, encryption, and cloning/replication. Data migration across the various storage tiers or environments is performed automatically, based on user-defined policies applied to telemetry data collected from storage use patterns, which is further infused with machine learning. Policies typically enforce criteria regarding performance, resiliency, aging, location, and storage cost.
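To make the idea of policy-driven placement concrete, the following is a minimal sketch of how a user-defined aging policy might be applied to access telemetry. Everything here is an assumption for illustration: the field names, the thresholds, and the two tier labels are invented, and the sketch uses fixed rules only, with no machine learning component.

```python
from dataclasses import dataclass


@dataclass
class FileStats:
    """Hypothetical telemetry for one file."""
    path: str
    days_since_access: int
    reads_last_30_days: int


@dataclass
class Policy:
    """Hypothetical user-defined aging policy."""
    max_idle_days: int
    min_recent_reads: int


def choose_tier(stats: FileStats, policy: Policy) -> str:
    """Return "primary" for active data, "secondary" for data that has gone cold."""
    is_idle = stats.days_since_access > policy.max_idle_days
    is_rarely_read = stats.reads_last_30_days < policy.min_recent_reads
    return "secondary" if is_idle and is_rarely_read else "primary"


def plan_migrations(files, current_tiers, policy):
    """List (path, from_tier, to_tier) for every file whose tier should change."""
    moves = []
    for stats in files:
        target = choose_tier(stats, policy)
        current = current_tiers[stats.path]
        if current != target:
            moves.append((stats.path, current, target))
    return moves
```

A real policy engine would weigh more criteria (performance, resiliency, location, cost), but the shape is the same: telemetry in, placement decisions out, with no manual intervention per file.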

Even for organizations without plans to migrate their data or take advantage of the file-object unification, vFiIO’s ability to unify storage across multiple sites is useful on its own, especially where automated tiering is desired. In these cases, the software can still optimize data placement across primary and secondary storage.

As we mentioned, the software can also offer block access via DataCore’s existing SANsymphony product, which provides virtualization for block-based storage. In this case, SANsymphony extends vFiIO’s reach to low-level SAN, DAS, and NVMe storage.

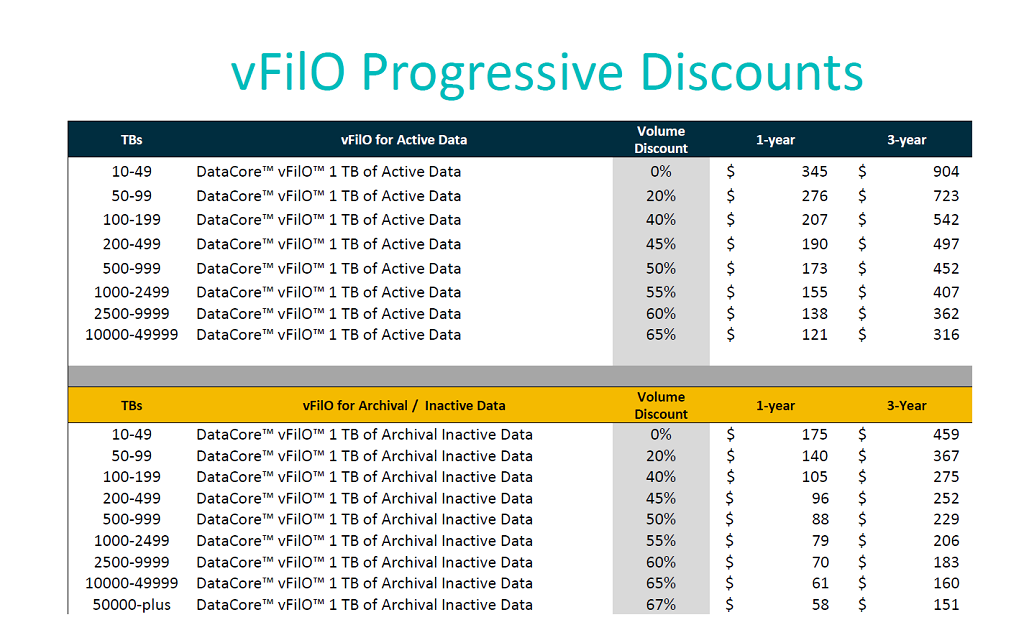

The software is priced on a subscription model based on the number of terabytes vFiIO is managing, with different rates for active data on primary storage and inactive data on secondary object or cloud storage. Pricing is graduated, with discounts applying as more capacity is consumed.

DataCore has a handful of early vFiIO customers, including a financial services firm looking to consolidate storage from 30 of its datacenters under a single unified platform. Meanwhile, a German HPC lab is using the software to consolidate its file namespace and storage hardware. A number of smaller customers are also kicking the tires on the new offering.

Whether there is a critical mass of use cases out there to warrant this unified approach remains to be seen. But for users whose data is sprawled across multiple sites in private and public clouds, this model theoretically offers a lot of flexibility, as well as the ability to streamline their underlying infrastructure. And for users building new systems from the ground up, support for these kinds of heterogeneous environments promises to offer at least some level of future-proofing.

The product became generally available on November 20.

I thought it’s going to be something actually interesting like Ceph, but it’s just another proprietary offering. Seen dozens of these in last few years.

This sort of lock-in may make sense in HPC, where you are granted a few bazillions, build a top-of-the-line cluster, commission it, run a few codes, then watch it become quickly obsolete and disused. That is when you scrap it, together with its attached grand unified storage solution. But nobody who cares about their data is dumb enough for this.