One of the more significant efforts in Europe to address the challenges of the convergence of high performance computing (HPC), high performance data analytics (HPDA) and soon artificial intelligence (AI), and ensure that researchers are equipped and familiar with the latest technology, is happening in France at GENCI (Grand équipement national de calcul intensif).

GENCI is a “civil company” (société civile) under French law. It is 49% owned by the State, represented by the French Ministry of Higher Education, Research and Innovation (MESRI); 20% by the Commissariat à l’Energie Atomique et aux énergies alternatives (CEA); 20% by the Centre National de la Recherche Scientifique (CNRS); 10% by the French universities, represented by the Conférence des présidents d’Université; and 1% by Inria. Created in 2007 to support a long-term HPC strategy with yearly funding of 30M€, GENCI has three main missions:

- To equip three national centers [CINES for French Universities, IDRIS for CNRS and TGCC for CEA] with complementary HPC and storage facilities, an effort that raised capacity from 20 teraflops in 2007 to close to 7 petaflops in 10 years.

- To represent France in the creation of a European HPC ecosystem with the Partnership for Advanced Computing in Europe (PRACE) research infrastructure, formed to pool HPC resources and services at the European scale in order to compete with countries like the USA, Japan and China, and to enable researchers to run large-scale projects that require supercomputing capacity which may not be available in their own country.

- To promote and disseminate the use of numerical simulation and HPC toward scientific and industrial communities, with a specific focus on small and medium-sized businesses (SMBs).

TGCC hosts the Curie HPC system, a two-petaflops Bull system based on the Intel Xeon processor, at Bruyères-le-Châtel near Paris. GENCI’s Curie system is named after Marie Skłodowska Curie, a physicist and chemist who conducted pioneering research on radioactivity. She was the first woman to win a Nobel Prize, the first person and only woman to win twice, and the only person to win a Nobel Prize in two different sciences.

According to Stephane Requena, GENCI CTO, “GENCI was created in 2007, when the HPC capacity of France for civil research was close to only 20 teraflops, to bring France back into the HPC race. At that time, scientists were obliged to compute in the U.S. or Japan, or in some cases had papers refused because they were not at the state of the art, raising issues in terms of scientific competitiveness.”

Requena notes, “As GENCI is celebrating its 10-year anniversary this year, HPC is now being used across all the scientific and industrial fields, from fundamental research (such as particle physics, astrophysics, fusion, chemistry) to applied research including combustion, climate/numerical weather forecasting, innovative materials, fission and fusion monitoring, new energy sources, personalized medicine, and other areas. It is also starting to come into use in social sciences and humanities (like genealogy, history, archeology, architecture, psychology, analysis of individual and group behavior, etc.). HPC is also being used for supporting public decision making in case of natural risks, biological/industrial risks or cyberterrorism.”

Without the use of supercomputers some projects would not be possible, including the Dark Energy Universe Simulation (DEUS) project in the area of cosmology or an industry project by the Renault automotive company. These projects show that GENCI can address research challenges in both fundamental and applied science.

DEUS Study: Cosmology Research-Dark Energy Universe Simulation

In 2012, GENCI received an award for Best use of HPC in edge HPC application for its contribution using the Curie supercomputer for a worldwide dark matter simulation performed by Jean Michel Alimi (Observatoire de Paris) and his team. At the time, there were only three machines in the world able to run such a huge simulation: Mira at Argonne National Laboratory (USA), the K-Computer at Riken (Japan), and Curie at TGCC (Très Grand Centre de calcul du CEA, France).

The challenge was to use three different initial dark energy distributions and simulate the evolution of the Universe from the Big Bang to the present, since dark energy represents 75 percent of the Universe. The team ran three full simulations in a month using 0.5 trillion particles; they used close to 80,000 cores of Curie as well as all 320 terabytes of distributed memory. The work generated nearly 150 petabytes of raw data. Such a large amount of data was impossible to store, so the team implemented on-the-fly, in-situ post-processing that reduced the data by a factor of about 100, to 2 petabytes of refined data written at a sustained rate of 70 gigabytes per second on the Lustre filesystem.
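The figures in the paragraph above can be cross-checked with a little arithmetic. The following is only a rough sketch: every input is one of the article's rounded numbers, decimal units (1 PB = 1,000,000 GB) are an assumption, and the function names are hypothetical. The per-particle value is simply total memory divided by particle count, not a statement about how the DEUS code actually lays out its data.

```python
# Back-of-the-envelope check of the DEUS figures quoted in the article.
# Inputs are the article's rounded numbers; decimal units are assumed.

GB_PER_PB = 1_000_000      # assumption: decimal petabytes
BYTES_PER_TB = 1e12        # assumption: decimal terabytes


def reduced_size_pb(raw_pb, factor):
    """Data volume left after reducing raw output by `factor`."""
    return raw_pb / factor


def write_time_hours(data_pb, rate_gb_s):
    """Hours needed to write `data_pb` at a sustained `rate_gb_s`."""
    return data_pb * GB_PER_PB / rate_gb_s / 3600


def memory_per_particle_bytes(memory_tb, particles):
    """Average memory available per simulated particle."""
    return memory_tb * BYTES_PER_TB / particles


def memory_per_core_gb(memory_tb, cores):
    """Average memory per core, in gigabytes."""
    return memory_tb * 1000 / cores


# 150 PB reduced 100x gives 1.5 PB, consistent with the rounded "2 petabytes".
print("reduced data (PB):", reduced_size_pb(150, 100))
# Cumulative time spent writing 2 PB at 70 GB/s, across all three runs.
print("write time (h):", round(write_time_hours(2, 70), 1))
print("bytes per particle:", memory_per_particle_bytes(320, 0.5e12))
print("GB per core:", memory_per_core_gb(320, 80_000))
```

Under these assumptions, the 100-fold reduction and the 2 petabytes of refined data agree to within rounding, and writing that volume at the quoted sustained rate amounts to several hours of pure I/O spread over the month of runs.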

According to Requena, “The results, a first-ever, were amazing from a scientific point of view. The team plans to use these results as input data for the scientific model of the EUCLID observational satellite, which aims to map the geometry of the dark Universe for the European Space Agency (ESA) around 2020. The study shows how numerical simulation and HPC can feed large-scale instruments (the reverse loop resulting in an iterative process between observations and theory). It also clearly demonstrates the importance of having a balanced architecture on the Curie HPC system because of the compute performance, the memory footprint and the I/O bandwidth required for such large-scale applications, rather than focusing only on peak performance.”

Fast Multi-Physics Optimization of a Car by Renault SAS

In 2014, PRACE was awarded Best Use of HPC in Automotive for work done by Marc Pariente of Renault SAS, France. The challenge was to optimize the design of upcoming cars while meeting stricter safety rules, using more than 200 parameters on meshes of 20 million elements. The researchers used 42 million core hours on Curie because the work could not be carried out on their own computer systems. Renault indicated that being able to use Curie as part of PRACE saved them more than five years of research and development time. According to Requena, “HPC matters to science but it also matters to business and has big advantages. This example shows how HPC is important to support industrial competitiveness.”

Curie Available to Academia, Industry, SMBs and PRACE Members

Curie has a generalist and balanced architecture and is one of the most sought-after supercomputers among the PRACE projects, with up to 80 percent of the machine’s CPU time available for European research under the PRACE infrastructure. France has been making good use of the PRACE computing resources, accounting for 20 percent of usage by number of scientific projects and allocated resources. It has also been the leading country by number of industrial users (large groups and SMBs) accessing the PRACE resources since April 2012.

In addition to Curie, other current GENCI systems include:

- Ada: an IBM system with 1,328 Intel Xeon processors located at IDRIS at Orsay near Paris;

- Turing: a 1.4 petaflops IBM BlueGene/Q system with 6,144 processors, also located at IDRIS;

- Occigen: located at CINES at Montpellier, Occigen replaces the older CINES Jade supercomputer. It is a Bull cluster with 4,212 Intel Xeon processors and a world-ranked system with a peak performance of 3.5 petaflops.

New Joliot-Curie system

In 2018, GENCI is replacing Curie with a new supercomputer honoring Irène Joliot-Curie and her husband Frédéric Joliot-Curie, two renowned Nobel Prize-winning scientists. Replacing Curie is part of GENCI’s practice of updating HPC systems every five years to provide the best possible and complementary options for researchers.

The system will be a 9 petaflops ATOS/Bull system based on Intel Xeon Scalable processors and Intel Xeon Phi processors. This machine will be used for both PRACE and national needs. Like Curie, Irene (the nickname for the Joliot-Curie system) will have a balanced architecture between compute performance, memory footprint (400 terabytes) and I/O bandwidth (close to 0.5 terabytes per second using a multi-level Lustre parallel file system). The installation of the system has started, with full availability expected in the second quarter of 2018.
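One informal way to read “balanced architecture” is to relate memory and I/O to peak compute. The sketch below does that with the article's figures. It is an illustration under stated assumptions, not GENCI's own methodology: the `System` class and its two ratios are hypothetical, and the Curie row assumes that the 320 terabytes and the 70 gigabytes per second quoted for the DEUS runs describe the whole machine, which the article does not confirm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class System:
    """Headline figures for one machine (decimal units assumed)."""
    name: str
    peak_pflops: float
    memory_tb: float
    io_tb_per_s: float

    def memory_bytes_per_flops(self) -> float:
        """Bytes of memory per flop/s of peak compute."""
        return (self.memory_tb * 1e12) / (self.peak_pflops * 1e15)

    def memory_dump_seconds(self) -> float:
        """Seconds to write the full memory to the file system."""
        return self.memory_tb / self.io_tb_per_s


# Joliot-Curie figures are taken from the article.
irene = System("Joliot-Curie (Irene)", peak_pflops=9, memory_tb=400, io_tb_per_s=0.5)
# Assumption: Curie's memory and I/O are approximated by the DEUS run figures.
curie = System("Curie", peak_pflops=2, memory_tb=320, io_tb_per_s=0.07)

for system in (curie, irene):
    print(
        f"{system.name}: "
        f"{system.memory_bytes_per_flops():.3f} B per flop/s, "
        f"{system.memory_dump_seconds():.0f} s to dump memory"
    )
```

The second ratio is the more telling one here: it estimates how long a full-memory checkpoint would take, which is the kind of constraint the DEUS team ran into on Curie.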

Software Used in HPC Support

The GENCI team uses a variety of Intel software products to optimize code, including Intel® compilers and Intel® VTune™, which helps with profiling system performance and provides information on energy usage. In addition, the GENCI team uses tools such as OpenMP and Intel® MPI for system optimization. GENCI is part of the OpenHPC consortium and tries to use open source tools or tools developed by its users in many fields like climate, astrophysics, fusion, particle physics, chemistry, materials, biology, combustion, and computational fluid dynamics (CFD).

GENCI Performance Results

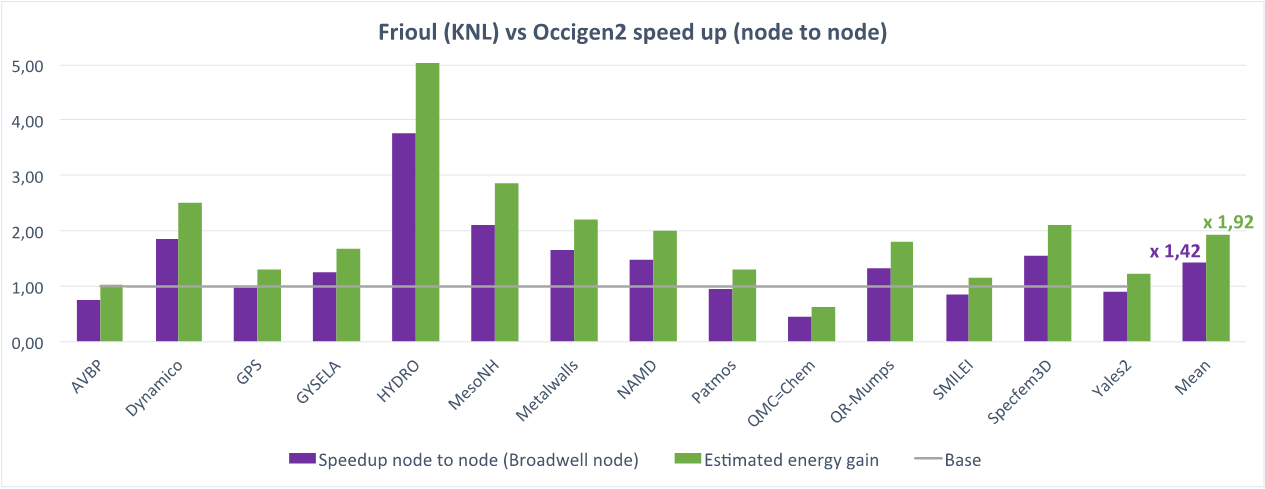

GENCI has set up a national technological watch activity across its partners in which they are preparing users for doing research on next generation architectures. For example, the GENCI team is porting and optimizing real-world HPC applications running on Intel Xeon Phi processors. In 20 real applications (see figure 4), Intel Xeon Phi processors compared favorably in terms of performance and energy savings when compared to Intel Xeon processors (base 1):

GENCI Provides a Full-Range of HPC Support

“Beyond our main missions, our day-to-day actions are to perform technological watch with our partners in order to prepare scientific communities for upcoming HPC/data converged architectures. Then, every 10 to 12 months, we issue renewals or upgrades of national production systems to ensure that a good level of performance and diversity is available. In addition, we allocate our resources to French researchers (academia, industry) through open calls for proposals twice a year, and work with our European colleagues in PRACE and other European initiatives. Finally, at the regional level, we work with 15 regional centers in order to attract new scientific communities and also help small and medium-sized companies to assess and use HPC in order to boost their competitiveness,” states Requena.

Challenges for HPC in Moving to Exascale Computing

Requena believes that there are a growing number of challenges for high performance computing in the future. “In terms of science and industry challenges, we can talk about high-resolution (1 km global) climate/weather simulations for assessing extreme events like hurricanes, high-fidelity combustion for reducing car/plane pollution, development of innovative materials using quantum chemistry, as well as enabling personalized medicine and new energies (renewables, fusion). In terms of technology and usages, the next frontier called Exascale, projected to be reached around the year 2022, will provide HPC systems capable of providing a 50 times better performance on real applications with a contained energy consumption of 20 to 30 megawatts.

“In addition, scientific instruments and numerical simulations are generating huge volumes of data, not possible to post process by humans. All of this will require HPC, HPDA and artificial intelligence to value science and bring new insights in a competitive time.

“One of the challenges here is to make these machines usable—so issues such as programmability, performance and development of skills (HPC, HPDA, and AI) are crucial. This convergence of HPC, HPDA and AI is a real and very challenging stake for us in the future.”
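To put the exascale power envelope Requena mentions in perspective, the short sketch below converts it into an energy-efficiency target. It assumes the conventional definition of exascale as 10^18 floating-point operations per second, which the article itself does not spell out, and the function name is hypothetical.

```python
EXAFLOPS = 1e18  # assumption: exascale taken as 1e18 flop/s


def gflops_per_watt(flops_per_s, power_megawatts):
    """Energy efficiency implied by a performance level and a power budget."""
    watts = power_megawatts * 1e6
    return flops_per_s / watts / 1e9


# Power budgets quoted in the article: 20 to 30 megawatts.
for megawatts in (20, 30):
    efficiency = gflops_per_watt(EXAFLOPS, megawatts)
    print(f"{megawatts} MW -> {efficiency:.1f} GFlops per watt")
```

Under that assumption, the quoted 20 to 30 megawatt envelope corresponds to roughly 33 to 50 gigaflops per watt.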

Linda Barney is the founder and owner of Barney and Associates, a technical/marketing writing, training and web design firm in Beaverton, OR.
