Building high performance systems on bleeding-edge hardware without considering how data actually moves through them is all too common, and woefully so, given that understanding and articulating an application’s requirements can lead to dramatic I/O improvements.

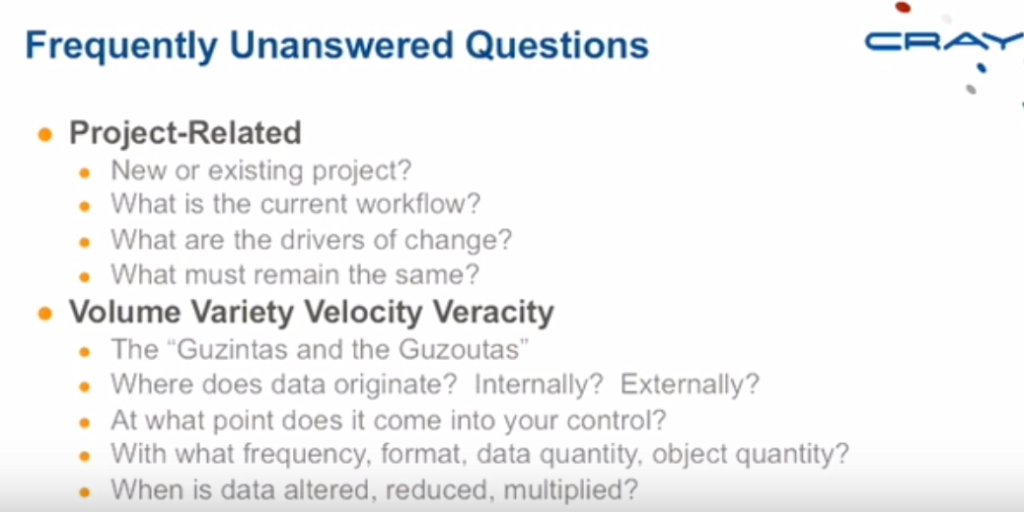

A range of “Frequently Unanswered Questions” about unspecified workflows lies at the root of inefficient storage design, and the problem is widespread, especially in verticals where data isn’t the sole business driver.

One could argue that data is at the heart of any large-scale computing endeavor. But as workflows change, the habit of neither understanding nor communicating in RFPs how those changes affect I/O subsystems means that many shops are not getting all they could out of their storage infrastructure. The expectation that I/O systems “simply work” is a dramatic oversimplification, and it leads some RFPs to overlook an area that could boost overall system performance and efficiency.

With the arrival of SSDs in greater volume as well as new applications and use cases in high performance computing and large-scale analytics, a lack of knowledge about how data moves through a system means that efficiency and performance are both being left on the table, according to Cray’s chief architect for storage, Lance Evans.

Those who can communicate effectively about how their applications and workflows behave often find that far more elements can be rearranged, re-tooled, and tuned for their specific needs. Too often, though, that tuning potential goes unused because workflows are not specified in overall evaluations.

“Users don’t tend to think of this in terms of complexity unless it’s already been a problem,” Evans notes. “But the devil is in the details. Customers will give details about what applications are supposed to be doing, but in some cases, they don’t know what the I/O patterns are. They may think they know how data flows through the system, but if you ask where data comes from, what to do with it, and what it should do, they don’t know. So the challenge is finding a taxonomy for workflows.” The challenge is finding one and, of course, being able to communicate it in an RFP.

“Every workflow is different. Understanding the details about how an application commits and absorbs data is critical—and articulating that in an RFP is even better. We can talk broadly about how data flows into the system, but if we don’t know the details, we might design an I/O system that isn’t appropriate.”
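One way to start pinning down how an application commits and absorbs data is to reduce a trace of its I/O calls to a handful of numbers. The following is only a minimal sketch under stated assumptions, not a method described by Evans or Cray: the `IoOp` record, the `summarize` function, and the sample trace are hypothetical, and the trace is assumed to cover a single file in the order the calls were issued.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class IoOp:
    """One I/O call from a hypothetical trace (illustrative format only)."""
    kind: str    # "read" or "write"
    offset: int  # byte offset within the file
    size: int    # bytes transferred


def summarize(ops: List[IoOp]) -> Dict[str, float]:
    """Reduce a single-file trace to a few figures that describe its I/O pattern."""
    if not ops:
        raise ValueError("trace is empty")
    bytes_read = sum(op.size for op in ops if op.kind == "read")
    bytes_written = sum(op.size for op in ops if op.kind == "write")
    total = bytes_read + bytes_written
    # A call counts as sequential when it starts where the previous call ended.
    sequential = sum(
        1 for prev, cur in zip(ops, ops[1:])
        if cur.offset == prev.offset + prev.size
    )
    transitions = len(ops) - 1
    return {
        "bytes_read": bytes_read,
        "bytes_written": bytes_written,
        "write_fraction": bytes_written / total if total else 0.0,
        "sequential_fraction": sequential / transitions if transitions else 1.0,
        "mean_request_bytes": total / len(ops),
    }


if __name__ == "__main__":
    # Made-up trace: three back-to-back 4 KiB writes, then a read from the start.
    trace = [
        IoOp("write", 0, 4096),
        IoOp("write", 4096, 4096),
        IoOp("write", 8192, 4096),
        IoOp("read", 0, 4096),
    ]
    for name, value in summarize(trace).items():
        print(f"{name}: {value}")
```

Figures like the write fraction, the share of sequential requests, and the typical request size are the kind of detail an RFP could state explicitly, though which metrics matter will depend on the workflow.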

In his experience designing storage systems for Cray’s wide base of customers, Evans says that two groups often understand their specific workflows less well than analytics shops, where the data and how it is used form the core of the business: HPC users with long-running applications that they understand well and repeat often, and scientific computing users who do not want to get into the finer points of optimizing for storage and I/O. In either case, however, the questions that should be asked are approximately the same.

When Cray sets about designing the right I/O subsystem for users, the RFP is usually quite telling. If users have never had to struggle with I/O issues, the subsystem is simply expected to work, and the RFP gives architects little detail for evaluating the best approach. Further, in such cases, the complexity and range of options for meeting an application’s needs vary widely. There is no “one size fits all” approach to storage at scale, and users who do not understand how data actually moves through a system, or who cannot communicate it when it is time to build out a new one, leave performance on the table.

Even for users who do specify their I/O challenges in an RFP because of past issues, there are larger workflow details that can lend insight into how to build a better system. “Users tend to know a lot about their applications, but not about the things that influence and impact the storage subsystem, especially when running multiple applications,” Evans explains. In the HPC space, where single, monolithic applications are becoming the exception, the need for a better understanding of specific workflows is all the more crucial.

Although the plumbing analogy is not a new one for describing how systems operate, on the I/O subsystem front it goes a bit deeper. As with plumbing, all the work is done with a common set of tools and pipes, but to function optimally, the result needs to be tailored to the building or infrastructure it serves. With so much tunability and so many possibilities for unique routing to circumvent problems or optimize delivery, setting out to build pipes without an architectural drawing leads to a sub-optimal result.
