Companies in the IT sector have a kind of inertia that is directly related to their revenue and profit streams, which are a measure of their influence in the IT supply chain and among customers. A big company can make a fairly large mistake and still push on, but a smaller one that has fewer customers – and often a few really important ones – takes a long time to build up inertia and it only takes a small mistake to knock it off track.

It is safe to say that AMD has been more or less vanquished from the datacenter by Intel. At its financial analysts day in New York this week, Lisa Su, who took over as CEO last fall to revamp the company, outlined the markets that AMD would focus on, and significantly it included a renewed assault on datacenters alongside its continuing push in high-end PCs, gaming, and custom products like making the chips for Sony and Microsoft game consoles.

While everyone wants intense competition for compute in the datacenter, AMD will find the going a bit tougher this time around than back in 2003, when Intel left the glass house door wide open and AMD walked right in with its “SledgeHammer” processors. AMD did not have to break any glass to get in. Intel was trying to protect and grow its 64-bit Itanium processors and restricted the Xeons to 32-bit memory addressing. AMD had a decent core design, the HyperTransport interconnect for linking CPUs together, a plan to add multiple cores to a single die, and other innovations such as on-chip memory controllers. But as soon as AMD hit a bug with its “Barcelona” Opterons just as the Great Recession was starting up and Intel was getting ready to smack back hard with its “Nehalem” Xeon designs – which made a Xeon look an awful lot like an Opteron, to put it bluntly – the game got a lot harder for AMD in the datacenter.

Intel is all the stronger in 2015 than it was back in 2008. Its Data Center Group has more than doubled its revenues. Intel has a diverse line of Xeon processors addressing seemingly every workload under the sun, plus custom ones that it peddles to the hyperscalers and to system makers tuned for specific workloads; server-style Atoms for those who want them; and a very interesting future with the “Knights Landing” and “Knights Hill” massively parallel processors. Intel is integrating fabrics on its processors, too, making life hard for Mellanox Technologies, which gets a fair amount of business from its ConnectX adapter line.

To put it bluntly, AMD is going to have to put some pretty dramatic technology into the field and focus it on very specific workloads if it wants to build a new datacenter business. And we only got a hint of what these might be at this week’s briefing with Wall Street.

Su said that AMD has been engineering this recovery in the datacenter with a new core microarchitecture called “Zen” that is due to start shipping in high-end desktops in 2016 and in server processors at some point after that. But she conceded that AMD had more or less walked away from this business, which now comprises less than $300 million a year in revenues, mostly from sales of vintage Opteron processors that have not been updated in years. AMD had 10 percent server CPU market share at one point in the server racket – we remember when it had 25 percent share in the four-socket segment – and has dropped down to 1.5 percent share. Su did not dispute these figures and neither did Forrest Norrod, who formerly ran Dell’s systems business and who is general manager of the company’s Enterprise, Embedded, and Semi-Custom Business Group.

“In datacenters, the reason we have 1.5 percent share today is that we decided not to invest,” Su explained. “If you take a look at our core roadmaps, we decided not to invest in high performance computing. When we want to get back into that market, the truth is we have the technology, we have the IP, we have the people to do that, and we need to set the design point there. When you set the design point, a brand new microarchitecture from scratch, it takes three plus years. We have been working on it and from that standpoint, that’s what gives us confidence. If you look at all of the places where we have been able to compete, it is really about the technology, especially in the datacenter. I have spent an enormous amount of time with customers – and I think Forrest can also talk to the customer aspect of this – the datacenter wants AMD. Customers want us to offer the technology. But what we must do, though, is this: It can’t be just that we are the cheaper solution. That is not the right way to do it. It is to start with technology that is competitive and then ensure that we are wrapping around it things that others won’t do.”

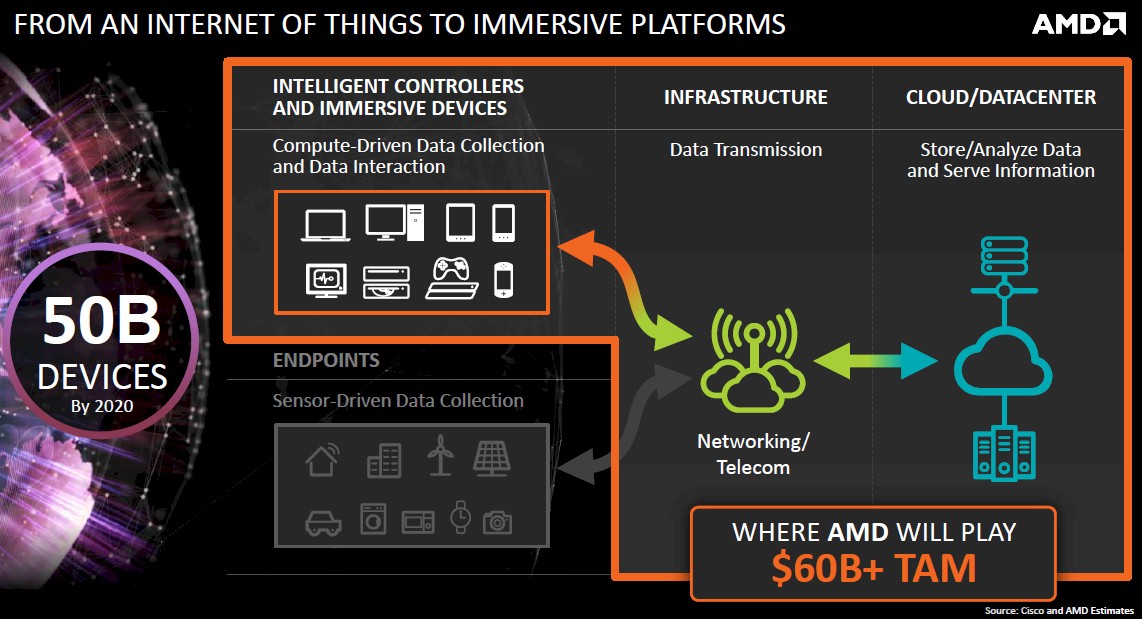

The datacenter space is a very large market, Norrod explained, and he said that AMD had no intention of trying to cover the entire market. In his presentation, Norrod said that AMD reckoned that the market for chips aimed at servers amounted to about $10 billion a year, with another $4 billion or more that could be targeted as networking device makers shift from proprietary ASICs to software running on X86 or ARM processors. AMD reckons there is another $2 billion or more in an adjacent software-defined storage market. Add it all up, and there is a $16 billion target – one that Intel is going to aggressively protect and that IBM with Power and the ARM collective outside of AMD (notably including Cavium Networks, Broadcom, and Applied Micro) are also going to attack.
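As a quick check on that arithmetic, the three segment estimates can be summed in a few lines of Python. The dollar figures are the ones Norrod quoted; the dictionary keys and variable names are ours, and because two of the segments were given as "or more," the total should be read as a floor rather than a precise number.

```python
# Addressable market segments as quoted in Norrod's presentation,
# in billions of US dollars per year. Key names are illustrative only.
segments_billion = {
    "server_chips": 10.0,
    "networking": 4.0,                # quoted as "$4 billion or more"
    "software_defined_storage": 2.0,  # quoted as "$2 billion or more"
}

total_billion = sum(segments_billion.values())

# Fraction of the combined target that each segment represents.
segment_share = {
    name: value / total_billion for name, value in segments_billion.items()
}

print(f"Total target: ${total_billion:.0f} billion")
for name, share in segment_share.items():
    print(f"{name}: {share:.1%}")
```

On these figures, server chips make up well over half of the combined target, which is why the Zen-based Opterons matter more to the plan than the adjacent networking and storage plays.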

“We’re not going to try to cover every space in that market. I think that we are being very thoughtful about where we see opportunity with the parts that we have and the technology that we have,” Norrod said during a question and answer session after the hours of presentations were done. “I will tell you something that I have told people internally. You are absolutely right, we have got 1.5 percent server market share right now. I am telling my teams that’s an asset. When you have 30 or 40 percent share, your strategy has to be centered on broad participation, general purpose, very broad design points. When you have 1.5 percent market share, you can think differently. And that is where AMD has had success in the past, where we thought differently – 64-bit memory addressing and integrated controllers are a good example. Things that Intel doesn’t want to do or things Intel is not doing.”

This is a familiar strategy, and in fact, it is the only one that AMD can have if it wants to get back into the glass house. The trouble is, this is not 2003, it is 2015, and Intel is doing a lot of different things in the datacenter as it expands from its server hegemony out to storage (which it has a pretty decent lock on) and to networking (more work needs to be done here). The datacenter door is not wide open, but as Norrod knows full well, server buyers do want an alternative. And we think they would prefer an X86 alternative a little more than an ARM one for server workloads because 64-bit ARM hardware is not widely available and the software ecosystem is still maturing.

While Dell would never say much about this publicly, a very large portion of the servers made by its Data Center Solutions custom server and storage business was based on Opteron processors from AMD. And it is probably not a coincidence that when The Next Platform visited Google headquarters two weeks ago, three out of four of the vintage motherboards that Urs Hölzle, senior vice president of the Technical Infrastructure team at the search engine giant, brought for show and tell were based on Opteron designs. One was a four-socket beast with sixteen PCI-Express slots that probably ran databases. But as we say, that was history, and today, Google is a member of the OpenPower Foundation and has tested its application stack on Power8 systems and, as Hölzle put it succinctly: “People ask me if we would switch to Power, and the answer is absolutely. Even for a single generation.”

If Google would think of porting its Linux and app stack to Power CPUs for a single generation of systems just because of the advantages they have over Xeons, then the good news is that Google would do the same thing for Opterons, whether they are using X86 or ARM instructions, too. The trick is to have the differentiation.

The Roadmap Back To The Datacenter

“This is probably the single biggest bet that we are making,” Su said in reference to the plan to attack the datacenter again. “We have not been competitive in the past few years. We will be competitive.” Su added that given the low share that AMD has in the datacenter these days, it is “practically a greenfield opportunity” for the company.

The same could be said of a resurgent Power chip coming from the OpenPower Foundation and the switch chip makers like Cavium and Broadcom who want to take a bite out of the server and storage markets.

To get focused on the datacenter again, AMD has done a little house cleaning. First, as The Next Platform reported three weeks ago in going over AMD’s financial results for the first quarter, Su has shut down the SeaMicro microserver business line and taken a $75 million write-off for that. So AMD is now formally out of the server business and no longer competing against its potential component buyers. It is hard to say for sure, but SeaMicro was probably not worth the $334 million that AMD paid for it back in February 2012, although it did make for some exciting headlines and it did give AMD an interconnect story to tell as Intel was snapping up the Ethernet switch chip maker Fulcrum Microsystems, the InfiniBand business from QLogic, and the supercomputer interconnects from Cray.

More recently, Su divulged, the company has decided to mothball its “SkyBridge” project, which would have put future Opteron X86 and Opteron ARM processors in a shared socket. SkyBridge was announced precisely a year ago in conjunction with its “K12” ARM processor design. AMD is a full licensee of the ARMv8 architecture, which allows it to make customized cores. (The current “Seattle” Opteron A1100 is based on ARM Holdings’ own Cortex-A57 core design.) SkyBridge was expected in 2015 in conjunction with a kicker to Seattle using a low-power implementation of the Cortex-A57 core. To be more precise, the SkyBridge socket would host both X86 and ARM chips implemented as system-on-chips and as Accelerated Processing Units – AMDspeak for a CPU with a GPU linked to it. The SkyBridge processors were going to be etched in 20 nanometer processes, which is a pretty big jump from the 28 nanometer processes that AMD’s foundry partners are using today.

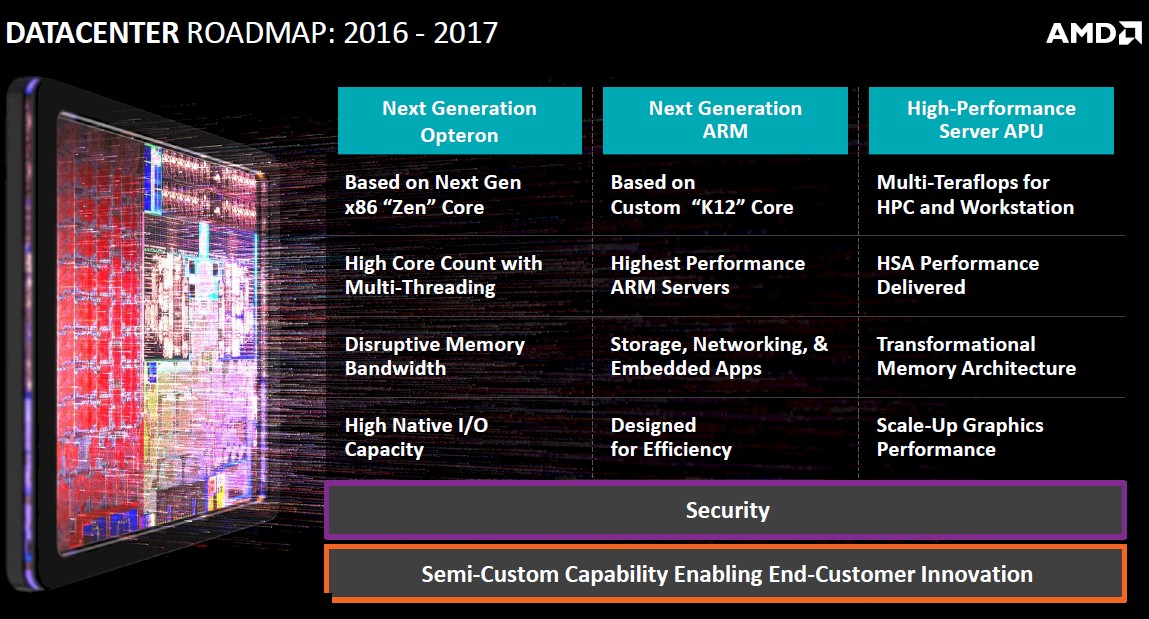

Now, the SkyBridge common socket and the SkyBridge X86 and ARM chips are mothballed. Su said that SkyBridge was not something that customers were asking for – which makes us wonder why the effort was started in the first place. The ARM K12 chip that was expected in 2016 is now being pushed out to 2017. Mark Papermaster, AMD’s CTO, said in his presentation that the K12 chip was up and running in the labs with early samples and that the 2017 launch would be timed with the maturity of the ARM software stack.

Intellectually, the SkyBridge socket made sense because there is very little question that system suppliers would love to have a common socket for X86 and ARM chips. This is no doubt a difficult task – Cray has actually done it, putting its ThreadStorm massively multithreaded processor into an old Opteron socket, and Intel has tried to converge Xeon and Itanium processors to a common socket but gave up on the idea. The problem, we would guess, is that what AMD was doing was making its ARM chip work in an Opteron socket, and what server makers would really like is a socket that was standard across all ARM chips so that any of them could be plugged into it interchangeably. That is not going to happen, either, just like there was never an enterprise blade server standard.

The ARM ramp is taking longer than expected, and now the Seattle A1100 is not expected to appear in systems until the second half of 2015. AMD will be targeting the Seattle chips at web front end servers, IoT gateways, and storage servers, and Norrod says that the software ecosystem will be mature enough, with the key Linux distributions ready, by the end of the year.

At the moment, the momentum in the ARM server chip business has shifted away from AMD and Applied Micro and towards Cavium Networks and Broadcom, whose respective ThunderX and Vulcan chips offer a lot more cores and lots of features that networking experts like these two companies have and that AMD does not. Hopefully, AMD can get K12 out and it is impressive enough that it can get some design wins and market traction.

Looking ahead – and we are not exactly sure how far ahead because AMD did not put out a very detailed server processor roadmap – AMD will be creating Opteron server chips based on the Zen core. What these chips might look like, AMD did not say.

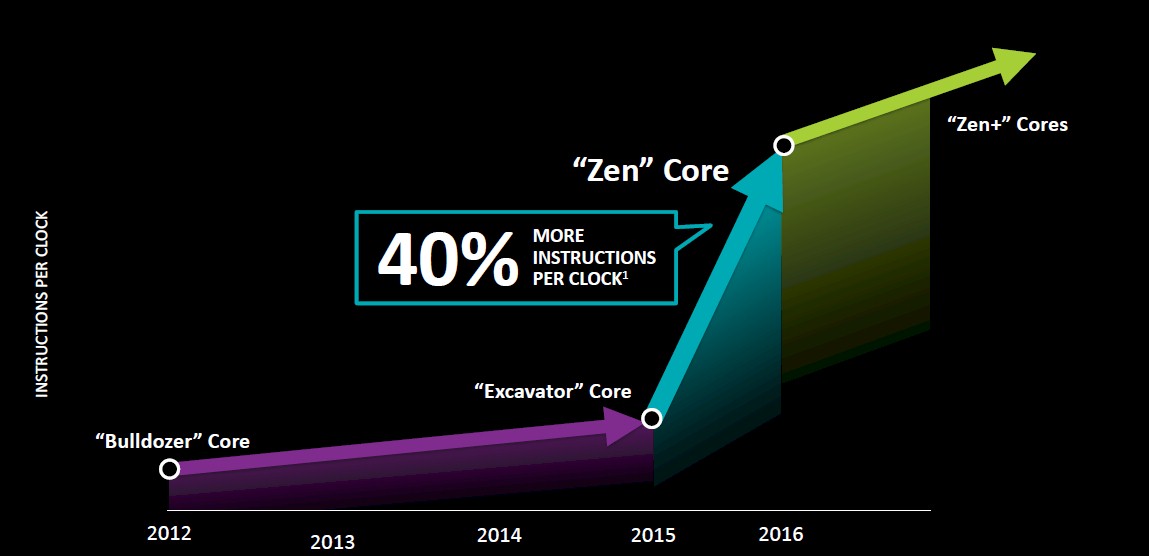

Papermaster said that AMD was focusing a bit more on high performance and a bit less on energy efficiency as a way to contrast the prior Opteron efforts with the Dozer family of cores used in past Opterons. (He quickly added that AMD was not backing off on energy efficiency, but this is physics and you can’t have it both ways.) With chip designer Jim Keller returning to the company last year, the effort with Zen has been to increase the instructions per clock of the future Zen cores, as illustrated below:

To be specific, Papermaster said that the IPC for the future Zen core would be 40 percent higher than the current “Excavator” core used in Opteron APUs, and clearly that chart above is not drawn to scale because if it were the IPC would be something more like 3X. Papermaster said that AMD was already starting work on a Zen+ core design kicker, and this chart suggests that the IPC gains will be more modest, but still better than the gains seen as AMD moved from the Bulldozer cores in 2012 to the Excavator cores used in the Opteron APUs this year.
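To see why the chart cannot be to scale, it helps to recall that single-thread throughput is roughly IPC multiplied by clock frequency. The sketch below uses only the 40 percent figure Papermaster gave; the function name and the assumption of equal clock speeds are ours, since AMD has not disclosed any Zen frequencies.

```python
def relative_performance(ipc_gain: float, clock_ratio: float = 1.0) -> float:
    """Throughput relative to a baseline core, modeled as IPC x clock.

    ipc_gain is fractional (0.40 means 40 percent more instructions per clock).
    clock_ratio is new clock / old clock; 1.0 is our assumption, not AMD data.
    """
    return (1.0 + ipc_gain) * clock_ratio


# The stated 40 percent IPC gain over Excavator, at equal clocks.
zen_vs_excavator = relative_performance(0.40)
print(f"Zen vs. Excavator at equal clocks: {zen_vs_excavator:.2f}x")

# A bar roughly three times as tall would imply a 200 percent IPC gain.
chart_as_drawn = relative_performance(2.00)
print(f"Chart as drawn: {chart_as_drawn:.2f}x")
```

Any real-world gain will also depend on where Zen clocks land relative to Excavator, which is exactly the number this simple model leaves as a free parameter.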

The other big change is that AMD will no longer have two families of cores, the Dozers from the Opteron server chips and the Cats for the Opteron APUs. Going forward, there is just a Zen core and it will be used across a variety of desktop, embedded, and server products.

AMD did not divulge much about the Zen cores, but it is abandoning the chip multithreading design of the Dozer cores (where two single-threaded integer units shared a floating point unit) for true simultaneous multithreading (or SMT) that presents multiple virtual threads in each integer unit in a core. AMD has not said how many threads it will support per Zen core. Intel has stuck with two threads per core with the HyperThreading variant of SMT in its Xeon chips and IBM has gradually ramped its Power chips from two to four to eight threads per core with the SMT in its respective Power6, Power7, and Power8 chips. Eight threads per core is a lot to juggle, and it would be interesting to see AMD push it that far and to allow dynamic allocation of threads as IBM does with Power8.

Papermaster added that the Zen design would have a high core count for top-end parts, and include a new high bandwidth, low latency cache system. The Zen chips will be implemented in a 3D transistor FinFet process. AMD has not said what process it will use for the Zen chips, and it is not clear if GlobalFoundries or Taiwan Semiconductor Manufacturing Corp will be getting the work of etching the Zens. (It is hard to guess which way this one will fall, but AMD could split the job with TSMC getting the APUs and GloFo getting the plain vanilla CPUs.)

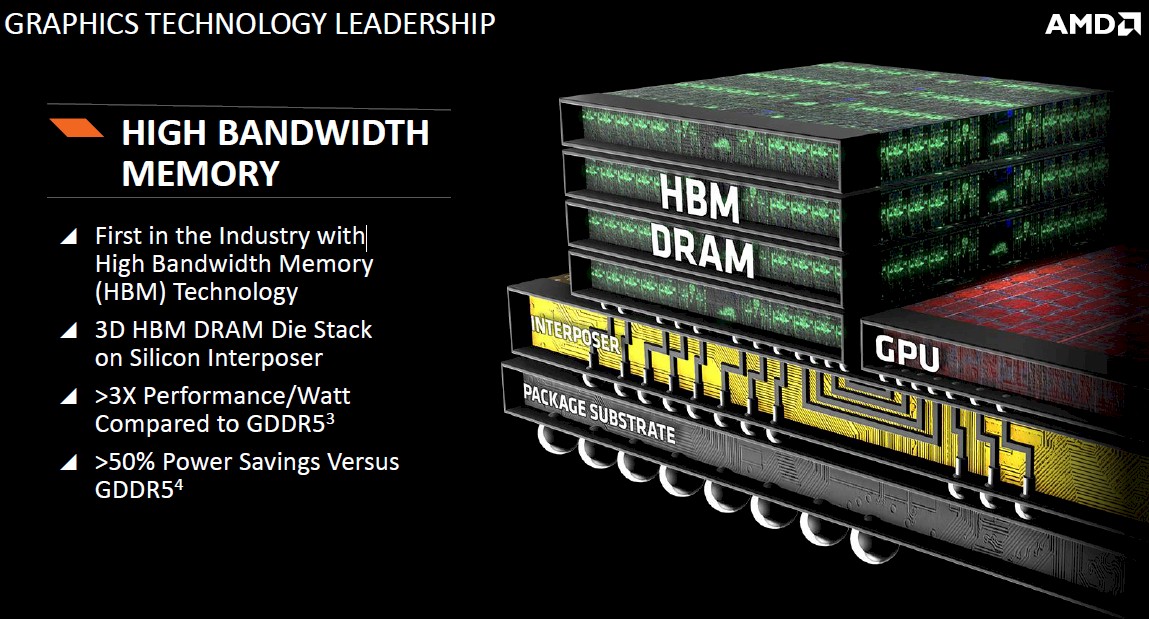

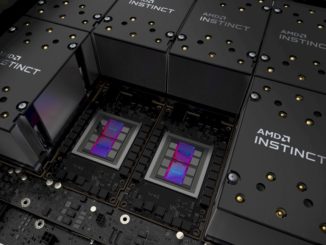

Perhaps the most interesting development at AMD is the high bandwidth memory (HBM) that it is creating for its next generation of graphics processing units, which will put stacks of DRAM memory right next to the GPU and wired directly into the GPU, allowing for a huge increase in memory bandwidth:

This HBM memory, which AMD has been developing with partners for over seven years, will give the GPUs a much lower power profile compared to a bunch of GDDR5 memory. On top of this HBM bandwidth and energy efficiency bump, the GPUs that AMD will be announcing in 2016 for its third generation of “Graphics Core Next” GPU designs will also be using 3D transistor FinFet processes, which Papermaster said would have twice the energy efficiency of the second generation GCN GPUs that AMD is rolling out this year. AMD has its GPU act together a lot more than it has its server CPU act together, for sure. But that GPU game is always a horse race, and both Intel and Nvidia are tough competitors here, too.

Perhaps the most intriguing thing is AMD’s admission that it is working on an APU for workstations and HPC servers that will combine its CPU and GPU units together into a high-performance APU with “multi-teraflops” of aggregate computing power. This is on the roadmap for 2016 through 2017, and not much was said about this.

The chatter about such an APU has been going around for the past several months, and this would be a very interesting part to have in the field today, forget about 2016 or 2017. The rumors suggest that this APU will have 16 Zen cores with 32 threads with 512 KB of L2 cache per core and a 32 MB shared L3 cache; it will also, if the rumors are correct, have a “Greenland” family stream processor and up to 16 GB of HBM with 512 GB/sec of memory bandwidth. Add in four DDR4 memory controllers and 64 lanes of PCI-Express 3.0 I/O and this would be a very interesting bit of iron to throw into the datacenter.
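For a sense of scale, the rumored figures can be rolled up into a few aggregates. This is only a back-of-the-envelope sketch: every input is a rumor from the paragraph above rather than anything AMD has confirmed, the variable names are ours, and the PCI-Express number is the raw PCI-Express 3.0 signaling rate (8 GT/sec per lane with 128b/130b encoding) before any protocol overhead.

```python
# Rumored figures only; none of these are confirmed by AMD.
cores = 16
threads = 32
l2_per_core_kb = 512
pcie_lanes = 64

# Threads per core implied by the rumor (two would match Intel's HyperThreading).
threads_per_core = threads // cores

# Aggregate L2 cache across all cores, in megabytes.
total_l2_mb = cores * l2_per_core_kb / 1024

# PCI-Express 3.0: 8 GT/s per lane, 128b/130b encoding, 8 bits per byte.
pcie3_gbytes_per_lane = 8 * (128 / 130) / 8   # GB/s per lane, per direction
pcie_total_gbytes = pcie_lanes * pcie3_gbytes_per_lane

print(f"Threads per core: {threads_per_core}")
print(f"Total L2 cache: {total_l2_mb:.0f} MB")
print(f"Raw PCIe 3.0 bandwidth: {pcie_total_gbytes:.1f} GB/s per direction")
```

Set against the rumored 512 GB/sec of HBM bandwidth, the I/O lanes are the narrow pipe by a wide margin, which is the whole argument for putting the memory stacks next to the compute.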

Hopefully, this is the kind of thing that AMD is indeed cooking up. And if it is doing so, perhaps yesterday would have been a good time to be a little more explicit about what the plan is and to put a much larger set of stakes in the ground. But AMD has to be careful. Intel is ten times its size and dominates the datacenter, and talking too much a few years ahead of time gives Intel time to react and then act.

What Norrod did do is talk about how the Semi-Custom business was not just a business, but an approach to business, and that many customers who start out buying standard CPU and GPU parts from AMD will eventually want semi-custom parts – sometimes with their own IP and sometimes with that of third parties – added to the devices. We can, for instance, envision AMD partnering with Mellanox to add Ethernet and InfiniBand adapters to Opteron or ARM processors to counter the on-die fabrics Intel is adding to its Xeon and Xeon Phi chips. (If AMD is not doing this, it had better get the lead out.)

“I have been in the server industry for a long time, and I can tell you, it is becoming the car industry,” Norrod explained in his presentation. “Fifteen years ago, you maybe needed a sedan and a minivan to service the needs of 95 percent of the X86 market. The new workloads, the new applications, the new large scale deployments mean it has never been more important to have different points of optimization and different types of systems to create opportunities to differentiate.”

This is certainly true. Now AMD has to deliver the parts – and get them to market as quickly as it can and never take its foot off the gas pedal again.
