There is not really an Ethernet switch market so much as several distinct ones, aimed at customers who need different combinations of bandwidth and latency in their networks and who also upgrade their networks at different paces. The 10 Gb/sec Ethernet generation may be coming to a close in the hyperscale datacenters of the world with 25 Gb/sec server and 100 Gb/sec backbones on the horizon, but the enterprise has by and large still not widely adopted 10 Gb/sec speeds in their racks.

Despite the wide availability of 10 Gb/sec Ethernet switches, enterprises need a little extra push to get moving, and Broadcom, the dominant maker of ASICs for top of rack switches in the datacenter, is using a process shrink from its foundry partner to add lots of new features into its core StrataXGS “Trident” line of network chips that will give its switch-making partners better gear to help companies make the transition to 10 Gb/sec in their servers and racks.

The typical enterprise is operating with somewhere between tens and hundreds of racks of gear, nothing on the scale of a Google, Amazon, Yahoo, or Facebook, which have orders of magnitude more iron. (Think tens of thousands of racks and millions of servers.) These hyperscalers upgrade whole datacenters to new technologies in one fell swoop – for them, an entire datacenter is akin to a couple of racks targeting a specific set of workloads off in one part of the an enterprise datacenter. The network bandwidth scale is different, too. Hyperscalers have billions of users and their distributed applications have a lot of chatter back and forth across racks – and sometimes between datacenters – and they have immense bandwidth needs inside their racks and across their datacenters.

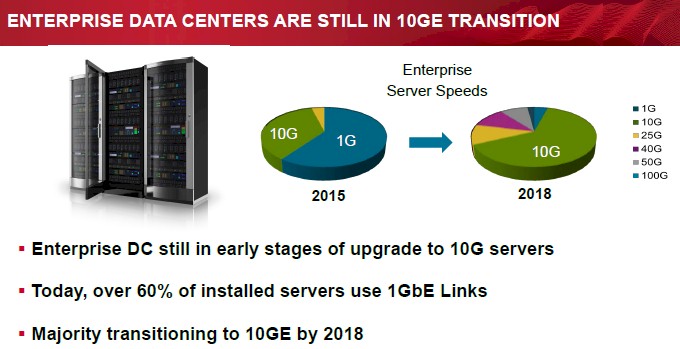

Rochan Sankar, director of product management for Broadcom’s Infrastructure and Networking Group, tells The Next Platform that most of the ports running at the typical large enterprise are running at only 1 Gb/sec speeds. (This is also true of the high performance computing segment, where there are lots of workloads that are not particularly bandwidth sensitive and therefore are still using what seems like ancient technology.) Even though 10 Gb/sec technologies have been around for a decade, only about 35 percent of the servers in the enterprise datacenter have been upgraded to 10 Gb/sec speeds, and more than 60 percent, as you can see in the chart below, are still at 1 Gb/sec speeds in there servers and top of rack switches.

That said, Broadcom and the market research it cites are expecting for a massive rollout of 10 Gb/sec servers and top of rack switches in the next three years, and the expectation is that 1 Gb/sec will represent only a few points share of the server port count in 2018, with 10 Gb/sec accounting for around 63 percent. Interestingly, while the 25 Gb/sec and 50 Gb/sec adapters and top of racks that are being pushed by the hyperscalers because they offer better thermals and economics than current 40 Gb/sec and 100 Gb/sec adapters and switches, will be a significant part of the enterprise server base in three years, according to the forecast above. Switches running at 100 Gb/sec – whether based on ten lanes running at 10 Gb/sec as early aggregation switches did or on four lanes running at 25 Gb/sec as the newer ones do – will still be only a few percent of the ports coming off servers in the enterprise.

“Even though we have been talking about VXLAN for three or four years, it is only now becoming a staple requirement in an enterprise network. And there is a lot of room to capitalize on that.”

While enterprises are not exactly pushing the bandwidth barriers, they are increasingly running network overlays so their virtualized workloads can flit around between hypervisors running on physical servers distributed around the datacenter. This is its own kind of so-called east-west traffic. Any new 10 Gb/sec switch has to do a better job handling these overlays than current ones do. This is precisely what Broadcom has done with its Trident-II+ ASIC, an upgrade to the workhorse Trident-II chip that is found in the majority of datacenter switches these days. (Depending on who you ask, the Trident family accounts for about 65 percent of ASICs in the datacenter.)

“It is a VXLAN world, and that is becoming abundantly clear,” explains Sankar, referring to the Layer 2 network overlay and tunneling method championed by server virtualization juggernaut VMware. “Anecdotally, this year is when the confluence of the software, the hardware to support it well, and the control plane applications are starting to develop and come together. Even though we have been talking about VXLAN for three or four years, it is only now becoming a staple requirement in an enterprise network. And there is a lot of room to capitalize on that.”

Enterprises are also dealing with some other issues that do not affect hyperscale, HPC, or cloud shops. One of them is the need to interoperate datacenter networks with campus networks that face into the enterprise, linking end users to their applications. Cabling is also a big issue, and enterprises want to get the lowest cost cabling installed over the longest period of time to get the best bang for the buck. (In many networks, the cabling costs dwarf the switching costs, so cabling becomes a real limiting factor.) Hyperscalers, clouds, and HPC centers drop in new machines with new cabling every time they do an upgrade. It is just the way they roll, and for hyperscalers, they upgrade whole datacenters, not just clusters. Operating expenses are also a target for enterprise networks, and that means lowering the power draw of switches and network interface cards inside but also taking out some layers of software and complexity in the network to take some more chunks out of those operating expenses.

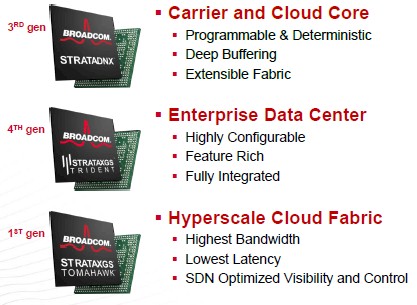

The Trident-II+ ASIC from Broadcom is implemented in 28 nanometer processes and is manufactured by Taiwan Semiconductor Manufacturing Corp, as so many chips are these days. The prior Trident-II chip was etched in 40 nanometer processes and supported aggregate switching bandwidths of between 720 Gb/sec to 1.28 Tb/sec, depending on the model of the ASIC. The bandwidth on the Trident-II+ is set at 1.28 Tb/sec; the port-to-port latency hop is under 600 nanoseconds for a Trident-II+ switch, about the same as the predecessor Trident-II. (Incidentally, the “Tomahawk” StrataXGS variants created for hyperscalers and clouds that uses 25 Gb/sec signaling lanes instead of 10 Gb/sec lanes, has a port-to-port hop latency of under 400 nanoseconds. So Tomahawk switches not only have more bandwidth at a lower cost per bit shuttling, but also have lower latency.)

The Trident-II+ is also pin compatible with the Trident-II, so it can drop into existing switch designs, and makes use of the same Broadcom software development kit and APIs, too. So any network operating system that ran on the earlier chip should run on the new chip.

The big change that is going to get the attention of enterprises is the built-in VXLAN gateway support that is etched into the Trident-II+ chip. Compared to the Trident-II chip, the Trident-II+ has double the VXLAN performance because it can do single pass routing at 1.28 Tb/sec speeds; the Trident-II predecessor did a double pass routing that effectively ran at half this speed. This VXLAN gateway support embedded in the Trident-II+ ASIC works over any VXLAN topology, Sankar says, whether the VXLAN overlay is switched or routed, including hypervisor to switch, switch to hypervisor, switch to switch, switch to core – or unicast or multicast running over IPv4 or IPv6.

The side effect of putting this VXLAN functionality in the top of rack switch is that functions that were done in the hypervisor or a virtual switch running on servers are now done up in the switch. So about 30 percent of the CPU cycles that were dedicated to the hypervisor and vSwitch are now freed up and can be dedicated to getting back to running applications, says Sankar.

With the shift to the 28 nanometer processes, Broadcom is able to take about 30 percent off the heat coming off the chip, which means that switch makers can move to smaller power supplies and fans and the power draw per port drops by about 30 percent. that drop in power means that on a 300-rack setup – not an unusual size for a large enterprise – moving to a switch using the Trident-II+ could save them about 100 megawatt-hours of electricity consumption per year. (That is something on the order of $15,000 per year in lower energy costs, and then there is less cooling to do for the network gear on top of that.)

While those power savings are important, the enhanced support for virtual network overlays, a larger access control list rule database, and larger buffers are going to significantly increase the performance of networks based on Trident-II+ ASICs, says Sankar. The ACL rules database is four times larger (the exact number is not divulged), and the Smart Buffer packet buffer on the chip has been boosted by 33 percent from 7.6 MB to 10 MB. Back when the Trident-II was launched in August 2012, the on-chip packet buffer memory was tweaked so it could be dynamically shared across all ports and queues in the switch and had tweaks to make it much more efficient. In fact, Broadcom said that the 7.6 MB of Smart Buffer implemented on a 96 port switch running at 10 Gb/sec gave the same performance as a regular 40 MB static buffer. (Every little bit of shaving on memory helps cut down the cost and complexity of the ASIC.) The point is, enterprises are going to have a lot of east-west traffic in their networks and they are going to oversubscribe their switches, and that means congestion that needs to be mitigated by more packet buffers.

The Trident-II+ doesn’t just support VXLAN for virtual network overlays, but also the NVGRE protocol championed by Microsoft. The chip has 128 serial interfaces running at 10 Gb/sec, and importantly can be configured with 10 Gb/sec downlinks and up to eight uplinks running at 100 Gb/sec, positioning enterprises for a future 100 Gb/sec spine upgrade on their networks. Sankar expects for switch makers to offer switches with two, four, or eight 100 Gb/sec uplinks, and switches could be configured with more than 100 ports running at 10 Gb/sec or 32 ports running at 40 Gb/sec. The popular configuration will likely be 48 downlinks at 10 Gb/sec and four uplinks at 100 Gb/sec.

The important thing is that with 100 Gb/sec uplinks, switches will be able to plug into spines and cores based on the Tomahawk StrataXGS variants as well as the heftier switches that Broadcom is enabling through its latest “Dune” StrataDNX ASICs, which launched last month. The Dune chips don’t have the same bandwidth as the Tridents and Tomahawks, but they have off-chip memory for packet buffers and configuration tables, which is vital for Ethernet networks with different speeds running on the same network. The speed mismatch can cause a lot of congestion.

The Trident-II+ chips are sampling now, and Broadcom expects for its partners to be delivering products based on them before the end of the year.

Be the first to comment