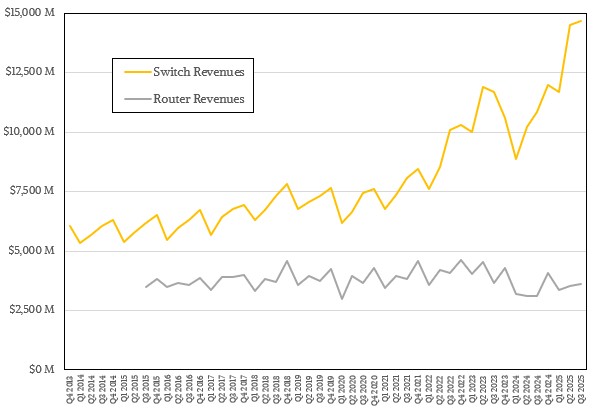

By virtue of its scale-out capability, which is key for driving up the size of absolutely enormous AI clusters, and of its universality, Ethernet switch sales are booming, and if recent history is any guide, we can expect Ethernet revenues to climb exponentially higher in the coming quarters as well.

So long as the GenAI expansion in IT spending does not deflate. . . .

In the third quarter, the most recent one for which IDC has data available for Ethernet switching and routing revenues, sales of switches based on the same Ethernet protocol that powers the backbone of the Internet as well as our campus networks and our edges rose by 35.2 percent year on year to kiss $14.7 billion. This is a record level of Ethernet switch sales, and it even bested the second quarter of last year by 1.1 percent.

Almost all of the growth, after we have poured all of the IDC data into our historical spreadsheet and made our estimates where necessary, came from the sales of switchery with 200 Gb/sec, 400 Gb/sec, or 800 Gb/sec speeds. If you do the math on what IDC said in its report, then sales of such high-end gear comprised a combined $5.43 billion in revenues, or 37 percent of the entire worldwide Ethernet switching pie. HPC shops and some AI model builders are still buying InfiniBand switches, which are not tracked in this report; there are still proprietary interconnects out there that get some sales, too. But it is safe to say that Ethernet gets the lion’s share for now unless and until someone innovates outside of Ethernet and convinces customers to bet against history.
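The share quoted above is simple division, sketched here in Python using only the two revenue figures from the text. The names are ours and purely illustrative, and the inputs are rounded, so treat the result as approximate:

```python
# Figures quoted in the text, in billions of US dollars (rounded).
TOTAL_ETHERNET_SWITCH_REVENUE_BN = 14.7  # all Ethernet switching, Q3 2025
HIGH_SPEED_REVENUE_BN = 5.43             # 200, 400, and 800 Gb/sec gear combined


def share_percent(part: float, whole: float) -> float:
    """Return `part` as a percentage of `whole`."""
    return 100.0 * part / whole


high_speed_share = share_percent(
    HIGH_SPEED_REVENUE_BN, TOTAL_ETHERNET_SWITCH_REVENUE_BN
)
print(f"High-speed share of Ethernet switching: {high_speed_share:.1f} percent")
```

The result rounds to the 37 percent cited above.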

We live in a world where the cloud builders made routing protocols run on gussied up and stripped down Ethernet switch ASICs to span datacenters or link between them in regions, and thereby get around buying expensive routers from Cisco Systems, Juniper Networks, or Huawei Technologies. This worked brilliantly for the distributed storage, compute, and analytics workloads running at massive scale at hyperscalers and cloud builders.

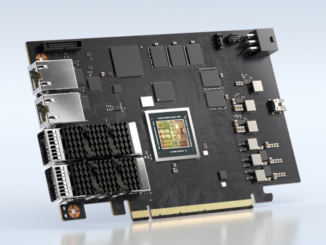

But AI workloads need something that is more like an HPC fabric to create large memory domains, and so a lot of the features of InfiniBand, arguably the best interconnect ever created but limited in scale and limited to one vendor source (Nvidia), were ported to Ethernet. Now, with the Ultra Ethernet Consortium, other features are being added to the Ethernet protocol (such as packet spraying) to make its congestion control and adaptive routing less brittle. And so hyperscalers, cloud builders, and AI model builders are increasingly using Ethernet for their back-end networks to link nodes with GPUs and XPUs together while also having to upgrade their front-end Ethernet networks linking them to storage and other systems that feed into the AI systems.

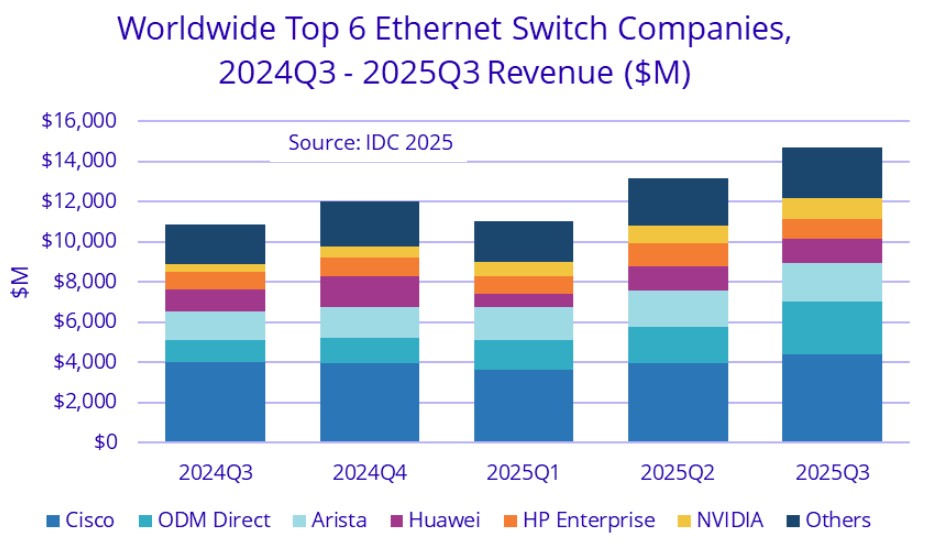

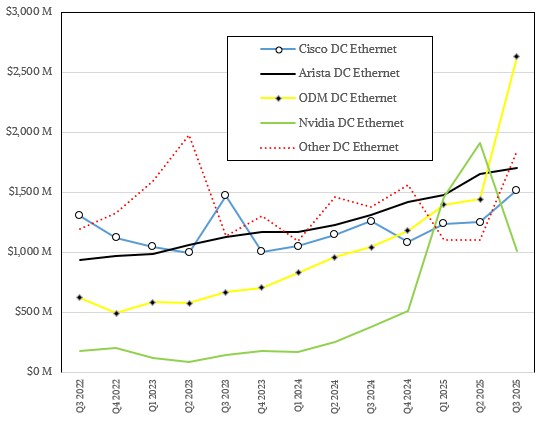

And, just as has happened with servers in the cloud era, the original design manufacturers, or ODMs, are getting a bigger slice of the switching in the datacenter. Here is the chart for all vendors selling datacenter and non-datacenter Ethernet switching, which certainly includes campus networks and possibly edge networks. (IDC has never been precise about this.) Take a gander:

It looks to us that IDC has revised Nvidia’s revenues downward for Q1 2025 and Q2 2025, and if that has happened, then it is probably because NICs, DPUs, and cables were extracted from the Nvidia numbers since this data is only supposed to be for Ethernet switching. Our historical data for Q1 and Q2 last year still shows the original IDC data, and we will update it as the market researcher provides precise updates.

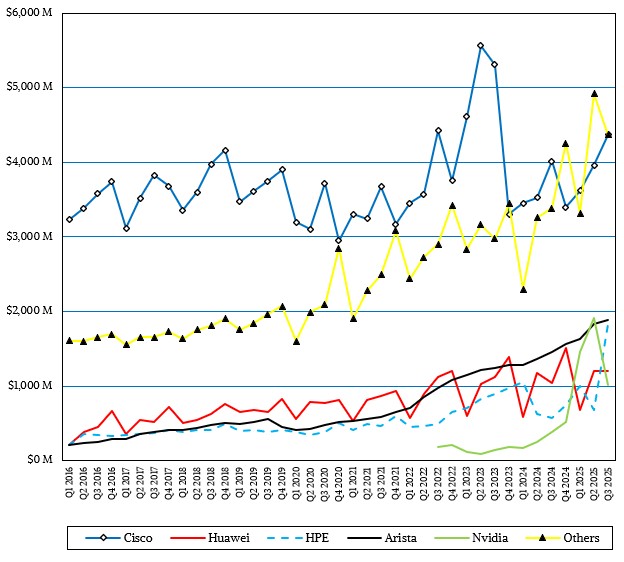

Here is the trendline by vendor for Ethernet switch sales of all kinds for the past decade that we have compiled and tweaked with updates from public statements made by IDC:

As we said, we think that Nvidia spike, shown in green of course, will be revised as we roll through the quarters. (We could go back and do it through pixel counting, but we have some medical stuff going on in our household and do not have time for that right now.)

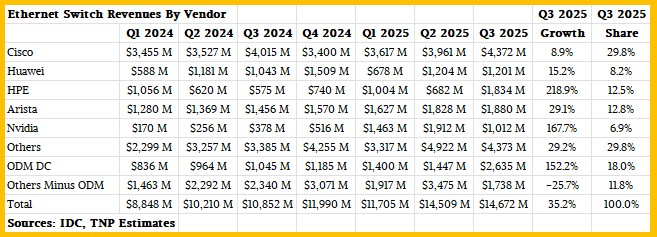

Here is the breakdown of Ethernet switch sales that we have calculated based on the past thirteen reports from IDC:

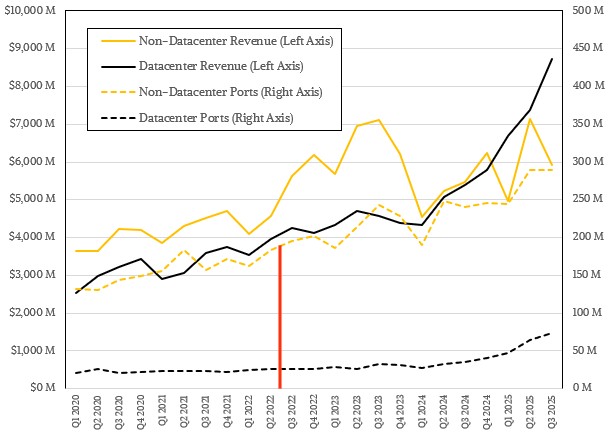

That chart and table above are for all Ethernet switching, but what we care about here at The Next Platform is the datacenter. Luckily, IDC still breaks out datacenter Ethernet switch revenues from non-datacenter sales, as it has been doing since the coronavirus pandemic hit in Q1 2020. Until Q2 2022, IDC also released to the public port counts shipped into the datacenter and outside of it, but stopped at that point. Since then, we have been estimating it as best we can. The demarcation between data and estimates for port counts is shown by the red line in the chart below:

In the third quarter of 2025, datacenter Ethernet switch sales rose by 62 percent to $8.73 billion – IDC gives the growth, we calculate the revenue based on historical data. That means datacenter switches account for 59.5 percent of the Ethernet switch market in Q3 2025, which is a new high as a slice of the pie. Our best guess is that across all Ethernet port speeds, 73.5 million ports shipped, which is more than double what shipped in the year-ago period. Of that, we think 27.9 million ports were for switches running at speeds of 200 Gb/sec and higher, and we know all of those were shipped into the datacenter. Once you are down in the 100 Gb/sec or lower speeds, this gear ends up in datacenters as well as campuses and sometimes edges.
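For readers who want to check the arithmetic, here is a minimal Python sketch that works only from the rounded figures in the paragraph above plus the $14.7 billion total cited earlier. It runs the calculation in reverse, backing out the year-ago revenue from the growth rate, which is our illustration and not necessarily how the spreadsheet does it. Because the inputs are rounded, the computed share can differ from the quoted 59.5 percent by a tenth of a point or so:

```python
# Figures quoted in the text (rounded); all names are illustrative.
TOTAL_REVENUE_BN = 14.7        # all Ethernet switching, Q3 2025
DATACENTER_REVENUE_BN = 8.73   # datacenter Ethernet switching, Q3 2025
DATACENTER_GROWTH = 0.62       # year-on-year growth reported by IDC
TOTAL_PORTS_M = 73.5           # estimated ports shipped, all speeds (millions)
HIGH_SPEED_PORTS_M = 27.9      # estimated ports at 200 Gb/sec and higher (millions)

# Revenue a year earlier, implied by the growth rate.
year_ago_datacenter_bn = DATACENTER_REVENUE_BN / (1.0 + DATACENTER_GROWTH)

# Datacenter slice of the whole pie, and what is left for campus and edge.
datacenter_share_pct = 100.0 * DATACENTER_REVENUE_BN / TOTAL_REVENUE_BN
non_datacenter_bn = TOTAL_REVENUE_BN - DATACENTER_REVENUE_BN

# Portion of all ports that run at 200 Gb/sec or faster.
high_speed_port_share_pct = 100.0 * HIGH_SPEED_PORTS_M / TOTAL_PORTS_M

print(f"Implied year-ago datacenter revenue: ${year_ago_datacenter_bn:.2f} billion")
print(f"Datacenter share of revenue: {datacenter_share_pct:.1f} percent")
print(f"Non-datacenter revenue: ${non_datacenter_bn:.2f} billion")
print(f"High-speed share of ports: {high_speed_port_share_pct:.1f} percent")
```

By this rough math, a bit more than a third of the ports account for the high-speed gear, all of it in the datacenter.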

IDC is still very good about dropping hints about how vendors ship Ethernet switching gear into the datacenter and outside of it, and if you take all those hints, you can build vendor revenue streams inside the datacenter. We have been doing this since the hints were first provided in Q3 2022, and here is what it looks like:

We shall see how that green line for Nvidia holds up as numbers are reported for Q4 2025, Q1 2026, and Q2 2026, but what seems very clear is that the ODMs as a group now have a dominant share of Ethernet switch revenues in the datacenter as of Q3 2025. Between the rise of the ODMs and the rise of Nvidia, the incumbents – mostly Cisco and Arista and maybe Huawei – are under pressure. But because the datacenter Ethernet market is expanding as the pressure is mounting, there appears to be enough room for all of the players to grow. For now.

Here is the cost per bit chart that we have been putting together for years, which admittedly has a lot of estimating witchcraft in it. Take it for what you will:

The gist is that you cannot get a cheaper cost per bit than on 400 Gb/sec switches, and there is about a 50 percent premium for bits on 800 Gb/sec devices at the moment. But 100 Gb/sec ports carry a 30 percent premium over 800 Gb/sec devices because they are based on older technology, and so on and so on all the way down to 1 Gb/sec switches, which are terribly expensive on a cost per bit basis.
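To see how those premiums stack up, here is a small Python sketch that normalizes cost per bit to the 400 Gb/sec baseline. It assumes the two premiums compound multiplicatively, which is our reading of the paragraph above and not something the chart data confirms; the names are illustrative:

```python
# Relative cost per bit, normalized so the cheapest tier (400 Gb/sec) is 1.0.
# Assumption: the premiums quoted in the text compound multiplicatively.
BASELINE = "400 Gb/sec"
PREMIUM_800_OVER_400 = 0.50  # "about a 50 percent premium" for 800 Gb/sec
PREMIUM_100_OVER_800 = 0.30  # "a 30 percent premium over 800 Gb/sec" for 100 Gb/sec

relative_cost_per_bit = {BASELINE: 1.0}
relative_cost_per_bit["800 Gb/sec"] = (
    relative_cost_per_bit[BASELINE] * (1.0 + PREMIUM_800_OVER_400)
)
relative_cost_per_bit["100 Gb/sec"] = (
    relative_cost_per_bit["800 Gb/sec"] * (1.0 + PREMIUM_100_OVER_800)
)

# Print the tiers from cheapest to most expensive per bit.
for speed, cost in sorted(relative_cost_per_bit.items(), key=lambda kv: kv[1]):
    print(f"{speed:>11}: {cost:.2f}x the cost per bit of {BASELINE}")
```

Under that assumption, a bit moved over a 100 Gb/sec port costs nearly twice what it does over a 400 Gb/sec port.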

That leaves routing. In Q3 2025, routing sales were a little bit higher than $3.6 billion, up 15.8 percent year on year and up 2.5 percent sequentially. Service providers, hyperscalers, and cloud builders accounted for 74 percent of router sales in the quarter, up 20.2 percent year on year, which means sales of routers to enterprises grew by only 4.9 percent to $940 million if you do the math.
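Doing that math explicitly, here is a minimal Python sketch of the enterprise split. It uses the rounded $3.6 billion total, so it lands slightly under the $940 million quoted above (actual sales were "a little bit higher" than $3.6 billion); the names are illustrative:

```python
# Figures quoted in the text (rounded); all names are illustrative.
ROUTING_REVENUE_BN = 3.6        # total router sales, Q3 2025
SERVICE_PROVIDER_SHARE = 0.74   # service providers, hyperscalers, cloud builders
ENTERPRISE_GROWTH = 0.049       # enterprise year-on-year growth

# Split the total between the two customer groups.
service_provider_bn = ROUTING_REVENUE_BN * SERVICE_PROVIDER_SHARE
enterprise_bn = ROUTING_REVENUE_BN * (1.0 - SERVICE_PROVIDER_SHARE)

# Enterprise revenue a year earlier, implied by the growth rate.
year_ago_enterprise_bn = enterprise_bn / (1.0 + ENTERPRISE_GROWTH)

print(f"Service provider and cloud routers: ${service_provider_bn:.2f} billion")
print(f"Enterprise routers: ${enterprise_bn * 1000:.0f} million")
print(f"Implied year-ago enterprise routers: ${year_ago_enterprise_bn * 1000:.0f} million")
```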

Cisco’s router revenues grew by 31.9 percent to $1.35 billion in the quarter, which we think is a testament to the converged Silicon One switch and router ASIC architecture that the company has rolled out over the past several years. Huawei’s router revenues, by contrast, rose by only 1.1 percent to $837 million. Other vendors in the market, including the converged Hewlett Packard Enterprise-Juniper Networks, accounted for $1.42 billion in router sales in Q3, up 12.4 percent across the handful of vendors that still build this kit.
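As a sanity check, the three vendor figures above should add back up to the roughly $3.6 billion routing total. A short Python sketch, using only the numbers in the text and illustrative names, also gives each group's slice:

```python
# Router revenues quoted in the text for Q3 2025, in billions of US dollars.
router_revenue_bn = {
    "Cisco": 1.35,
    "Huawei": 0.837,
    "Others": 1.42,  # includes Hewlett Packard Enterprise-Juniper Networks
}

# The vendor figures should reconcile with the roughly $3.6 billion total.
total_bn = sum(router_revenue_bn.values())

# Each vendor group's share of that total, in percent.
shares_pct = {
    vendor: 100.0 * revenue / total_bn
    for vendor, revenue in router_revenue_bn.items()
}

print(f"Total router revenue: ${total_bn:.2f} billion")
for vendor, share in shares_pct.items():
    print(f"{vendor:>7}: {share:.1f} percent")
```

The sum comes to about $3.61 billion, which squares with the "a little bit higher than $3.6 billion" cited for the quarter.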

mb

Yes?

Hello, great article!

I was discussing with an AI the idea that as the number of GPUs in a cluster explodes, the scale‑out strategy could change dramatically. Nvidia has pushed high‑speed interconnects for their clusters, but in a world where data centers have 32K–500K GPUs, it might be more cost‑effective to use a three‑tier 100G fabric, which would benefit Arista. What do you think about this? Are we still going to see higher‑speed connections in two‑tier designs?

I would have to see the economics of both. But clearly, everyone wants a rack or two XPU memory domain, which is not cheap, and then very high bandwidth and predictable latency between the XPUs in many thousands of those domains.

Cheaper is not always better; lowest cost per token is the goal. Much lower. Getting more work through an expensive machine designed for I/O is how the children of the System/360 still drive revenues and pretty good profits to this day, six decades in.