At Build 2017, Microsoft’s annual and influential developer event, CEO Satya Nadella introduced the idea of the “intelligent cloud” and “intelligent edge.” This vision of software’s immediate future considers the plethora of smart devices – cell phones, appliances, home environment controls, business machinery and the like – that permeate and, in large part, orchestrate our daily lives.

We all know about the Internet of Things. Today, the ability to glean valuable business insights from seemingly mundane device telemetry is impressive. Consider the case of the connected cows. Researchers at a farm attached pedometers to dairy cows, largely to monitor the health of the animals; an active cow is usually a healthy cow. But the researchers also noted that an increase in mobility signaled the onset of estrus (“going into heat”) in a cow.

From that data, they discovered an even more valuable insight: the best times to inseminate a cow to produce another cow, or a bull. Based on this interpretation of data, the researchers could tell a farmer which cows to impregnate, and when, to get the desired livestock sex ratios. Modern technology is making even the Earth’s oldest economies hyper-efficient, using something as simple as a pedometer and, of course, the massive computing efficiencies of the cloud.

This is what big data, artificial intelligence, public cloud, and IoT do well today: Accept telemetry, interpret it, glean insights from it, and create an action plan based on that data interpretation. Nadella’s keynote at Build was talking about the next logical step in this process: Automating the ability to have our devices respond to these cloud-based, AI insights.

From Insights To Automatic Action

Consider a workplace surveillance camera. Suppose it’s located in a busy warehouse. It can certainly broadcast its video to the cloud. That’s easy enough. But what if an AI were watching that stream: an intelligent agent that can see two forklifts converging on the same blind corner and sense that a collision is imminent?

Then, sensing that impending collision, the cloud could immediately instruct both forklifts to stop, and instruct them from then on to pause and automatically beep their horns when approaching that corner. From there, it could log the incident and alert the warehouse supervisor and safety manager. Or, even better, it could identify the moment stacked boxes create a blind corner, well before any collision, and alert the foreman so the hazard can be resolved before it causes an accident. It could also schedule an immediate safety procedures review meeting, send push notifications to all the attendees and automatically compile the evidence into a presentation for review.

That was the essence of the Build keynote: The cloud interprets IoT telemetry, in real time, with AI. And that AI can, in turn, instruct other IoT devices to do things based on its interpretation.
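To make the forklift scenario concrete, here is a minimal Python sketch of the "interpret telemetry, then instruct devices" loop. Everything in it is an assumption made for illustration: the `Forklift` record, the `plan_actions` function, the command dictionaries and the thresholds are hypothetical, and a simple time-to-corner estimate stands in for the AI that would actually watch the video stream.

```python
import math
from dataclasses import dataclass


@dataclass
class Forklift:
    """Hypothetical telemetry record; assumes the forklift is heading for the corner."""
    forklift_id: str
    x: float      # position in metres
    y: float
    speed: float  # metres per second


def seconds_to_corner(forklift, corner):
    """Estimate how long until the forklift reaches the corner."""
    if forklift.speed <= 0:
        return math.inf  # a stationary forklift never arrives
    distance = math.hypot(corner[0] - forklift.x, corner[1] - forklift.y)
    return distance / forklift.speed


def plan_actions(forklifts, corner, window_s=2.0, horizon_s=10.0):
    """Return commands for forklifts predicted to reach the corner together.

    Two forklifts are flagged when both arrive within `horizon_s` seconds
    and their arrival times differ by at most `window_s` seconds.
    """
    etas = {f.forklift_id: seconds_to_corner(f, corner) for f in forklifts}
    ids = sorted(etas)
    flagged = set()
    for i, a in enumerate(ids):
        for b in ids[i + 1:]:
            both_soon = max(etas[a], etas[b]) <= horizon_s
            if both_soon and abs(etas[a] - etas[b]) <= window_s:
                flagged.update((a, b))

    commands = [{"target": fid, "action": "stop"} for fid in sorted(flagged)]
    if flagged:
        # Log-and-alert step: tell a human which forklifts were involved.
        commands.append(
            {"target": "supervisor", "action": "alert", "forklifts": sorted(flagged)}
        )
    return commands


if __name__ == "__main__":
    fleet = [
        Forklift("A", 0.0, 10.0, 2.0),
        Forklift("B", 8.0, 0.0, 2.0),
        Forklift("C", 100.0, 0.0, 1.0),
    ]
    for command in plan_actions(fleet, corner=(0.0, 0.0)):
        print(command)
```

In a real deployment the interesting part is the model that turns camera frames into positions and speeds; the sketch only shows how its output could become commands sent back to devices.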

Serverless At The Center And The Edge

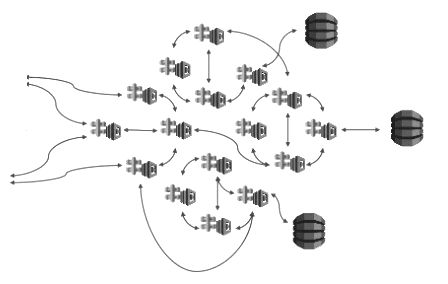

In Microsoft’s “intelligent cloud, intelligent edge” vision, processing this inbound telemetry with AI and running commands on IoT devices is done with serverless technology. That is, in the cloud, we see serverless functions, such as Azure Functions or AWS Lambda, calling the AI tools that interpret the inbound data. And at the device, we use a container platform, such as Docker or Mesosphere, to run the code that instructs the device how to respond to inbound cloud data. All of this is secured with encryption certificates, and managed by turnkey, end-to-end systems, such as AWS Greengrass and Azure IoT Edge (both of which are, admittedly, in their infancy).
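The division of labor described above can be sketched in plain Python. This is an illustration under stated assumptions, not the Azure Functions or AWS Lambda API: `handle_telemetry` stands in for the cloud-side serverless function, `classify` is a stub in place of a real AI service call, `apply_command` stands in for the code running in the device's container, and the temperature threshold and message fields are invented for the example.

```python
import json


def classify(reading):
    """Stub for the AI service; a real function would call a hosted model."""
    # Assumed rule for illustration only: anything above 80 C is a problem.
    return "overheating" if reading["temperature_c"] > 80.0 else "normal"


def handle_telemetry(event_body):
    """Cloud side: a stateless handler that turns one telemetry message
    into one command message for the device that sent it."""
    reading = json.loads(event_body)
    label = classify(reading)
    command = {
        "device_id": reading["device_id"],
        "action": "shutdown" if label == "overheating" else "none",
        "reason": label,
    }
    return json.dumps(command)


def apply_command(command_body, device_state):
    """Edge side: code in the device's container that acts on the command."""
    command = json.loads(command_body)
    if command["action"] == "shutdown":
        device_state["running"] = False
    return device_state


if __name__ == "__main__":
    message = json.dumps({"device_id": "press-7", "temperature_c": 91.5})
    command = handle_telemetry(message)
    print(apply_command(command, {"running": True}))
```

The handler keeps no state between calls, which is what lets a serverless platform run as many copies as the telemetry volume requires; the device-side function is equally small, which is why it fits comfortably in a container on modest hardware.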

Soon, we should also see IoT manufacturers preparing their devices to host these containerized workloads. Given the computing power of the average edge device, running a virtualized workload is no problem; we just need to agree on the host system and how to accept connections.

The simplest solution is to create Android-, OS- and manufacturer-specific Linux implementations that support standard container technology, such as Docker, and connect via HTTPS or already standardized message protocols, such as AMQP.

Coming Soon: Everyone Is A Programmer

This vision of a completely interconnected device grid, powered by serverless code and AI, is the near future. But if we take a moment to reflect on the implications of serverless and AI as we know them today, it’s not too difficult to see an even more sublime shift a few years down the road: The end of computer programming as an art. And, more tantalizingly, the advent of the ability for everyone, regardless of technical skill – in fact, with no technical skills required – to write programs.

As these cloud technologies force us to abandon monolithic applications and write our software using microservices and application programming interfaces, increasingly we will have a body of easily reusable, narrowly defined functionality.

For example, seldom do we see brand new web applications delivered with a traditional, server-based, single-codebase model. Increasingly, they are single-page applications, using APIs and front-end frameworks, such as Angular and React, to tie together functionality.

Today, we already see modern web applications that have an identity provider API and a user interface API and a storefront API and an inventory API and an order fulfillment API and a customer data API and a payments API and so on and so on. A web application isn’t so much code, but rather an integration of all these small pieces, tied together into workflows.

When we reach the point where we can define almost any business logic, running in any environment, by simply snapping together these Lego-like building blocks of microservices and APIs, a person will no longer need coding skills to build software.

Instead, the average person will be able to simply describe the solution she seeks, using common language. From there, AI will automatically build, from the available “building block” microservices, a workflow that conforms to the requirements and produces the desired output. You can see this already if you use a voice agent, such as Siri, Alexa, Cortana or OK Google.

These voice agents are great at interpreting natural language into an actionable set of steps. And they continue to get better as more and more people use them. AI is making great strides in understanding semantics, including cultural and regional dialects.

This will use the same processes Nadella described at Build to allow anyone to simply speak a workflow and turn it into a program: “Siri, I want to make a web photo gallery of my sister’s birthday party. Use pictures from Instagram with the hashtag #ashley30 and Facebook for all my friends’ pictures taken at the Olive Garden on Main Street between 7 and 10 pm tonight. Moderate any pictures that are by people who aren’t in my contact list, but immediately publish pictures taken by me, my family and my friends.”

The APIs to do this already exist. The tooling to chain together these workflows already exists. The voice agents already exist. All it’s going to take is the AI that can parse that statement and turn it into a workflow. And that’s not very far away at all.
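One way to picture the output of that parsing step is as a small data structure that existing building blocks can execute. The Python sketch below is purely hypothetical: the `WORKFLOW` format, the `Photo` record and the `run_workflow` function are invented for illustration, the photos are supplied directly rather than fetched from Instagram or Facebook, and the time-of-day filter from the spoken request is left out for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Photo:
    """Hypothetical photo record, as if already fetched from a service API."""
    url: str
    author: str
    source: str                   # "instagram" or "facebook"
    tags: frozenset = frozenset()
    place: str = ""


# What an AI might emit after parsing the spoken request (assumed format).
WORKFLOW = {
    "select": [
        {"source": "instagram", "hashtag": "#ashley30"},
        {"source": "facebook", "place": "Olive Garden, Main Street"},
    ],
    "untrusted_action": "moderate",
}


def matches(photo, rule):
    """Check one photo against one selection rule."""
    if photo.source != rule["source"]:
        return False
    if "hashtag" in rule and rule["hashtag"] not in photo.tags:
        return False
    if "place" in rule and rule["place"] != photo.place:
        return False
    return True


def run_workflow(workflow, photos, contacts):
    """Publish photos by known contacts; queue the rest for moderation."""
    published, moderation_queue = [], []
    for photo in photos:
        if not any(matches(photo, rule) for rule in workflow["select"]):
            continue  # not part of this gallery
        if photo.author in contacts:
            published.append(photo.url)
        elif workflow["untrusted_action"] == "moderate":
            moderation_queue.append(photo.url)
    return published, moderation_queue
```

Given a photo by a contact tagged #ashley30 and a Facebook photo by a stranger taken at the restaurant, `run_workflow` would publish the first and queue the second. The hard part, as the article says, is producing `WORKFLOW` from a sentence; running it is ordinary glue code.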

Doug Vanderweide is a Microsoft Certified Solutions Developer: Cloud Platform and Infrastructure and a Microsoft Certified Trainer who currently serves as an Azure course author and subject matter expert for Linux Academy and Cloud Assessments. Follow him on Twitter @dougvdotcom.