Finding NeMo Features for Fresh LLM Building Boost

This week Nvidia shared details about upcoming updates to its platform for building, tuning, and deploying generative AI models. …

Server makers Dell, Hewlett Packard Enterprise, and Lenovo, who are the three largest original manufacturers of systems in the world, ranked in that order, are adding to the spectrum of interconnects they offer to their enterprise customers. …

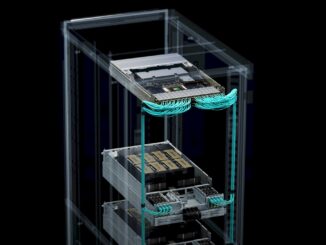

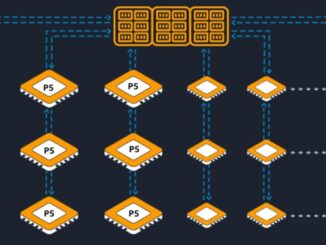

Since the advent of distributed computing, there has been a tension between the tight coherency of memory and its compute within a node – the base level of a unit of compute – and the looser coherency over the network across those nodes. …

Over the past few years, the Arm architecture has made steady gains, particularly among the hyperscalers and cloud builders. …

If you are looking for an alternative to Nvidia GPUs for AI inference – and who isn’t these days with generative AI being the hottest thing since a volcanic eruption – then you might want to give Groq a call. …

The exorbitant cost of GPU-accelerated systems for training and inference and the latest rush to find gold in mountains of corporate data are combining to exert tectonic forces on the datacenter landscape and push up a new Himalaya range – with Nvidia as its steepest and highest peak. …

In a world where allocations of “Hopper” H100 GPUs coming out of Nvidia’s factories are going out well into 2024, and the allocations for the impending “Antares” MI300X and MI300A GPUs are probably long since spoken for, anyone trying to build a GPU cluster to power a large language model for training or inference has to think outside of the box. …

For very sound technical and economic reasons, processors of all kinds have been overprovisioned on compute and underprovisioned on memory bandwidth – and sometimes memory capacity depending on the device and depending on the workload – for decades. …

Because they are in the front of the line for acquiring Nvidia datacenter GPUs, the hyperscalers and cloud builders are going to be the ones who benefit mightily from shortages of matrix math engines that can train AI models and run inference against them. …

If you had to sum up the second half of 2022 and the first half of 2023 from the perspective of the semiconductor industry, it would be that we made too many CPUs for PCs, smartphones, and servers and we didn’t make enough GPUs for the datacenter. …

All Content Copyright The Next Platform