Accelerating Deep Learning Insights With GPU-Based Systems

Explosive data growth and a rising demand for real-time analytics are making high performance computing (HPC) technologies increasingly vital to success. …

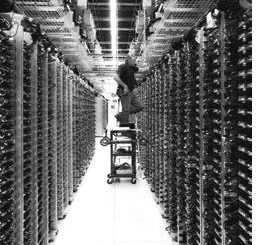

The oil and gas industry has been on the cutting edge of many waves of computing over the several decades in which supercomputers have been used to model oil reservoirs, both to plan the development of an oil field and to quantify its stored reserves, and therefore the company's possible future revenue stream. …

For years, the pace of change in large-scale supercomputing neatly tracked with the curve of Moore’s Law. …

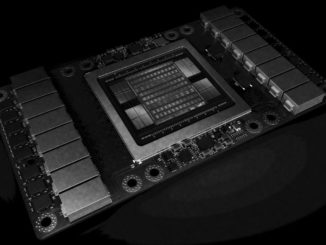

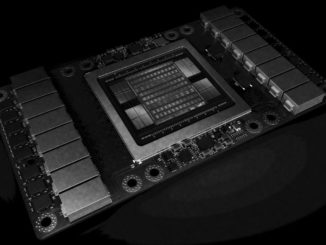

One reason Nvidia has been able to quadruple revenues for its Tesla accelerators in recent quarters is that it doesn’t just sell raw accelerators and PCI-Express cards, but has become a system vendor in its own right through its DGX-1 server line. …

We are still chewing through all of the announcements and talk at the GPU Technology Conference that Nvidia hosted in its San Jose stomping grounds last week, and as such we are thinking about the much bigger role that graphics processors are playing in datacenter compute, a realm that has seen five decades of dominance by central processors of one form or another. …

GPU computing has deep roots in supercomputing, but Nvidia is using that springboard to dive head first into the future of deep learning. …

Graphics chip maker Nvidia has spent more than a year carefully and methodically transforming its GPUs into the compute engines for modern HPC, machine learning, and database workloads. …

Scaling the performance of machine learning frameworks so they can train larger neural networks, or do the same training a lot faster, has meant that the hyperscalers of the world, who are essentially creating this technology, have had to rely on increasingly beefy compute nodes, these days almost universally augmented with GPUs. …

Google created quite a stir when it released architectural details and performance metrics for its homegrown Tensor Processing Unit (TPU) accelerator for machine learning algorithms last week. …

Increasing parallelism is the only way to get more work out of a system. …

All Content Copyright The Next Platform