Competition Heats Up In Cluster Interconnects

Any time a ranking of a technology is put together, that ranking is always called into question as to whether or not it is representative of reality. …

When IBM sold off its System x division to Lenovo Group in the fall of 2014, some big supercomputing centers in the United States and Europe that were long-time customers of Big Blue had to stop and think about what their future systems would look like and who would supply them. …

As we have written about extensively here at The Next Platform, there is no shortage of use cases in deep learning and machine learning where HPC hardware and software approaches have bled over to power next generation applications in image, speech, video, and other classification and learning tasks. …

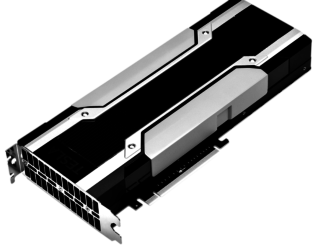

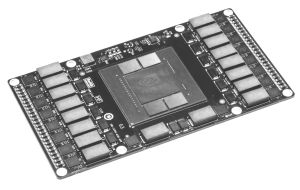

As we have noted over the last year in particular, GPUs are set for another tsunami of use cases for server workloads in high performance computing and, most recently, machine learning. …

Nvidia made a lot of big bets to bring its “Pascal” GP100 GPU to market, and its first implementation of the GPU is aimed at its Tesla P100 accelerator for radically improving the performance of massively parallel workloads like scientific simulations and machine learning algorithms. …

Deep learning could not have developed at the rapid pace it has over the last few years without companion work that has happened on the hardware side in high performance computing. …

For Google, Baidu, and a handful of other hyperscale companies that have been working with deep neural networks and advanced applications for machine learning well ahead of the rest of the world, how they build clusters for both the training and inference portions of such workloads is, for the most part, a well-guarded secret. …

The future of hyperscale datacenter workloads is becoming clearer, and as that picture emerges, one thing stands out: those workloads are heavily driven by a wealth of non-text content, much of it streamed in for processing and analysis from an ever-growing number of users of gaming, social network, and other web-based services. …

For more than a decade, graphics processor maker Nvidia has been championing the adoption of GPU accelerators as heavy-lifting compute engines for an increasing array of applications that can take advantage of the parallel processing inherent in a GPU. …

A little-known upstart Chinese chip maker called Phytium Technology was set to use the Hot Chips 27 conference in Silicon Valley as a coming-out party of sorts for its 64-bit ARM server processors, but the company’s director of research, Charles Zhang, was not permitted to come to the event because of visa issues. …

All Content Copyright The Next Platform