The Roads To Zettascale And Quantum Computing Are Long And Winding

In the United States, the first step on the road to exascale HPC systems began with a series of workshops in 2007. …

If you want to get a sense of what companies are really doing with AI infrastructure, and the issues of processing and network capacity, power, and cooling that they are facing, what you need to do is talk to some co-location datacenter providers. …

Google is a big company with thousands of researchers and tens of thousands of software engineers, who all hold their own opinions about what AI means to the future of business and the future of their own jobs and ours. …

Variety is not only the spice of life, it is also the way to drive innovation and to mitigate risk. …

Large language models, also known as AI foundation models and part of a broader category of AI transformer models, have been growing at an exponential pace in terms of the number of parameters they can process and the amount of compute and memory bandwidth capacity they require. …

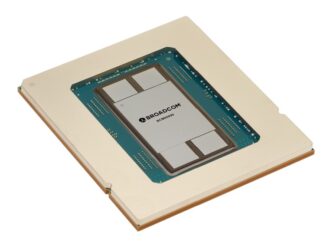

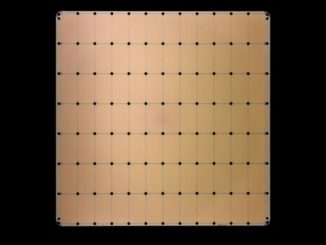

A decade ago, waferscale architectures were dismissed as impractical. Five years ago, they were touted as a fringe possibility for AI/ML. …

It is becoming increasingly clear – anecdotally at least – just how expensive it is to train large language models and recommender systems, which are arguably the two most important workloads driving AI into the enterprise. …

The HPC industry, after years of discussion and anticipation and some relatively minor delays, is now fully in the era of exascale computing, with the United States standing up Frontier, its first such supercomputer, earlier this year and planning two more for next year. …

When we last focused on Untether AI in 2021, the AI inferencing hardware startup had just secured $125 million in funding, which came a year after the company officially launched with its first-generation runAI200 devices and its unique at-memory inferencing approach. …

In March, Nvidia introduced its GH100, the first GPU based on the new “Hopper” architecture, which is aimed at both HPC and AI workloads, and importantly for the latter, supports an eight-bit FP8 floating point processing format. …

All Content Copyright The Next Platform