New Optimizations Improve Deep Learning Frameworks For CPUs

Today, most machine learning is done on processors. Some would say that acceleration of learning has to be done on GPUs, but for most users that is not good advice for several reasons. …

Chip giant Intel has been talking about CPU-FPGA compute complexes for so long that it is hard to remember sometimes that its hybrid Xeon-Arria compute unit, which puts a Xeon server chip and a midrange FPGA into a single Xeon processor socket, is not shipping as a volume product. …

There are a lot of different ways to skin the deep learning cat. …

Custom accelerators for neural network training have garnered plenty of attention in the last couple of years, but without significant software footwork, many are still difficult to program and could leave efficiencies on the table. …

The key to creating more efficient neural network models is rooted in trimming and refining the many parameters in deep learning models without losing accuracy. …

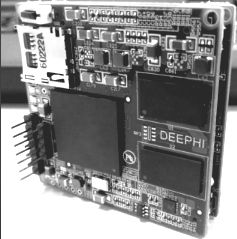

Around this time last year, we delved into a new FPGA-based architecture that targeted efficient, scalable machine learning inference from startup DeePhi Tech. …

The last two years have delivered a new wave of deep learning architectures designed specifically for tackling both training and inference sides of neural networks. …

Here at The Next Platform, we tend to focus on deep learning as it relates to hardware and systems versus algorithmic innovation, but at times, it is useful to look at the co-evolution of both code and machines over time to see what might be around the next corner. …

It is difficult to shed a tear for Moore’s Law when there are so many interesting architectural distractions on the systems horizon. …

As a thought exercise, let’s consider neural networks as massive graphs and begin considering the CPU as a passive slave to some higher order processor—one that can sling itself across multiple points on an ever-expanding network of connections feeding into itself, training, inferencing, and splitting off into multiple models on the same architecture. …

All Content Copyright The Next Platform