Fishing For Insight Into The Ocean Economy With HPC And AI

Historically and traditionally, academic supercomputing centers have been very open about the architecture and setup of their HPC systems, and it makes sense if you think about it. …

It doesn’t take a machine learning algorithm to predict that server makers are trying to cash in on the machine learning revolution at the major nexus points on the global Internet. …

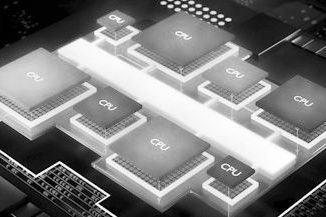

With Moore’s Law running out of steam, the chip design wizards at Intel are going off the board to tackle the exascale challenge. They have dreamed up a new architecture that could, in one fell swoop, kill off the general-purpose processor as a concept and the x86 instruction set as the foundation of modern computing. …

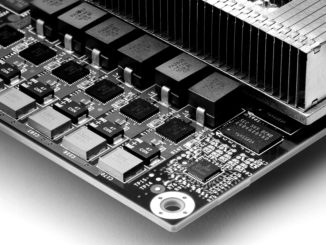

Over the last few years, we have detailed the explosion of new machine learning systems, with an influx of novel architectures ranging from deep learning chip startups to efforts by vendors and hyperscalers alike. …

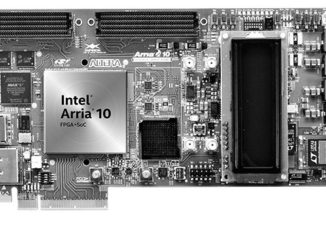

FPGAs might not have carved out a niche in the deep learning training space the way some expected, but the low-power, high-frequency needs of AI inference fit the curve of reprogrammable hardware quite well. …

Another Hot Chips conference has ended with yet another deep learning architecture to consider. …

Several chip startups have emerged over the last few years seeking new ways to train and execute neural networks efficiently. But why reinvent the wheel when each idea has yielded at least one small piece of a much bigger performance picture? …

A research team from Nvidia has provided interesting insight into using mixed precision for deep learning training on very large datasets, and into how performance and scalability are affected when training recurrent neural networks with a batch size of 32,000. …

Much has been written about the potential for FPGAs to take a leadership role in accelerating deep learning. In practice, however, the hurdles of getting from concept to high-performance hardware design are still taller than many AI shops are willing to scale, particularly when GPUs dominate training and, in a pinch, standard CPUs will do just fine for datacenter inference because they involve little developer overhead. …

Enterprises that want to leverage the huge amounts of data they are generating to gain useful insights and make faster and better business decisions are going to have to use machine learning at scale for modeling and training. …

All Content Copyright The Next Platform