Back in 2015, when Intel was flush with cash thanks to a near-monopoly in X86 datacenter compute, it shelled out an incredible $16.7 billion to acquire FPGA maker Altera because a few hyperscalers and cloud builders were monkeying around with offloading whole chunks of CPU compute to FPGAs to create SmartNICs.

This does not seem like such an incredible amount of money now, when Intel needs about that much cash to build a chip fab. But back then, 22 nanometer and 14 nanometer processes were humming and Intel had not yet hit the 10 nanometer wall in its foundries. AMD had not yet reanimated itself in the datacenter, and Arm server CPUs were still more or less ethereal.

That said, Intel was jumpy about threats to its hegemony, particularly with Nvidia having successfully created a burgeoning HPC business and an absolutely explosive AI business based on offloading massively parallel compute jobs from CPUs to GPUs. So you can understand, in principle, why Intel might have been so eager about – and worried about – FPGAs storming the datacenter, which we talked about three months before the deal went down. When the deal was announced in June 2015, Intel thought as much as a third of the servers in the hyperscaler and cloud datacenters would have an FPGA accelerator in them, and the early indicators were that something was going on.

What was going on, which we knew at the time and can say emphatically in hindsight, is that Intel was charging too much for Xeon CPU cores, and every kind of accelerator came out of the woodwork to try to beat it for various kinds of workloads.

Intel saw hundreds of thousands of Xeon sockets, and millions of cores, just disappearing. And maybe it freaked out a little. And maybe it wanted to slow this offload down as much as benefit from it. Intel does like to control the pace of technology, and any time you can slow it down when there is less competition, you make more profits, as the Data Center Group financials from 2010 through 2018 succinctly show.

And so, it is seven years later, and Intel has a respectable FPGA business that it has not invested enough in to stay competitive with archrival Xilinx, which was acquired by AMD for an even more stunning $49 billion in February 2022. This AMD acquisition was an all-stock funny money deal, not real cash like Intel spent on Altera, and there probably was not a better time to cash out on a stock peak than earlier this year.

In both cases, Altera and Xilinx have well-established markets with thousands of customers who have deep domain expertise and software and VHDL skills that are enormously valuable for any platform. Soft-coded FPGAs, with algorithms molded out of their fabrics of logic gates and local memories, have been a part of the compute substrate for four decades and serve a vital middle ground between generic applications written in low-level and high-level programming languages running on X86 or Arm compute engines and custom ASICs with hard-coded algorithms and routines.

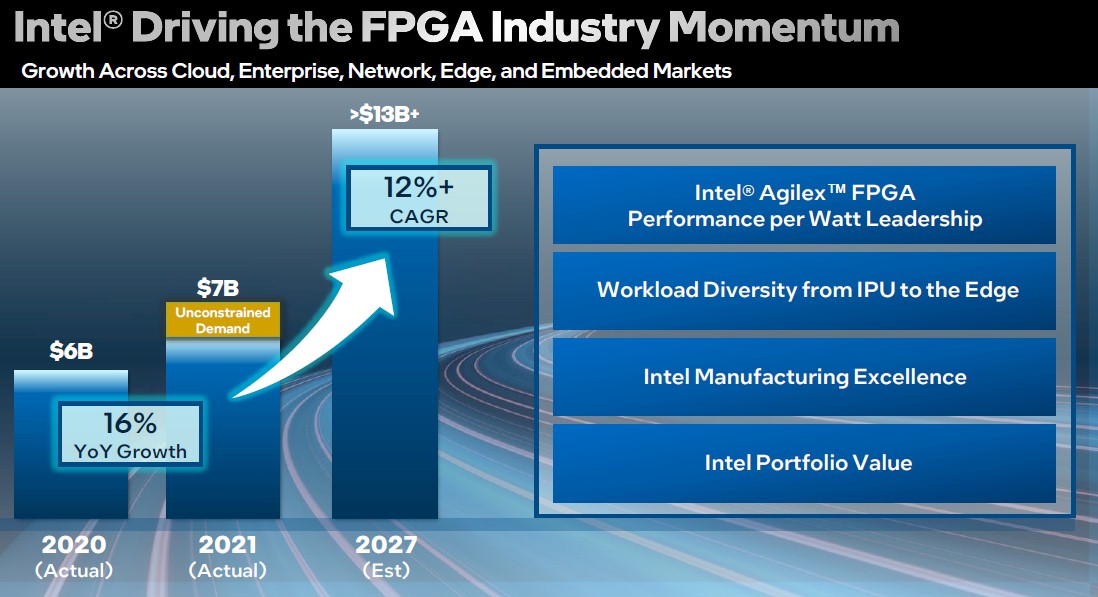

FPGAs are not going away even if they have not taken over the world. And now, Intel is ready to get down to business and compete against AMD/Xilinx for more of the FPGA market, which should generate somewhere between $8 billion and $9 billion in 2022 and rise at a compound annual growth rate of 12 percent between 2022 and 2027 inclusive to exceed $13 billion at the end of that range.
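To see how that forecast hangs together, the sketch below compounds the 2022 estimate forward at the quoted 12 percent rate. It assumes five annual compounding periods from 2022 to 2027 and plugs in both ends of the $8 billion to $9 billion range; `project_market` is a hypothetical helper of ours, not anything Intel published.

```python
def project_market(base_billions: float, cagr: float, years: int) -> float:
    """Compound a starting market size forward at a fixed annual growth rate."""
    return base_billions * (1.0 + cagr) ** years


# Low and high ends of the 2022 estimate, compounded over 2022 -> 2027.
# Assumption: "2022 through 2027" means five growth periods.
for base in (8.0, 9.0):
    size_2027 = project_market(base, 0.12, 5)
    print(f"${base:.1f}B in 2022 -> ${size_2027:.1f}B in 2027")
```

Under this reading both ends land above $13 billion (roughly $14.1 billion and $15.9 billion), so the "exceed $13 billion" figure is consistent with the stated growth rate.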

The FPGA market chart above was presented by Shannon Poulin, general manager of the Programmable Solutions Group, which sits within the Datacenter and AI Group under Sandra Rivera but which has a dotted line report to Nick McKeown, who runs the Network and Edge Group and whom we spoke to recently about all sorts of things. Poulin is familiar to many of us as the executive who drove the Xeon CPU roadmap between 2011 and 2015. After that, Poulin was in charge of Intel’s relationships with its largest customers, including the hyperscalers and cloud builders as well as the OEMs, ODMs, and large enterprises, and when Dan McNamara left Intel to take over the server business unit at AMD, Poulin was tapped to run the Programmable Solutions Group.

This week’s Intel Innovation 2022 event is Poulin’s first chance to elaborate on the strategy that the chip maker has come up with to revitalize its FPGA business, and he makes no bones about the problems with the Agilex line of products.

“When I came in about a year ago and took over the group, I really felt like we needed to look at supply chain,” explains Poulin. “We still have many of our products on legacy supply nodes, and many of those nodes are not made at Intel. And I’m talking about nodes that are five, ten, even twenty years old in some cases. We really felt that supply chain was going to be a critical factor for a lot of people as they were considering what are they designing into their product since things have gotten really tight in the industry. Over the past year and a half, we have seen even more engagements with procurement teams around second source supply ability – they want availability and redundancy built in. And we are taking our time and explaining all the things that we are doing on a packaging level, on a wafer level, and on a product level that we never really candidly had to explain before.”

We are inventing a new term here: “Supply win.” This is distinct from a “design win.” We have been dancing around this idea since the pandemic started, joking that if AMD had believed more in its own Epyc CPU business it could have sold even more processors than it has in the past several years. And because AMD did conservative forecasts – and honestly, who can blame them because it overshot its forecasts with Opterons and Athlons in a bygone era – Intel could sell relatively ancient “Skylake” and “Cascade Lake” Xeon SPs based on 14 nanometer technologies, and now “Ice Lake” Xeon SPs based on a very cranky 10 nanometer process, against “Rome” Epyc 7002 and “Milan” Epyc 7003 chips based on 7 nanometer cores, and soon the 5 nanometer cores used in “Genoa” and “Bergamo” Epyc 9000 series chips. (The Epyc processors have a chiplet design that has 14 nanometer and 12 nanometer I/O and memory controllers linking the cores together into what amounts to a distributed CPU.)

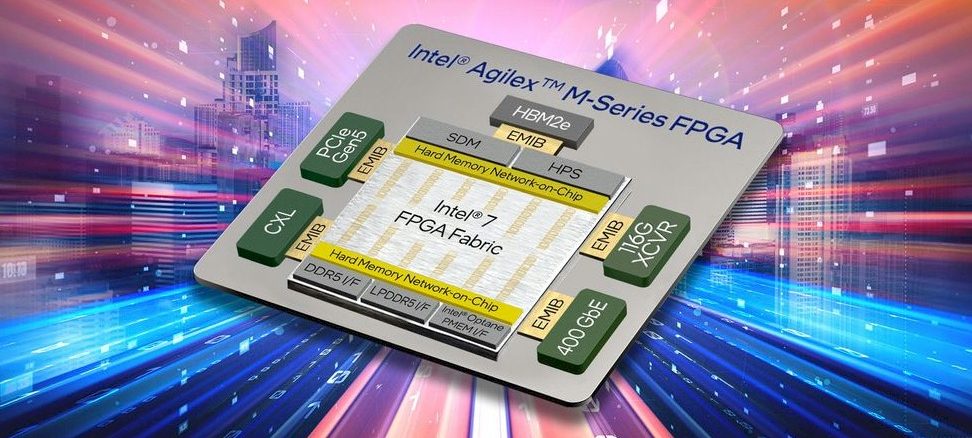

And so, AMD has the design wins and Intel has the supply wins when it comes to CPUs. The same is true to a lesser degree in the FPGA market. Intel has supply wins with low-end and midrange FPGAs, although it did get some design wins as well as supply wins with the high-end Stratix FPGAs based on 14 nanometer processes from 2018, the Agilex F-Series and I-Series FPGAs based on 10 nanometer processes from 2019, and the Agilex M-Series based on Intel 7 processes (second generation 10 nanometer SuperFin), which were announced earlier this year.

Intel does not want to live just on supply wins anymore, and the plan, Poulin tells The Next Platform, is to bring a line of lower-end and midrange FPGAs to market to span all of the use cases and to leverage Intel Foundry Services to etch this broadened Agilex FPGA lineup.

Intel has to etch with the fabs that it has, which means using Intel 7 (10 nanometer SuperFin) processes on new Agilex designs. But eventually, we think that Intel will shift gears and move FPGAs to the front of the line on process nodes because this is a good way to test a process and improve its yield. FPGAs are made in relatively low volumes and are very complex products that require the best transistors. Historically, FPGAs and datacenter GPUs have been on the leading-edge nodes for just this reason.

The roadmap makes no specific commitments about what processes Intel will use for its products in 2025 and beyond, and we think it is very likely that the chip maker will skip its Intel 4 (7 nanometer) and Intel 3 (a rev of 7 nanometer) processes and move straight to Intel 20A (5 nanometer with RibbonFET transistors). There is an outside chance that PSG might push as far ahead as the refined 5 nanometer RibbonFET process known as Intel 18A for the 2025 generation of Agilex FPGAs. This would get FPGAs back on the point of the process spear, where they belong.

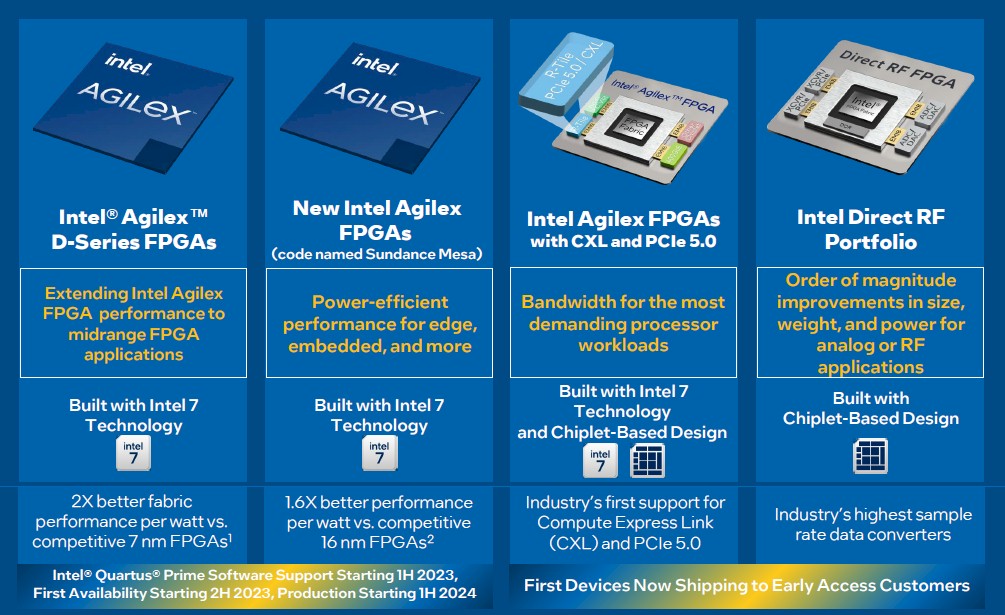

Here is what the near-term roadmap for the expanded FPGA lineup looks like:

The Agilex D-Series FPGAs, which we do not have a codename for, will be the new midrange product that takes over for older Cyclone, Arria, and Stratix FPGAs that date from when Altera was an independent company and that are based on 20 nanometer, 28 nanometer, and even older processes from Taiwan Semiconductor Manufacturing Co. We don’t know a lot about the D-Series, but like Nvidia’s “Grace” Arm server CPU, it will use low-power DDR5 memory (LPDDR5) to cut the power consumption of the overall device. The D-Series will have a smaller FPGA logic fabric (on the order of 100,000 logic gates), a lower thermal design point, and a lower price, too, and Poulin expects to see it in communications, industrial and manufacturing, and robotics use cases. An emulated version of the Agilex D-Series will be available in early 2023 so customers can see its features and test it out; the chip itself will start sampling in the second half of 2023 and be in volume production in 2024 using Intel 7.

We do not know which hard blocks the D-Series will include, such as PCI-Express controllers or Arm cores, or what its transceiver speeds will be. We do know it has a flavor of DDR5 memory, and we strongly suspect it will have 112 Gb/sec transceivers (as the current Agilex I-Series and M-Series do), PCI-Express 5.0 controllers with at least CXL 2.0 support and very likely CXL 3.0 support, and a four-core Arm CPU of some sort.

The next new FPGA coming out of Intel is codenamed “Sundance Mesa,” but we don’t know its series name yet. What we do know is that this will be an even smaller FPGA: Poulin says it will have a fabric on the order of 50,000 logic gates, with even lower cost and thermals.

These two future FPGAs will be monolithic designs. Intel will, of course, update its high-end, chiplet-based Agilex FPGAs that are based on the Intel 7 process and that have hard-coded PCI-Express 5.0 (and therefore CXL) controllers. This higher-end product, aimed at communications and video rendering workloads, will sport the second generation of the HyperFlex FPGA logic gate fabric, and it will have 112 Gb/sec transceivers and 400 Gb/sec Ethernet chiplets as well as the R-Tile chiplet for PCI-Express 5.0. All of this will link together using Intel’s EMIB technology, as past Stratix and Agilex FPGAs have done. As for Arm cores, this one will have a pair of skinny Cortex-A55 cores and a pair of fatter Cortex-A76 cores, and work will be load balanced across them as it changes. We strongly suspect that there will be an HBM2 memory variant of this device, and Poulin tells us that HBM memory will be an option on the roadmap for selected high-end FPGAs going forward.

This high end is where Agilex has been strong, but it only represents somewhere between 20 percent and 30 percent of the FPGA business, Poulin says.
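Poulin’s 20 percent to 30 percent figure is easier to weigh in dollars. The sketch below applies it to the $8 billion to $9 billion 2022 market estimate from the chart; that pairing is our assumption, since he did not say which total the share refers to, and `split_market` is a hypothetical helper.

```python
# Ranges quoted in the article. Assumption: the high-end share applies to
# the same $8B-$9B 2022 FPGA market total shown in the chart.
MARKET_2022 = (8.0, 9.0)       # billions of US dollars
HIGH_END_SHARE = (0.20, 0.30)  # fraction of the FPGA business


def split_market(market, share):
    """Return (min, max) bounds for the high-end slice and for everything else."""
    high_end = (market[0] * share[0], market[1] * share[1])
    rest = (market[0] * (1 - share[1]), market[1] * (1 - share[0]))
    return high_end, rest


high_end, rest = split_market(MARKET_2022, HIGH_END_SHARE)
print(f"High end:         ${high_end[0]:.1f}B to ${high_end[1]:.1f}B")
print(f"Low and midrange: ${rest[0]:.1f}B to ${rest[1]:.1f}B")
```

On those assumptions the high end is worth roughly $1.6 billion to $2.7 billion, leaving roughly $5.6 billion to $7.2 billion in the low-end and midrange segments that the D-Series and “Sundance Mesa” parts are aimed at.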

Finally, the Agilex line will include a new Direct RF family of devices, which have chiplets to do the digital-to-analog data conversions needed in military and certain communications workloads.

The latter two Agilex products are sampling to early customers here in the second half of 2022, so announcements should come soon.
