The Korea Meteorological Administration (KMA) operates more than 20 numerical weather prediction (NWP) models, including Korean NWP models, for weather forecasting.

Large-scale compute is certainly a critical factor in its operations, but vast computational capability has to be balanced with ultra-high-performance networking. Over the years, the KMA has moved through several system architectures, adjusting its network architecture along the way. That evolution is worth describing, because it has led to unprecedented demands on network capacity.

The KMA has a long history of using supercomputers to do weather forecasting, starting with the NEC SX-5 system installed in 2000 and three lines of Cray supercomputers installed in 2005, 2010, and 2015. The Cray X1E used a federated NUMA interconnect on vector processors, the Cray XE6 used the Gemini interconnect, and the most recent Cray XC40 system used the Aries interconnect. However, with a shift away from those long-held architectures, the KMA is moving in an exciting new direction with Nvidia and Lenovo.

To get to the heart of how this evolution has unfolded, we consulted a confidential KMA source to discuss the benefits and challenges that needed to be overcome. In doing so we discovered a unique outlook on the past, present, and future of networking for large-scale weather forecasting – and so too a much wider set of HPC applications.

David Gordon: Let’s talk about network decision-making: The 5th generation KMA supercomputers (it looks like they are called Guru and Maru) are based on Nvidia Quantum 200Gb/s InfiniBand networking. What is different about this InfiniBand network compared to that in the prior machines, and how does the interconnect choice help increase the performance by a factor of nearly 9X moving from the 4th generation KMA machine?

KMA: To quickly provide the results of various NWP models to forecasters, the KMA requires hundreds to thousands of compute nodes. At the same time, compute resources are required for improving and researching NWP models. To meet both needs, the KMA requires supercomputer resources on the scale of thousands of nodes.

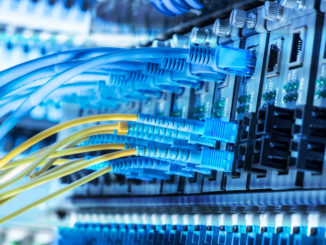

The KMA has introduced its fifth-generation supercomputer to meet future demand for those resources. As each generation grew in scale, the bandwidth of the compute network had to grow with it. Using the Nvidia Quantum InfiniBand networking platform, the 5th National Meteorological Supercomputer has approximately twice the interconnect bandwidth of the 4th supercomputer. This made it possible to build a single supercomputer connecting 8,000 systems, and to make the system more efficient and stable with the split technology.

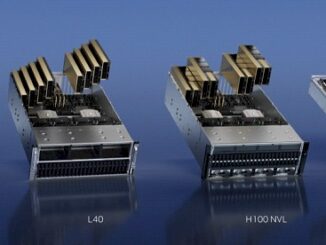

David Gordon: Your systems feature accelerated compute nodes. Can you explain what those are being used for, and how you see acceleration (GPUs) impacting your work in the future?

KMA: The 5th National Meteorological Supercomputer does not have accelerator nodes. However, separate GPU equipment is used for AI research. The KMA plans to use AI for a number of weather forecasting and climate research activities, which require accelerators. Currently, the NIMS (National Institute of Meteorological Sciences), a subordinate organization of the KMA, is already actively conducting AI research and attempting to apply it to the field of climate change. The plan is to consider applying it to the weather forecast model later.

David Gordon: Can you tell us why you wanted the liquid cooling capabilities included within the Lenovo ThinkSystem SD650-V2 server for your infrastructure? How have the results been so far?

KMA: For operational efficiency, supercomputers are configured with high-performance processors that generate more heat, which means more energy is required for cooling. Air cooling in particular is inefficient and drives energy consumption up. To combat this, the KMA introduced eco-friendly liquid cooling to increase energy efficiency. This allows the 5th National Meteorological Supercomputer to be four times more efficient than its predecessor.

David Gordon: There is a lot of talk around “Nowcasts” where artificial intelligence is used to trigger warnings for storms or floods, by using radar images fed to the system. Is this something you’re exploring? How do you see AI impacting the industry?

KMA: The KMA is continuing its research with subordinate organizations in many areas where AI can be used. As you mentioned, AI-related research on nowcasting using radar images is actively under way. In addition, research on typhoon tracks, fog prediction, and satellite image improvement, as well as on a forecast decision support system based on weather conditions, is currently in progress, all of which will help weather forecasting.

David Gordon: What is the topology of the InfiniBand network used, and why was this choice made? We assume that the KMA is sticking with a dragonfly topology as was probably used with the Aries-based 4th generation machine, but please explain.

KMA: The 5th National Meteorological Supercomputer connects all of the compute nodes using the Dragonfly+ network topology, which reduces packet hop count and latency compared with the Fat-Tree network topology commonly used for InfiniBand. Its structure is similar to the topology used in the 4th supercomputer.

David Gordon: How much does the InfiniBand network contribute to the ability to scale the resolution of the weather models from 12km down to 8km?

KMA: The KMA needed more than an 8X increase in computing performance to refine the resolution of the Korean NWP models from 12km to 8km. To achieve this, the total number of compute nodes naturally increased as well. To use the larger number of compute nodes as a single supercomputer, the National Center for Meteorological Supercomputer chose InfiniBand for the interconnect, built it successfully, and is currently operating it stably. Although thousands of systems are connected, the network delivers high performance and outstanding stability.

David Gordon: When does the KMA think it will be able to reach the Holy Grail of 1 km resolution, and what size of system and what kind of network will be needed to drive such weather modelling?

KMA: In general, a 2X resolution increase in an NWP model requires more than 10X the compute resources. So if the current 12km resolution model of the KIM (Korean Integrated Model) is expanded to 1km, it requires more than 10,000 times the computing power of the current model.

To improve resolution while using compute resources efficiently, the KMA is currently conducting research to turn the fixed grid (12km) system of the KIM into a variable grid system. In particular, it is focusing on achieving a resolution of 5km to 12km in the global area, and a 5km grid in East Asia, which greatly influences South Korea.
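
As a rough illustration of the rule of thumb quoted above, the short Python sketch below estimates how compute demand grows as grid spacing shrinks. The function name `compute_multiplier`, the default of exactly 10X per doubling (the KMA says "more than 10X"), and the option to round up to whole doublings are all assumptions made for this example, not the KMA's own method.

```python
import math


def compute_multiplier(current_km, target_km, cost_per_doubling=10.0,
                       whole_doublings=False):
    """Estimate the growth in compute when grid spacing shrinks.

    Assumes each 2X increase in resolution multiplies compute by
    cost_per_doubling (hypothetical default: exactly 10).
    """
    # Number of times the resolution doubles going from current to target.
    doublings = math.log2(current_km / target_km)
    if whole_doublings:
        # Round up to complete doublings for a conservative estimate.
        doublings = math.ceil(doublings)
    return cost_per_doubling ** doublings


if __name__ == "__main__":
    for target_km in (8, 5, 1):
        smooth = compute_multiplier(12, target_km)
        stepped = compute_multiplier(12, target_km, whole_doublings=True)
        print(f"12km -> {target_km}km: ~{smooth:,.0f}X "
              f"(~{stepped:,.0f}X with whole doublings)")
```

Going from 12km to 1km is about 3.6 doublings. At exactly 10X per doubling that works out to a few thousand times the compute, and rounding up to four full doublings gives 10,000 times, which is the same order of magnitude as the figure in the answer above. The real cost depends on the model, the time step, and the vertical resolution, so this should be read as an order-of-magnitude guide only.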

David Gordon: Can you describe how the balance between compute, storage and networking across your cluster helps you to execute forecasts faster and with greater precision?

KMA: The KMA operates more than 20 kinds of NWP models every day and produces more than 40TB of data.

To this end, the National Meteorological Supercomputer 5 has more than 24 PiB of computational storage and utilizes additional capacity and tape equipment for data storage. Computational storage is connected to all compute nodes via Nvidia Quantum 200Gb/s InfiniBand, offering hundreds of GB/s of performance when operating weather prediction models.

NWP models require extensive computations, communication with multiple nodes, and high-speed I/O performance.

To analyze vast amounts of observations and quickly generate numerical prediction materials through trillions of calculations, meteorological supercomputers need a balance between high-performance processors, memory, high-speed interconnect networks, and high-speed storage – with a disaster recovery system that guarantees stable operation and downtime minimization.

The National Meteorological Supercomputer 5 supports the weather forecasts that protect people’s property and lives by stably operating high performance compute resources 24 hours a day.

Sponsored by Nvidia
