A key trend in the ever-changing IT world is bringing whatever is needed – processing, storage, analytics, networking – closer to where the data is. The growing amount of data being generated and the number of datasets being created – and the valuable business information captured within all of that – are putting pressure on compute, storage, and networking vendors to find ways to reduce the time and cost involved in processing and analyzing that data.

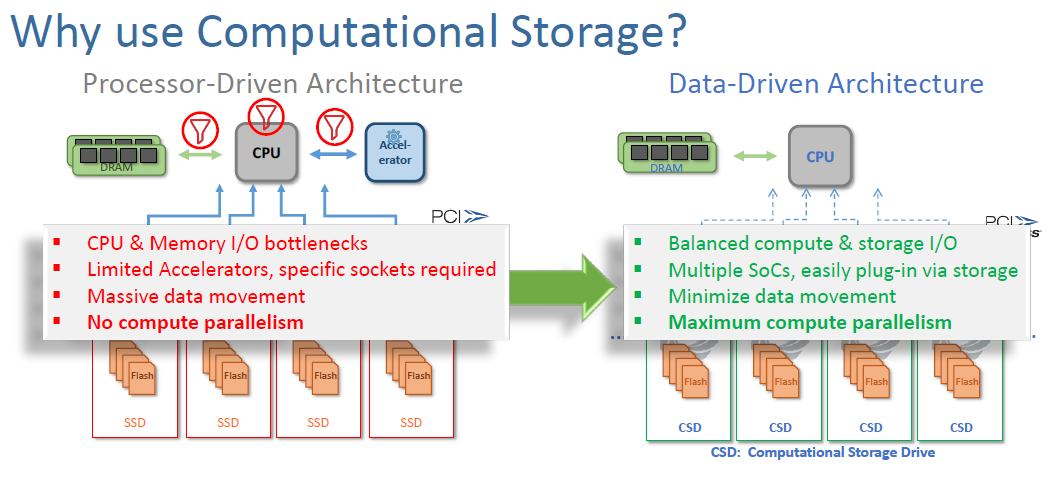

“This is an evolution that’s going on in the industry, from a traditional, very compute-centric [model], where everything is passed through the CPU and all of the computational tasks were handled there in that central CPU chipset and DRAM construct,” JB Baker, vice president of marketing at computational storage vendor ScaleFlux, tells The Next Platform. “Over time, what we’ve seen is there’s a few things happening. One, that domain-specific computing is able to handle certain tasks much more efficiently than CPUs. For example, GPUs and TPUs for AI workload acceleration, and now SmartNICs have come out to offload a bunch of the network processing that can be handled much better in domain-specific silicon than it can in a CPU.”

Computational storage “is the next wave in handling tasks that either aren’t handled well in a CPU, such as compression, that can be just extremely burdensome if you try to run a high-compression level algorithm like a gzip file format, or things that are going to need to happen across most of the mass of data that by doing those tasks down in the drive, such as filtering for a database, then we can reduce the amount of data that had to move across the PCIe bus and pollute the DRAM or even across the network because networks are another bottleneck,” Baker says. “It’s really that evolution of domain-specific compute and just the mass of data and the speed of data that is creating all these bottlenecks in your compute infrastructure.”
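Baker’s filtering example can be illustrated with a toy model. The sketch below is a minimal Python simulation, not ScaleFlux’s interface: `HypotheticalDrive`, its `read_all` and `read_filtered` methods, and the 32-byte record size are all invented for illustration. It counts how many bytes would cross the bus when the host does the filtering versus when the filter runs inside the drive.

```python
from dataclasses import dataclass
from typing import Callable, List

RECORD_SIZE = 32  # hypothetical serialized size of one record, in bytes

@dataclass
class Record:
    user_id: int
    region: str
    amount: float

class HypotheticalDrive:
    """Toy drive model; bytes_sent counts what would cross the PCIe bus to the host."""

    def __init__(self, records: List[Record]) -> None:
        self.records = records
        self.bytes_sent = 0

    def read_all(self) -> List[Record]:
        # Conventional path: every record is shipped to the host, which filters it.
        self.bytes_sent += len(self.records) * RECORD_SIZE
        return list(self.records)

    def read_filtered(self, predicate: Callable[[Record], bool]) -> List[Record]:
        # Pushdown path: the predicate is evaluated "inside" the drive,
        # so only matching records are shipped to the host.
        matches = [r for r in self.records if predicate(r)]
        self.bytes_sent += len(matches) * RECORD_SIZE
        return matches

def make_records(n: int) -> List[Record]:
    regions = ["us", "eu", "apac", "latam"]
    return [Record(i, regions[i % 4], float(i % 100)) for i in range(n)]

def is_eu(record: Record) -> bool:
    return record.region == "eu"

host_side = HypotheticalDrive(make_records(10_000))
host_result = [r for r in host_side.read_all() if is_eu(r)]

in_drive = HypotheticalDrive(make_records(10_000))
pushdown_result = in_drive.read_filtered(is_eu)

print(host_side.bytes_sent, in_drive.bytes_sent)  # 320000 80000
```

Both paths return the same rows. Under these assumptions, pushing the filter down ships only a quarter of the bytes because only one record in four matches; real savings depend on how selective the query is.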

As we have written about, computational storage – also known as “in-situ processing” – is an emerging space driven by startups. Storage vendors have addressed capacity and speed issues over the past decade, but the segment is still challenged by the cost and time involved in moving data to where it can be processed. The idea is to process the data close to where it is already stored.

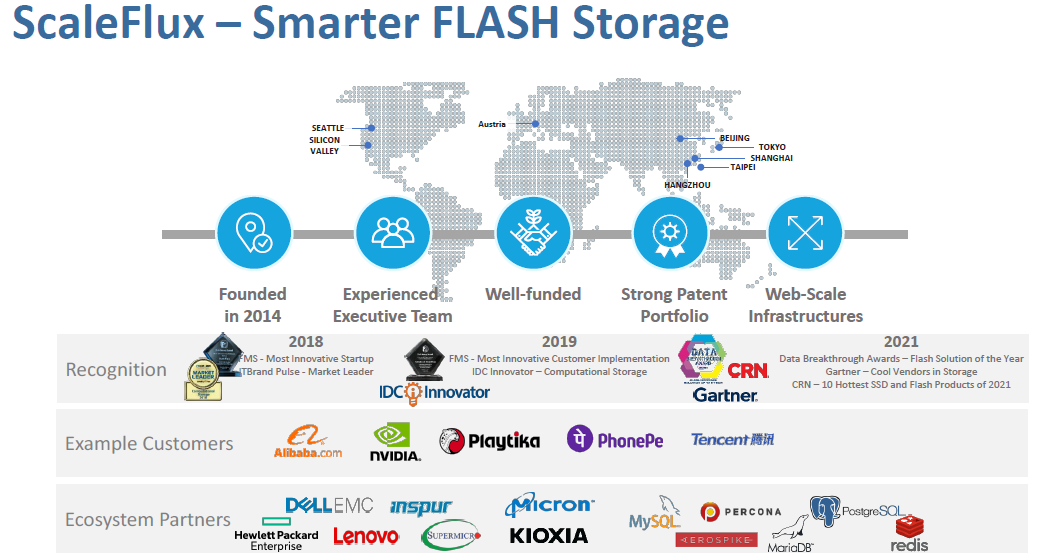

ScaleFlux is one of those startups. It launched in 2014 and has since raised $58.6 million in six rounds of funding, most recently last year, when the company brought in more than $21.5 million, according to Crunchbase. Customers include Nvidia, Tencent and Alibaba, and tech partners include Hewlett Packard Enterprise, Dell EMC, Lenovo, Inspur and Super Micro.

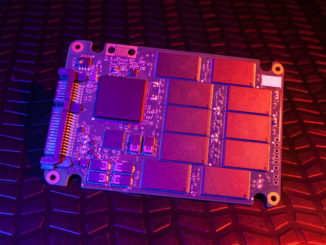

The vendor has launched two generations of products, the first in 2018 and the second two years later. ScaleFlux’s Computational Storage Drives (CSDs) combine PCIe SSDs with integrated compute engines that offload data-intensive jobs from the host, freeing up CPUs and easing the I/O bottlenecks that occur when huge amounts of data are sent from storage devices to datacenter systems or disaggregated compute nodes.

The compute engines leverage field-programmable gate arrays (FPGAs) and the drives can be installed in standard slots for 2.5-inch U.2 drives or half-height, half-length PCIe add-in cards. IT administrators also can change settings to better balance storage space, performance, latency and endurance for the application.

“Our primary focus is around drives that are better than your ordinary NVMe SSD,” Baker says. “We’ve taken the medical mantra of do no harm – make sure that we’re delivering the performance, latency, endurance, reliability, all the features that you expect from your enterprise NVMe SSD, then add innovation to give you additional improvements to your system efficiency and your drive efficiency.”

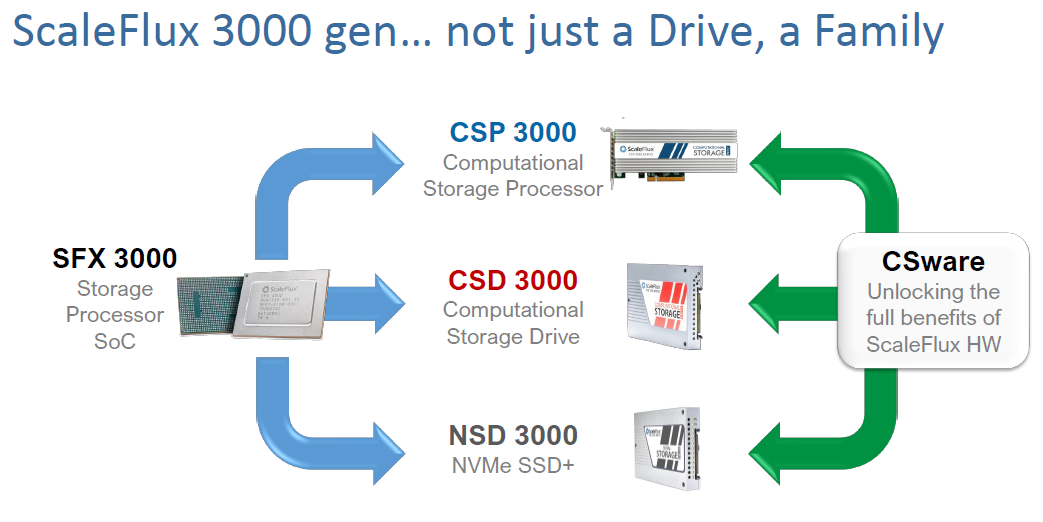

This week ScaleFlux is unveiling its third generation of products, which are in beta now and will be available in spring 2022. The latest generation includes not only upgraded computational storage products but also homegrown Arm-based silicon and a suite of discrete software aimed at making it easier for enterprises to use computational storage.

“CSware is software and application integration that is aware of the transparent compression capability in the drives and leverages that to improve performance, reduce CPU burden, and reduce the DRAM pollution as well,” Baker says.

The CSware offering includes a software RAID that takes advantage of the drives’ compression to deliver RAID 5-like storage costs with RAID 10-like performance, he says. There is also a KV store optimized for compression that Baker says is an alternative to using RocksDB as the storage engine for a database, halving the CPU and RAM consumed and doubling the database’s write performance.
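The RAID claim comes down to capacity arithmetic. The sketch below assumes a hypothetical eight-drive array of 4 TB drives and a 2:1 compression ratio; it says nothing about how CSware actually lays out data, and real compression ratios depend entirely on the workload.

```python
def raid5_usable_tb(drives: int, drive_tb: float) -> float:
    # One drive's worth of capacity goes to distributed parity.
    return (drives - 1) * drive_tb

def raid10_usable_tb(drives: int, drive_tb: float) -> float:
    # Every block is mirrored, so half the raw capacity is usable.
    return drives / 2 * drive_tb

def effective_tb(usable_tb: float, compression_ratio: float) -> float:
    # Transparent compression lets each physical TB hold more logical data.
    return usable_tb * compression_ratio

DRIVES, DRIVE_TB = 8, 4.0  # hypothetical array
ASSUMED_RATIO = 2.0        # hypothetical; real ratios depend on the data

r5 = raid5_usable_tb(DRIVES, DRIVE_TB)             # 28.0 TB
r10 = raid10_usable_tb(DRIVES, DRIVE_TB)           # 16.0 TB
r10_compressed = effective_tb(r10, ASSUMED_RATIO)  # 32.0 TB

print(f"RAID 5: {r5} TB, RAID 10: {r10} TB, "
      f"RAID 10 with 2:1 compression: {r10_compressed} TB")
```

Mirroring normally costs far more capacity than parity; in this example, 2:1 compression lifts the mirrored array’s effective capacity (32 TB) above the uncompressed RAID 5 array’s (28 TB). With less compressible data, the gap would not close.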

A third piece of the software can be used in relational databases like MySQL and MariaDB to manage the updates to the data, improve the write performance for those databases and reduce the wear and tear on the drives.

ScaleFlux will likely offer a free version of the software and contribute it to the open source community to drive adoption of computational storage.

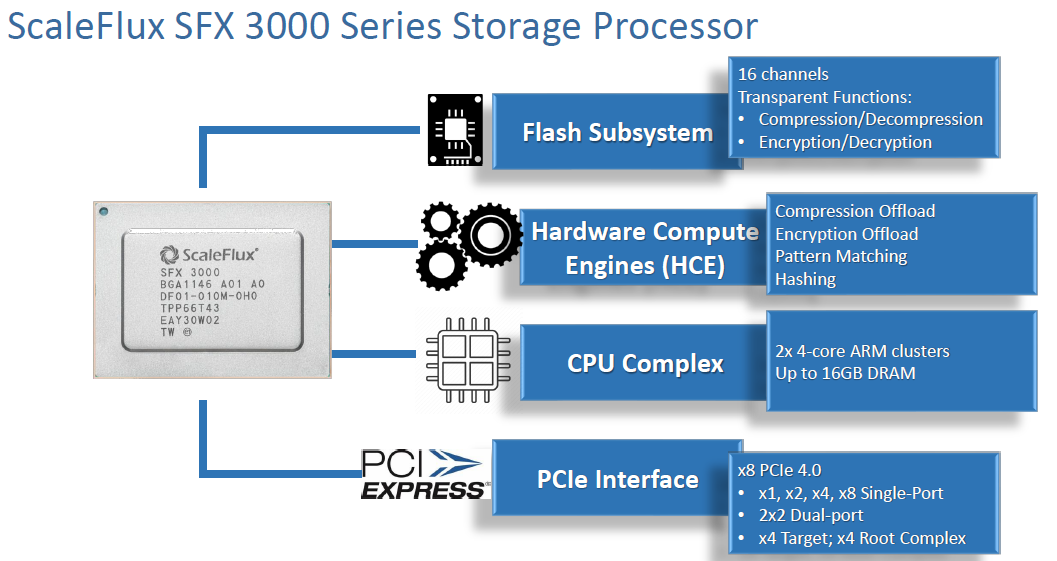

The company’s new SFX 3000 Series storage processor and the firmware it runs are the compute power behind the vendor’s latest systems. The CSD 3000 series NVMe drive is the latest generation of the company’s flagship offering. According to Baker, it lets enterprises cut data storage costs by up to a factor of three and double application performance, and it delivers nine times the flash endurance of other drives on the market.
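The cost claim rests on capacity multiplication: a drive that compresses data transparently can hold more logical data than its raw flash. The sketch below uses Python’s `zlib` as a software stand-in for the drive’s hardware compression (the actual algorithm is not described here), plus a hypothetical drive price and capacity, to show how a compression ratio translates into cost per effective terabyte.

```python
import zlib

def compression_ratio(sample: bytes, level: int = 6) -> float:
    """Logical bytes divided by physical bytes, using zlib (DEFLATE) as a stand-in."""
    return len(sample) / len(zlib.compress(sample, level))

def cost_per_effective_tb(drive_price: float, physical_tb: float, ratio: float) -> float:
    # The same price is spread over ratio x the physical capacity.
    return drive_price / (physical_tb * ratio)

# Hypothetical log-like sample data; real data may compress far less.
sample = b"".join(
    f"level=INFO path=/api/items status=200 latency_ms={i % 7}\n".encode()
    for i in range(5_000)
)

ratio = compression_ratio(sample)
PRICE, PHYSICAL_TB = 1000.0, 4.0  # hypothetical drive price and raw capacity

baseline = cost_per_effective_tb(PRICE, PHYSICAL_TB, 1.0)
compressed = cost_per_effective_tb(PRICE, PHYSICAL_TB, ratio)
print(f"ratio ~ {ratio:.1f}:1, cost/TB {baseline:.0f} -> {compressed:.0f}")
```

Repetitive text like this sample compresses very well; already-compressed or encrypted data would yield a ratio near 1:1 and little cost benefit.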

Through the four programmable Arm cores, organizations can deploy application-specific distributed compute functions in the storage device.

“In the CSD 2000 generation that’s shipping today, we have the capacity multiplier capability,” he says. “We have great performance in the storage. We do not have the encryption and we do not yet use the NVMe driver. We do have a ScaleFlux driver. In the 3000 [generation], we transition to the NVMe drivers to support a broader array of OSes very simply and extend the market for these drives much more broadly.”

The NSD 3000 series NVMe SSDs are essentially a smarter take on a standard NVMe drive, with twice the endurance and twice the random-write and mixed read/write performance of competing NVMe drives. They are aimed at enterprises that may not need all the capabilities of the CSD 3000 and are looking for a cheaper alternative.

“For those who aren’t quite sure how they would consume the extended capacity or how they would consume those cores, here’s a product where we turn those things off and it’s just a better NVMe SSD,” he says. “In the NSD, we retain the transparent compression-decompression function, but we just leverage that for improved endurance and performance and don’t give customers the keys to do the capacity multiplication.”
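Compression improves endurance because flash wears with the bytes actually written to it. The sketch below again uses `zlib` as a stand-in for the drive’s compression engine, with a hypothetical 7,000 TB flash endurance rating and a workload that is half compressible and half random. As modeling assumptions, blocks that don’t shrink are stored raw, and garbage-collection write amplification is ignored.

```python
import os
import zlib

BLOCK = 4096

def physical_bytes(block: bytes, level: int = 1) -> int:
    """Bytes that reach flash: compressed size, or raw size if compression doesn't help."""
    return min(len(zlib.compress(block, level)), len(block))

def host_tb_writable(rated_flash_tb: float, host_bytes: int, flash_bytes: int) -> float:
    # Same flash wear budget, fewer flash bytes per host byte -> more host writes.
    # Ignores garbage-collection write amplification.
    return rated_flash_tb * host_bytes / flash_bytes

# Hypothetical workload: 100 compressible blocks plus 100 random (incompressible) ones.
compressible = [(b"customer_record;" * 256)[:BLOCK] for _ in range(100)]
incompressible = [os.urandom(BLOCK) for _ in range(100)]
workload = compressible + incompressible

host_bytes = len(workload) * BLOCK
flash_bytes = sum(physical_bytes(b) for b in workload)
RATED_FLASH_TB = 7000.0  # hypothetical endurance rating, in TB written to flash

print(f"flash bytes / host bytes: {flash_bytes / host_bytes:.2f}")
print(f"host TB writable: {host_tb_writable(RATED_FLASH_TB, host_bytes, flash_bytes):.0f}")
```

Only the compressible half shrinks here, so the gain is smaller than with fully compressible data; random or encrypted data gets no endurance benefit in this model.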

The CSP 3000 delivers a computational storage processor without the built-in flash and offers organizations expanded access to the programmable cores so they can add additional functions specific to their deployments. This offering may be used when enterprises “have got hard drives and they’re not looking to wholesale swap out the hard drives but they want to do some compute offload to go with that array or for some other reason,” Baker says. “If they need to separate the compute from the storage, then the CSP is a viable solution.”

Developing the Arm-based ASIC was key to expanding the capabilities of ScaleFlux’s portfolio, something that couldn’t be done with an FPGA at acceptable cost and power, he says. The system-on-a-chip (SoC) integrates four functional areas. There is a flash subsystem with a 16-channel controller and built-in compression/decompression and encryption. Also integrated are hardware compute engines for fixed-algorithm tasks and a CPU complex for use case-specific programs. In the CSD, users have access to four of the eight cores; the others are used for flash management and exception handling. In the CSP, however, they have access to all eight cores because there’s no flash involved.

There is also a PCIe 4.0 interface with eight lanes, though in most form factors only four lanes will be in use.

“It’s all integrated into a single chip,” he says. “We don’t need a separate processor to do the compute functions and a discrete flash controller. It’s all integrated to save board space, improve performance, save power [and] allow higher capacity of the drives.”

This data-driven architecture offers a range of advantages over traditional processor-focused compute infrastructure, including the ability to move more compute functions into the drives, Baker says. ScaleFlux’s goal is to give enterprises a way to shift even more of those compute tasks onto its hardware.
