Here are two things you don’t see every day in the realm of scientific and technical high performance computing. The first thing is seeing an organization upgrade an existing system after it has already had its successor plopped down in the datacenter next to it. And the second thing is a move to a utility model of capacity consumption.

We are not sure if we will see more of the former in the HPC sector, but we do expect to see a lot more of the latter. Both are happening right now with an upgrade to the legacy HPC4 system, to what we are calling the HPC4+ system, at Italian oil and gas giant Eni, which only got its HPC5 successor to the HPC4 machine up and running in February 2020 to do a big chunk of its seismic processing and reservoir modeling.

Dell was able to steal away the Eni account from Hewlett Packard Enterprise with the HPC5 system, which had 1,820 nodes based on a pair of 24-core “Cascade Lake” Xeon SP-6252 Gold processors and four Nvidia V100 GPU accelerators that hook to the CPU over the PCI-Express bus and to each other using the NVLink interconnect. These compute engines are housed in the hyperscale-inspired PowerEdge C4140 servers, and the nodes are hooked together to deliver 52 petaflops of aggregate double-precision floating point oomph using a 200 Gb/sec InfiniBand network from Nvidia.

The original HPC4 machine, which was fired up in 2018 and which is still handling production loads at Eni, had 1,600 HPE ProLiant DL380 two-socket Xeon SP servers, which are workhorses of enterprise computing much as the Dell PowerEdge R540 is. The nodes in the HPC4 system used a pair of the near-top-bin 24-core “Skylake” Xeon SP-8160 Platinum processors, which were combined with a pair of “Pascal” P100 GPU accelerators from Nvidia; the nodes were connected with 100 Gb/sec InfiniBand from Mellanox (which was not yet part of Nvidia). This machine weighed in at 18.6 petaflops of aggregate double-precision floating point performance. Still formidable for a commercial entity to put on the floor.

The prior three generations of HPC systems at Eni – namely the HPC1, HPC2, and HPC3 machines – were built by IBM and then by Lenovo, which took over IBM’s X86 server business in 2014.

Although there are not a lot of details being put out – this is not unusual in the oil and gas industry, which is super-secretive about technology – what we find interesting is that the HPC4+ system – we are calling it that to distinguish it from the original HPC4 system – is an upgrade of an existing system, that it is shifting to AMD processors, and that Eni is shifting from buying its own machines to consuming them under HPE’s GreenLake utility pricing model, which we have discussed in detail here specifically for the HPC industry. This is not additional capacity being added to HPC4, but a swap out of the HPC4 system for a new HPC4+ system. That is our language, calling it HPC4+, not Eni’s language, but we have to do something to differentiate the old from the new. And it is important to note, while we are thinking about it, that this is not the HPC6 system, whatever that system might be at some point in the future, perhaps in 2022 if history is any guide, but maybe in 2023 if Eni decides to change architectures for its CPUs or GPUs as it does from time to time.

So this HPC4+ machine is a peculiar kind of upgrade. The 1,600 nodes in the original HPC4 system are going to be removed by HPE and recycled (presumably through its server remanufacturing operation) and a new set of nodes is going to replace them, according to sources at HPE.

To be precise, Eni is going to be renting 1,522 ProLiant DL385 Gen10 Plus servers, which again are perfectly stock server workhorses aimed at the enterprise datacenter and which come with either one or two sockets. The HPC4+ system will be using “Rome” Epyc 7002 processors, and we thought there was a chance that it might have a single CPU chip with a high core count and a quad of GPU accelerators in each node. This seems to be the new ratio of sockets, but given that there are eight chiplets on a “Rome” or “Milan” CPU socket and four GPUs in the system, the ratio is more like 2:1, not 1:4, if you are looking at unique compute elements. That said, the original HPC4 machine had a pair of Xeon processors with a pair of Nvidia P100 accelerators in each node, and we strongly suspect that Eni could stick to a ratio of one GPU socket per one CPU socket with the HPC4+ system. Given how Eni must have tuned its software for these ratios, we think this is slightly more likely.

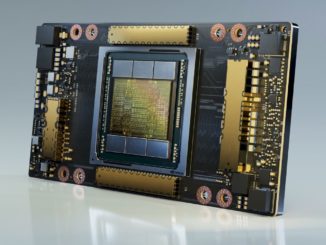

HPE said that the HPC4+ machine will employ a mix of GPU accelerators to provide most of its floating point performance (and integer performance for machine learning inference where needed). Specifically, the HPC4+ machine will have a mix of Nvidia “Volta” V100 and “Ampere” A100 GPU accelerators as well as AMD “Arcturus” Instinct MI100 GPU accelerators. Up until now, Eni’s prior four machines had GPU acceleration of some sort, all of it was done with Nvidia GPUs, and all nodes in each generation of system had the same accelerator.

To be super-precise, Eni told us that out of the 1,522 nodes, there were 1,375 nodes equipped with two Epyc 7402 CPUs, which have 24 cores running at 2.8 GHz, plus two Nvidia V100 accelerators; 125 nodes with a pair of Epyc 7402 CPUs and two Nvidia A100s; and 22 nodes with two Epyc 7402 CPUs and two AMD MI100s. Additionally, there are 20 login nodes without GPUs.
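As a quick sanity check on that breakdown, here is a minimal Python sketch that tallies the three GPU partitions. The node counts, the two-CPU and two-GPU per node configuration, and the 24 cores per Epyc 7402 come from the figures above; the dictionary layout and variable names are our own, and the 20 login nodes are left out because their CPU configuration was not given.

```python
# Compute-node partitions as reported by Eni; every node has two GPUs.
# The login nodes are excluded: their CPU configuration is not stated.
partitions = {
    "V100": {"nodes": 1375, "gpus_per_node": 2},
    "A100": {"nodes": 125, "gpus_per_node": 2},
    "MI100": {"nodes": 22, "gpus_per_node": 2},
}

CPUS_PER_NODE = 2   # a pair of Epyc 7402 processors per node
CORES_PER_CPU = 24  # cores per Epyc 7402

# Sum the nodes across partitions and count GPUs of each type.
total_nodes = sum(p["nodes"] for p in partitions.values())
gpus_by_type = {
    name: p["nodes"] * p["gpus_per_node"] for name, p in partitions.items()
}
total_gpus = sum(gpus_by_type.values())
total_cpu_cores = total_nodes * CPUS_PER_NODE * CORES_PER_CPU

print(f"compute nodes: {total_nodes}")
print(f"GPUs by type:  {gpus_by_type}")
print(f"total GPUs:    {total_gpus}")
print(f"CPU cores:     {total_cpu_cores}")
```

The three partitions do add up to the 1,522 nodes cited, and the V100 partition accounts for roughly nine out of ten GPUs in the machine.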

We presumed that all of the nodes would be configured with 200 Gb/sec HDR InfiniBand from Nvidia, and said that there was an outside chance it might employ the 200 Gb/sec Slingshot interconnect from HPE (by virtue of its $1.3 billion acquisition of Cray in May 2019) or use the 400 Gb/sec Quantum-2 NDR InfiniBand interconnect from Nvidia, which ships later this year. And it is in fact using 200 Gb/sec HDR InfiniBand.

Perhaps the most interesting thing about the HPC4+ system is that it has that utility pricing approach that makes it feel like a cloud, even though the machine will be going into Eni’s famous Green Data Center, shown in the feature image at the top of this story. What we don’t know is if Eni is going all the way to what we are calling a “cloud outpost,” which is when a vendor not only gives you utility pricing on its gear and drops it into your datacenter (or a co-location facility of your choosing) but also provides managed services to operate this infrastructure. That is a true cloud experience but with the kind of control over the infrastructure that organizations are used to with on-premises gear.

The other thing that HPE did confirm about the HPC4+ machine is that it was getting a shiny new 10 PB ClusterStor E1000 parallel file system, which HPE says is twice the capacity of the one on the original HPC4 system. The HPC5 machine had a 15 PB parallel file system. It is not known which one Eni uses, but we figure that it has to be either the open source Lustre or IBM’s Spectrum Scale (formerly known as General Parallel File System, or GPFS).

Like many HPC centers in the world, Eni keeps two machines in production at the same time, not only to spread out its capacity but to spread out its risk. There is a balance between getting your investments back out of a machine and getting onto newer iron to drive scale as well as compute, storage, networking, and power efficiency. Even hyperscalers have to get three years – and now sometimes four years – out of their server and storage investments.

When we went to press, we did not know the aggregate petaflops that this new HPC4+ machine had, but said that if performance scales as we expect and the ratios of CPUs to GPUs are as we expect, then it should come in somewhere between 35 petaflops and 40 petaflops of peak aggregate performance. Which would have Eni pushing up against 100 petaflops of aggregate performance, making it the largest supplier of industrial HPC simulation and modeling capacity in the world. Well, that we know about, anyway. Any one of the other oil and gas majors could be larger.

As it turns out, the resulting HPC4+ system has about 22 petaflops of performance, or about 20 percent more than the HPC4 machine it replaces, which does not mean much more aggregate throughput at all in the Eni datacenter. This is clearly about saving money on energy, then, and doing a little more work in slightly fewer nodes as well as testing out the AMD hardware and software stack on some of the nodes. We strongly suspect that Eni got a heck of a deal on these compute engines because they are one generation back excepting the Nvidia A100s. Which have also been in the field for quite a bit of time at this point. Or, because of chip shortages, this was the only machine that Eni could build now as a stopgap between HPC5 and HPC6.
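To put rough numbers on that comparison, here is a small Python sketch using only the rounded figures quoted in this story: 18.6 petaflops across 1,600 nodes for HPC4, about 22 petaflops across 1,522 nodes for HPC4+, and 52 petaflops for HPC5. The variable names are ours, and because the 22 petaflops figure is itself approximate, the results are ballpark values rather than precise ratings.

```python
# Peak double-precision figures in petaflops, as quoted in the article.
HPC4_PF = 18.6
HPC4_PLUS_PF = 22.0  # "about 22 petaflops", so treat as approximate
HPC5_PF = 52.0

HPC4_NODES = 1600
HPC4_PLUS_NODES = 1522

# Percentage gain of HPC4+ over the machine it replaces.
uplift_pct = (HPC4_PLUS_PF / HPC4_PF - 1) * 100

# Average peak per node, in teraflops (1 petaflops = 1,000 teraflops).
hpc4_tf_per_node = HPC4_PF * 1000 / HPC4_NODES
hpc4_plus_tf_per_node = HPC4_PLUS_PF * 1000 / HPC4_PLUS_NODES

# Capacity on the floor with HPC5 and HPC4+ both in production.
combined_pf = HPC5_PF + HPC4_PLUS_PF

print(f"uplift over HPC4:   {uplift_pct:.1f} percent")
print(f"HPC4 per node:      {hpc4_tf_per_node:.2f} teraflops")
print(f"HPC4+ per node:     {hpc4_plus_tf_per_node:.2f} teraflops")
print(f"HPC5 plus HPC4+:    {combined_pf:.0f} petaflops")
```

With these inputs the uplift works out to a little over 18 percent, which is consistent with the "about 20 percent" above once rounding is taken into account, and the two production machines together land at roughly 74 petaflops, well short of the 87 to 92 petaflops implied by our earlier 35 to 40 petaflops guess for HPC4+.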

We suspect that all of these factors came into play.
