These days, whenever a research firm or industry leader outlines its vision for the future of enterprise technology, edge computing is almost always one of the central trends. Market data shows that this trend is real: According to one recent report, the worldwide edge computing market is forecast to reach $43.4 billion by 2027, with a compound annual growth rate of 37.4 percent from 2020 through 2027, inclusive.

At a high level, edge computing is less about specific products than about a new model for enabling a variety of emerging use cases that will shape the world in coming years, especially advanced IoT use cases. Consider, for example, targeted advertising: As cars zoom down a busy city street, a nearby billboard employs video analysis to identify the make, model and year of each vehicle and then displays a unique ad that’s tailored for each driver. Imagine that on that same street, the local government is using sensors to measure traffic congestion and then using that information in real time to change how certain lanes are designated in order to improve traffic flow.

Edge computing is the only way to deliver this future. These sorts of use cases require organizations to process and store increasing volumes of data in real time – right where the data is being generated. Due to space limitations, edge infrastructure must come in smaller form factors than the infrastructure for public clouds or on-premises datacenters. Within these small form factors, edge platforms must deliver the needed processing power while remaining energy efficient.

To achieve this, NVM-Express technology will become a critical component of edge infrastructure. NVM-Express supports several key requirements of edge infrastructure: it fits within small form factors, delivers low-latency computing and provides vital power efficiency.

Reducing Data Movement, Latency, And Power Consumption

Minimizing data movement and latency is a fundamental part of the edge model. Data has gravity – it takes resources to move it back and forth between storage and computing components. The more hops data makes and the longer the distance it travels, the higher the latency and the greater the cost. Edge computing works because it doesn’t require moving data from disparate endpoints all the way to a centralized cloud for processing and back to the endpoints for decisions to be made.
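To make the relationship between hops, distance and latency concrete, here is a minimal back-of-the-envelope sketch. The distances, hop counts and per-hop delays are illustrative assumptions rather than measurements, and the `Path` and `round_trip_ms` names are hypothetical; the only physical constant used is the approximate signal speed in optical fiber, roughly two-thirds the speed of light.

```python
from dataclasses import dataclass

# Approximate signal speed in optical fiber (about two-thirds of c),
# expressed in kilometers per millisecond.
FIBER_KM_PER_MS = 200.0


@dataclass
class Path:
    """A one-way network path. All values are illustrative assumptions."""
    distance_km: float  # physical distance the data travels
    hops: int           # number of intermediate network devices
    per_hop_ms: float   # assumed forwarding/queuing delay at each hop


def round_trip_ms(path: Path, processing_ms: float) -> float:
    """Estimate the time to send data, process it, and return a decision."""
    one_way = path.distance_km / FIBER_KM_PER_MS + path.hops * path.per_hop_ms
    return 2 * one_way + processing_ms


# Hypothetical placements of the compute that makes the decision.
cloud = Path(distance_km=2000, hops=12, per_hop_ms=0.5)
edge = Path(distance_km=1, hops=1, per_hop_ms=0.5)

cloud_ms = round_trip_ms(cloud, processing_ms=5.0)
edge_ms = round_trip_ms(edge, processing_ms=5.0)

print(f"cloud round trip: {cloud_ms:.2f} ms")
print(f"edge round trip:  {edge_ms:.2f} ms")
```

Under these assumed numbers the processing time is identical in both cases; the entire difference comes from distance and hop count, which is the cost the edge model is designed to avoid.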

However, data still must move between the compute and storage components within edge infrastructure itself. NVM-Express technology addresses that issue by bringing storage and processing much closer together, reducing data movement between the two.

Edge use cases, such as the advanced IoT use cases mentioned above, require low latency to quickly analyze data and function properly. These apps have to make decisions quickly. For example, in the case of targeted advertising, it wouldn’t do much good to display that ad when the car’s already driven past. By locating compute and storage resources together, NVM-Express reduces data movement and enables low-latency processing.

Power efficiency is another key factor for edge computing. If edge infrastructure supports minimal data movement and low latency, edge apps will work fine, but if that underlying infrastructure eats up too much energy, these use cases will be too expensive for large-scale adoption across the world. Luckily, NVM-Express technology also maximizes power efficiency.

In a typical cloud or datacenter environment, NVM-Express components will only moderately lower power usage in the aggregate compared to traditional storage drives. But at the edge, the difference in power usage between NVM-Express and other storage technology is more important because edge devices are deployed at massive scale for a single application, and each device must drastically reduce power consumption. For example, in the above traffic measurement use case, there may be hundreds of edge devices working on continuous data streams.

Object Storage And NVM-Express: A Perfect Match For The Edge

Object storage is a highly distributed, peer-to-peer architecture that is simple and agile enough to be deployed at disparate edge locations. This makes it an ideal storage architecture to pair with NVM-Express technology for supporting edge deployments. Object storage allows organizations to bring computing resources right to where data is, a necessary component of edge computing. It is also extremely scalable. If a given edge deployment begins to experience a sharp rise in data volumes, object storage can be easily scaled up to accommodate that growing data. Finally, because the object storage architecture connects nodes together globally in a peer-to-peer network, it allows a given edge processor to borrow compute resources from a peer node if needed.

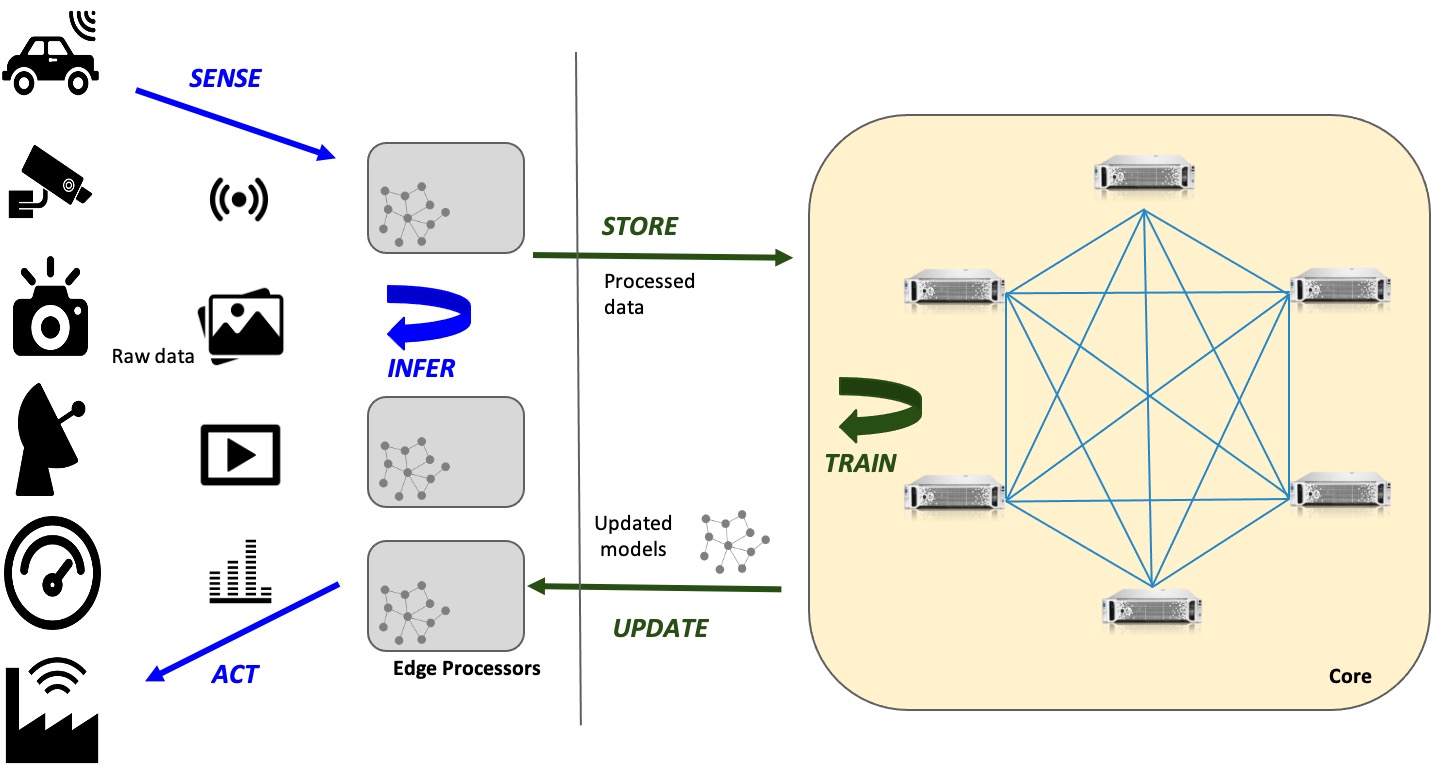

Furthermore, because of its highly distributed, peer-to-peer architecture, object storage supports a hybrid edge and hub model, the most efficient approach to edge computing. This model consists of infrastructure that forms an inner loop and an outer loop. The inner loop is located at the edge – it processes data extremely quickly and stores relatively small volumes of data. The outer loop is located at a central hub – it processes and stores larger volumes of data.

The inner loop (at the edge) and outer loop (at a central hub) send data back and forth. The inner loop only analyzes critical data and performs machine learning on it to make urgent decisions in real time. For example, the processors in a self-driving car can detect an obstruction in the road and then immediately command the vehicle to either stop or change lanes. Meanwhile, the inner loop also sends data and processed metadata to the outer loop at the central hub for analysis and long-term storage. The data that arrives at the hub can be used to train machine learning models. In a feedback loop, those updated ML models can then be sent back to the inner loop to make better real-time decisions at the edge.
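The feedback loop described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a description of any particular product: the "model" is a single decision threshold, "training" is a midpoint calculation, and the `Model`, `Hub` and `EdgeNode` names are hypothetical. It also assumes each reading carries a ground-truth label, which in practice would be attached later at the hub.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class Model:
    """Stand-in for an ML model: a single decision threshold."""
    version: int
    threshold: float


@dataclass
class Hub:
    """Outer loop: archives everything it receives and retrains on it."""
    archive: list = field(default_factory=list)
    model: Model = field(default_factory=lambda: Model(version=1, threshold=0.5))

    def ingest(self, metadata: dict) -> None:
        self.archive.append(metadata)  # long-term storage

    def retrain(self) -> Model:
        # Toy training: put the threshold midway between the average
        # score of true obstacles and the average score of non-obstacles.
        pos = [m["score"] for m in self.archive if m["label"]]
        neg = [m["score"] for m in self.archive if not m["label"]]
        self.model = Model(self.model.version + 1, (mean(pos) + mean(neg)) / 2)
        return self.model


class EdgeNode:
    """Inner loop: decides locally, then forwards metadata to the hub."""

    def __init__(self, model: Model, hub: Hub) -> None:
        self.model = model
        self.hub = hub

    def handle(self, reading: dict) -> str:
        # The urgent decision is made at the edge with no round trip.
        action = "brake" if reading["score"] >= self.model.threshold else "continue"
        self.hub.ingest({**reading, "action": action})
        return action

    def update_model(self, model: Model) -> None:
        if model.version > self.model.version:
            self.model = model


hub = Hub()
edge = EdgeNode(hub.model, hub)

# Hypothetical sensor readings: an obstacle-likelihood score plus a label.
readings = [
    {"score": 0.90, "label": True},
    {"score": 0.45, "label": True},   # a real obstacle the first model misses
    {"score": 0.10, "label": False},
    {"score": 0.20, "label": False},
]
actions = [edge.handle(r) for r in readings]

edge.update_model(hub.retrain())      # the hub sends the updated model back
retried = edge.handle({"score": 0.45, "label": True})
```

With the initial threshold of 0.5, the reading scored 0.45 is allowed to continue; after the hub retrains on the archived data and pushes the new model to the edge, the same score triggers a brake. The decision path never leaves the edge node, while the hub handles the heavier, slower work of storage and retraining.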

NVM-Express technology is increasingly being incorporated into object storage platforms. Both technologies make it easier to bring storage and compute infrastructure to data.

Conclusion

Edge computing is critical to supporting next-generation use cases such as advanced IoT, autonomous vehicles, smart manufacturing and 5G, among others. To make edge computing work, organizations need infrastructure in small form factors that offers low-latency processing and saves on power. NVM-Express achieves all of that.

Edge computing also requires the delivery of storage and compute resources directly to where data is being generated at disparate endpoints. Object storage, due to its highly distributed nature, enables that. Ultimately, object storage that leverages NVM-Express technology provides all the ingredients needed for widespread edge adoption.

Gary Ogasawara is chief technology officer at Cloudian. We interviewed Ogasawara back in July on Next Platform TV about the state of object storage in the enterprise.
