There are two Amperes in datacenter compute right now, and they are both gunning for Xeons. One is the “Ampere” A100 GPU accelerator from Nvidia, and the other is Ampere Computing, a provider of Arm-based server processors that is headed up by former Intel president Renee James and backed with private equity from The Carlyle Group.

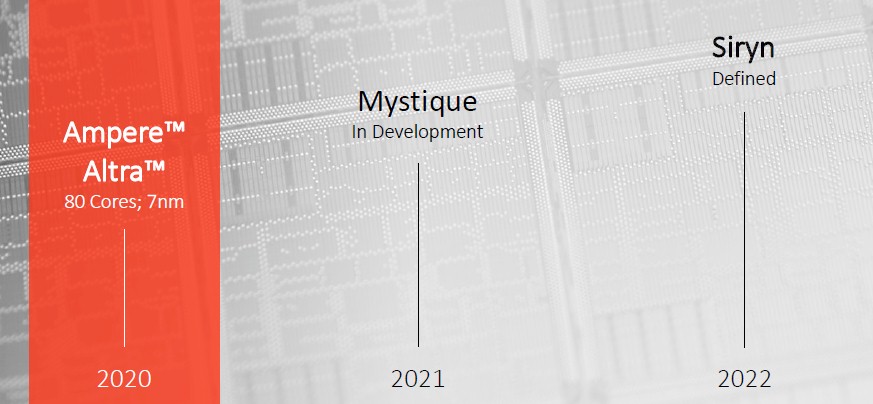

Nvidia already made a big splash with its A100, and now Ampere Computing is making some waves of its own, with the second generation 80-core “Quicksilver” Altra Arm server chips out the door and the third generation “Mystique” Altra Max follow-ons being partially unveiled this week. Both of these chips are etched using the 7 nanometer manufacturing processes from foundry partner Taiwan Semiconductor Manufacturing Corp. The first generation eMAG processors from Ampere Computing, really a slightly revamped variant of the “Skylark” X-Gene 3 processors from Applied Micro (the true pioneer in Arm server chip designs, we think), were manufactured with advanced 16 nanometer processes from TSMC and were really just a testbed chip for Ampere Computing to prove it was serious and to get a little experience with a new architecture in some cases.

We think there is a very good chance that these two Altra chips will get traction at the hyperscalers and big cloud builders in the coming years – if the battle between AMD and Intel doesn’t take all of the oxygen out of the datacenter.

As Amazon is demonstrating with its own Graviton2 chips, there are compelling price/performance reasons for adopting Arm in the cloud and among the hyperscalers, on the order of 40 percent better than AWS instances based on Intel’s Skylake Xeon SP processors as a case in point. And the Quicksilver and Mystique chips blow away the 16-core Graviton1 and 64-core Graviton2 processors, which Amazon Web Services created as SmartNIC motors initially. If AWS wants to compete with Ampere Computing – and indeed Marvell with its 96-core “Triton” ThunderX3 Arm server chip – it is going to have to pick up the pace.

It looks like we have a core arm’s race here, people, particularly with Ampere Computing telling The Next Platform this week that it plans to push the Mystique Altra Max processors up to 128 cores. And these cores will have microarchitectural tweaks that make them distinct from the “Ares” Neoverse N1 cores that Ampere Computing used in the Quicksilver chips, so the performance boost will be even bigger than just the delta in core count minus the difference in clock speed.

The Mystique Altra Max chips will be sampling in the fall, according to Jeff Wittich, senior vice president of products at Ampere Computing, and it will be a monolithic chip design as we expected and as the company had been hinting. James and Wittich are in no hurry to move to a chiplet design, because this adds complexity and costs above and beyond pushing the reticle limits at TSMC, which is its own kind of problem.

Wittich confirmed, as we have been suspecting, that Ampere Computing will move to chiplet designs with the future fourth generation “Siryn” Altra processors expected in 2022. In fact, the test chips for elements of the Siryn design are etched using 5 nanometer processes from TSMC, and they are just coming back from the fabs now for review.

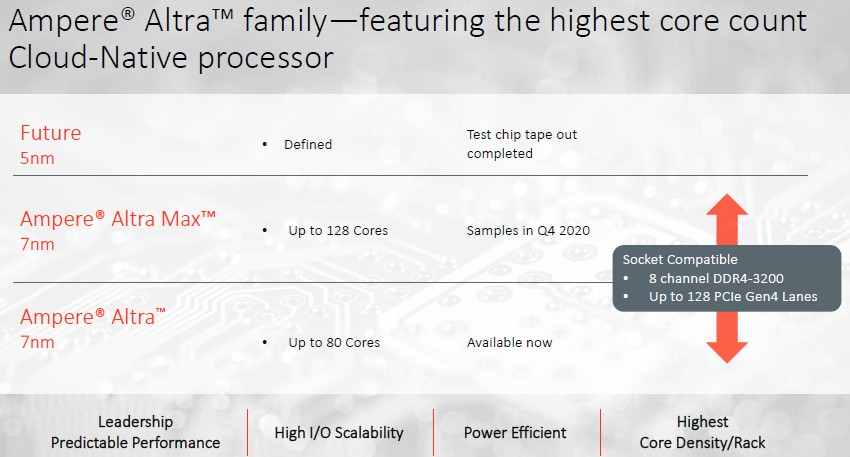

The important thing for Ampere Computing’s customers at this point is that the Quicksilver and Mystique chips will use the same server sockets and platforms, so supporting Altra today means a platform will be able to support Altra Max when it is available next year.

It seems pretty clear that the cloud vendors want an annual cadence for performance and price/performance increases in their CPUs, but they don’t want to have to change and therefore recertify the underlying platforms every year. Every other year is preferable, and given the pace of change for memory, bus, and interconnect technologies, for the next several years – say until 2026 or so when we think things will get very wonky as process shrinks all but stop for CMOS technology – a two-year cadence for platform changes is the sweet spot for hyperscalers and cloud builders.

That shared server platform supports eight channels of DDR4 memory running at 3.2 GHz and 128 lanes of PCI-Express 4.0, which is toe-to-toe with the AMD “Rome” Epyc processors and on par with IBM’s Power9 processor, sort of. The Power9 chip has only 48 lanes of PCI-Express 4.0, for 192 GB/sec of aggregate bandwidth, but there are another 24 lanes of 25 Gb/sec “Bluelink” ports, which implement remote NUMA across quad-socket blocks or NVLink ports out to GPU accelerators as well as the OpenCAPI ports for other kinds of accelerators. There is another 256 GB/sec of bandwidth for short range NUMA links for systems with four processors. Add that all up, and you are talking about an aggregate of 748 GB/sec of I/O bandwidth coming out of the Power9 socket, compared to 512 GB/sec for the Ampere Computing Altra and the AMD Rome Epyc; the Marvell ThunderX3 will have 64 lanes running at PCI-Express 4.0 speeds, for an aggregate of 256 GB/sec of I/O bandwidth. By the way, IBM is going to crank the hell out of the I/O with Power10, as we have pointed out, with an expected Power9 kicker that prototypes some of the new SerDes that can link to I/O or memory.
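For those who want to check our math, here is a minimal sketch of the I/O bandwidth arithmetic in Python. It assumes 4 GB/sec of aggregate bandwidth per PCI-Express 4.0 lane, which is simply what the 192 GB/sec over 48 lanes figure implies, and it backs the contribution of the 25 Gb/sec ports out of the 748 GB/sec total rather than deriving it from first principles. The constant and function names are ours, made up for illustration.

```python
# Aggregate GB/sec per PCI-Express 4.0 lane, as implied by the figures
# quoted above (192 GB/sec over 48 lanes). An assumption for this sketch,
# not a number taken from the PCI-Express specification.
PCIE4_GB_PER_LANE = 4


def pcie4_bandwidth(lanes: int) -> int:
    """Aggregate PCI-Express 4.0 bandwidth in GB/sec for a lane count."""
    return lanes * PCIE4_GB_PER_LANE


altra_or_rome = pcie4_bandwidth(128)  # Altra and Rome Epyc: 128 lanes
thunderx3 = pcie4_bandwidth(64)       # ThunderX3: 64 lanes
power9_pcie = pcie4_bandwidth(48)     # Power9: 48 lanes

POWER9_TOTAL = 748  # quoted aggregate for the Power9 socket, GB/sec
POWER9_NUMA = 256   # quoted short range NUMA links, GB/sec

# Whatever remains of the total is attributed to the 25 Gb/sec ports.
power9_25g_ports = POWER9_TOTAL - power9_pcie - POWER9_NUMA

print(f"Altra / Rome Epyc: {altra_or_rome} GB/sec")
print(f"ThunderX3:         {thunderx3} GB/sec")
print(f"Power9 PCI-Express: {power9_pcie} GB/sec")
print(f"Power9 25 Gb/sec ports (implied): {power9_25g_ports} GB/sec")
```

The PCI-Express figures reproduce the 512 GB/sec, 256 GB/sec, and 192 GB/sec numbers cited above, and the remainder is what the 25 Gb/sec ports would have to contribute for the Power9 total to hold.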

What is clear from the chart above is that Ampere Computing is not making any commitment to use the same socket and platform with the future Siryn Altra chip. That doesn’t mean that it won’t, but we wouldn’t count on it, with DDR5 memory, PCI-Express 5.0 peripheral buses, and probably future CCIX, CXL, and Gen-Z ports also being supported. (Microsoft is hot on Arm processors and loves Gen-Z, so there is probably a push to bridge from PCI-Express 5.0 to Gen-Z at least here, and probably elsewhere among the hyperscaler and cloud builder elite.)

The Quicksilver chip implements those 80 cores in an 8 x 10 grid and has them interconnected with a mesh network similar to that used by Intel’s Xeon SP and Xeon Phi processors (and Tilera and a bunch of other chip designers from several years ago). The cores run at 3 GHz (a little less than the 3.3 GHz maximum speed expected on the Quicksilver chip), so a two-socket machine yields 160 cores with no simultaneous multithreading. Ampere Computing believes in having the strongest core it can deliver and not using SMT, which creates performance, security, and scaling issues that negate some of the benefits of doubling up the virtual cores by adding threads to each core.

With the Mystique Altra Max chips, the cores are laid out in a rectangular grid with the mesh interconnect extended, and the same eight memory controllers and 128 lanes of PCI-Express 4.0 are added, and the peak clock speed is dropped down to 3 GHz flat.

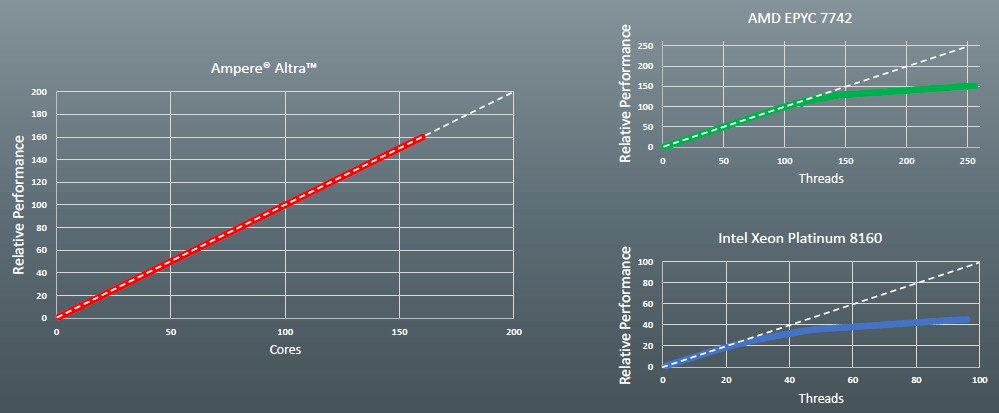

A top bin “Skylake” Xeon SP-8180 processor has 56 cores and 112 threads across a two-socket machine running at 2.5 GHz and is a little bit shy on the memory bandwidth, with only six memory channels compared to eight for the Altra. (A “Cascade Lake” Xeon SP is a smidgen faster but essentially the same processor.) A top bin AMD Rome Epyc 7742 processor has 128 cores across two sockets with 256 threads running at 2.25 GHz and eight memory controllers running at the same 3.2 GHz speeds as the Altra has.
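The core and thread tallies for these two-socket machines are easy to reproduce. The sketch below is ours, with a hypothetical `Platform` class; it assumes two threads per core on the X86 parts and one on the Altra, and takes the per-socket core counts as half of the two-socket totals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    """A server platform described by its per-socket core count."""
    name: str
    cores_per_socket: int
    threads_per_core: int  # 1 = no SMT, 2 = two-way SMT
    sockets: int = 2

    @property
    def cores(self) -> int:
        return self.cores_per_socket * self.sockets

    @property
    def threads(self) -> int:
        return self.cores * self.threads_per_core


platforms = [
    Platform("Altra (Quicksilver)", 80, 1),
    Platform("Xeon SP-8180", 28, 2),
    Platform("Epyc 7742", 64, 2),
]

for p in platforms:
    print(f"{p.name}: {p.cores} cores, {p.threads} threads")
```

On the Altra, the thread count equals the core count, which is the whole point of skipping SMT: every schedulable unit is a full core.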

Obviously, all cores and all on-chip networks are not created equally, so you can’t just count cores and memory bandwidth and reckon performance. But Wittich did want to get people used to thinking about the differences between these three architectures, and whipped out a chart that showed the scalability of the respective server platforms mentioned above running the Stress-ng load generator. The chart below plots the relative performance as the number of cores (for the Altra) or threads (for the Epyc or Xeon SP) is incremented by one to the full extent in each system. Take a gander:

For some reason, Ampere Computing’s techies used a 24-core Skylake Xeon SP-8160 system instead of the top bin part with 28 cores, which would go to 112 threads instead of the 96 threads of the part actually tested, but it is not hard to figure out where the relative scaling would go from this chart if you extrapolate a little. But the point remains that scaling across the cores is just as important as having a strong core. And having a set of strong cores that peter out for various reasons is like burning money.

“With our design and with our architectural approach, what we were trying to achieve with Altra was very, very good and efficient scaling,” explains Wittich. “We were trying to create very predictable performance as you scale out across different workloads and to solve some of the noisy neighbor problems on machines that are shared across workloads. Stress-ng is a simple test for us to show in that it doesn’t have a lot of other bottlenecks that will adversely impact the CPUs at different times. Obviously, we have more than enough I/O, network, and memory bandwidth to feed these cores. And we are within two percent of ideal scaling at 160 cores. With AMD, you have really good scaling as long as you are getting real cores, but once you have to start adding sibling threads at halfway through here, you can see that a thread does not give a full core of performance. With the Intel Xeon SPs, it’s all over sooner because there are only six memory channels and because a lot of the opportunistic performance in the design is not really predictable, when you have a low number of cores running you can turbo, but as you turn on cores the frequency starts to drop and really, as the system is loaded up, it drops back to what the real core performance should have been.”

In effect, you start trading off more cores for slower clocks, and you don’t get very far. This is why we always make bang for the buck comparisons on base clocks and only on base clocks.
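To put a number on what “within two percent of ideal scaling” means, here is a small sketch of how we would reckon scaling efficiency. It assumes relative performance is normalized so that a single core or thread scores 1.0, which makes ideal scaling at N units equal to N; the function names are hypothetical and the only input taken from Ampere Computing is the two percent figure at 160 cores.

```python
def scaling_efficiency(relative_perf: float, units: int) -> float:
    """Fraction of ideal linear scaling achieved at a given unit count.

    Assumes relative_perf is normalized so one core (or thread) = 1.0.
    """
    if units < 1:
        raise ValueError("units must be at least 1")
    return relative_perf / units


def min_relative_perf(units: int, shortfall: float) -> float:
    """Lowest relative performance that stays within `shortfall` of ideal."""
    return units * (1.0 - shortfall)


# Within two percent of ideal at 160 cores, per the claim quoted above.
floor = min_relative_perf(160, 0.02)
print(f"Relative performance floor at 160 cores: {floor:.1f}")
print(f"Efficiency at that floor: {scaling_efficiency(floor, 160):.2f}")
```

The same `scaling_efficiency` function can be pointed at any system's measured curve to see where threads stop delivering a full core's worth of work.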

Right now, says Wittich, Ampere Computing is working to get dozens of machines with the Quicksilver Altra processors in them to cloud and hyperscale customers so they can run their own benchmarks on them as well as doing a full suite of tests that pit the Altras against Xeon SPs and Epycs. Each machine will have its benefits, and Wittich knows that and also knows that Ampere Computing cannot run the table against these X86 alternatives on every test.

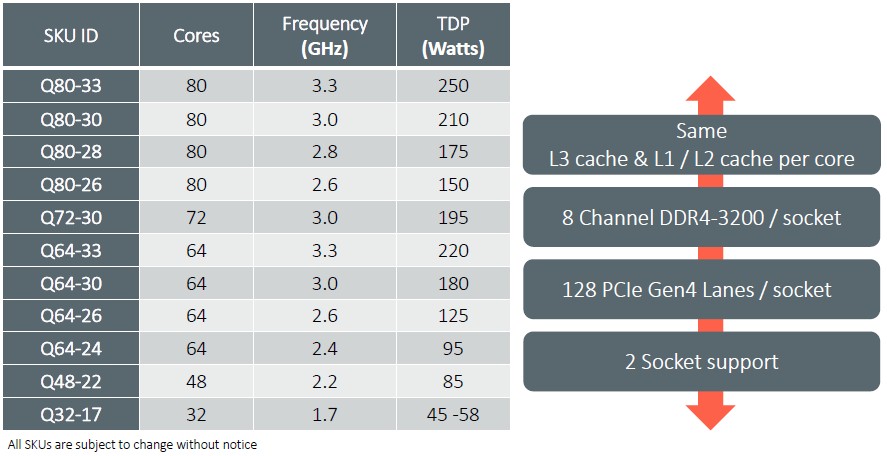

The other thing that Ampere Computing is revealing this week aside from the 128 cores on the Mystique chip is the SKU stack for the Quicksilver Altras. Here it is, without further ado:

It is beginning to look like 250 watts is the new ceiling for servers. Getting down to 45 watts with the lowest-bin part will mean having some of the features turned off in the BIOS, but the chip will be user adjustable rather than hard coded so those components (probably memory and I/O, but Wittich is not saying) can be reactivated when and if necessary. There is no word on pricing yet, but presumably there will be a premium for the compute capacity at the high end and a price break at the low end of the SKU stack, relative to the middle of the pack, as is commonly done with server processors. Pricing for the Quicksilver Altras is available for OEMs and ODMs now, but will not be publicly available until the benchmark test suite is fully fleshed out in late August or early September. Then Ampere Computing will go public with its pricing and the fun comparisons can begin.

In the meantime, the Packet division of Equinix as well as Cloudflare are firing up Altra machines on their respective clouds for tire kickers to, well, kick. Oracle and Microsoft have said they will be adopting the chips as well, but it is not clear in what capacity. The Phoenics Electronics division of master reseller Avnet has signed up to distribute Altra processors, as has Genymobile, which runs a cloud for creating and testing Android phone applications on Arm servers. French cloud provider Scaleway is starting deployments, and Nvidia’s CUDA-X GPU offload libraries and development environment run with the Altra chips, too. Obviously, all of these machines run Linux, but we are pretty sure Microsoft can do a port of Windows Server for them – if it hasn’t done so already.
