When talking about auto racing, everything eventually boils down to speed. While front of mind that means how fast a car travels around a track or through a course, speed is becoming increasingly essential throughout all areas of the racing environment, including the development, design, testing and production of the cars themselves. As in most other industries, companies that build race cars – whether for Formula 1, Formula 3, NASCAR, or other series – are being inundated with data containing key information that can help them design and build cars that go faster. Unlocking that data and accessing the information it holds as quickly as possible is key to succeeding in a highly competitive environment, where winning on the track is often measured in milliseconds.

“We are in a very complex position, but not just because it is complex, but because it is very fast,” Andrea Pontremoli, who spent 27 years at IBM before leaving in 2007 to become president and CEO of Dallara, an Italian company that designs racing and sports cars, told a group of journalists this week at the company’s headquarters in Varano Melegari, in the province of Parma about two hours outside of Milan. “The name of the game today is speed. It is really what is changing a lot of our economy and our way of working.”

The company, founded in 1972, is using a NeXtScale cluster built from Lenovo nx360 M5 nodes, which are two-socket systems that run Intel’s Xeon E5-2600 processors, together with a V3700 V2 storage system and a RackSwitch G8052 1/10 Gigabit Ethernet switch, along with a host of software. That includes Lenovo’s XClarity for system management, DataCore’s SANSymphony software-defined storage software, IBM’s Spectrum Scale parallel file system and Red Hat’s Enterprise Linux operating system.

Dallara uses the technology to create a high-performance computing (HPC) environment in which data from tools such as its wind tunnels and driving simulators can be analyzed quickly. The environment also runs complex workloads such as computational fluid dynamics (CFD) and Dassault Systèmes’ Dymola engineering and simulation software to create and test computerized simulations of cars, which reduces the time, cost and complexity involved in car design.

“You cannot build up a real car. It would cost a fortune and take up a lot of time,” Pontremoli says. “And the gain through computers and CFD is letting us reduce the need to produce a real car for testing because when we go into production, we know already the performance. We produce something we only have to optimize. We have no doubt that everything works. And sometimes we have no time to test the car, so we go directly from simulation to production.”

For Lenovo, Dallara provides another proof point of the company’s growing prowess in HPC and supercomputing and is an example of how customer demands on vendors are changing across the industry, according to Per Overgaard, executive director of Lenovo’s Data Center Group’s EMEA segments.

“It’s a very unique collaboration we have with these guys, but it’s something we’re seeing more and more with our customers,” Overgaard told The Next Platform. “What’s changing in the industry is that, about five years ago, we were basically a company that answered RFPs – ‘We need this many processors, this much compute power, this much memory, this many racks’ – and then if we made the best tender and criteria, then we’d move on with things. Now it’s a different thing. Customers come to us with ideas, ‘This is what we want to do,’ and we are part of that process.”

Making It Easier to Make Mistakes

For almost five decades, Dallara has been working with and designing racing and sports cars for such companies as Lamborghini, Bugatti, Ferrari, Maserati, and Porsche. It also serves as a consulting partner to many of these companies and is building a knowledge base of information regarding system design that it can sell to companies not only in its longtime base of car companies but also in other verticals, including aerospace and industrial packaging operations. In packaging, for example, the aim is to increase the speed of the machines in part by decreasing the weight of the arms that move the systems. It also builds some of its own cars, though it’s careful not to build so many that it ends up competing with its customers.

The company, which has more than 600 employees, now also runs its own schools complete with master’s degrees to teach students how to design performance cars.

When Pontremoli first came to Dallara, about 90 percent of its business was based on the racing industry; now that is down to 60 percent. In the coming years, the consultancy aspect will become a larger part of the Dallara business, though the company will continue to rely on the racing segment as the core of the company because it allows for the testing of new technologies. The speed of innovation in motor racing is too fast to make money from patents, he says. It’s better to innovate and then show other companies what you did. That is the consultancy part, and doing so also enables the company’s work to become an industry standard, he says.

Dallara engineers focus on three areas in their work: designing and producing car chassis using carbon fiber composite materials to build the lightest cars possible; aerodynamics through the use of wind tunnels, CFD and 3D modeling; and vehicle design, which leverages the supercomputing performance of the Lenovo cluster to accelerate such jobs as driving simulations and analyzing data taken from areas like the wind tunnels. Pontremoli estimated that Dallara focuses on 85 percent of what drives performance in a Formula 1 car – the 35 percent that’s tied to weight and 50 percent linked to aerodynamics. The other 15 percent – the engine – is addressed by other companies.

The company goes through all that, and then it runs tests, the CEO says.

“This look takes normally nine months from a white piece of paper to a car running,” Pontremoli says. “Nine months is a very short time, of which eight months is in the design side and one month in the production side, so you understand how important the supercomputing capabilities are for us. And why are supercomputers important to us? The reality is that everything we do is based on the ability to make mistakes. If you are not able to make mistakes, if you do what you know, you are conservative. If you want to do something new, you have to accept you make mistakes. But how you can make mistakes without going into bankruptcy is through technology, through simulations.”

The combination of supercomputing and artificial intelligence not only enables engineers to crunch all the data coming in but also accelerates the processes that Dallara runs by finding patterns in simulations.

“We have 1 billion cells that are used to divide the car [and] more cells mean that cells are smaller and you are more precise,” he says. “So let’s assume that you divide the car into 1 billion cells – that is the normal Formula 1 car – then you do a calculation to try to understand that behavior of the car given the shape that you give to the compute. Then let’s assume you change, for example, the front wing, but the front wing will interact with the rear wing and the flow underneath the car, and all the air flows, so you start the calculation from zero, from scratch. Meanwhile, there are some parts of the calculation that will be the same as the previous one, but you don’t know, so you use AI to recognize a common part so that instead of doing the entire calculation, you recognize what is really changing and you keep what is the same.”
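The reuse idea Pontremoli describes can be illustrated with a deliberately simplified sketch. It is not Dallara's method: it swaps the AI pattern recognition for a plain hash-based cache, and it assumes the car can be split into independent regions, which real CFD cannot do because the airflow over one part affects the others. The names `region_key`, `solve_with_reuse` and `toy_solver` are hypothetical and exist only for this illustration.

```python
import hashlib
from typing import Callable, Dict, List, Tuple

Geometry = Tuple[float, ...]


def region_key(geometry: Geometry) -> str:
    """Fingerprint a region's geometry so unchanged regions can be detected."""
    return hashlib.sha256(repr(geometry).encode("utf-8")).hexdigest()


def solve_with_reuse(
    regions: Dict[str, Geometry],
    solve_region: Callable[[Geometry], float],
    cache: Dict[str, float],
) -> Tuple[Dict[str, float], List[str]]:
    """Solve each region, reusing cached results for geometry seen before.

    Assumption (not true of real CFD): regions do not influence each other,
    so a region with unchanged geometry has an unchanged result.
    """
    results: Dict[str, float] = {}
    recomputed: List[str] = []
    for name, geometry in regions.items():
        key = region_key(geometry)
        if key not in cache:
            # Geometry not seen before: run the (expensive) solver.
            cache[key] = solve_region(geometry)
            recomputed.append(name)
        results[name] = cache[key]
    return results, recomputed


def toy_solver(geometry: Geometry) -> float:
    """Stand-in for an expensive flow calculation; it just sums the inputs."""
    return sum(geometry)


if __name__ == "__main__":
    cache: Dict[str, float] = {}
    baseline = {
        "front_wing": (1.0, 2.0),
        "rear_wing": (3.0, 4.0),
        "underfloor": (5.0, 6.0),
    }
    _, first_run = solve_with_reuse(baseline, toy_solver, cache)
    print("recomputed on first run:", first_run)

    # Change only the front wing and solve again with the same cache.
    modified = dict(baseline, front_wing=(1.5, 2.0))
    _, second_run = solve_with_reuse(modified, toy_solver, cache)
    print("recomputed after front wing change:", second_run)
```

In this toy version only the front wing is recomputed on the second run. The hard part in practice, and the reason the quote points to AI, is deciding which parts of a coupled flow field really are unaffected by a change.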

Making The HPC Case

Lenovo officials are pointing to Dallara and similar customers to highlight the company’s capabilities in a highly competitive HPC field, where demand for such infrastructure in enterprise datacenters is growing as the amount of data being created rises. The company made a significant step into the field in 2014 when it bought IBM’s Intel-based server business for $2.3 billion. Since that time, it has become the vendor with the most systems in the twice-yearly Top500 list. In the one released in November, it had 174 installations on the list. Coming in second was China’s Sugon at 71, though a combined Hewlett Packard Enterprise and Cray also would have had 71.

The company also is pushing programs on multiple fronts aimed at getting exascale capabilities into enterprise datacenters. One is Project Neptune – which we detailed after it was introduced in 2018 – that addresses the issue of liquid cooling for datacenter system components, building on work that IBM began. It includes three avenues: direct-to-node (DTN) warm-water cooling, Rear-door Heat Exchangers (RDHXs), and Thermal Transfer Module and other technologies. It also includes software to dynamically adjust CPU frequency and reduce power consumption.

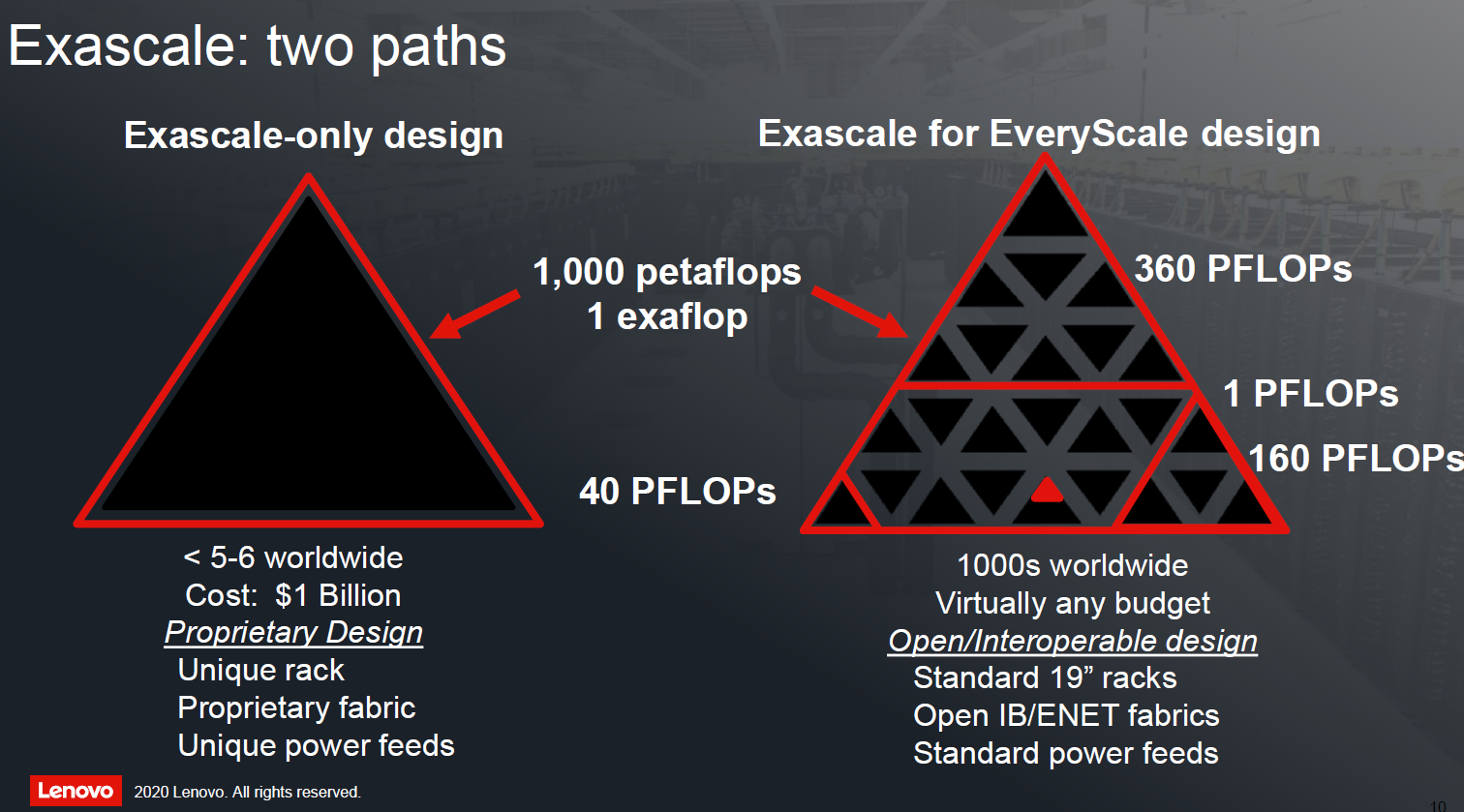

More recently, Lenovo unveiled its “Exascale-to-Everyscale” initiative to make exascale-level computing and AI and machine learning technologies more affordable and deployable for enterprises. At the Dallara event, Lenovo officials talked about this fractal HPC effort, and the widening divide between large and expensive custom-made supercomputers seen on the Top500 list and more affordable systems made with common core components and shared infrastructure that enterprises can afford and fit into their datacenters. The idea is to create systems that can be easily scaled and leverage open-source and off-the-shelf technologies.

It’s part of a growing trend among OEMs like HPE and Dell EMC to bring such capabilities to enterprises by using common components, technologies like GPUs and fast networking, and open-source software. Lenovo’s Overgaard says he sees the company’s ability to quickly and ably address complex demands like those put forth by Dallara as an advantage over rivals, adding that a company doesn’t “get best at something unless you really put the effort into it. It’s not by mistake that we’ve become the number-one company in Top500. It was a strategy that was put forth by our worldwide CEO.”
