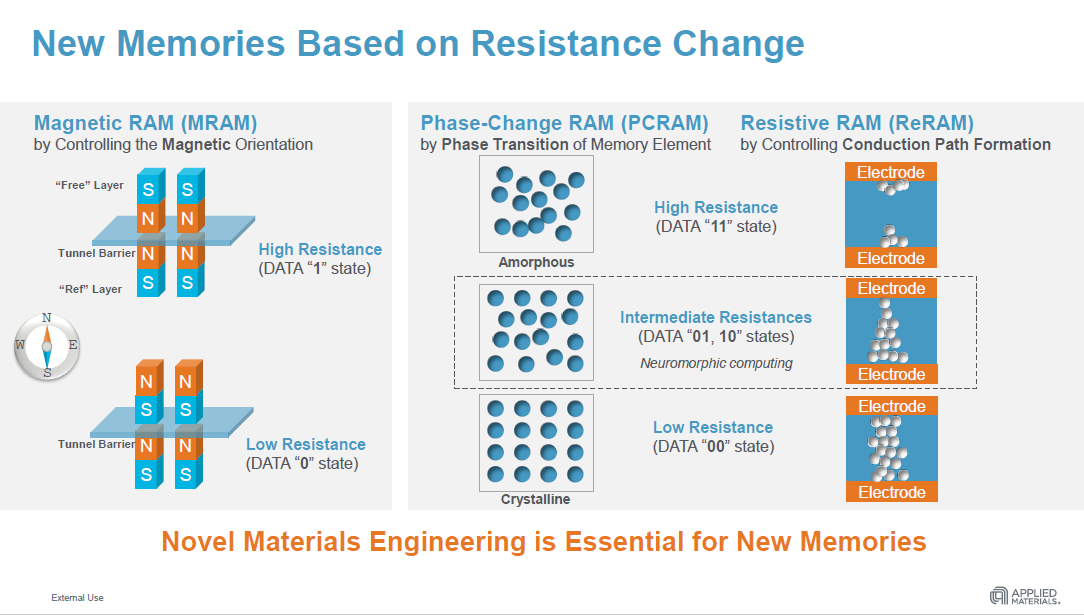

The growing imbalance between computational performance and data access performance has spurred the development of a number of new random access memory (RAM) technologies. Among these are Magneto-resistive RAM (MRAM), Resistive RAM (ReRAM), and Phase-change RAM (PCRAM). Up until recently though, there have been few commercial deployments of these devices. But thanks to the development of more advanced manufacturing systems, that soon might change.

MRAM is an especially attractive technology for IoT and edge computing devices since it promises much lower power consumption than NAND flash, the current storage memory of choice on this type of hardware. MRAM is also reasonably fast, which means it can not only replace the flash on these devices, but much of the SRAM as well, saving additional cost.

Samsung began shipping its first embedded MRAM product in March, and TSMC is also providing its version of the technology, which Gyrfalcon Technology is using in its AI accelerator. Everspin is another MRAM provider, and its product is being used by IBM to serve as cache for a high-capacity (19TB) SSD. And just this week, Everspin announced its MRAM will be supported by Sage Microelectronics’ flash memory controller for enterprise SSDs.

ReRAM and PCRAM, both of which are good candidates for storage-class memory (SCM), are more likely to show up in datacenter servers. SCM provides an intermediate memory tier that is much faster than NAND flash and less expensive than DRAM. Intel’s 3D XPoint Optane Persistent Memory product, which appears to use some sort of phase-change technology, is an early example of this category.

According to Kevin Moraes, VP of Metal Deposition Products at Applied Materials, the commercialization of these memories is being triggered by the exploding volumes of data being generated by IoT and edge devices, as well as by internet users. As a consequence, data volumes are expected to increase from about two zettabytes in 2018 to more than 10 zettabytes in 2022. The demand for this data is being driven in large part by artificial intelligence and, more generally, by data analytics applications.

At the same time that data volumes are soaring, growth in processor performance is flattening, mainly due to the limitations of conventional CMOS technology. In their heyday in the 1980s and 90s, Dennard scaling and Moore’s Law delivered performance increases of more than 50 percent per year. Unfortunately, Dennard scaling died in the middle of the last decade and Moore’s Law is now faltering. As a result, processor performance is now increasing by only about 3.5 percent per year.

“The rate of growth of data far outstrips the rate of growth of compute because Moore’s Law is slowing down,” Moraes tells The Next Platform. “So, in a nutshell, we think there is going to be a whole new set of hardware architectures that are going to be driven in order to improve compute efficiency. And a key part of improving compute efficiency is these new memories.”
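To put rough numbers on that gap, the figures quoted above can be turned into comparable annual rates. The sketch below is a back-of-the-envelope calculation, not anything supplied by Applied Materials: it assumes smooth compound growth between the two data-volume estimates (about 2 zettabytes in 2018, at least 10 zettabytes in 2022) and takes the 3.5 percent processor figure at face value. The function name `cagr` is our own.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` over `years`."""
    return (end / start) ** (1.0 / years) - 1.0


# Figures quoted in the article (approximate; "more than 10 ZB" makes this a lower bound).
YEARS = 2022 - 2018
data_growth = cagr(2.0, 10.0, YEARS)      # zettabytes, 2018 -> 2022
cpu_growth = 0.035                        # quoted annual processor performance gain

data_multiple = (1 + data_growth) ** YEARS  # total data growth over the period
cpu_multiple = (1 + cpu_growth) ** YEARS    # total processor gain over the same period

print(f"Data volume:    ~{data_growth:.1%} per year ({data_multiple:.1f}x over {YEARS} years)")
print(f"Processor perf: ~{cpu_growth:.1%} per year ({cpu_multiple:.2f}x over {YEARS} years)")
```

Under these assumptions, data volume grows at roughly 50 percent per year, about the same pace that processors managed in their heyday, while processor performance over the same four years improves by only about 15 percent in total.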

As the world’s biggest maker of semiconductor-manufacturing equipment, Applied Materials is a key supplier to many of the industry’s largest chip manufacturers, including Intel, TSMC, Samsung, and Globalfoundries. It also collaborates with companies like IBM, SK Hynix, Crossbar Inc, and Spin Memory on product development. Founded in 1967, Applied has ridden the wave of Moore’s Law for the past 50 years.

While the materials used to build semiconductors during that period have been relatively stable, Moraes says that’s now changing. It used to be that only a few elements besides silicon were used in chips, such as boron, which is used as a CMOS dopant. Now compounds and elements like germanium, selenium, tellurides, nitrides, and transition metal oxides are being used, especially for these new memory devices. “Today it’s like the Wild West,” says Moraes. “People will use anything.”

Fundamentally, that is changing the nature of semiconductor development from primarily a physics challenge to a physics and materials challenge. That complicates the manufacturing process, requiring more precise control of how the materials are deposited. That kind of precision is absolutely required to make these memories robust enough for widespread commercial use.

And that’s where Applied Materials comes in. The company recently announced a couple of new systems designed for high-volume production of MRAM, PCRAM, and ReRAM, the idea being to help make devices based on these technologies much more reliable and cost-effective.

Applied’s MRAM system, the Endura Clover MRAM PVD, is a nine-chamber device that controls the deposition of the 30 different layers of materials needed for MRAM. According to Moraes, the system is able to do this with sub-angstrom precision, which enables the memory to have the level of endurance needed for commercial applications. In fact, Moraes says they’ve improved MRAM endurance 100-fold thanks to their more refined deposition technology. Applied is shipping the Clover platform to five customers, one of which appears to be Spin Memory, a startup offering embedded MRAM as a replacement for SRAM.

The other new platform, the Endura Impulse PVD, uses a similar nine-chamber design to produce ReRAM and PCRAM wafers, in this case using deposition under vacuum to provide thickness and composition uniformity for the various layers. Applied has eight early customers for the Impulse product, including Crossbar, a maker of ReRAM.

Whether this proves to be an inflection point for these technologies remains to be seen. Certainly, if the Applied hardware proves itself, a lot more devices containing these alternative memories will appear on the market, perhaps along with some new memory providers. That’s not to say DRAM, SRAM, and NAND will disappear anytime soon. There is plenty of life left in these more established technologies. But as has been the case with processors, we’re apt to see a much more diverse set of memory technologies in the years ahead to deal with an increasingly complex and demanding application landscape.
