IBM has announced that it has achieved a new high-water mark in “quantum volume,” a metric the company is using to assess the capability of its quantum computers. The latest quantum volume of 16, turned in by the recently launched Q System One, doubles that of the 20-qubit machines in the company’s Q Network and quadruples that of the 5-qubit Tenerife device IBM introduced in 2017.

Quantum volume takes into account a number of factors, including the number of qubits, gate and measurement errors, coherence time, connectivity, device crosstalk, and circuit software compiler efficiency. The metric is designed to be a much more comprehensive measure of computational performance than just counting the number of qubits on the processor.

As we reported in January, the Q System One represents the company’s initial attempt at a quantum computer designed for commercial deployment. Although it uses the same 20-qubit chip as the machines running in IBM’s commercial Q Network, Q System One has incorporated additional features such as RF isolation, vibration dampening, stricter temperature control, and auto-calibration that are designed to reduce error rates.

Besides providing more qubits, getting errors under control is seen as the most critical factor for developing a universal quantum computer. And since two-qubit operations tend to be more common than one-qubit operations in a typical quantum application, the two-qubit gate error rate is considered to be a good indicator of gate fidelity.

IBM is saying Q System One is delivering some of the lowest error rates it has measured for a quantum system, with an average two-qubit gate error rate of just 1.7 percent. That’s more than half a percentage point lower than IBM’s previous best for its Q20 Poughkeepsie machine and more than a full point lower than the Q20 Tokyo system. The Q System One error rate also appears to be pretty much on par with IonQ’s 11-qubit configured device, which uses ion-trap qubits rather than the superconducting technology employed by IBM, Google, Intel, and others.

Google is also focusing on fault tolerance in its latest 72-qubit Bristlecone chip, although the company has yet to provide much in the way of specific numbers on how well the device is fulfilling that goal. Their previous 9-qubit chip achieved a 0.6 percent error rate on two-qubit gates and the developers there are aiming to do at least as well on their newest device. That said, they believe the two-qubit error rate needs to drop below 0.5 percent (with at least 49 qubits) to reach quantum supremacy, and to 0.1 percent (with millions of qubits) to deliver a broadly useful quantum computer.

That could be a decade or more away, but from IBM’s perspective, achieving a quantum system of practical value could happen much sooner. In particular, if a system can offer a significant advantage over classical systems on some key applications like computational chemistry, financial risk modeling, and supply chain optimization, it will have practical commercial value to some very deep-pocketed customers. That leading edge is based on what IBM refers to as “quantum advantage,” which the company defines as a quantum computation that is hundreds or thousands of times faster than a classical computation, needs only a fraction of the memory required by a classical computer, or does something that isn’t possible with a classical computer.

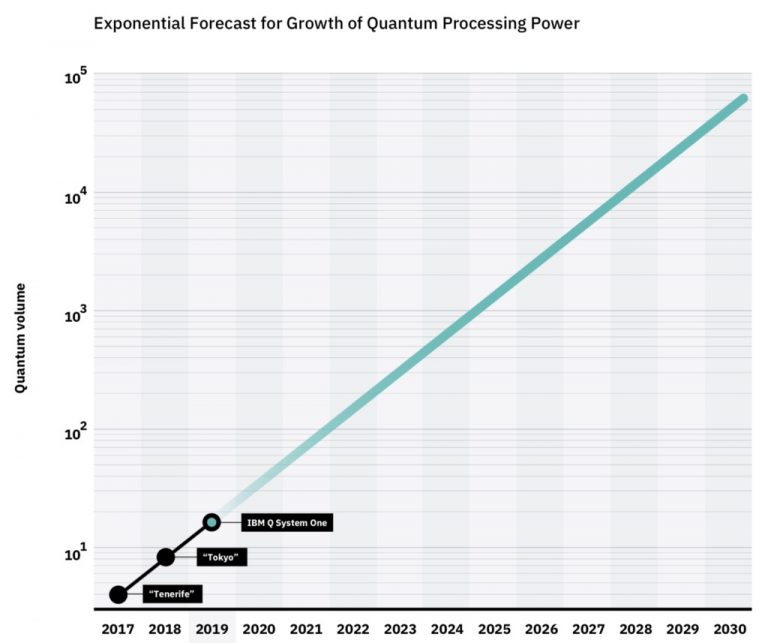

IBM also points out that it has doubled its quantum volume every year since 2017. That covers only three generations of the technology, but if IBM can keep up that better-than-Moore’s Law pace for the next several years, the company claims it will be able to achieve quantum advantage sometime in the 2020s. Based on this anticipated rate of increase in quantum volume, IBM has established a goal to do just that.
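To make the doubling arithmetic concrete, here is a minimal Python sketch that extrapolates the trend. It assumes the three reported figures map to 2017, 2018, and 2019 (only the 2017 date for Tenerife is stated outright; the other two years are inferred from the "doubled every year" claim), and the function name `projected_quantum_volume` is purely illustrative. IBM has not said what quantum volume corresponds to quantum advantage, so the sketch prints the projected values without marking any threshold.

```python
# Quantum volumes quoted in the article. The 2018 and 2019 year labels are an
# assumption inferred from the "doubled every year since 2017" statement.
REPORTED = {2017: 4, 2018: 8, 2019: 16}


def projected_quantum_volume(year: int, base_year: int = 2017, base_volume: int = 4) -> int:
    """Return the quantum volume for `year`, assuming it doubles annually."""
    if year < base_year:
        raise ValueError("year precedes the base year")
    return base_volume * 2 ** (year - base_year)


if __name__ == "__main__":
    for year in range(2017, 2030):
        projected = projected_quantum_volume(year)
        # Mark the years for which the article gives an actual figure.
        note = " (reported)" if REPORTED.get(year) == projected else ""
        print(f"{year}: {projected}{note}")
```

Under this assumption, each additional year adds one to the exponent, so ten more years of doubling would multiply the current figure by 1,024. Whether the pace can be sustained is exactly the open question the roadmap raises.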

“Today, we are proposing a roadmap for quantum computing, as our IBM Q team is committed to reaching a point where quantum computation will provide a real impact on science and business,” said Sarah Sheldon, lead of the IBM Q Quantum Performance team. “While we are making scientific breakthroughs and pursuing early use cases for quantum computing, our goal is to continue to drive higher quantum volume to ultimately demonstrate quantum advantage.”

In a recent blog post, Sheldon and IBM Fellow Jay Gambetta offer a few hints on how IBM intends to keep ratcheting up the quantum volume. One of the most important aspects appears to be keeping the coherence times in line with the error rates; the pair note that their devices are “close to being fundamentally limited by coherence times.” The current coherence time for the IBM Q System One averages 73 microseconds, but to achieve an error rate of 0.01 percent, they say coherence time will need to be boosted to between one and five milliseconds. As far as they can determine from the underlying physics of the superconducting technology they are using, all of this is possible, and they note that there is “no fundamental materials ceiling to these devices yet.”

The subtext is pretty clear: Moore’s Law may be dying of old age, but the technology driving quantum computers is just getting started. “In 1965, Gordon Moore said, ‘The future of integrated electronics is the future of electronics itself,’” Sheldon and Gambetta recounted. “We now believe the future of quantum computing to be the future of computing itself.”
