Hyperconverged infrastructure (HCI) has grown up quite a bit since its early days, when it was seen primarily as an affordable, easy-to-deploy way to support virtual desktop infrastructure (VDI) deployments. Adoption across the wider datacenter has come fairly quickly, with use cases multiplying and the installed base growing fast – and the numbers continue to trend up. Lower capital and operational expenses play a role, as does the modular nature of HCI, which makes scaling easier and deployment relatively simple.

This shouldn’t come as any surprise to The Next Platform readers. We have detailed the pros of hyperconverged infrastructure (HCI), as well as the cons. By bringing together the compute and storage into a single node, HCI creates a modular design that means scaling is done simply by adding more nodes that deliver more compute and storage. Management software for HCI means running the systems is easier and cheaper. However, there are drawbacks, including that HCI can force organizations to add more capabilities than they may want. An enterprise may simply need more compute, but because HCI combines compute and storage, it has to take on the added storage as well.
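To make the coupled-scaling drawback concrete, here is a minimal sketch. It assumes a hypothetical uniform node type (32 cores and 20 TB of storage per node, figures invented purely for illustration) and shows how much storage comes along when an organization only needs more compute.

```python
import math


def coupled_scale_out(cores_needed: int, cores_per_node: int, tb_per_node: int) -> tuple[int, int]:
    """Return (nodes_added, storage_tb_added) for a compute-only requirement.

    Assumes a hypothetical HCI design where every node is identical and
    bundles compute with storage, so storage grows in lockstep with compute.
    """
    if cores_per_node <= 0:
        raise ValueError("cores_per_node must be positive")
    # Smallest whole number of nodes that covers the compute target.
    nodes = math.ceil(cores_needed / cores_per_node)
    # Every added node also brings its storage, needed or not.
    return nodes, nodes * tb_per_node


# Needing 100 extra cores on 32-core nodes means 4 nodes, and 80 TB of storage comes with them.
print(coupled_scale_out(100, 32, 20))
```

Under these assumed specs, a compute-only need of 100 cores still adds 80 TB of storage the enterprise did not ask for.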

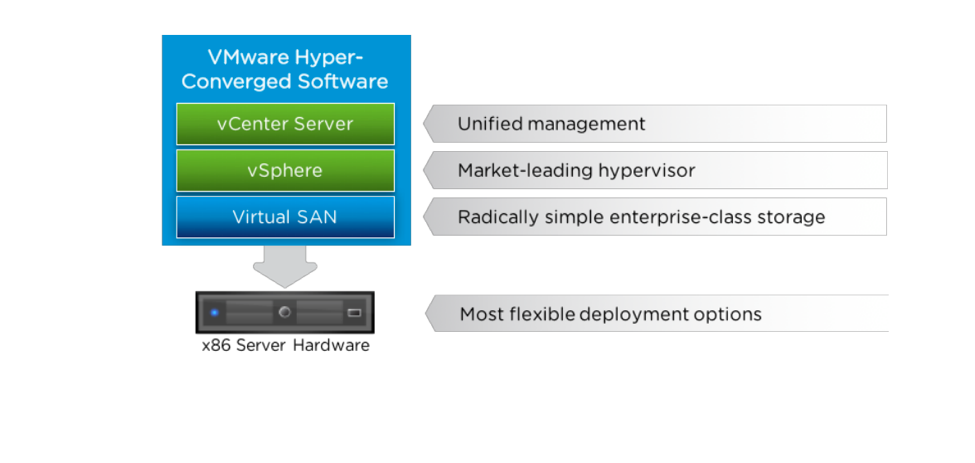

That hasn’t stopped the increasing datacenter adoption of the infrastructure, which has quickly expanded beyond VDI environments to include everything from databases to in-memory technologies, such as SAP’s HANA in-memory database. Analyst firms like Gartner and IDC are tracking a market segment that is growing more than 70 percent a year as enterprises embrace HCI for their on-premises operations. VMware offers its vSAN HCI software on more than fifteen hardware platforms, and the company now has more than 17,000 customers, adding about 150 a week, according to Lee Caswell, vice president of products, storage, and availability at VMware.

“What you are watching is VMware coming into this market with a bit of both technology offerings and ecosystem partners that are helping take this market from a niche play early on into a mainstream datacenter-quality, enterprise-ready infrastructure choice for customers,” Caswell tells The Next Platform. “Customers are responding and the biggest thing that you can see here is that the types of applications that are being put on this HCI hyperconverged infrastructure are now moving from really out-of-the-datacenter environments like VDI” to databases and other workloads.

The cost aspect is interesting – enterprises can save as much as 20 percent in capital expenditures with HCI compared with traditional three-tier datacenter architectures, he says.

“But that’s not the key driver,” Caswell says. “The key driver is this: I usually ask people to remember when Gmail introduced a 1 GB mailbox. That was distinctly different from what corporate IT offered inside of a workplace. What people saw in the cloud was the new operating model that was about speed and flexibility for customers. What’s happening in HCI is that you move from managing storage as a separate entity to basically having the infrastructure be on a quick-twitch response. That quick-twitch response means that you’ve basically reunited the storage, which was separate from the applications. In HCI, the storage is now co-existent with the applications. You’ve brought that storage back into these servers, which is where the applications were running.”

However, as the use of HCI within the enterprise datacenter continues to grow, the next steps for hyperconverged infrastructure lie outside those traditional walls: not only in the cloud but also at the edge, driven by the rapid growth of the internet of things (IoT), and with telecommunications firms as they roll out fast 5G networks, according to the VMware executive. As we’ve discussed in the past, the attention of vendors and enterprises alike is turning to the edge, where more and more of the data that is key to organizations is being generated, and where it needs to be collected, stored, processed, analyzed and then acted upon, all as close to real time as possible. The latency and costs involved in sending data back and forth between these IoT devices – not only smartphones and other mobile devices, but also sensors, industrial systems, autonomous vehicles and the like – and core datacenters or the cloud become prohibitive, so the goal is to process and analyze as much of the data as possible at the edge, closer to where it is generated.

Hardware and software vendors from Dell EMC (which in November more tightly integrated vSAN with its VxRail hardware stack) to Hewlett Packard Enterprise to Cisco Systems are pushing as much of their capabilities out to the edge as possible, and HCI will play a key role there, Caswell says.

“As you think about IoT and the edge and the billions of endpoint devices that are being deployed, now we start thinking about, ‘Well, when you think about the edge, you’re going to have [an] edge [that] needs compute that’s separated from the core or the cloud, and it’s going to need a persistent sort of record,’” he says. “HCI is an ideal fit, so wherever you’re split by bandwidth, economics or data sovereignty issues, you’re going to have to have local processing with persistent storage, and so the edge offers up a dramatic opportunity for now a common operational model all the way up from the edge to the core to the cloud.”

VMware already is seeing this happen. The company has vSAN deployments at remote sites like oil rigs, and Caswell points to retailers as another group that can use HCI: they deploy sensors that generate data, as well as distributed computing environments where local processing is critical and where they don’t always want to rely on connections into public clouds. “The edge is going to evolve as new analytics, sensors, things like connected cars become important, but we have a way to go and quite an evolution into that space, though VMware is already at the edge,” he says.

The telco industry also is ripe for HCI, according to Caswell. For decades, telcos have populated their datacenters with custom hardware. However, AT&T, Verizon and others are transforming their operations with more commodity gear. AT&T has been vocal about using white-box hardware in its datacenters and other sites as it builds out its 5G network. 5G is the next generation of broadband wireless and promises as much as 20 times the speed of current 4G networks, as well as 40 percent lower latency and more capacity. It will enable a broad array of advanced and emerging workloads and use cases, such as autonomous vehicles.

VMware already is evolving its technologies to support 5G and the rapidly expanding number of endpoints as 5G becomes more widely available later this year and into 2020. That work includes its NSX network virtualization technology and wrapping security attributes around a VM or container, and it also includes porting its ESXi server virtualization hypervisor to the Arm processors used at the edge. (Interestingly, VMware has not yet committed to supporting ESXi on server-class Arm chips or on Power processors from IBM, but both are a distinct possibility.)

“That means that you presume that these VMs and applications are going to move and store the data with them, and we’re doing this instead of trying to establish hardened perimeters around every location,” Caswell says. “That’s a really tough thing to do. What you have is the opportunity to say, ‘As long as the VM and its data have those attributes and we presume that they will move, now I don’t have to go and think as much about the perimeter security rate of just trying to block everyone else.’ That model is finally broken, so I think the telco market and 5G transition introduces a very interesting opportunity for rethinking the security model and then also moving to a standard server base in place of proprietary hardware-based system with a software stack is the ideal solution for a fast-changing environment.”

There also is an opportunity for HCI.

“You actually think about putting an HCI deployment in a cell tower, for example, for monitoring of local information that you can’t send everything back to the core or cloud in order to make local decisions,” he says. “That data is important. I need to process it locally. I need to basically provide the analytics [and] machine learning on that data in a distributed environment. What HCI provides [is] a very simple, easy way to do that without requiring any specialists. I’m moving to a generalist model who can plug in a system where it’s three nodes, all based on Ethernet and servers, as opposed to being based on storage, Fibre Channel and LUNs and a whole set of expertise. You’re moving to a generalist model that allows you to just have broader deployment at a lower cost, both CAPEX and OPEX, for distributed computing and distributed, persistent storage.”

With all the endpoints that will be connected to these faster 5G networks, “for storage people, do you move the data or do you move the analytics to the data? Which is easier to move? Data has gravity. It’s not fast or free to move. It takes time, it takes bandwidth, and so usually what you want to do is move the analytics out towards where the data’s being generated.”
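The “data has gravity” point comes down to simple arithmetic: transfer time is data size divided by usable bandwidth. The sketch below computes an idealized transfer time; the `efficiency` factor for protocol overhead and contention is an assumption added for illustration, and the link speeds in the example are hypothetical.

```python
def transfer_hours(data_bytes: float, link_bits_per_sec: float, efficiency: float = 1.0) -> float:
    """Idealized time, in hours, to move a dataset over a network link.

    `efficiency` (0, 1] is an assumed fudge factor for protocol overhead
    and contention; 1.0 means the full line rate is usable.
    """
    if link_bits_per_sec <= 0 or not (0 < efficiency <= 1.0):
        raise ValueError("link speed must be positive and efficiency in (0, 1]")
    seconds = (data_bytes * 8) / (link_bits_per_sec * efficiency)  # bytes -> bits
    return seconds / 3600


# Moving 1 TB of sensor data over a 100 Mb/s uplink takes roughly 22 hours at full line rate,
# while shipping a 50 MB analytics model over the same link takes about 4 seconds.
print(round(transfer_hours(1e12, 100e6), 1))
print(round(transfer_hours(50e6, 100e6) * 3600, 1))
```

Under these assumptions, moving the analytics to the data is several orders of magnitude cheaper in time than moving the data to the analytics.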

Actually, NetApp HCI decouples compute and storage into separate nodes, so you can add compute nodes or storage nodes independently of each other.