There are only a handful of quantum hardware architectures available today, but as that number grows, the need to understand which processor is best suited to specific quantum algorithms will become more pressing.

We are, of course, several years away from an explosion in the number of quantum chip startups jockeying for share and competing on benchmarks. Still, there is a viable but small market for platform-agnostic quantum simulators that help early developers understand which quantum codes work for various quantum architectures.

Quantum simulators are software stacks that emulate the connectivity and stability of quantum processors for individual quantum algorithms. The few companies that have gate model quantum systems (IBM, Google, Rigetti) already have their own simulation environments, but these are rooted in their own hardware architectures. Atos, however, saw an opportunity to lay the foundation for the next generation of quantum system use with its Quantum Simulator, which runs on its own large shared-memory machines.

In essence, the Atos Quantum Simulator can analyze a quantum algorithm and match it to the best quantum processor technology based on how those devices differ in decoherence times and connectivity topologies. The company has developed algorithms that model noise in quantum systems, which strongly affects how quickly qubits degrade and why entanglement breaks down.
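Atos has not said how its noise models work. To make the idea of decoherence concrete, here is a minimal, generic sketch (not Atos's method) that assumes a single qubit decaying under textbook T1 relaxation and T2 dephasing, tracked as a Bloch vector. The device names and coherence times are hypothetical.

```python
import math
from dataclasses import dataclass

@dataclass
class Bloch:
    """Single-qubit state as a Bloch vector (x, y, z); |0> is z = +1."""
    x: float
    y: float
    z: float

def idle_decay(state: Bloch, t: float, t1: float, t2: float) -> Bloch:
    """Free decay for time t under T1 relaxation and T2 dephasing (no drive)."""
    transverse = math.exp(-t / t2)      # x and y components shrink with T2
    longitudinal = math.exp(-t / t1)    # z relaxes toward +1 (ground state) with T1
    return Bloch(
        x=state.x * transverse,
        y=state.y * transverse,
        z=1.0 - (1.0 - state.z) * longitudinal,
    )

def fidelity_with_pure(state: Bloch, ideal: Bloch) -> float:
    """Fidelity between a possibly mixed state and a pure state: (1 + r . r_ideal) / 2."""
    dot = state.x * ideal.x + state.y * ideal.y + state.z * ideal.z
    return (1.0 + dot) / 2.0

plus = Bloch(1.0, 0.0, 0.0)  # |+>, an equal superposition of |0> and |1>

# Hypothetical coherence times in microseconds; both respect T2 <= 2 * T1.
for name, t1, t2 in [("device_a", 100.0, 80.0), ("device_b", 50.0, 20.0)]:
    noisy = idle_decay(plus, t=10.0, t1=t1, t2=t2)
    print(name, round(fidelity_with_pure(noisy, plus), 4))
```

After the same 10 microseconds of idling, the hypothetical device with the shorter T2 retains noticeably less of the superposition, which is the kind of device-to-device difference a simulator has to capture before it can recommend a processor.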

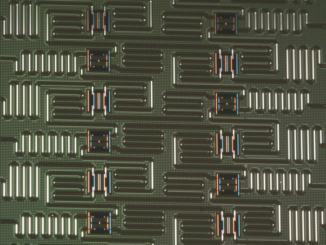

As Scott Hamilton, one of the lead architects of the Quantum Simulator, tells The Next Platform, the simulator can model processors and their topologies using a directional mapping technique tailored to each quantum processor the team tests. The topology map for each processor can then be used to optimize a circuit for that device's connectivity paradigm (quantum processors connect their qubits in differing ways, from nearest-neighbor arrangements like IBM’s device to Google’s more all-to-all-inspired approach).

The focus is on gate model systems, but Hamilton says the team has also simulated D-Wave’s annealing architecture. The Atos team is refining its models, and giving feedback in return, via a partnership with Oak Ridge National Lab, which we have written about previously and which has access to the various quantum devices.

“In the case of Google, for instance, their platform has more qubits that are directly connected, but IBM’s structure is more like a 5-qubit array where the central qubit can communicate with all of the outside ones around a ring,” says Hamilton. But this does not necessarily mean one architecture is better than another, since all evaluations are algorithm-dependent.
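Neither vendor's compiler is described here, but the effect of topology on a circuit can be sketched. The minimal example below assumes a simplified star (qubit 2 linked to four outer qubits, roughly as Hamilton describes IBM’s layout, ignoring any ring links) versus an all-to-all graph, and naively counts the SWAPs needed to bring each two-qubit gate's operands together. The circuits are hypothetical, and real routing passes do better by tracking how each SWAP moves qubits.

```python
from collections import deque
from itertools import combinations

def shortest_distance(edges, n_qubits, src, dst):
    """Breadth-first search over an undirected coupling graph; returns the hop count."""
    adj = {q: set() for q in range(n_qubits)}
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    seen = {src: 0}
    queue = deque([src])
    while queue:
        q = queue.popleft()
        if q == dst:
            return seen[q]
        for nxt in adj[q]:
            if nxt not in seen:
                seen[nxt] = seen[q] + 1
                queue.append(nxt)
    raise ValueError(f"qubits {src} and {dst} are not connected")

def naive_swap_overhead(edges, n_qubits, two_qubit_gates):
    """SWAPs needed if each gate's operands are brought adjacent independently."""
    return sum(shortest_distance(edges, n_qubits, a, b) - 1 for a, b in two_qubit_gates)

N = 5
star = [(2, q) for q in range(N) if q != 2]      # central qubit 2 linked to the outer four
all_to_all = list(combinations(range(N), 2))     # every pair directly coupled

circuit = [(0, 1), (1, 2), (3, 4), (0, 4)]       # hypothetical gates between outer qubits
hub_circuit = [(2, 0), (2, 1), (2, 3), (2, 4)]   # hypothetical gates all touching qubit 2

for label, gates in [("mixed", circuit), ("hub", hub_circuit)]:
    for name, edges in [("star", star), ("all-to-all", all_to_all)]:
        print(label, name, naive_swap_overhead(edges, N, gates))
```

The mixed circuit costs three SWAPs on the star and none on the all-to-all graph, while the hub circuit costs nothing on either, so which topology comes out ahead depends on the algorithm.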

Atos has evaluated the architectures of the major vendors and, interestingly, those of several startups and their emerging devices. Hamilton says Microsoft’s quantum design is not far enough along to model, since it amounts to only a couple of qubits. Rigetti, he says, is doing much the same thing in terms of simulation, but there is not yet enough information about its device to model it adequately.

Beyond these early evaluations, what is interesting for high performance computing folks is that modeling quantum devices requires a densely interconnected shared-memory system, one with the horsepower and communication capacity to run the simulation across all processors without interruption.

This capability is based on a supercomputing architecture from Bull/Atos that many in HPC will know (although it has roots in running SAP HANA at massive scale). The quantum simulation tools run on the Sequana S-series system, which supports up to 32 processor sockets (the machine used for the simulator has Skylake CPUs) and a single memory space of up to 48 TB.


All of this allows Atos to simulate up to 41 qubits. Hamilton says non-SMP machines that attempt this kind of modeling have to rely on a high-speed interconnect, which adds latency and error and lengthens simulation times. “Fitting the entire model into memory is where we are seeing a major improvement in the simulations from IBM and Rigetti: over a 40X improvement,” he says.
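The 41-qubit figure lines up with simple arithmetic. This sketch assumes (Atos has not said so) a dense state-vector simulator that stores each of the 2^n amplitudes as a 16-byte double-precision complex number and ignores any extra workspace memory; the function names are hypothetical.

```python
def state_vector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory for a dense state vector: 2**n complex amplitudes (complex128 = 16 bytes)."""
    return (2 ** n_qubits) * bytes_per_amplitude

def max_qubits(memory_bytes: int, bytes_per_amplitude: int = 16) -> int:
    """Largest n whose full state vector fits in the given memory (no workspace overhead)."""
    n = 0
    while state_vector_bytes(n + 1, bytes_per_amplitude) <= memory_bytes:
        n += 1
    return n

SYSTEM_MEMORY = 48 * 10**12  # the quoted 48 TB single memory space, in bytes

for n in (40, 41, 42):
    print(n, "qubits:", state_vector_bytes(n) / 2**40, "TiB")
print("max qubits in 48 TB:", max_qubits(SYSTEM_MEMORY))
```

Under these assumptions, 41 qubits need 32 TiB (about 35 TB), which fits in 48 TB, while 42 qubits would need 64 TiB (about 70 TB), which does not. Dropping to 8-byte single-precision amplitudes would buy one more qubit. Each added qubit doubles the memory, which is why a single large memory space matters so much here.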

Since it is secret sauce, Atos cannot tell us exactly how the emulation works, but Hamilton did tell us it has a plugin-based structure that allows interfacing between quantum platforms. The team is currently running four separate simulators and can let researchers plug in their own. “The plugin structure lets us modify the software stack at different levels and be able to interface with real quantum processors eventually. We have not done that yet but we hope to get there in the near future. We have structured the libraries into two levels that can simulate the classical and quantum processing pieces as well.”

“We can work with all of the languages people use for quantum programming, including Q#,” Hamilton explains. “It all gets compiled down to our own open circuit format with translators that can interface with those languages. We can translate any simulator as well as long as we know the underlying gate model.”
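Atos did not describe its open circuit format, so the following is only a hypothetical sketch of the pattern Hamilton outlines: front-end translators registered as plugins, each lowering a source language into one shared gate list, followed by a check that every gate belongs to the target's gate model. The `Gate` type, the registry, the invented `tuples` syntax, and the tiny OpenQASM 2 subset parser are all illustrative assumptions.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

@dataclass(frozen=True)
class Gate:
    """One instruction in a hypothetical common circuit format."""
    name: str
    qubits: Tuple[int, ...]

Translator = Callable[[str], List[Gate]]
TRANSLATORS: Dict[str, Translator] = {}

def register(language: str):
    """Decorator that plugs a front-end translator into the registry."""
    def wrap(fn: Translator) -> Translator:
        TRANSLATORS[language] = fn
        return fn
    return wrap

@register("qasm-subset")
def from_qasm_subset(source: str) -> List[Gate]:
    """Parse lines like 'h q[0];' or 'cx q[0],q[1];' (a tiny OpenQASM 2 subset)."""
    gates = []
    for line in source.strip().splitlines():
        m = re.fullmatch(r"\s*(\w+)\s+(q\[\d+\](?:\s*,\s*q\[\d+\])*)\s*;\s*", line)
        if not m:
            raise ValueError(f"unsupported line: {line!r}")
        qubits = tuple(int(i) for i in re.findall(r"q\[(\d+)\]", m.group(2)))
        gates.append(Gate(m.group(1).lower(), qubits))
    return gates

@register("tuples")
def from_tuples(source: str) -> List[Gate]:
    """Parse 'NAME:q0,q1' tokens separated by semicolons (an invented toy syntax)."""
    gates = []
    for token in filter(None, (t.strip() for t in source.split(";"))):
        name, args = token.split(":")
        gates.append(Gate(name.lower(), tuple(int(a) for a in args.split(","))))
    return gates

def translate(language: str, source: str, native_gates: set) -> List[Gate]:
    """Lower to the common format, then reject gates outside the target gate model."""
    circuit = TRANSLATORS[language](source)
    unknown = {g.name for g in circuit} - native_gates
    if unknown:
        raise ValueError(f"gates not in target gate model: {sorted(unknown)}")
    return circuit

NATIVE = {"h", "cx", "rz"}  # hypothetical gate model of a target device
a = translate("qasm-subset", "h q[0];\ncx q[0],q[1];", NATIVE)
b = translate("tuples", "H:0; CX:0,1", NATIVE)
print(a == b)
```

Both inputs lower to the same two-gate circuit, so anything downstream, whether a simulator plugin or eventually real hardware, only has to understand one format.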

That brings us back to the question of how far something like this can reach. With the main quantum system makers housing their own simulators, where is the market for an independent quantum simulation platform? Hamilton says it is still early days, but the team sees what it is building as an agnostic platform for future quantum development.

If there is indeed an explosion of new quantum devices vying for market share, this could be interesting, but we are still some years away from enough variation to warrant dramatic competition between quantum device makers. The gate model system makers are still hovering around the same qubit counts, albeit with different connectivity strategies aimed at longer coherence times and better reliability, and efforts like Microsoft’s error-correction-protected approach are more software and concept than hardware.

But perhaps the real promise of quantum simulation depends far less on hardware advancements than on being able to target algorithms to devices and extract meaningful results. Maybe the future of quantum systems means codifying algorithms for certain architectures first and building from there.

For example, as we discussed with Berkeley Lab researchers who are building their own quantum device, there might be custom quantum processor topologies that are application-specific. Here, the quantum simulation work could be useful in optimizing circuits for particular workloads, in quantum chemistry for instance. Hamilton says that by analyzing quantum algorithms and how they map to available devices, the team can see how different algorithms perform across devices with different connectivity graphs, noise models, decoherence times, and gate structures, and find the best fit. And it is here that Atos might be onto something, even if it may take a decade for the business story to play out.
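Atos has not published how it scores algorithm-to-device fit. As a rough illustration of weighing connectivity, noise, and decoherence together, here is a hypothetical scoring sketch: it multiplies per-gate fidelities by an exponential T2 decay and charges each routing SWAP as three two-qubit gates. The device profiles, error rates, and SWAP counts are invented for illustration only.

```python
import math
from dataclasses import dataclass
from typing import Dict, List

@dataclass(frozen=True)
class Device:
    """Hypothetical device profile; every number used below is illustrative, not measured."""
    name: str
    err_1q: float         # error probability per single-qubit gate
    err_2q: float         # error probability per two-qubit gate
    t2_us: float          # dephasing time (microseconds)
    layer_time_us: float  # duration of one circuit layer (microseconds)

def estimated_success(dev: Device, n_1q: int, n_2q: int, depth: int, swaps: int) -> float:
    """Crude figure of merit: product of gate fidelities times coherence decay.

    Each inserted SWAP counts as three two-qubit gates (its standard CNOT
    decomposition) and, pessimistically, as three extra serial layers of depth.
    """
    total_2q = n_2q + 3 * swaps
    total_depth = depth + 3 * swaps
    gate_term = (1 - dev.err_1q) ** n_1q * (1 - dev.err_2q) ** total_2q
    coherence_term = math.exp(-total_depth * dev.layer_time_us / dev.t2_us)
    return gate_term * coherence_term

def best_fit(devices: List[Device], swaps: Dict[str, int],
             n_1q: int, n_2q: int, depth: int) -> List[Device]:
    """Rank devices for one circuit, best estimated success first."""
    return sorted(
        devices,
        key=lambda d: estimated_success(d, n_1q, n_2q, depth, swaps[d.name]),
        reverse=True,
    )

candidates = [
    Device("sparse_long_lived", err_1q=0.001, err_2q=0.02, t2_us=100.0, layer_time_us=0.3),
    Device("dense_short_lived", err_1q=0.001, err_2q=0.02, t2_us=40.0, layer_time_us=0.3),
]

# Same gate counts, but the routing cost differs by algorithm and topology.
mixed = best_fit(candidates, {"sparse_long_lived": 6, "dense_short_lived": 0}, 20, 10, 15)
local = best_fit(candidates, {"sparse_long_lived": 0, "dense_short_lived": 0}, 20, 10, 15)
print("circuit needing routing:", [d.name for d in mixed])
print("nearest-neighbor circuit:", [d.name for d in local])
```

With these made-up numbers, the densely connected device ranks first when the circuit would need routing on the sparse one, while the longer-lived sparse device ranks first once routing is free, which is why such evaluations are algorithm-dependent.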
