Academic supercomputing centers have traditionally been very open about the architecture and setup of their HPC systems, and it makes sense if you think about it. Most of these institutions are publicly funded, so openness is implied. Moreover, colleges and universities compete with each other, and they want to brag about their facilities and the research that their systems support. They often use their HPC iron as a recruiting tool.

This stands in stark contrast to enterprise datacenters, which are super-secretive about the hardware and software they use for simulation, modeling, and now machine learning and data analytics. Cloud builders are generally secretive about their setups as well, although hyperscalers sometimes can’t help themselves and brag about their stuff, both as a recruiting tool and to show off just for the fun of it.

The thing about HPC system architecture is that it is fractal in nature. You can take a system and scale it up or down and the architecture still holds. So if you can study a modestly sized new academic supercomputing system, you can get a sense of how you might start to build a much larger cluster to run larger jobs or at least many more smaller ones. Such is the case with a new HPC system called DeepSense, which IBM is building in Canada for the Centre for Ocean Ventures and Entrepreneurship (COVE), Dalhousie University, the Atlantic Canada Opportunities Agency, the Ocean Frontier Institute, and the province of Nova Scotia.

Running Deep

Many of us don’t think about the ocean all that much, except when we go to the beach, but a tremendous amount of business is done on the ocean through transportation or harvesting its resources, and there is of course tourism and entertainment.

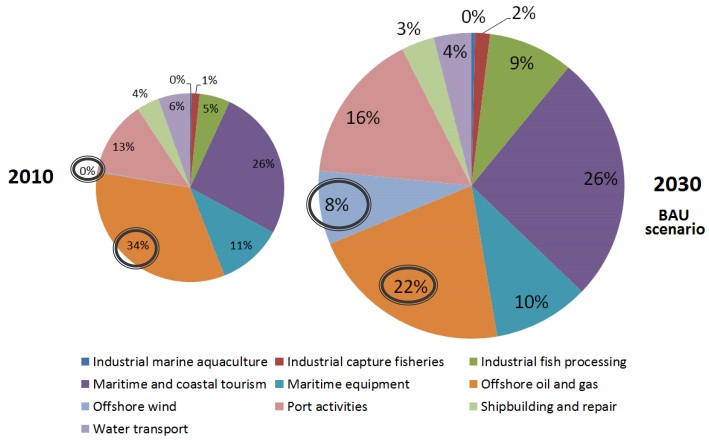

The regional ocean economy in Nova Scotia has 60,000 jobs and generates $5 billion in revenues, hence the investment in DeepSense at Dalhousie University. The global ocean economy is estimated by the Organization for Economic Cooperation and Development to generate $1.5 trillion in gross domestic value (not the same as gross domestic product), and that is expected to double to $3 trillion by 2030. The fishing, tourism, and oil and gas industries dominate these figures, of course, but ports contribute a lot, too, and offshore energy generation (both wind and wave) is expected to become a bigger piece of the pie. Here is what that looks like:

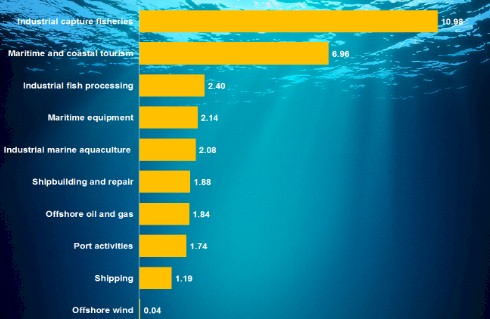

The OECD figures this is how the jobs broke down across the various ocean industries globally back in 2010:

The oceans, like other natural environments, are under pressure as the world’s population grows and resources get harvested and consumed. Pollution and climate change are big issues, and so are overfishing and energy production from waves and wind near or on the ocean. The oil and gas, transportation, and fishing industries are directly affected by anything happening with the oceans and the weather above them, and so are the world’s major navies.

So modeling the behavior of the ocean is important, and that is the task that the builders of DeepSense are going to try to tackle – and they intend to use a mix of traditional HPC tools and emerging machine learning tools to innovate in this area. The DeepSense machine, which was donated to Dalhousie University in Halifax, Nova Scotia, by IBM, shows how Big Blue is thinking about building a complete HPC-AI system using its systems and software, and what kinds of machines it wants to bring to market to topple the hegemony of Intel machinery in academic and enterprise HPC.

The DeepSense machine is not particularly large, and its performance is not going to get it a ranking on the Top 500 list of supercomputers. But it is not simulating and modeling the ocean, which is an immense task. Rather, it is taking output from the models run by oceanic researchers around the world and mashing it up with economic data to do ocean conservation. More than 200 companies and 60 tech startups in Nova Scotia will be using the system to case the economic opportunity that the oceans present and how to preserve it, according to IBM. This includes research relating to fisheries and aquaculture, seaport and logistics, security and defense, marine risk, finance and insurance, offshore energy, shipbuilding, policy and government, and ocean datacenters (Microsoft has played around here, using the ocean to cool an underwater facility). The idea is to create ocean data sets and the computational models to analyze them, and then scale them up. So the DeepSense machine and its applications could be scaled well beyond the current system as more users do more things with it.

As an example, Real Time Aquaculture will use DeepSense to do environmental monitoring for ocean farmers using underwater wireless sensors that can feed a stream of data so farmers can make more immediate decisions about their inventory. The sensor array that Real Time Aquaculture has installed in the Atlantic Ocean takes 100,000 measurements a day and the analytics application it has created analyzes 11 million data points relating to temperature and tilt, salinity, dissolved oxygen, turbidity, and algae levels in the water.
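To put those sensor figures in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes, and the source does not confirm this, that the 11 million data points were gathered by the same array at the quoted rate of 100,000 measurements a day; the variable names are illustrative only.

```python
# Figures quoted for the Real Time Aquaculture sensor array.
MEASUREMENTS_PER_DAY = 100_000
DATA_POINTS_ANALYZED = 11_000_000
SECONDS_PER_DAY = 24 * 60 * 60

# Average ingest rate across the whole array.
rate_per_second = MEASUREMENTS_PER_DAY / SECONDS_PER_DAY

# ASSUMPTION: the analyzed data points came in at the same daily rate.
days_of_data = DATA_POINTS_ANALYZED / MEASUREMENTS_PER_DAY

print(f"Average rate: {rate_per_second:.2f} measurements per second")
print(f"Equivalent collection period: {days_of_data:.0f} days")
```

Under that assumption, the data set works out to a bit more than one measurement per second and roughly 110 days of collection, which is a modest stream by HPC standards.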

The DeepSense project is being funded through a $9.8 million Canadian in-kind donation of hardware and software, and the total investment in the project is $20 million Canadian. The federal government’s Atlantic Canada Opportunities Agency is kicking in another $5.9 million Canadian, and Dalhousie University and the Ocean Frontier Institute are putting in $2.1 million Canadian as well. The funding covers the installation of the hardware and software and its operation for five years. Because IBM has put a value on its donation, this information about the DeepSense configuration is useful. It implies that the cost of an HPC-AI system is mostly software and services, and not the underlying hardware. IBM is also making available techies and researchers from its Global Research division, who will provide their expertise in environmental science in general and the ocean in particular.
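The funding figures can be tallied with a short Python sketch. The amounts are the ones quoted in this article, in millions of Canadian dollars; the dictionary labels are illustrative, and the source does not say where the remainder of the $20 million comes from, so it is reported here only as a gap.

```python
# All figures in millions of Canadian dollars, as quoted in the article.
TOTAL_INVESTMENT = 20.0
contributions = {
    "In-kind donation of hardware and software": 9.8,
    "Atlantic Canada Opportunities Agency": 5.9,
    "Dalhousie University and Ocean Frontier Institute": 2.1,
}

# Sum of the contributions the article itemizes.
itemized = round(sum(contributions.values()), 1)

# Portion of the total the article does not attribute to a named source.
unitemized = round(TOTAL_INVESTMENT - itemized, 1)

# Share of the total represented by the in-kind donation.
in_kind_share = contributions["In-kind donation of hardware and software"] / TOTAL_INVESTMENT

print(f"Itemized contributions: ${itemized}M of ${TOTAL_INVESTMENT}M")
print(f"Not itemized in the article: ${unitemized}M")
print(f"In-kind share of total: {in_kind_share:.0%}")
```

The itemized contributions come to $17.8 million Canadian, leaving $2.2 million Canadian that the article does not break out, and the in-kind donation is just under half of the total.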

DeepSense is based on IBM Power Systems, as you might expect, and some of the nodes in the cluster are going to be accelerated by Nvidia GPUs. This acceleration will be made available for both HPC and AI workloads, and that means the system can have far fewer nodes than might be required with standard X86 processors, which do not have the memory bandwidth or compute capacity of the CPU-GPU combo that IBM has endorsed as the future of computing since the Power8 generation four years ago.

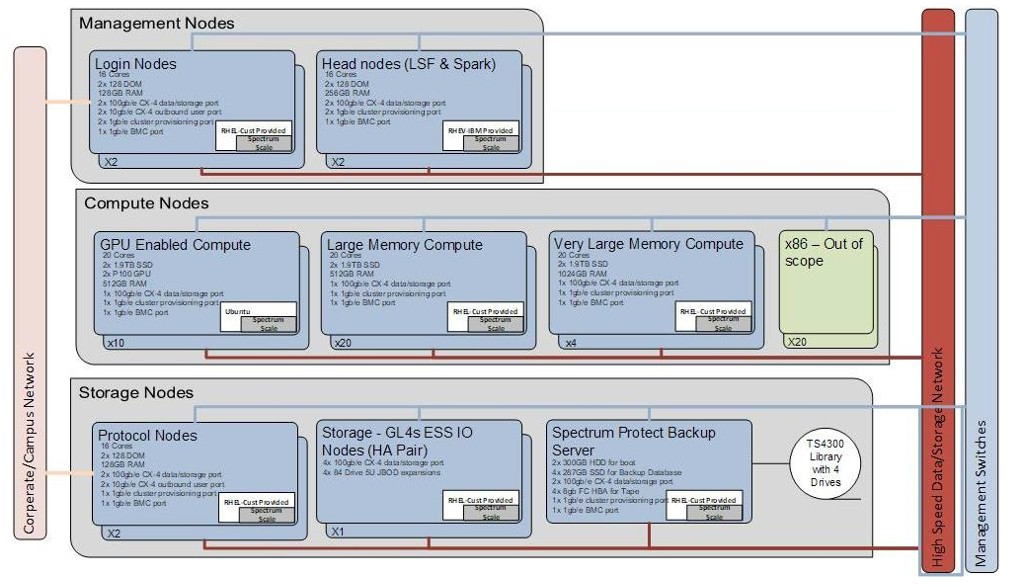

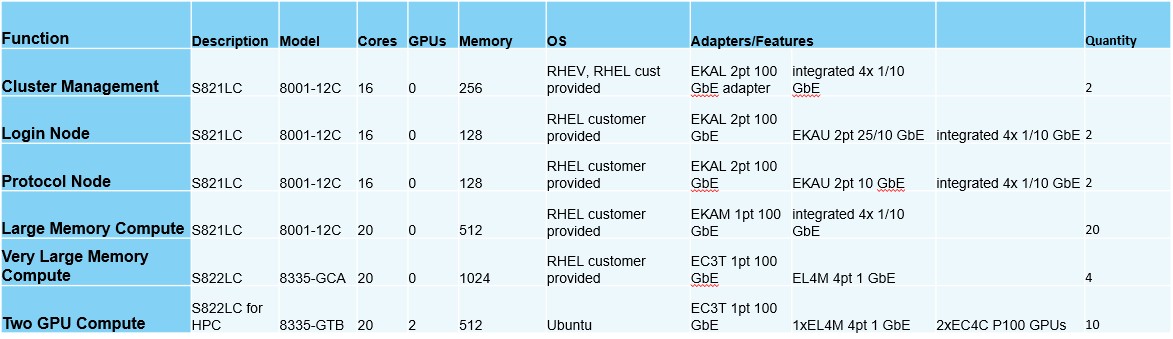

Here is the architecture of the DeepSense machine:

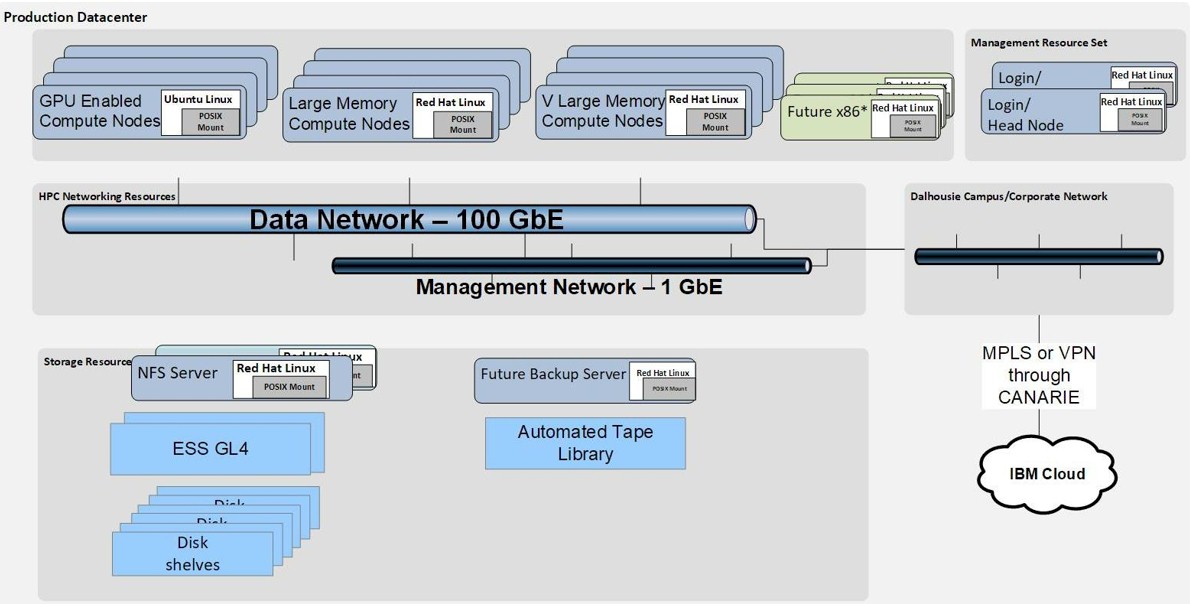

These servers are IBM’s Power8-based Power S821LC systems, not new Power9 iron, and they use the prior generation of Nvidia “Pascal” P100 GPU accelerators. The nodes that are using GPUs are running Canonical’s Ubuntu Server, and all of the rest of the iron is running Red Hat Enterprise Linux. There is an option to put X86 iron in the DeepSense machine, but that has not happened yet and may never happen given the compelling performance and memory bandwidth – and aggressive pricing – that IBM is offering with its current Power9 iron running Linux. There is a mix of large memory nodes (512 GB), very large memory nodes (1 TB), and GPU nodes (two P100s per server) in the DeepSense cluster, and it does not have the skinny memory configurations (128 GB in recent years and 256 GB currently) that you typically see on nodes in the HPC arena.

Here is a table that shows the configurations of the nodes:

The nodes are linked together using 100 Gb/sec Ethernet, not InfiniBand as we might expect. Go figure. The vendor of the Ethernet adapters and switches was not identified, but the server adapters are almost certainly from Mellanox Technologies, and the switches could be as well.

The cluster is managed by IBM’s Spectrum Cluster Foundations tools, which include the LSF (formerly Platform Computing) cluster manager for HPC jobs and the Conductor for Spark plug-in to manage in-memory processing atop Hadoop. For storage, DeepSense uses the Spectrum Scale (formerly GPFS) parallel file system. This includes extensions that emulate the Hadoop Distributed File System, OpenStack Cinder block storage and Swift object storage running atop it, and the traditional POSIX, NFS, and SMB interfaces that are commonly used in HPC and the enterprise. The DeepSense cluster has 3 PB of capacity at the moment, and will no doubt grow. (That’s not a lot these days.)

The GPU nodes are equipped with IBM’s PowerAI stack, which includes machine learning frameworks such as Caffe, Torch, TensorFlow, Theano, and Chainer all tuned up for Power CPUs and Nvidia GPUs. And, to make it easy to move workloads on and off DeepSense, the whole shebang is being equipped with a variant of the Docker Enterprise container orchestrator that IBM has created in conjunction with Docker specifically for Power iron.

This is what IBM is bringing to bear in the converging HPC and AI worlds. The question now is: Will more organizations actually buy it? Big Blue is certainly hoping so, and is seeding its market to try to make it happen. Some of those organizations working on the DeepSense project will want their own clusters at some point, and they will know this setup intimately. This is how you grow a market.
