Much of the quantum computing hype of the last few years has centered on D-Wave, which has installed a number of functional systems and is hard at work making quantum programming more practical.

Smaller companies like Rigetti Computing are gaining traction as well, but all the while, in the background, IBM has been steadily furthering quantum computing work that kicked off at IBM Research in the mid-1970s with the introduction of the quantum information concept by Charlie Bennett.

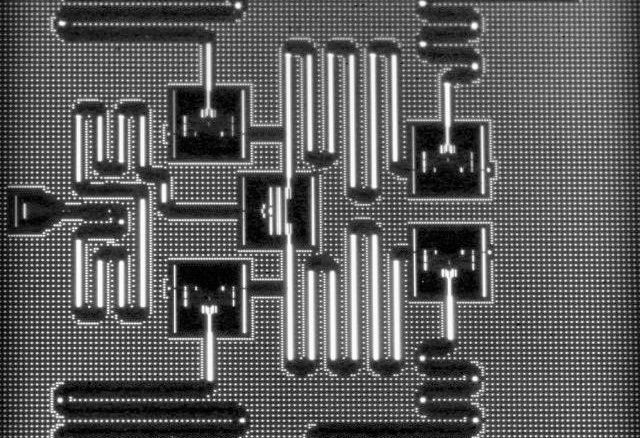

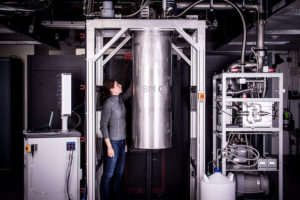

Since those early days, IBM has hit some important milestones on the road to quantum computing, including demonstrating the first quantum algorithms at its Almaden research center in the 1990s using a different type of qubit, one based on a liquid-state nuclear magnetic resonance system. This was groundbreaking at the time, but the big problem with taking it beyond research was the same one that plagues existing quantum devices: getting them to scale and remain stable. The current generation of quantum hardware, based on superconducting circuits and qubits, matured alongside these early efforts and early work on cryogenic computing, which yielded a large boost in the qubits (and their stability) in 2010. Last summer, IBM showcased a 5-qubit quantum processor that was robust enough to allow more operations than ever before on such a system. That has now been boosted to 20 qubits.

It is important to stop here and talk for a moment about those qubits and just what that performance means. For decades we have been trained to think of processor performance in terms of jacking up the number of transistors or floating point performance. At a quick glance, it would sound like a 2,000-qubit system (like the one D-Wave has) is leagues better than a 5-qubit quantum machine. This problematic metric is tough for a company like IBM to push back against when in fact, as IBM’s manager of experimental quantum computing, Jerry Chow, tells The Next Platform, the differences are far more nuanced than many understand.

For starters, the quantum systems designed by D-Wave and IBM differ significantly (D-Wave is focused on quantum annealing while IBM strives toward a fault tolerant universal quantum computer based on superconducting circuits and qubits). Further, is having more qubits that are coherent (active) for only a very short amount of time better or worse than having far fewer qubits with drastically longer coherence times and far lower error rates? These are questions IBM wants people to weigh as they consider the future of quantum computing. More generally, the company also wants to point to the programmability hurdles and the breadth of potential applications solvable on quantum devices as important factors in who is ahead in the race for broader commercial adoption of quantum computers.

“D-Wave is building a special purpose quantum annealer, which is addressing a particular problem that is designed into their physical hardware. We are taking a universal path that can scale toward some eventual fault tolerant quantum system. There are some well-known algorithms with exponential speedups over classical systems but they require this fault tolerant universal approach. Even at the stage where we have passed 50, 100, and then up to a few hundred qubits for test systems there are errors and they are not fault tolerant but even still, there are many applications in chemistry and optimization on that horizon,” Chow explains. He says that IBM wants to push a new metric for measuring quantum computing capability, which it calls quantum volume. The idea is that while more qubits are important, the circuit depth those qubits can sustain is critical, since it allows more time to run operations. Error rates also matter, and they are not accounted for in discussions that focus on qubit counts alone.
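To make the trade-off between qubit count, depth, and error rate concrete, here is a minimal Python sketch. It assumes the form of quantum volume as we understand IBM's proposal, min(n, d(n)) squared, maximized over sub-registers of n qubits, with achievable depth d(n) taken as roughly 1/(n × effective error rate). The function name, the single constant error rate per device, and the two example devices are illustrative assumptions, not figures from IBM or Chow.

```python
def quantum_volume(num_qubits, effective_error_rate):
    """Toy quantum-volume estimate for a gate-based device.

    Assumes achievable circuit depth on n qubits is about
    1 / (n * effective_error_rate), and that the error rate is the
    same for every n (a simplification; on real hardware it depends
    on connectivity and the gate set).
    """
    best = 0.0
    # The metric lets you use any subset of the qubits, so try every size.
    for n in range(1, num_qubits + 1):
        depth = 1.0 / (n * effective_error_rate)
        best = max(best, min(n, depth) ** 2)
    return best


# Hypothetical devices: the error rates below are made up for illustration.
small_clean = quantum_volume(num_qubits=5, effective_error_rate=0.001)
large_noisy = quantum_volume(num_qubits=50, effective_error_rate=0.05)

print("5 qubits, 0.1% error:", small_clean)
print("50 qubits, 5% error:", large_noisy)
```

Under these made-up numbers the 5-qubit device scores higher than the 50-qubit one, because the noisier machine runs out of usable depth after about four qubits. That is the point of the metric: adding qubits does not help unless error rates fall along with it.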

For those who have already made up their minds about the differences between the D-Wave and IBM quantum approaches, one important distinction remains: programmability, and specifically, which is easier. Chow says many domain specialists (chemistry researchers, for example), as opposed to physicists, have not known where to start with quantum computing. IBM’s Q Experience software (API and SDK) allows for the use of familiar tools and interfaces (IPython and Jupyter notebooks, for instance) to start recasting problems to run on a remote quantum device.

Along with today’s announcement that the 20-qubit capability is available now through the IBM Q Experience interface, IBM also revealed that a 50-qubit prototype is alive in the lab. Chow says the company has also beefed up QISKit, its open source quantum programming stack, which he says over 60,000 users have sandboxed, running over 1.7 million quantum experiments and generating over 30 peer reviewed papers.

“Until people can get their hands on this stuff it is hard for real adoption to happen. It is an entirely different model of computing. We are trying to bridge the gap with open source tools, engage a community, defining interfaces and a software stack to make it usable and I think we’ll see more of a heuristic development; we’ll make better hardware and people will have to run things to find better ideas, just as with the development of classical algorithms in the last few decades,” says Chow.

This news emerges just before crowds gather at the annual Supercomputing Conference (SC17) in Denver, where an unprecedented amount of attention will go to novel architectures and different models of computing. In other words, the time is increasingly ripe for quantum systems to be granted more legitimacy as accelerators for HPC applications or as wholesale replacements in very select areas like quantum chemistry.
