Well, it could have been a lot worse. About 5.6 percent worse, if you do the math.

As we here at The Next Platform have been anticipating for quite some time, with so many stars aligning here in 2017 and a slew of server processor and GPU coprocessor announcements and deliveries expected starting in the summer and rolling into the fall, there is indeed a slowdown in the server market and one that savvy customers might be able to take advantage of. But we thought those on the bleeding edge of performance were going to wait to see what Intel, AMD, IBM, Qualcomm, and Cavium are going to bring to bear with their respective X86, Power, and ARM processors. And we think that one of the big hyperscalers that is also a cloud player has got the jump on the entire market when it comes to Intel’s impending “Skylake” Xeon processors, which are due within the next month or so according to the scuttlebutt.

Each new generation of processors is supposed to present a big jump in performance as well as an improvement in price, so it is not just a matter of getting a public relations coup to be put at the front of the line for any new chip generation from Intel and, now, its reinvigorated competitors in the X86, Power, and ARM arenas. Over the past several generations of Xeon chips, as we have shown in our past analysis, Intel has increased the performance of the Xeon line by using a mix of architectural changes in the cores and caches to improve instructions per clock as well as by cramming more and more cores on the die to boost the throughput. But in many cases, thanks to the lack of credible competition, Intel has been able to hold the cost of a unit of performance more or less steady and therefore extract a lot of profits from the server base. In fact, we think Intel not only grew its profits from servers, but is and has been for a long time the main beneficiary, in terms of profits, from the system market. Microsoft and Red Hat get their piece of the action with operating systems, but the server makers themselves – whether they are OEMs or ODMs – live on skinny to no margins. That is a condition, by the way, that we think is unhealthy.

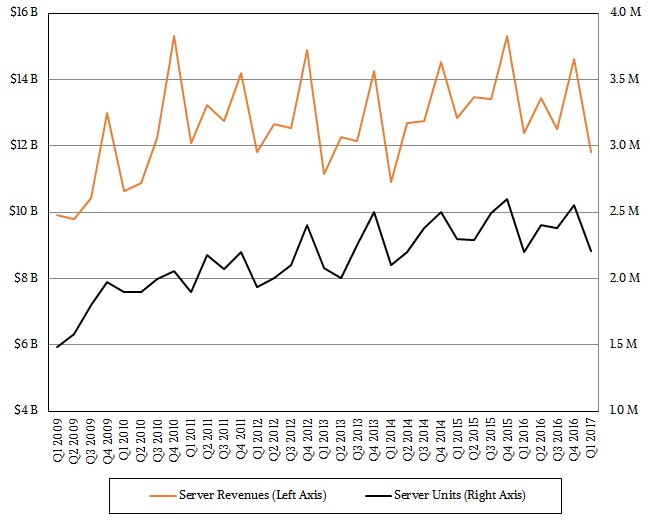

In presenting its server revenue and shipment report card for the first quarter, the analysts at IDC said that one large server customer making a big bet on cloud services accounted for approximately 250,000 units deployed during that period, which works out to 11 percent of the 2.21 million server units deployed. If you take the average selling price of a server sold by one of the ODMs – just shy of $2,800 and just a smidgen lower than a year ago – then this would work out to around $690 million in revenue, and if that hyperscaler – who we think is Google based on a hunch – did not have access to what we presume are Skylake processors, then revenues for the quarter would have been down by more than 10 percent and shipments would have fallen by 11 percent. This would have been a Mini Ice Age in the server market, albeit much less severe than the 30 percent to 35 percent declines we saw over four quarters during the Great Recession back in late 2008 and early 2009.
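As a back-of-the-envelope check on that counterfactual, here is a minimal Python sketch. It uses only figures quoted in this article (2.21 million units, up 1.4 percent; $11.81 billion, down 4.6 percent; 250,000 units and around $690 million for the one large customer). The helper names are ours and purely illustrative, and the year-ago baselines are backed out of the quoted growth rates rather than taken from IDC, so treat the results as approximations.

```python
# Figures quoted in this article (IDC, first quarter of 2017).
units_now = 2_210_000          # servers shipped worldwide
unit_growth = 0.014            # year-on-year shipment change
revenue_now = 11.81e9          # worldwide server revenue, USD
revenue_growth = -0.046        # year-on-year revenue change
hyperscaler_units = 250_000    # units attributed to one large cloud customer
hyperscaler_revenue = 690e6    # article's estimate, "around $690 million"


def year_ago(value, growth):
    """Back out the year-ago figure from the current value and growth rate."""
    return value / (1.0 + growth)


def change_without(value, growth, removed):
    """Year-on-year change if `removed` had never been sold (assumed helper)."""
    return (value - removed) / year_ago(value, growth) - 1.0


share_of_units = hyperscaler_units / units_now
revenue_change = change_without(revenue_now, revenue_growth, hyperscaler_revenue)
unit_change = change_without(units_now, unit_growth, hyperscaler_units)
extra_revenue_drop = revenue_change - revenue_growth

print(f"Share of units:             {share_of_units:.1%}")
print(f"Revenue change without it:  {revenue_change:.1%}")
print(f"Shipment change without it: {unit_change:.1%}")
print(f"Extra revenue decline:      {extra_revenue_drop:.1%}")
```

On these inputs the revenue decline comes out a little over 10 percent, roughly 5.6 points worse than what was actually reported. The shipment decline lands closer to 10 percent than 11 percent, a gap that may simply reflect rounding in the quoted figures.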

We think that Google is the big server buyer that IDC is referencing because it has been very vocal about having early access to the Skylake chips, and by virtue of the server volumes that Google is deploying as it builds out its Cloud Platform public cloud, it can move to the front of the Skylake line. To our way of thinking, Google dabbles in Power and ARM architectures but doesn’t make a big deal about it, as Microsoft has done in recent months. It is probably not a coincidence that Microsoft endorsed Qualcomm Centriq ARM chips as well as AMD Epyc X86 chips in its “Project Olympus” Open Compute servers at the same time as it announced support of Windows Server on ARM, and that Hewlett Packard Enterprise, which has Microsoft as one of its largest customers, stomached a 14 percent server decline in its first quarter. The ODMs were up strongly, too, and that also points to Google, which uses ODMs to build its iron. Whoever the hyperscaler is that got a half million chips from Intel – and it makes no sense that they are not Skylakes – it seems to have soaked up all of Intel’s capacity to make the chips, which is why they are being announced sometime this summer instead of last fall or this spring.

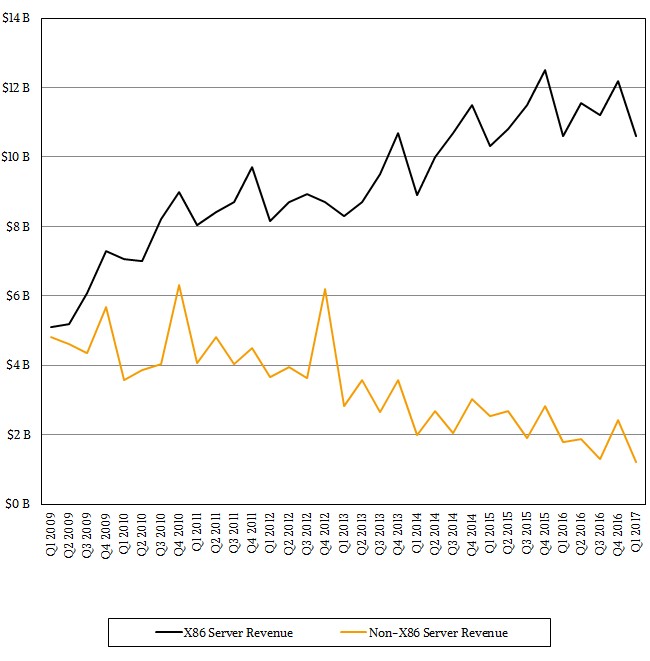

As it turns out, server shipments worldwide rose by 1.4 percent to 2.21 million units, according to IDC, but because of secular declines in the proprietary mainframe and midrange markets as well as in RISC/Unix platforms, revenues fell by 4.6 percent to $11.81 billion in the thirteen weeks ending in March of this year.

If you drill down into the figures, revenues for X86 machinery were flat at $10.6 billion and shipments held steady, too, which is remarkable if you think about it. The average selling price of an X86 server held steady at around $4,800 and change, and the funny bit to us is that the average X86 server costs about $2,000 more than the average ODM server. If you work the math out backwards, the tens of millions of enterprises that buy machines for their own use are paying a hefty premium for their gear, and not for nothing. They have a lot of redundancy and resiliency in each machine, since each machine tends to be mission critical and host its own applications and databases. (We are oversimplifying a bit.) This is why hyperscalers and cloud builders put resiliency in their clusters, but if you are replicating data and compute two or three times, notice how the price doesn’t really go down? If you give people utility pricing, they turn it off and on and reserve it, but then they end up using more of it, too.

Welcome to the IT industry. The price never really goes down. The functionality really does go up, however.

If you look at the server market by form factor and price band, the volume segment, which includes machines that cost less than $25,000, had a 3.4 percent decline in the first quarter, to $9.5 billion, and the midrange sector, which comprises machines that cost between $25,000 and $250,000, had a stunning 16.5 percent increase to $1.3 billion. (The midrange as so defined has not seen good growth in a while.) The high end of the market, with machines that cost more than $250,000, had a 20 percent decline to $1 billion. We can remember when this much money was spent each month on System z mainframes alone.

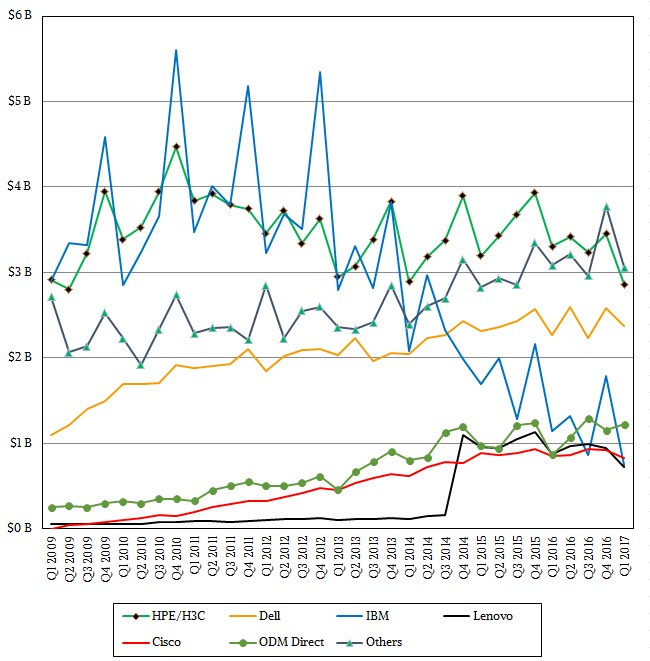

Hewlett Packard Enterprise has held onto its top spot in the revenue rankings, but even with the combination of its H3C partnership in China with Tsinghua University, HPE had a 15.8 percent revenue decline in the quarter, to $2.86 billion. Dell managed to eke out 4.7 percent growth to hit $2.37 billion, and that was because it grew shipments by a tenth of a point to 465,300 units. HPE’s units fell by 14.3 percent to 460,400 units, and the difference seems to be one of its big tier one customers, which we think was probably Microsoft Azure. Dell has been ahead of HPE in the market in the United States in terms of shipments for a while, and now it has beaten it on the global scale.

Lenovo, on the other hand, is losing ground. The company’s shipments fell by 27.3 percent to 146,100 units and its revenues fell by 16.5 percent to $727 million, as best as IDC can reckon. IDC believes that Cisco sold $824.7 million in its UCS blade and rack systems in the quarter, down only 3 percent and doing better than the market at large, but clearly Cisco has found its water level at somewhere around $3.5 billion in annual server sales. IBM, which sold off its X86 server business to Lenovo, dropped the most in the first quarter, with revenues off a recession-like 34.7 percent to $744.5 million.

The ODMs, which IDC tracks as a group and which includes the likes of Quanta, Wistron, Foxconn, Tyan, Jabil Circuit, and a few others, did remarkably well, with revenues up 41.8 percent to $1.22 billion and shipments up 44.1 percent to 443,600 machines. Again, we think that big hyperscaler buying what we presume are a quarter million Skylake machines is one of the big factors here.
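The revenue and shipment figures quoted above imply an average selling price for each vendor group, which makes the gap between OEM and ODM iron easy to see. The sketch below is a rough illustration that divides the article's quoted revenue by the quoted shipments; the `X86_ASP` constant is the approximate "$4,800 and change" figure from earlier in this article, and the function and variable names are ours, not IDC's.

```python
# Revenue (USD) and shipments as quoted in this article from IDC, Q1 2017.
vendors = {
    "HPE": (2.86e9, 460_400),
    "Dell": (2.37e9, 465_300),
    "Lenovo": (727e6, 146_100),
    "ODM": (1.22e9, 443_600),
}

# Article: "around $4,800 and change", so this is only approximate.
X86_ASP = 4_800


def average_selling_price(revenue, units):
    """Implied average selling price per server."""
    return revenue / units


asp = {
    name: average_selling_price(revenue, units)
    for name, (revenue, units) in vendors.items()
}

# How much more the average X86 server costs than the average ODM server.
odm_gap = X86_ASP - asp["ODM"]

for name, price in sorted(asp.items(), key=lambda item: item[1], reverse=True):
    print(f"{name:<7} ${price:,.0f}")
print(f"X86 average minus ODM average: about ${odm_gap:,.0f}")
```

On these numbers the ODM average lands near $2,750, consistent with "just shy of $2,800", and the gap to the X86 average is a little over $2,000.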

The question we have now is whether the global market can consume a massive surge in computing power that is coming down the pike. If these impending CPUs are priced aggressively against each other, this will be great for price/performance, but not so great for chip makers unless there is elastic demand for compute. Form factors, persistent memory, and augmented compute through GPUs and FPGAs are compelling changes in the way systems are architected, too. It will be fascinating to see how this all plays out.

Well, Google did announce it in February:

https://cloudplatform.googleblog.com/2017/02/Google-Cloud-Platform-is-the-first-cloud-provider-to-offer-Intel-Skylake.html

Well, with AMD offering its Epyc platform SKUs, built on that modular Zeppelin (2 CCX/8 Zen core) die, in various 16 to 32 core server/workstation options at some very low cost and with very good performance metrics, Intel’s high margins will take, and are taking, a hit. AMD’s yields on its modular Zeppelin dies are rumored to be above 80%, and AMD only needs to crank out those modular Zeppelin die units for its entire consumer desktop Zen/Ryzen and consumer desktop Zen/ThreadRipper (HEDT) lines as well as its line of pro Zen/Epyc SKUs, so that in and of itself makes for some very low overhead costs. On top of that, AMD does not have to worry about uber expensive chip fab upkeep costs and process node R&D.

With AMD’s use of GlobalFoundries (GF), it’s more about economy of scale with the 14nm process that GF licensed from Samsung. GF can spread that cost across its entire 14nm customer base, so AMD’s costs there are amortized along with everyone else’s, with AMD getting an agreed upon rate and no big chip fab upkeep/process node R&D bills to bleed its coffers.

AMD is sitting pretty in its very lean state owing to so many years of management fat trimming. So AMD does not have any of Intel’s high overhead middle management lard and chip fab/process node R&D upkeep costs. These smaller modular Zeppelin dies are coming off the wafer lines by the hundreds of thousands, and AMD only needs to worry about connecting those Zeppelin dies up as 1 (without an MCM), or 2 through 4 on an MCM, to get an entire first generation product stack: consumer Zen/Ryzen 3, 5, 7, to consumer ThreadRipper (2 Zeppelin dies on an MCM), up to professional server/workstation Epyc with 4 total Zeppelin dies on an MCM for that top end SKU. I’m sure that there will be Epyc branded 16 core/24 core (2 or 3 Zeppelin dies on an MCM) server/workstation variants for the professional markets also, with the usual mainboards (1S/2S) fully certified for ECC memory for that professional/production market.

AMD’s low overhead operation only needs to get its margins up a few more percentage points in order to achieve profitability, and AMD can price aggressively to gain all that professional market share back and then some, all while making use of that one modular Zeppelin die and those great yields that come with only having to crank out an 8 core die that is used across a very wide consumer to professional product stack. That was one very smart move. One can only guess at what Intel’s die/wafer yields are on its relatively large 18+ core dies, but AMD was very smart to go modular with Zeppelin across its consumer desktop and professional Epyc product offerings and save billions.

AMD will be offering Epyc in single and dual socket configurations, and there was some discussion at a trade event concerning a more direct (NVLink-like) interfacing of AMD’s Epyc branded CPUs to its Vega GPUs via the Infinity Fabric, so even for HPC workloads more of the FP work can be managed on the GPU rather than on Epyc’s CPU cores only. AMD does have a disadvantage in FP/AVX on its first generation Zen products, but that can be made up by utilizing its GPU SKUs for the HPC workloads that need all that FP.

Intel has continued to double down on its usual product scheme of increasingly segmented offerings in order to put off any more losses from its shrinking margins in the consumer market, while trying to keep that consumer product stack from being used to cannibalize its server offerings via some strangely limited PCIe lane offerings. AMD, meanwhile, is offering a full 64 PCIe lanes across its consumer (ThreadRipper HEDT) offerings and 64+ PCIe lanes in the professional/Epyc offerings. Intel’s classic majority market product segmentation strategy is coming under some very epic pressure from AMD’s relatively sparse offerings, with AMD’s consumer ThreadRipper 16 core CPU/motherboard SKUs all coming with 64 PCIe lanes (all ThreadRipper SKUs) rather than being artificially limited by management/marketing, the way Intel limits motherboard/CPU PCIe lanes and even RAID support on some SKUs. Intel is forcing some of its consumer/HEDT customers to purchase, at extra cost ($$$$), RAID keys to enable the HEDT motherboard’s feature set for some RAID configurations.

Sure, Intel is lowering its CPU prices in order to compete with Ryzen and Epyc, but Intel is suffering from metaphysical angst when it comes to that traditional cash cow, the Intel server/HPC market. And Intel is making some very rash decisions that are alienating some of its consumer customers. AMD’s Epyc SKUs are offering more in the area of server mainboard features (more DIMM channels, PCIe lanes, etc.) also. So Intel is really going to start feeling the pressure in the professional x86 based CPU market, as if Power9 was not just beginning to come online along with some more ARM based competition.

Intel’s days of unchecked product segmentation are over in one 64 PCIe lane/8 memory channel swoop, with AMD’s very unsegmented product offerings delivering great value at a very low initial CPU/motherboard cost. Intel is finding itself ill prepared to respond to that real competition in such a short amount of time and still maintain those high margins that its stockholders have grown so accustomed to having.

It’s going to be a Hyperscaler’s hardware market in very short order over the next 6 to 24 months with increasing CPU and GPU accelerator competition and those hardware makers that are the most ill equipped to live with much thinner margins will be the ones that suffer the most.

@Hyper_Epyc_Margin_Pressures

I think you understate Intel’s problem. They have had no real competition for nearly 7 years, they have grown fat and lazy in that time, and the response so far has been tepid at the very least.

The Zeppelin configuration from AMD has some weaknesses and there are still areas where Intel can outperform them, but this may well be insufficient for Intel to avoid a big hit.

The consumer market is looking at cost and Intel is expensive.

The gaming market is beginning to look to multi-threading performance and Intel are lacking.

HPC is all about the thread speed and GPU and, soon, FPGA acceleration.

Intel seem to have missed that single threaded workloads are beginning to vanish and their undoubted advantage in that area, and make no mistake they still have that advantage, is far less important than it used to be.

My laptop and workstation are Intel; they are both 3 years old and will be refreshed within the next year, but they may no longer have “Intel Inside”. The Zen architecture is nearly as much a game changer as the Athlon was so many years ago, and Athlon drove a spike into Itanium’s heart.

I’m not cheering on AMD as the underdog, but for the real competition that they have brought back to the mainstream CPU market, with a real, cost effective alternative to the lazy, over-priced incumbent.

The workloads I typically handle may well perform better on AMD than Intel. I think I have an enjoyable few months ahead verifying the performance envelopes of various offerings.

Just on cost, my home stuff will really only be looking at AMD. Curiously enough, Intel seems to be able to offer nothing that competes.