It all started with a new twist on an old idea, that of a lightweight software container running inside Linux that would house applications and make them portable. And now Docker is coming full circle and completing its eponymous platform by opening up the tools to allow users to create their own minimalist Linux operating system that is containerized and modular above the kernel and that only gives applications precisely what they need to run.

The new LinuxKit is not so much a variant of Linux as a means of creating such variants. The toolkit for making Linuxes, which was unveiled at DockerCon 2017 this week, is derived from containerd, the container runtime that Docker donated to the Cloud Native Computing Foundation, the open source consortium started by Google where its Kubernetes open source container orchestration system lives. Containerd is a daemon that runs on either Linux or Windows platforms, and it is an essential piece of the portability story that makes Docker so compelling to hyperscalers, cloud builders, enterprises, and HPC centers alike.

Patrick Chanezon, who joined Docker in March 2015 as a member of the technical staff and who put together the Docker Enterprise Edition that was announced this March, explains why Docker got into the business of creating Linux distros and why it is opening up LinuxKit so others can create their own containerized Linux operating systems.

“Over the past four years, containers have changed all of the major technology platforms, whether they target the datacenter, the cloud, or IoT, and this opens up two opportunities,” Chanezon explains. “One is that with containers, the operating system itself can become more secure, lean, and portable, and two, to drive the container ecosystem to the next level and take it mainstream, we need some means to collaborate on components and share some tooling.”

Docker knows a thing or two about this problem. The Docker runtime is entirely dependent on Linux, and over the past two years, the company has expanded out from supporting a few key Linuxes as the foundation of containers to run on MacOS and Windows 10 on the desktop, Windows Server on servers, and the virtualized server instances on Amazon Web Services, Microsoft Azure, and Google Cloud Platform. So Docker, the company, had to create variants of its stack to run on these platforms and create its own Linux substrate, tuned for each platform, so Docker would run. The tool for making these different Linux substrates is now being open sourced as LinuxKit, and it is basically a minimal, hardened Linux kernel with all of the operating system services running on top of it inside of containers.

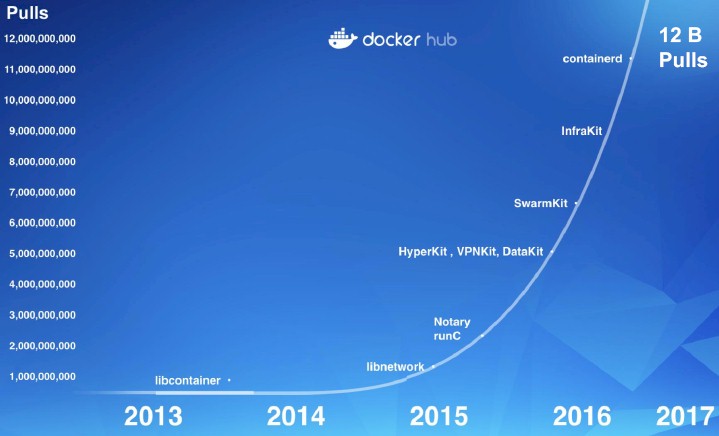

Every time Docker has done the hard work of abstracting the container environment a little more, it has helped spur on adoption of the Docker container platform. Take a look at this pretty chart showing the exponential growth in the aggregate number of pulls of application containers on the Docker Hub repository service:

By opening up LinuxKit for any and all to use, Docker will accomplish a few things. First, it will get help porting Docker to more platforms. Indeed, it is widely expected that IBM will be announcing support for Docker containers on its System z mainframes with the intent of eventually making Docker available natively on Power Systems machines, presumably running on Linux partitions but perhaps also in conjunction with its own AIX and IBM i (formerly OS/400) operating systems with a tiny little Linux substrate shimmed in there.

LinuxKit is being launched under the auspices of the Linux Foundation, the place where Linux creator Linus Torvalds gets his paycheck and also where the Xen hypervisor and other key open source projects live. Intel is in on the effort, and so is ARM Holdings, which controls the ARM processor instruction set. Microsoft, which runs Linux on its Azure cloud as a peer to Windows and which runs Docker containers on top of its Windows Server stack, whether it is in the datacenter or on the Azure cloud, is also helping steer LinuxKit. Hewlett Packard Enterprise, still the dominant server maker in the world, is also participating, and so is IBM.

The minimalist LinuxKit distribution has an ISO that is around 35 MB, and the typical Linux underpinning Docker Enterprise weighs in at around 100 MB. That bare-bones Linux setup includes the operating system kernel plus runc and containerd to run the system services in containers, and then a DHCP client and a random number generator. (Chanezon did not know offhand what the memory footprint of this entry-level LinuxKit is or how it compares to a running Linux instance underneath Docker Enterprise or any of the other Linux distributions, but that would be neat to know.)
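
To make that layering concrete, here is a minimal Python sketch of how such an image description might be modeled: a kernel, an init layer carrying runc and containerd, and system services that each run in their own container. The class, field names, and image names are illustrative assumptions, not LinuxKit's actual configuration schema.

```python
from dataclasses import dataclass, field


@dataclass
class ImageSpec:
    """Hypothetical description of a minimal, container-only Linux image."""
    kernel: str                                    # kernel image reference (placeholder name)
    init: list = field(default_factory=list)       # low-level pieces that start containers
    services: list = field(default_factory=list)   # system services, one container each


def describe(spec: ImageSpec) -> list:
    """Return the layers in order: kernel, init pieces, then service containers."""
    layers = [f"kernel: {spec.kernel}"]
    layers += [f"init: {name}" for name in spec.init]
    layers += [f"service container: {name}" for name in spec.services]
    return layers


# Placeholder names; the components mirror the ones listed in the article.
minimal = ImageSpec(
    kernel="example/kernel",
    init=["runc", "containerd"],
    services=["dhcp", "rng"],
)

for line in describe(minimal):
    print(line)
```

The point of the structure is that everything above the kernel and the container runtime is just a list of containers, so adding or removing a system service means editing one entry rather than rebuilding a monolithic distribution.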

Because this Linux is only designed to run containers, it has a much smaller attack surface from a security standpoint, not just smaller disk and memory capacity requirements. This added security through boxing things off is probably one of the most compelling features of software containers and was, at a much larger grained level, a key selling point for virtual machines in the datacenter a decade ago when they were first taking off on the X86 architecture.

The file system used for the LinuxKit instance is not writable, so you can’t hack it with malicious code; application data is stored in other file systems that are mounted from the containers where the code is running. Not only does Docker want to sandbox the Linux services in containers to isolate them, but Chanezon says that Linux is too big for any one person or organization to secure alone, so the Linux services have to be sandboxed and then secured by many people working side by side. Containerization of Linux services like that enabled by LinuxKit will help break the security job down into bite-sized pieces while providing a framework for consistency. “We think Linux is too big for any person to secure, so it has to be a community approach,” says Chanezon.

While Docker does not expect that many enterprises and HPC shops will want to build their own Linux instances using LinuxKit, we think that the idea will take off and that companies will get in the habit of securing their most sensitive systems and applications by stripping down to the essentials and containerizing elements of the Linux operating system, just like Docker itself has done for its dozen different Linux distros to support all of those platforms.

At DockerCon this week, Docker is also launching a different effort called the Moby Project, which is a build system for creating a container service. (LinuxKit builds Linux substrates, and Moby lashes a bunch of other tools to the Docker runtime to turn it into a container service.) The Moby service is used internally at Docker to build its platforms, and it is written in the Go programming language like all of the rest of the Docker stack and, not coincidentally, the Kubernetes stack. (Google invented Go and ported the container management elements of its internal Borg container and cluster management tool to Go when it created Kubernetes.) To seed the Moby Project, Docker is contributing over 80 components that it has created for its Docker stack. (Of course, not all Docker elements are open.)

The Moby framework does not require that companies donate their code to use it, since it is a service, not a program that is redistributed. To get it rolling, Docker is putting in the instructions for creating the base Docker Community Edition, which was also launched in March of this year.

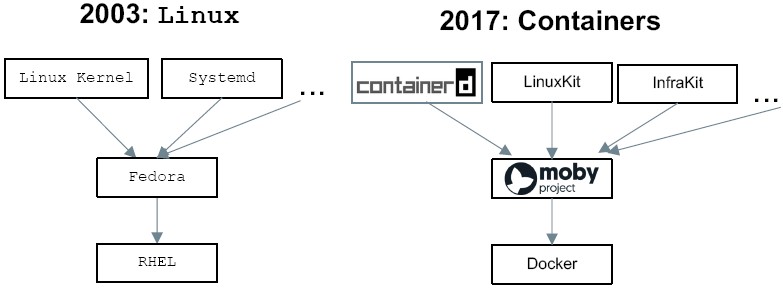

The structure of development at Docker will now look like that set up by Red Hat in the early days of Linux:

In essence, Moby is the build system that creates Docker Community Edition, which is akin to Fedora, and Docker Enterprise is derived from Moby and is akin to Red Hat Enterprise Linux.

Lifting And Shifting

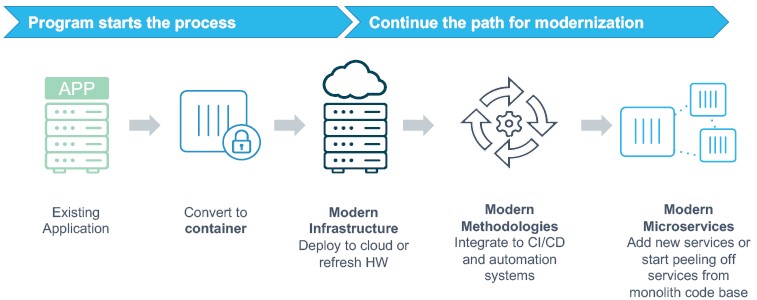

The final bit of interesting news at DockerCon 2017 is that Docker, the company, is teaming up with HPE, Microsoft, Cisco Systems, and Avanade to take on the containerization of legacy applications.

Scott Johnston, senior vice president at Docker, tells us exactly what we expected: Global 2000 customers have somewhere on the order of thousands to tens of thousands of applications, and across these major firms, less than 5 percent of the applications have been containerized so far. While somewhere between 5 percent and 10 percent of the applications that are being containerized are net-new, microservices-style applications that everyone is talking about all the time, the other 90 percent to 95 percent are just lifting and shifting legacy applications from bare metal or virtual machines to containers.

Incidentally, Oracle is one of those lifters and shifters and announced at the Docker confab that its eponymous database plus its Java Development Kit, WebLogic application server, HTTP Server, Coherence caching server, and Instant Client had all been containerized and are now part of the Docker Store. Oracle has not yet containerized any parts of its Fusion application suite, and to our knowledge, SAP has not containerized its applications yet, either. But this seems inevitable. (The code is no doubt already modular.)

Working with the partners above, Docker has come up with a program (marketing, not code) to pull legacy applications into containers, and to do so without changing source code, with a fixed price and statement of work – and to do it in under five days. Companies have been beta testing this program for the past several months. Financial services firm Northern Trust took an internal company address book and servicing application that was running on a Linux virtual machine and lifted it onto containers and moved it out to the Azure cloud. The resulting machine needed half as much compute and memory to run, and new instances of this application could be deployed four times as fast.

In general, says Johnston, customers who move from bare metal or VMs to Docker containers can provision, scale, and deploy applications up to 75 percent faster, and those moving from bare metal to containers can save 50 percent on compute and those who are moving from VMs will save around 25 percent. This is a big deal in an IT environment that has spent a decade doing more with less and now thinks of this as situation normal.
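
As a rough worked example of those figures, the Python sketch below applies the quoted compute savings to a hypothetical fleet. The function name and the capacity numbers are made up for illustration; only the 50 percent and 25 percent rates come from Johnston's remarks.

```python
# Savings rates quoted in the article; everything else here is illustrative.
COMPUTE_SAVINGS = {"bare_metal": 0.50, "vm": 0.25}


def compute_after_migration(units: float, source: str) -> float:
    """Estimate the compute needed after moving `units` of capacity to containers."""
    return units * (1.0 - COMPUTE_SAVINGS[source])


# Hypothetical fleet: 200 bare-metal servers and 400 VM-equivalents of compute.
print(compute_after_migration(200, "bare_metal"))  # 100.0
print(compute_after_migration(400, "vm"))          # 300.0
```

These are the quoted typical rates rather than guarantees, so the output is an estimate for a given shop, not a forecast.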

Nice writeup. Just wanted to point out that systemd did not exist in 2003, its first release was 2010.

Is it just me or is the entire online reporting profession starting to slip with their collective fact checking? I was just viewing a Level One Techs AM4 motherboard review and the reviewer apparently did not do his homework in stating that there were currently no APUs available for the AM4 motherboard platform and that AMD would be releasing some Zen/Raven Ridge APUs this year. Now AMD in fact has had its Excavator/Bristol Ridge APUs that in fact do use the AM4 motherboard platform also, so the online press maybe needs to keep a fact sheet handy at all times for fact checking purposes.

How someone with that level of expertise can make such an omission is very disheartening, and the reviewer made a point to speak about the AM4 motherboard’s Video outputs all while forgetting that AMD began using AM4 motherboards before Zen/Ryzen was available with AMD’s Excavator/Bristol Ridge(consumer) Desktop/Mobile APUs.

Another big mistake with regards to the online press is the usage of AMD’s proper code name/naming nomenclature with respect to its CPUs and their respective CPUs micro-architecture code names/naming nomenclature. As for example the online press referring to Ryzen as if Ryzen is a micro-architecture when Ryzen is in fact a consumer brand name for a line of consumer SKUs that are based on the Zen CPU micro-architecture. So Zen is the proper name for AMD’s latest x86 32/64 bit ISA running micro-architecture just as Excavator is the name of AMD’s previous x86 32/64 bit ISA running micro-architecture. There is also an AMD code name(Zeppelin) for the 8 core/16 thread/2 CCX unit, modular monolithic, Die that is used across the Zen/Ryzen(Consumer) and Zen/Naples(Server) SKUs. So Zen/Ryzen is in fact made up of one Zeppelin Die and the various Fabric IP while the Zen/Naples variants are made up of 4 Zeppelin Dies on a MCM(South bridge included) with Zen/Naples qualifying as a SOC(System on a Chip/System on a module). Also Zen/Naples has that Fabric IP with some extra on MCM server IP that Zen/Ryzen lacks.

I do not entirely blame the online press for some of the confusion. It’s just that AMD has provided its press kits/pamphlets with the expectation that the online technology press is actually 100% technologically competent and AMD is not being pedantic enough with its press materials and maybe AMD needs to produce some primers to give to the press to help avoid some confusion.

P.S. Please do not make the argument that for a processor to qualify as a SOC it needs to have integrated graphics also, as in the Server/HPC/Workstation market an SOC/Module only needs a CPU(processor), northbridge, and a Southbridge on the Die/module to go along with the other usual IP to qualify as a SOC/SOM.