In the high performance computing arena, the stress is always on performance. Anything and everything that can be done to try to make data retrieval and processing faster ultimately adds up to better simulations and models that more accurately reflect the reality we are trying to recreate and often cast forward in time to figure out what will happen next.

Pushing performance is an interesting challenge here at the beginning of the 21st century, since a lot of server and storage components are commoditized and therefore available to everyone. The real engineering is coming up with innovative ways of putting together hardware and then writing a software stack that can make this hardware malleable enough to meet diverse needs. Any vendor that doesn’t do that is going to have a tough time making a profit, and therefore will struggle to meet the needs of a customer base that expands out from HPC into other areas (or, conversely, one that is expanding from the enterprise into HPC, hyperscale, or cloud).

Supporting a diversity of workloads on a single, flexible hardware platform is something that DataDirect Networks has demonstrated with its “Wolfcreek” storage, which the company started telling the world about back in June 2015 and which it started shipping this time last year in base arrays that could run disk-based Lustre and Spectrum Scale (formerly GPFS) parallel file systems. The Wolfcreek platform also runs DDN’s homegrown Web Object Scaler object storage, which had over 200 billion objects under management this time last year across its customer base, blew through 500 billion objects in April, and probably has well north of 1 trillion objects under management now. Wolfcreek is also the hardware underneath the Flashscale all-flash appliances that debuted in May of this year, and it is at the heart of the Intelligent Memory Engine burst buffer, too. While DDN thinks it has created tuned synergies between its hardware and its software, it has also been offering software-only licenses to its file systems and object storage for those who want to run them on the hardware of their choice.

Ahead of the SC16 supercomputing conference in Salt Lake City next week, DDN announced that it has upgraded the Wolfcreek platform to use the latest and greatest processors from Intel. The Wolfcreek chassis is 4U high and has 72 drive bays, which can be loaded up with SAS disks or SAS SSDs or, if you want extra performance, with up to 48 dual-port NVM-Express drives. (There are not enough PCI-Express lanes to have all of the 72 drives in the unit speak NVM-Express at the same time.) Wolfcreek includes a pair of Xeon E5 processors from Intel to run the SFAOS storage operating system for block and file storage and any other software add-ons such as GPFS or Lustre parallel file systems, WOS object storage, or IME burst buffer code.

The original Wolfcreeks shipped with Intel’s “Haswell” Xeon E5 v3 processors, which made their debut in September 2014, but with the upgrade announced this week, the storage hardware is being upgraded to the current “Broadwell” Xeon E5 v4 processors, which pack a lot more cores and therefore can do more work for the storage itself and for the applications that can be run right on the platform, right next to the storage.

“The Broadwell processors unlock the peak potential of the platform,” Laura Shepard, senior director of products and vertical markets at DDN, tells The Next Platform, adding that DDN has also updated SFAOS to a 3.1 release that has scalability and performance tweaks to take advantage of the extra processing as well as for faster network protocols. “Having more cores to play for us does a lot for us in terms of value-added software in SFAOS, the most notable being in-storage processing. The percentage of the aggregate processing that we need is now minimal, thanks to the larger number of cores and software changes. It used to be to embed applications, file systems, and so on required about half of the computational power of the controller. Now, it only takes somewhere between 10 percent and 15 percent of the compute in the node to support the full environment for embedded, down from 50 percent two generations ago, and this is a very big difference for us.”
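To put those percentages in perspective, here is a quick back-of-the-envelope sketch of how much the embedded overhead has shrunk relative to where it started. The function and constant names are ours, made up for illustration, and the only inputs are the figures Shepard cites; it says nothing about absolute core counts.

```python
# Share of controller compute needed to support the embedded environment,
# using only the figures quoted above. All names here are illustrative.
OLD_OVERHEAD = 0.50        # "about half", two generations ago
NEW_OVERHEAD_LOW = 0.10    # low end of the quoted 10 to 15 percent range
NEW_OVERHEAD_HIGH = 0.15   # high end of the quoted range


def relative_reduction(before: float, after: float) -> float:
    """Return the fractional drop from `before` to `after`."""
    return (before - after) / before


best_case = relative_reduction(OLD_OVERHEAD, NEW_OVERHEAD_LOW)
worst_case = relative_reduction(OLD_OVERHEAD, NEW_OVERHEAD_HIGH)

print(f"Overhead reduction: {worst_case:.0%} to {best_case:.0%}")
```

By this arithmetic, the share of the controller consumed by embedded support has fallen by roughly 70 percent to 80 percent.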

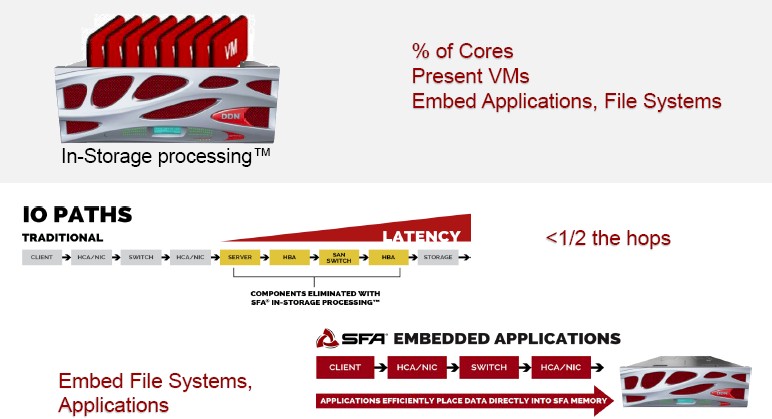

Here is an example of in-storage processing based on a GRIDScaler appliance based on Wolfcreek running the Spectrum Scale parallel file system from IBM:

The important part of that in-storage diagram is the bit in the middle that is hard to read, which shows the overhead from the server, host bus adapters, and SAN switches being eliminated because the application is actually residing on the nodes next to the storage that it is using. The other important bit is that applications can directly place data into the memory of the Wolfcreek nodes without having to reach out across the network to external parallel file systems.

“We have been working on this not just because more cores are available, but because embedded is becoming much more important to our users, on the one hand for the latency reduction and on the other hand because we are servicing more folks in tiny datacenters with very expensive real estate – in financial services and manufacturing, for example. It is beneficial to augment the density with embedded compute. It also helps us break more into the commercial space because they don’t need a team of experts to run a parallel file system, we just put it in an appliance and they load their applications.”

Every generation of server gets two Intel processor generations, thanks to the “tick-tock” model that Intel has of introducing a new microarchitecture in one generation and then a manufacturing process shrink and core count increase in the second one, all with socket compatibility. So the prior generation of SFA12K arrays was based on the “Sandy Bridge” and then “Ivy Bridge” Xeon E5s and Intel’s “Romley” server platform, and the Wolfcreek SFA14K platform uses the Haswell and Broadwell processors and Intel’s “Grantley” server platform. Compute servers follow the same cadence, and we can expect a follow-on to the Wolfcreek based on Intel’s future “Skylake” Xeon E5 chips and their “Purley” server platform, due sometime around the middle of next year if the rumors are right. If history is any guide, DDN will take several months to get Purley up to speed on its software stack. This means that the Wolfcreek arrays have a long life ahead of them yet, and indeed, DDN is still selling and supporting SFA12K platforms and has not announced an end of life for them.

On the Lustre front, DDN will be showing off a new secure parallel file system that makes use of the hardened SELinux and runs Lustre clients in Docker containers to create what it is calling Lustre Multi-Level Security. The more secure Lustre stack is necessary for financial services, oil and gas, life sciences, government, and commercial customers handling large amounts of sensitive data. The setup provides Lustre client node isolation through subdirectory mounts and authenticates both users and nodes using Kerberos. This Lustre MLS setup is a combination of open source and DDN software (subdirectory mounts and I/O optimizations for secure environments) that is put into place using a professional services engagement on top of an EXAScaler Lustre appliance deal. Shepard says that if you tried to create a secure, containerized variant of Lustre yourself from these pieces, it would add about 50 percent latency to the Lustre file system, but that with the tweaks it has done and the approach that it has taken, you can get close to native performance on top of its EXAScalers. (Not everyone will see that big of a jump, of course. Your mileage will vary.)

On the burst buffer front, DDN has been shipping its IME240 burst buffer with 72 drives for quite a while, but some customers have told DDN that they want to run the IME stack on commodity hardware. So in the first quarter of next year, DDN will be shipping a 2U server, based on a Supermicro system, with two dozen NVM-Express flash drives that delivers more than 20 GB/sec of bandwidth. Two of the SAS SSDs in the IME240 are used as system drives, so you are only going to get 22 drives’ worth of capacity. If you have two of these appliances stacked up, that’s 40 GB/sec out of the pair in that 4U space, which is not as good as the 60 GB/sec of throughput that the Flashscale Wolfcreek platform is delivering in the same space.
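The density comparison above is simple enough to check by hand, but here it is as a short sketch. It uses only the numbers quoted in this story, treats the "more than 20 GB/sec" figure as a lower bound, and assumes that two stacked servers scale linearly, which is our simplification and not a DDN measurement. All of the names are made up for illustration.

```python
# Figures quoted in the article; names are illustrative, not DDN's.
SERVER_GBPS = 20.0           # one 2U commodity server (stated lower bound)
SERVER_RACK_UNITS = 2
FLASHSCALE_GBPS = 60.0       # one Flashscale Wolfcreek enclosure
FLASHSCALE_RACK_UNITS = 4
TOTAL_DRIVES = 24            # "two dozen" flash drives per server
SYSTEM_DRIVES = 2            # drives reserved as system drives


def gbps_per_rack_unit(gbps: float, rack_units: int) -> float:
    """Bandwidth density in GB/sec per rack unit."""
    return gbps / rack_units


def stacked_bandwidth(gbps_each: float, count: int) -> float:
    """Aggregate bandwidth, assuming linear scaling (a simplification)."""
    return gbps_each * count


pair_gbps = stacked_bandwidth(SERVER_GBPS, 2)
pair_density = gbps_per_rack_unit(pair_gbps, 2 * SERVER_RACK_UNITS)
flashscale_density = gbps_per_rack_unit(FLASHSCALE_GBPS, FLASHSCALE_RACK_UNITS)
capacity_drives = TOTAL_DRIVES - SYSTEM_DRIVES

print(f"Two 2U servers: {pair_gbps:.0f} GB/sec, {pair_density:.1f} GB/sec per U")
print(f"Flashscale 4U:  {FLASHSCALE_GBPS:.0f} GB/sec, {flashscale_density:.1f} GB/sec per U")
print(f"Capacity drives per 2U server: {capacity_drives}")
```

On these inputs the commodity pair comes in at about two-thirds of the Flashscale bandwidth per rack unit, and that is the trade-off customers make for running IME on hardware of their own choosing.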
