If there is anything that chip giant Intel has learned over the past two decades as it has gradually climbed to dominance in processing in the datacenter, it is ironically that one size most definitely does not fit all. Quite the opposite, and increasingly so.

As the tight co-design of hardware and software continues in all parts of the IT industry, we can expect fine-grained customization for very precise – and lucrative – workloads, like data analytics and machine learning, just to name two of the hottest areas today.

Software will run most efficiently on hardware that is tuned for it, although we are used to thinking of that process in a mirror image, where programmers tweak their code to take advantage of the forward-looking features a chip maker conceives of four or five years before they are etched into its transistors and delivered as a product. The competition is fierce these days, and Intel has to move fast if it is to keep its compute hegemony in the datacenter. That is why at the Intel Developer Forum in San Francisco the company put a new path on the Knights family of many-core processors that will see the company deliver a version of this chip specifically tuned for machine learning workloads.

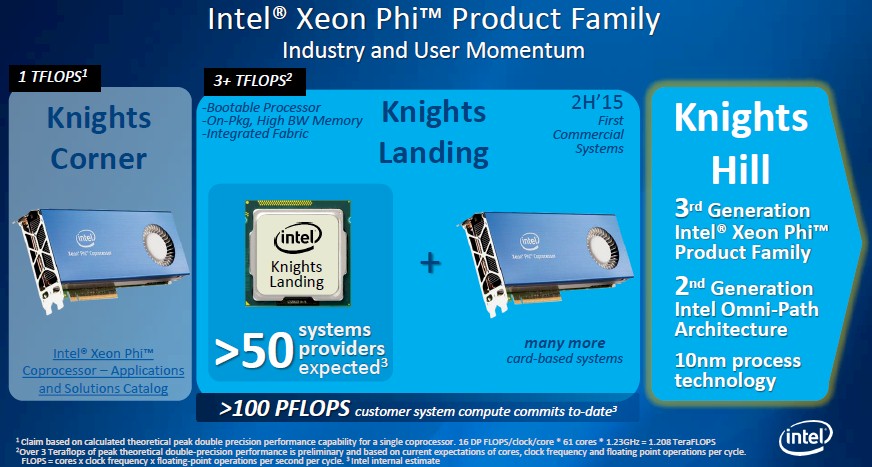

The new device is code-named “Knights Mill” and is not to be confused with the future “Knights Hill” Xeon Phi that is due to come to market in 2018, most likely first in the 180 petaflops “Aurora” supercomputer that Intel is building in conjunction with Cray for the US Department of Energy.

The Knights Mill chip fulfills a prediction that we made when the current “Knights Landing” Xeon Phi processor was formally unveiled back in November 2015, when we pointed out that Nvidia would be supporting half-precision FP16 floating point math in the then-forthcoming “Pascal” Tesla GP100 GPUs, giving a significant boost to the performance of the training of deep learning algorithms. The Knights Landing Xeon Phi chips, which have been shipping in volume since June, deliver a peak performance of 3.46 teraflops at double precision and 6.92 teraflops at single precision, but do not support half precision math like the Pascal GPUs do.

This may not seem like a big deal, but for machine learning as well as for certain image processing and signal processing applications, more data at lower precision actually yields better results with certain algorithms than a smaller amount of more precise data. With a GPU coprocessor or a Knights processor or coprocessor, the dataset is limited by what can fit in the local memory of the device and by the scalability of the application and its framework across multiple devices. Shifting from very high precision data down to lower precision data allows the effective size of the dataset to grow. (More data beats a better algorithm every time, a contention that Google made a long time ago and has demonstrated in many ways.)

So by stepping back and supporting variable precision math on Knights Mill, as Data Center Group general manager Diane Bryant said this future chip would do in her keynote address at IDF, the Knights chips will be able to take on bigger machine learning models within the same physical hardware footprint and we presume a similar (but perhaps slightly higher) thermal footprint.

In unveiling the Knights Mill chip, Bryant did not tip Intel’s cards very much on what it would be. But we can make some guesses, which is fun.

The math units on the current Knights Landing chips are the brawniest ones Intel has delivered to date. The Knights Landing chip has 76 cores on it, each based on a heavily modified “Silvermont” Atom core. To increase the yields on its 14 nanometer manufacturing processes (which have been a headache), Intel only expects 72 of the 76 cores to actually work properly, and in some cases even fewer do, and only 64 or 68 of the cores on the die are activated. The Knights Landing cores have two AVX512 vector processing units, and a tile, which has two Atom cores and four vector units, can process 16 double precision and 32 single precision operations per clock cycle. If you step it back to 16-bit half precision, that would in theory yield 64 half-precision operations per cycle. That half precision effectively doubles the local MCDRAM memory on the Knights Landing card to 32 GB (as far as the machine learning dataset is concerned, since each value takes half the space), and a model can be trained with a larger (if less precise) dataset and yield better results faster. It is probably not 2X faster, but somewhere north of 1X or no one would bother and south of 2X because of the loss in fidelity of the data. But for the sake of some math here, let’s just say it is 2X just like Nvidia does with its Pascal GPUs.
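The memory side of that argument can be sketched in a few lines of Python. This is only an illustration: the 16 GB MCDRAM capacity is inferred from the "doubles to 32 GB" remark, and the names `MCDRAM_BYTES` and `values_that_fit` are made up for the example.

```python
import struct

# Assumption: 16 GB of MCDRAM, inferred from the "doubles to 32 GB" remark.
MCDRAM_BYTES = 16 * 2**30

# struct format codes for IEEE 754 floats: 'd' = double, 'f' = single, 'e' = half.
FORMATS = {"double": "d", "single": "f", "half": "e"}


def values_that_fit(fmt_code, capacity_bytes=MCDRAM_BYTES):
    """Return how many values of the given format fit in the capacity."""
    return capacity_bytes // struct.calcsize(fmt_code)


capacity = {name: values_that_fit(code) for name, code in FORMATS.items()}

for name, count in capacity.items():
    print(f"{name:>6}: {count:,} values")
```

Each step down in precision doubles the number of values that fit in the same memory, which is the sense in which half precision makes the 16 GB behave like 32 GB relative to single precision.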

As we suggested last year, the move to half precision would effectively double the performance of the Knights Landing processor on machine learning workloads that are sensitive to dataset size and memory bandwidth, and in this case, just adding FP16 support would take the current top-end, 72-core Knights Landing Xeon Phi 7290 to 13.8 teraflops at a cost of $6,254, or about $453 per teraflops. While the top-end Tesla P100 coprocessor from Nvidia can deliver 21.2 teraflops at half precision, we estimate that it costs somewhere on the order of $10,500, and that works out to $495 per teraflops. So just by adding FP16 support to the existing Knights Landing, Intel can basically close the performance gap on machine learning workloads that take advantage of half precision floating point.
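The price/performance comparison is simple division, sketched here with the figures quoted above. The Tesla P100 price is the article's own estimate, and the function and variable names are illustrative only.

```python
def dollars_per_teraflops(price_usd, teraflops):
    """Price divided by peak throughput, in dollars per teraflops."""
    return price_usd / teraflops


# Hypothetical FP16 rating for the Xeon Phi 7290 (double its single precision peak).
phi_7290 = dollars_per_teraflops(6254, 13.8)

# Tesla P100 at half precision; the $10,500 price is an estimate, not a list price.
tesla_p100 = dollars_per_teraflops(10500, 21.2)

print(f"Xeon Phi 7290: ${phi_7290:.0f} per teraflops")
print(f"Tesla P100:    ${tesla_p100:.0f} per teraflops")
```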

Now, if Intel could get all 76 cores of the Knights Landing die to work, that would yield another 5.6 percent performance at half precision, to 14.6 teraflops per chip, and if the clock speed could be increased a little, perhaps to 1.7 GHz in a 300 watt thermal envelope, that would add another 13.3 percent performance on top of that, for 16.8 teraflops at half precision. That would get Intel down to $372 per teraflops at half precision – considerably better bang for the buck than the Pascal-based Tesla P100 if our guess about list pricing is correct for the Nvidia chips. The Pascal chips, which run at 300 watts, would still deliver better performance per watt – specifically, 70.7 gigaflops per watt compared to the hypothetical Knights Mill chip based on Knights Landing we are talking about above, which would deliver 56 gigaflops per watt.
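For readers who want to rerun this thought experiment, here is a minimal sketch using the numbers in the paragraph above; the variable names are ours. One caveat: compounding the core and clock uplifts on 13.8 teraflops lands nearer 16.5 teraflops than the 16.8 quoted, so the quoted figure may reflect rounding or another assumption. The cost and power ratios below use the quoted 16.8.

```python
BASE_FP16_TFLOPS = 13.8   # hypothetical FP16 peak of the 72-core Xeon Phi 7290
PRICE_USD = 6254
WATTS = 300               # thermal envelope assumed for both chips in the text

# Step 1: enable all 76 cores instead of 72.
all_cores = BASE_FP16_TFLOPS * 76 / 72

# Step 2: raise the clock from 1.5 GHz to 1.7 GHz.
with_clock = all_cores * 1.7 / 1.5

# Ratios computed from the 16.8 teraflops figure quoted in the text.
QUOTED_TFLOPS = 16.8
usd_per_tflops = PRICE_USD / QUOTED_TFLOPS
knights_gflops_per_watt = QUOTED_TFLOPS * 1000 / WATTS
p100_gflops_per_watt = 21.2 * 1000 / WATTS

print(f"76 cores:        {all_cores:.1f} teraflops")
print(f"76 cores @ 1.7:  {with_clock:.1f} teraflops")
print(f"cost:            ${usd_per_tflops:.0f} per teraflops")
print(f"Knights (hyp.):  {knights_gflops_per_watt:.1f} gigaflops per watt")
print(f"Tesla P100:      {p100_gflops_per_watt:.1f} gigaflops per watt")
```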

But perhaps the real measure that matters is dollars per teraflops per watt, because hyperscalers are concerned about all three vectors – money, performance, and power.

In that case, the Nvidia Tesla P100 card (which is the one that supports NVLink interconnects and has on-package memory with very high bandwidth) costs $1.65 per teraflops per watt. The top-end Xeon Phi 7290 comes in at $1.84 per teraflops per watt, and the slightly less powerful Xeon Phi 7250 comes in at a slightly higher $1.86 per teraflops per watt and the lower-end Xeon Phi 7230 comes in at $1.62 per teraflops per watt. With Intel, you pay a premium for performance density in the Xeon Phi line, and in this case, those are hypothetical half precision numbers and the current Knights Landing Xeon Phi does not support FP16 data storage and processing. But if you made the Knights Mill by tweaking the Knights Landing as we suggest, you end up with a chip that can deliver $1.24 per teraflops per watt, assuming of course that Intel does not try to charge a premium for this deep learning chip.
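The combined metric is price divided by throughput, divided again by power. The sketch below reproduces the two figures whose inputs are all stated in this article: the Tesla P100 and the hypothetical Knights Mill, both at 300 watts. The wattages of the shipping Xeon Phi parts are not given here, so they are left out. The function name is illustrative.

```python
def dollars_per_teraflops_per_watt(price_usd, teraflops, watts):
    """Price normalized by both peak throughput and power draw."""
    return price_usd / teraflops / watts


# Tesla P100: estimated $10,500, 21.2 teraflops FP16, 300 watts.
p100 = dollars_per_teraflops_per_watt(10500, 21.2, 300)

# Hypothetical Knights Mill: $6,254, 16.8 teraflops FP16, 300 watts.
knights_mill = dollars_per_teraflops_per_watt(6254, 16.8, 300)

print(f"Tesla P100:          ${p100:.2f} per teraflops per watt")
print(f"Knights Mill (hyp.): ${knights_mill:.2f} per teraflops per watt")
```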

This is a thought experiment, remember. We are not, mind you, suggesting this is precisely what Intel will do. There could be enough demand for a special machine learning variant of Xeon Phi to change the process technology, the core count, or other aspects of the chip. But we think just adding FP16 support to the die is the easy way to put the pressure on Nvidia, which is still not shipping the Pascal Tesla P100s in volume, although the versions plugging into PCI-Express slots are ramping.

Having said all of that, the chart above shown by Bryant would seem to suggest that the special Knights Mill Xeon Phi chip would yield a significant performance boost, more than what we are showing by goosing the current Knights Landing chip.

The “Knights Corner” chip from 2013 was rated at slightly more than 2 teraflops single precision, and the Knights Landing chip from this year is rated at 6.92 teraflops single precision. If you look at where the Knights Mill name sits above the center of the 2017 date on the X axis and then ricochet over to the Y axis, that looks like something on the order of 3.5X more single precision, or around 24 teraflops at single precision. That would be 48 teraflops at half precision. With the current Knights Landing cores running at a top speed of 1.5 GHz, it would take 252 of those cores to deliver that level of single precision performance. Thus, we have a strong feeling that the chart above is not to scale, or that Intel showed half precision for the Knights Mill part and single precision for the Knights Corner and Knights Landing parts. Even at 24 teraflops half precision, if this is indeed what Intel is expressing in the chart above, this will represent a huge leap in performance, much more than just goosing the yields and the clocks on Knights Landing.
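The 252-core figure follows from scaling the 72-core part by the 3.5X read off the chart, assuming per-core throughput and clock speed stay where they are today. A quick sketch, with the 3.5X treated as an eyeballed estimate and the names chosen for illustration:

```python
KNL_CORES = 72
KNL_SP_TFLOPS = 6.92   # single precision peak of Knights Landing
CHART_SCALE = 3.5      # rough multiplier read off the chart, not an Intel number

# Target throughput if the chart is taken at face value.
target_sp = KNL_SP_TFLOPS * CHART_SCALE
target_hp = 2 * target_sp  # half precision assumed to run at twice the rate

# Cores needed at today's per-core throughput and 1.5 GHz clock.
cores_needed = round(KNL_CORES * CHART_SCALE)

print(f"single precision target: {target_sp:.1f} teraflops")
print(f"half precision target:   {target_hp:.1f} teraflops")
print(f"cores needed:            {cores_needed}")
```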

The question we have is this: What happens if Intel adds FP16 support to a 28-core “Skylake” Xeon E5 processor running at 2 GHz or so?

And how does this intersect or interfere with a future Knights Hill chip implemented in 10 nanometer technologies?

Hmmmm.

Variety Is The Spice Of Datacenter Life

Intel has already committed to speed up the cadence of the Knights family of processors, which like their “Broadwell” Xeon E5 brethren took a bit longer to ramp than Intel had expected. (Like Taiwan Semiconductor Manufacturing Corp and Global Foundries, Intel has had issues with the 14 nanometer node.) And now it is offering variations on the Knights theme, presumably at the behest of the hyperscalers who want an alternative to GPUs and supercomputer centers that want one architecture for analytics, machine learning, and simulation workloads.

With Intel having the broadest and deepest processing portfolio any single vendor has ever brought to bear, and adding to it all the time, it may seem to be an embarrassment of riches in Compute Land these days. But Intel is trying to prevent cracks in the datacenter walls where competitors can get in and take root. Intel can’t fill every possible gap itself, as the acquisitions of FPGA maker Altera back in January for $16.7 billion and of deep learning upstart Nervana Systems for perhaps more than $400 million two weeks ago show. It seems likely that both of these acquisitions will play a big role in Intel’s deep learning efforts.

But Intel is already claiming dominance, and Bryant repeated a statistic that we first heard back at the ISC supercomputing conference in June to demonstrate this.

“It may come as a surprise to some of you – we will see – but we hold the leadership position today,” Bryant said. “Intel processors power 97 percent of all those servers deployed running machine learning workloads, and that includes deep learning. The Intel Xeon E5 processor is the most widely deployed processor for machine learning and deep learning.”

It is true, obviously, that GPUs and FPGAs are deployed as coprocessors these days and their software is constructed to offload massively parallel routines to them, and therefore there are always some Xeons in the mix. But that does not mean that these Xeons represent the bulk of the aggregate performance brought to bear on such work. We will get some clarification on what this 97 percent stat really means.

Bryant tossed out a few other relevant statistics, saying that 7 percent of servers deployed last year supported machine learning workloads, but by 2020, more servers will be running analytics workloads than any other kind of job. Again, we would love to see the pie charts behind these stats with the other workloads outlined and the percent changes and will endeavor to do this.

One of Intel’s biggest clients for machine learning is Baidu, which is not coincidentally one of the key innovators in this field along with Google and Facebook. Joining Bryant on the stage at IDF last week was Jing Wang, senior vice president of engineering at the Chinese search engine giant, who said that Intel’s chips were the underpinnings of its Baidu Duer personal assistant software and its Deep Speech speech-to-text software.

As we have detailed in the past, Baidu is a big user of Nvidia GPUs for training its deep learning algorithms, and it is not a coincidence that Nervana Systems counted Baidu as a big customer. Intel’s acquisitions of Altera and Nervana Systems represent both defensive and offensive maneuvers. They give Intel a story to tell and deprive others who might have acquired these companies of the chance to tell a story that is different from the Xeon and now Xeon Phi. Intel can decide to deploy such technologies as aggressively or not as it sees fit, depending on the changing competitive landscape. If, for instance, Xilinx takes its foot off the gas with FPGAs, then Intel can, too, and potentially sell more Xeon and Xeon Phi processors. If other chip designers working on products specifically to run AI workloads slow down, Intel can be less aggressive with Nervana’s technology. If they speed up, Intel can push FPGAs and Nervana chips harder. And it can pitch everything on the truck against Power chips and Nvidia accelerators.

Baidu doesn’t want to leave the Intel fold if it doesn’t have to, and neither do other hyperscalers or the HPC centers that will be running similar workloads at scale. That is why Wang was singing the praises of the Xeon Phi and is probably looking forward to what Intel will do with Nervana’s technology.

“We are always trying to find new ways of training our neural networks faster,” explained Wang. “A big part of our technique is to use parts that are normally reserved for high performance computing. That has helped us achieve a 7X speedup over our previous system. So experiments that used to take weeks now take days. When it comes to AI, Xeon Phi processors are a great fit in terms of running our machine learning networks. The increased memory size that the Xeon Phi provides makes it easier for us to train our models efficiently compared to other solutions. In testing with Xeon Phi, we found very promising and consistent performance across a wide range of kernel shapes and sizes relevant to the state of the art long and short term memory models.”

(We have to take that one on faith, not having realized neural networks had short and long term memory. . . )

As for what Intel will do with Nervana, Bryant offered an overview, but not a roadmap.

“Their IP as well as their expertise in accelerating deep learning algorithms will directly apply to our advancements in artificial intelligence,” she said. “They have solutions at the silicon level, at the library level, and at the framework level. So bringing together the engineers that create Xeon and Xeon Phi with the talented Nervana Systems engineers and their deep learning engine we can accelerate solutions to you, the developer community, and we plan to continue to make these kinds of investments in leading edge technologies that complement and enhance our artificial intelligence portfolio.”

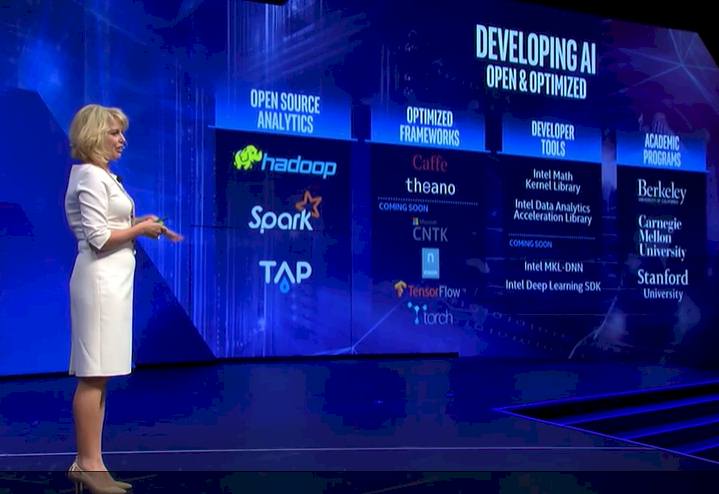

The company will also be tweaking its math and kernel libraries to tune them up for AI algorithms and contributing routines to various analytics and machine learning frameworks so that the technologies that Intel does put in the market – be they Xeon, Xeon Phi, Nervana, or Altera – will be pushing the performance envelope and keeping as many alternatives out of the datacenter as possible.

Instead of FP16, I’d like to see some unum (see Gustafson) hardware developed and given to capable compiler & math libs people. That would be some real improvement.

Machine learning gains its precision from large amounts of data on which it does statistical sampling. So it does not care about how precise underlying floats are, as long as they are somewhere between 0 and 1. That however does not apply well to engineering world, which needs to have a precision and a known error margin with it in order to produce something useful. And since all the buzz in the field is now at machine learning, who will be the voice of old school engineering?

Isn’t it likely that Knights Mill will have some of Nervana’s tech baked into it? I realize they just bought Nervana, but it seems odd that they’d buy Nervana and suddenly release information about a brand new machine learning chip.

I don’t think there is a way to bake anything Nervana is doing into the Phi but what the Nervana folks have figured out are some really interesting software tricks for dense multicore processors. I had the same thought upon hearing about Knights Mill but I really think they’ll bifurcate the two for now. I also suspect there would be huge cost differences in Mill/Nervana if you look at the architecture write-up I did (and the economics of it).

I think you guys should check out Wave:

http://wavecomp.com

If what they claim is true they totally destroy nVidia and Intel

Long Short Term Memory (LSTM) is a particular kind of Recurrent Neural Network model, which deals with sequence data. It’s a name that was assigned in the 90s and doesn’t make too much sense. It only has one kind of memory vector but it can selectively forget things out of that vector (which makes it short term) and remember things over a long sequence.

Chris Olah from Google Brain has a good article on them on his blog (http://colah.github.io/posts/2015-08-Understanding-LSTMs/).

Not really buying it that Intel is competitive in the deep learning space yet.

Intel doesn’t even provide FP16, and researchers could even make use of FP8, which will probably arrive soon enough from Nvidia, because Nvidia knows this is huge business for them, and it’s not a huge stretch to modify their chips to work like that. But I doubt Intel will go that low with its “general purpose processors pretending to be graphics cores”.

Regardless, I think in the end, it’s either Nvidia winning thanks to a strong software ecosystem that it may be creating and ease of use for academics, or it will be a custom chip solution with 10x the efficiency, like Google’s TPU. I don’t think there’s much room for Intel’s general purpose “co-processors”.

Why are you comparing a product coming out in 2017 with a product coming out this year?

Nvidia will have Volta in 2017.

Well, because that is the product we have some details on now, and what we believe Intel is reacting to. Knights Hill is going to be aimed at Volta, and there will no doubt be ML variants of it. But the point is taken. Intel and Nvidia product launches are not in phase–at least not yet.

The early benchmarks are in, and you can beat the snot out of a Xeon Phi KNL with a 1200 USD Titan X in deep learning training these days.

And at the inferencing stage they get even more destroyed due to their support of INT8 instructions (4x improvement).

Feels like Knights Mill is more of a desperate attempt to stay relevant for Intel.

The only area where they could really compete would be where the DL models require more than 128 GB of system memory, in which case even a DGX-1 might get bottlenecked by the PCIe.