The two-socket Xeon server has been the default workhorse machine in the datacenter for so long and to such a great extent that using anything else almost looks aberrant. But there are occasions where a fatter machine makes sense based on the applications under consideration and the specific economics of the hardware and software supporting those applications.

All things being equal, of course companies would want to buy the most powerful machines they can, and indeed, Intel has said time and time again that customers are continuing to buy up the Xeon stack within the Xeon D, Xeon E5, and Xeon E7 product lines. The wonder is that companies do not buy up across the Xeon product lines, especially given the benefits of doing so in terms of saving money, space, power, and cooling. Let’s just say that customers have inertia in their buying habits.

But with the new “Broadwell” family of Xeon E7 v4 processors, customers should perhaps give the bigger iron a second look before just defaulting to a Xeon E5-2600 v4 machine in the same Broadwell generation. A lot depends on the software stack, at least according to Intel’s own analysis. In the wake of the rollout of the Broadwell Xeon E7 chips in June, Intel has shared its own price/performance analysis of the Broadwell Xeon lineup to help customers to assess their options. This analysis explains, in part, why four-socket machines have been so popular in China, where companies are starting with more of a clean slate and do not have the same inertial patterns.

The Intel analysis does not, however, take into account the quad-socket Xeon E5-4600 v4 processors, which were quietly launched a few weeks after the Xeon E7s came to market. As we pointed out a few weeks ago, the Xeon E7-4800 v4 processors offer better bang for the buck at the CPU level than the equivalent Xeon E5-4600 v4 processors, but they use buffered DDR4 memory cards that are more expensive than the plain vanilla DDR4 memory sticks used in the Xeon E5 line. You really need to do a thorough system comparison to be sure you have the right platform for the job. For many HPC and enterprise shops, building out clusters mostly with two-socket Xeon E5-2600s, with a relatively small percentage of nodes based on quad-socket Xeon E5-4600 or Xeon E7-4800 processors, is probably the safest strategy. That way, you get some fat nodes with bigger chunks of compute and memory capacity, as well as more memory and I/O bandwidth.

Get With The Program

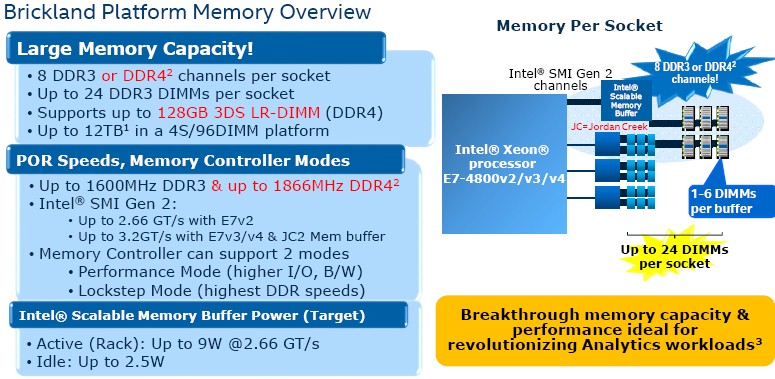

Intel has supported three generations of Xeon E7 processors on the same “Brickland-EX” server platform, which is based on the Socket R1 socket that first supported the “Ivy Bridge-EX” Xeon E7 v2 chips and carried over to the follow-on Haswell and Broadwell chips.

The Brickland platform includes the “Patsburg-J” C602J chipset and the “Jordan Creek-2” scalable memory buffer chip, which can link the processors to either DDR3 or DDR4 memory. All of the server makers we know of are using DDR4 memory here because it runs faster and dissipates less heat. Combined with the fact that the Haswell and Broadwell chips have their voltage regulators integrated on the processor, that means the overall thermal footprint of a big iron server can go down a bit.

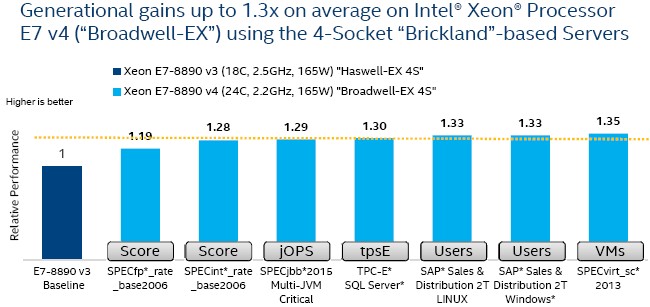

The performance of the new Xeon E7 v4 processors (the 4800 series are for quad-socket machines and the 8800 series are for eight-socket systems) depends on the workload, as is the case for all Xeon processors. Here is how the top-bin Broadwell Xeon E7-8890 v4 processor, which has 24 cores spinning at 2.2 GHz, compares to the top-bin Haswell Xeon E7-8890 v3 chip in a four-socket system:

As you can see, the average performance boost for the top-bin parts is around 30 percent, which reflects a mix of improvements in the Broadwell core and the process shrink from 22 nanometers with the Haswells to 14 nanometers with the Broadwells. Those whose workloads are sensitive to clock speeds probably won’t be looking at the top-bin parts, since they run at slightly lower clocks. For the virtualization, Java, and database workloads that Intel aims the Xeon E7s at, the Broadwell variants show pretty consistent performance gains.
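To see why clock-sensitive shops might pass on the top-bin part, it helps to normalize the roughly 30 percent socket-level gain by core count and clock speed. The sketch below does that arithmetic under stated assumptions: the 1.30 figure is an approximate reading of Intel's average, and the Haswell E7-8890 v3 specs (18 cores at 2.5 GHz) come from Intel's spec sheet rather than from the comparison itself.

```python
# Normalize Intel's ~30 percent average socket-level uplift (E7-8890 v4 vs v3)
# by core count and base clock. Assumption: the v3 part has 18 cores at 2.5 GHz
# (Intel spec sheet, not stated in Intel's comparison); the v4 part has 24 cores
# at 2.2 GHz. The 1.30 speedup is an approximate average, not a measured value.
V3_CORES, V3_GHZ = 18, 2.5
V4_CORES, V4_GHZ = 24, 2.2
SOCKET_SPEEDUP = 1.30


def per_core_ratio(speedup, old_cores, new_cores):
    """Throughput per core of the new part relative to the old part."""
    return speedup * old_cores / new_cores


def per_core_per_ghz_ratio(speedup, old_cores, old_ghz, new_cores, new_ghz):
    """Throughput per core per GHz: a rough proxy for work done per clock."""
    return speedup * (old_cores * old_ghz) / (new_cores * new_ghz)


core = per_core_ratio(SOCKET_SPEEDUP, V3_CORES, V4_CORES)
clock = per_core_per_ghz_ratio(SOCKET_SPEEDUP, V3_CORES, V3_GHZ, V4_CORES, V4_GHZ)
print(f"per-core throughput ratio: {core:.3f}")
print(f"per-core, per-GHz ratio:   {clock:.3f}")
```

With these inputs, each Broadwell core does slightly less work than each Haswell core (a ratio of about 0.975), even though work per clock rises by roughly 11 percent. The socket-level gain comes mostly from having more cores, which is why single-thread-bound workloads may be better served by lower-core, higher-clock bins.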

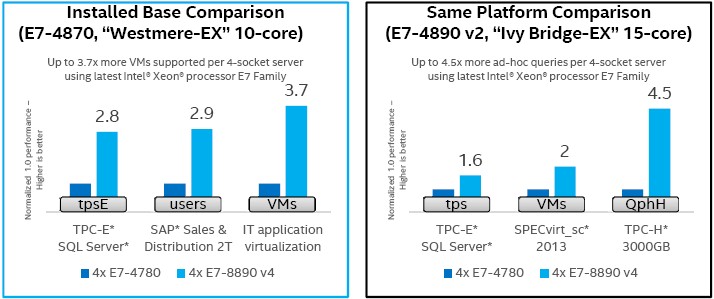

As is always the case when a processor maker puts a new series of motors in the field, the intended target customers are not those who bought systems recently so much as those who bought machines a few generations ago and who are probably due for replacements. To be fair, in enterprise and hyperscale shops with diverse and continually expanding infrastructure, companies often upgrade a chunk of their installed base on an annual cycle, so that every machine gets replaced within a three to four year span. But the marketing message for the Xeon E7s is more about replacing big iron machines that have been in the field for quite some time.

To make the case for a Broadwell Xeon E7 v4 upgrade, Intel took an installed base of 100 servers based on the “Westmere-EX” Xeon E7 v1 processors that debuted back in April 2011. Intel never did a Xeon E7 variant of the “Sandy Bridge” family of Xeons, which is why it has to go back five years to make a comparison. (The Sandy Bridge-EX chips were slated to be delivered in 2012, but instead Intel jumped ahead to the Ivy Bridge-EX chips and promised to support three generations of chips in the Brickland platform.) The 100 servers in the Intel comparison are based on the Westmere E7-4870 v1 processors, which have ten cores running at 2.4 GHz and 30 MB of L3 cache. The Broadwell E7-8890 v4 chips that replace this iron have 24 cores per processor, running at 2.2 GHz and packing 60 MB of L3 cache. Intel uses the SPECint_rate_base2006 integer benchmark to gauge the relative performance of these machines (a reasonably good proxy for relative virtualization, Java, and database performance in the absence of actual tests), and the SPEC integer math shows that it takes only 33 of the four-socket machines using the E7-8890 v4 processors to do the work of those 100 Westmere-EX systems. (Why Intel did not use the cheaper E7-4800 v4 processors is a bit of a mystery.)
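A quick way to sanity check the 100-to-33 consolidation is to compare it with what cores and clocks alone would predict. The sketch below is our own back-of-the-envelope arithmetic, not Intel's method: it uses aggregate cores times base GHz as a crude capacity proxy and treats whatever SPEC shows beyond that as a residual gain from architecture, cache, and memory improvements. The per-socket specs are the ones Intel cites; the function names are hypothetical.

```python
import math

# Crude consolidation arithmetic for the Westmere-EX to Broadwell-EX case.
# Both fleets are four-socket machines; per-socket specs are those Intel cites.
OLD = {"cores": 10, "ghz": 2.4}  # Xeon E7-4870 (Westmere-EX)
NEW = {"cores": 24, "ghz": 2.2}  # Xeon E7-8890 v4 (Broadwell-EX)
SOCKETS = 4


def core_ghz(chip, sockets=SOCKETS):
    """Aggregate cores x base GHz per machine; ignores per-clock improvements."""
    return chip["cores"] * chip["ghz"] * sockets


def machines_needed(old_count, perf_ratio):
    """New machines needed to replace old_count old ones, given new/old throughput."""
    return math.ceil(old_count / perf_ratio)


raw_ratio = core_ghz(NEW) / core_ghz(OLD)  # what cores and clocks alone predict
spec_ratio = 100 / 33                      # implied by Intel's 100 -> 33 result
residual_gain = spec_ratio / raw_ratio     # gain not explained by cores x GHz

print(f"cores x GHz ratio:  {raw_ratio:.2f}")
print(f"SPEC-implied ratio: {spec_ratio:.2f}")
print(f"residual gain:      {residual_gain:.2f}")
print(f"machines on cores x GHz alone: {machines_needed(100, raw_ratio)}")
```

Cores and clocks by themselves suggest a 2.2X per-machine ratio, which would mean replacing the 100 Westmere-EX boxes with 46 Broadwell machines. Intel's SPEC-based figure of 33 implies roughly a 3X ratio, so about a third more throughput per clock is coming from the newer core, caches, and memory subsystem.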

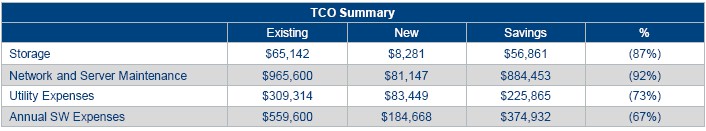

In any event, the four-socket systems in the Intel comparison have eight Ethernet ports running at 1 Gb/sec speeds (which seem a bit slow to us), and the newer boxes have a 1,200 watt active power draw compared to 692 watts for the older iron. Idle power draw, however, is 250 watts on the new Broadwell machines compared to 392 watts for the Westmere gear, and even with a ridiculously low 10 percent utilization on the machines, at 10 cents per kilowatt hour there is a saving of 73 percent on the electricity cost, plus a massive drop in the cost of network and server maintenance and software licensing. This comparison did not include the full cost of the machines, but it looks like Intel was reckoning that a four-socket Xeon E7 v4 machine cost $70,000.
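Intel did not spell out how it turned the active and idle power figures into a 73 percent electricity saving, but a simple time-weighted model reproduces it. The sketch below assumes each machine spends 10 percent of its hours at active draw and the rest at idle draw; that blending is our assumption, and the function name is hypothetical. Note that the price per kilowatt hour scales both fleets equally, so it drops out of the percentage.

```python
# Time-weighted power model: each machine spends `util` of its hours at active
# draw and the remainder at idle draw. This blending is an assumption; Intel
# did not publish its formula, though this model yields the same 73 percent.
HOURS_PER_YEAR = 24 * 365
PRICE_PER_KWH = 0.10  # dollars, as in the Intel comparison


def annual_cost(machines, active_w, idle_w, util, price=PRICE_PER_KWH):
    """Yearly electricity cost in dollars for a fleet of identical machines."""
    avg_w = util * active_w + (1 - util) * idle_w    # average draw per machine
    kwh = machines * avg_w * HOURS_PER_YEAR / 1000   # fleet energy per year
    return kwh * price


old = annual_cost(100, active_w=692, idle_w=392, util=0.10)  # Westmere-EX fleet
new = annual_cost(33, active_w=1200, idle_w=250, util=0.10)  # Broadwell-EX fleet
savings = 1 - new / old
print(f"old fleet: ${old:,.0f}/yr  new fleet: ${new:,.0f}/yr  savings: {savings:.0%}")
```

Each Broadwell box draws more on average (345 watts versus 422 watts for a Westmere box is actually less, thanks to the lower idle draw), and there are only a third as many of them, so the fleet bill falls from roughly $37,000 to roughly $10,000 a year under these assumptions.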

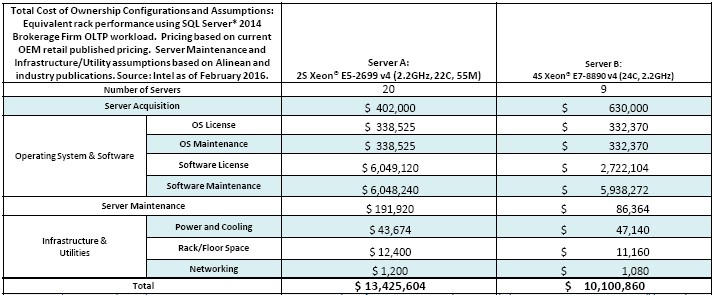

In another comparison, which pitted Xeon E7 machines against Xeon E5 machines running Microsoft’s SQL Server 2014 Enterprise database with a brokerage firm OLTP workload atop Windows Server 2012 R2 Datacenter Edition, Intel was explicit about the hardware and software costs. The Xeon E7 v4 machines were configured with the same 24-core E7-8890 v4 parts, while the Xeon E5 machines were set up with the 22-core E5-2699 v4 chips running at 2.2 GHz. It would take 20 of the Xeon E5 machines to do the same work as the nine Xeon E7 machines. Here is how Intel tallies up the butcher’s bill for the hardware, software, and infrastructure costs:

As you can see, while the Xeon E5 machines are less costly at the hardware level, and the operating system costs are the same, the database software licenses are more expensive. The four-socket machines have about a 25 percent lower total cost of ownership.

This may not be enough to make customers jump from a Xeon E5 to a Xeon E7, but it might be enough to get a 25 percent discount on licensing costs from Microsoft.
