The battle between the Mesos and Kubernetes tools for managing applications on modern clusters continues to heat up, with the former reaching its milestone 1.0 with a “universal containerizer” feature that supports native Docker container formats and a shiny new API stack that is a lot more friendly and flexible than the manner in which APIs are implemented in systems management software these days.

Ultimately, something has to be in control of the clusters and divvy up scarce resources to hungry applications, and there has been an epic battle shaping up between Mesos, Kubernetes, and OpenStack.

Mesos is the open source cluster management tool that hails from the University of California at Berkeley’s AMPLab, which also gave the world the Spark in-memory processing framework. The AMPLab in fact created Mesos originally to provide cluster management for Spark setups, and Mesos was inspired, in part, by the Borg and Omega cluster managers used internally at search engine giant Google. Kubernetes is an open source project that was founded by Google precisely to bring some of the ideas behind Borg to the world, and it is, in many ways, the main competition that Mesos sees these days. For a while it looked like OpenStack might be the uber-controller on which all things hang, at least to some, but at the moment it looks like the OpenStack cloud controller will be just another containerized application that runs on top of Kubernetes. (Never say never in IT, of course.) The irony is that Mesos (which we profiled here) and Kubernetes (which we detailed there) both espouse following the Google Way. In the long run, both will probably survive and provide competitive pressure that is healthy and good.

By reaching its 1.0 milestone, the Apache Mesos project is not saying that Mesos was not production ready until now, Benjamin Hindman, one of the founders of the Mesos project, tells The Next Platform. Hindman was running the AMPLab when Mesos was conceived and, along with techies from Twitter and Airbnb, helped turn what was originally a scheduler for multicore processors into a cluster controller with application frameworks and a sophisticated two-level scheduler. What the 1.0 release of the open source Mesos project signifies is that the underpinnings of the cluster management system have been revamped to make it faster and easier to implement new frameworks on top of Mesos. The API stack is so different and other features are so important that Hindman says it justifies the 1.0 moniker.

“The way that a lot of projects have done APIs in the past is to build libraries that are used for communicating with the system, and that is where we started with Mesos as well,” Hindman explains. “What that meant, though, is that any time a project wants to make a change in the API it would have to change those libraries and get everybody to install them. This was a big pain in the neck for users, a management and programming nightmare, and it made adding a new feature to the API tough and take a long time. So we have decoupled all of that and went straight to an API based on HTTP and RPC. It is not a REST protocol, but it has HTTP as its transport so that as other protocols might evolve in the future, such as gRPC, we would be able to take our objects and use them with that protocol as well.”

This new API is based on JSON documents and protocol buffers, to be specific, and it speaks plain old HTTP so there is no library that needs to be updated as the core API for managing Mesos changes. The new API also has a consistent structure across all API endpoints in the system, whether they are the core API for operating Mesos or the hooks between the various frameworks for Hadoop, Spark, Cassandra, and other workloads running on top of Mesos.
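To make the "plain old HTTP plus JSON" point concrete, here is a minimal sketch of what a framework's first call might look like using nothing but the Python standard library. The master address, user, and framework name are hypothetical, and the endpoint path and field names follow our reading of the Mesos v1 scheduler API; treat them as assumptions to check against the Mesos documentation rather than as a definitive client.

```python
import json
import urllib.request

# Hypothetical master address for illustration only; substitute your own.
MASTER_URL = "http://mesos-master.example.com:5050"


def build_subscribe_request(master_url, user, framework_name):
    """Build (but do not send) a SUBSCRIBE call for the v1 scheduler endpoint."""
    # The call is an ordinary JSON document, so no Mesos client library
    # has to be installed or upgraded on the framework side.
    call = {
        "type": "SUBSCRIBE",
        "subscribe": {
            "framework_info": {"user": user, "name": framework_name},
        },
    }
    return urllib.request.Request(
        url=master_url + "/api/v1/scheduler",
        data=json.dumps(call).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Accept": "application/json",
        },
        method="POST",
    )


# Hypothetical user and framework name.
request = build_subscribe_request(MASTER_URL, "root", "example-framework")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Passing `request` to `urllib.request.urlopen` against a live master would actually send the call. As we understand it, the response to a subscription is a long-lived stream of events rather than a single reply, so a real framework would read it incrementally.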

“This allows us to version the API really well, and this is something that the Mesos community felt really passionate about because a lot of open source projects have not done a great job with API versioning,” Hindman continues. “They have sometimes broken compatibility and not really taken it seriously. We wanted to cut a 1.0 release when we had an API that we could version appropriately and go through proper deprecation cycles. We are going to be able to iterate on features a lot faster now, and it is going to be easier for us to change particular implementations of the API pretty quickly.”

That API talk might be a bit gorpy, but it could turn out to be a key differentiator for Mesos. And so will be the universal containerizer that allows for application software container images in the Docker format to be run natively on the Mesos substrate.

Mesos took the inspiration for its various kinds of compute and storage frameworks from Borg, which assumes that all applications running inside of Google are deployed on top of containers. Mesos got its start on Solaris containers with that variant of the Unix operating system, which was open sourced in 2009, but as Google wove its control groups (cgroups) and namespaces resource container technology into the Linux kernel, ultimately leading to the creation of LXC containers in commercial Linux distributions, the Mesos project adopted its own variant of cgroups and namespaces as a means of isolating different workloads on the same nodes in a cluster. (This is vital if you want to have latency-sensitive and batch processing jobs running side-by-side on the cluster, which is a requirement if you want to drive up utilization on a cluster, as Google has taught us.)

Up until now, if you wanted to run Docker on top of Mesos, you needed to fire up the Docker daemon (a management agent) on the nodes in the cluster. But with Mesos 1.0, frameworks running on top of Mesos can launch either Docker or Appc containers without their respective Docker Engine or rkt daemons. The containers are isolated using Mesos's own isolators. The Cloud Native Computing Foundation, which is where Kubernetes was spun out to by Google a year ago, is distinct from the Open Container Initiative, which is working on a converged container format that will draw on the technologies created by Docker and CoreOS. Docker and CoreOS had some disagreements over container formats but are working them out; Docker has its own eponymous stack and CoreOS sells its own commercial-grade Kubernetes system called Tectonic.
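For a sense of what launching a Docker-format image without the Docker daemon looks like from a framework's point of view, here is a rough sketch of the container description a task might carry. The helper function and the image name are hypothetical, and the field names reflect our understanding of the Mesos `ContainerInfo` message, so treat the exact shape as an assumption.

```python
import json


def mesos_container_with_docker_image(image_name):
    """Return a ContainerInfo-shaped dict for the universal containerizer.

    The container type is MESOS (not DOCKER), so the Mesos containerizer
    runs the task itself; only the image is in Docker format.
    """
    return {
        "type": "MESOS",
        "mesos": {
            "image": {
                "type": "DOCKER",
                "docker": {"name": image_name},
            }
        },
    }


# Hypothetical image name for illustration.
container = mesos_container_with_docker_image("library/redis")
print(json.dumps(container, indent=2))
```

The point of the sketch is the split between container type and image type. On the agent side, our understanding is that Docker image support has to be switched on through agent settings for the image provider and the relevant isolators; check the Mesos containerizer documentation for the exact flags.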

Hindman also tells us that the Mesos community is pondering how to natively support virtual machine formats (our guess is those compatible with the KVM hypervisor that is popularly deployed with Linux and OpenStack) to obviate the need for running OpenStack at all on top of Mesos or any other Borg-like cluster management system. There is also some talk about nested containerization, which would allow different versions of Docker images and maybe even Docker daemons to be run on the same cluster, for instance.

The other big tweak for Mesos 1.0 is that containers running on hybrid CPU-GPU clusters can see GPU resources and schedule work on machines that have GPUs. The initial support allows Mesos to wrap around the CUDA programming environment from Nvidia and dispatch work to its Tesla family of GPU coprocessors, but over time this can be extended to other GPUs and software stacks, such as AMD FireStream and FirePro cards and the OpenCL environment. The architecture that allows for this hybrid computing support could be extended to FPGA and DSP accelerators, too. The GPU support can have file system isolation or not, meaning it can dispatch work to imageless containers or to ones running a Docker image. The Mesos 1.0 update has initial beta support for running atop Windows Server, with full support for Windows Server 2016 as a replacement for Linux as a substrate expected by late this year. Windows Server 2016 is expected to debut in October of this year.
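As an illustration of how GPUs surface to a framework, the sketch below builds a task's resource list with GPUs expressed as one more scalar resource next to CPU and memory. The helper name and the values are hypothetical; the `gpus` resource name and the `GPU_RESOURCES` framework capability follow our reading of the Mesos GPU support documentation and should be treated as assumptions.

```python
def gpu_task_resources(cpus, mem_mb, gpus):
    """Build a resource list for a task that needs whole GPUs."""
    # Assumption: GPUs are handed out in whole units, so reject fractions.
    if gpus < 0 or gpus != int(gpus):
        raise ValueError("gpus must be a non-negative whole number")
    return [
        {"name": "cpus", "type": "SCALAR", "scalar": {"value": cpus}},
        {"name": "mem", "type": "SCALAR", "scalar": {"value": mem_mb}},
        {"name": "gpus", "type": "SCALAR", "scalar": {"value": int(gpus)}},
    ]


# Hypothetical framework description that opts in to receiving GPU offers.
framework_info = {
    "user": "root",
    "name": "gpu-example",
    "capabilities": [{"type": "GPU_RESOURCES"}],
}

resources = gpu_task_resources(cpus=1.0, mem_mb=1024, gpus=1)
print(resources)
```

Keeping GPUs in the same resource model as CPU and memory is what lets the existing two-level scheduler make offers for them without a separate mechanism.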

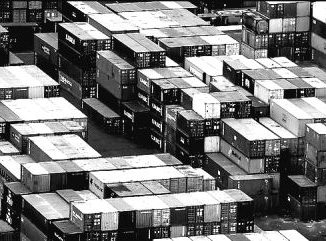

One thing that Mesos 1.0 is not stressing, by the way, is scalability and stability, given that the largest Mesos cluster at Twitter has over 33,000 nodes and that many customers running the commercial DC/OS variant of Mesos from Mesosphere are scaling up to several tens of thousands of nodes, too.

What the Mesos community, and therefore also Mesosphere, are looking to work on now is fit and polish, to make the Day Two experience a good one. Some applications that have had frameworks running on Mesos for a long time run well, such as Hadoop, Spark, Kafka, and Cassandra, but other applications in the enterprise, particularly stateful ones that have their data tied very tightly to them, need extra care to be implemented on Mesos. In the long run, the Apache Mesos community and Mesosphere alike want to get as many applications as possible, and their respective frameworks, running smoothly on top of Mesos, to give customers the seamless installation and operation experience that is the whole point of containerization in the first place.

Now that Mesos has done its blockbuster 1.0 release, you might be thinking that the pace of innovation will slow. Hindman says that it will not slow so much as change. Up until now, the Mesos community was dropping an updated release every two months or so, which is a pretty tough pace to keep up with but one commensurate with a new and fast-evolving open source project. (Think about the early days of Linux and OpenStack, for two good examples.) The cadence for Mesos releases could settle down to six months, as has happened with commercial Linux and OpenStack updates, and we think it might even fall into the same April-October pattern that both now have. As for the next major release, it could come as soon as a year from now, according to Hindman, because there are a lot of features in the works to better handle multitenancy and better allocate resources in the cluster for diverse workloads running simultaneously. This last bit is tricky, and IBM has been stepping up with its expertise to help out here, and Microsoft will pitch in, too, we think. No one expects Google, which has plenty of smarts here, to help out Mesos. Google is all about Kubernetes.
