Just because Intel doesn’t make a lot of noise about a product does not mean that it is not important for the company. Rather, it is a gauge of relative importance, and with such a broad and deep portfolio of chips, not everything can be cause for rolling out the red carpet.

So it is, as usual, with the Xeon E5-4600 processors, the variant of Intel’s server chips that has some of the scalability attributes of the high-end Xeon E7 family while being based on the workhorse Xeon E5 chip that is used in the vast majority of the servers in the world.

The E5-4600 processors, which have just been updated to the “Broadwell” cores and their 14 nanometer processes, are meant to allow for more affordable and more dense four-socket Xeon systems than is possible with the E7-4800 chips, which do not use normal memory sticks but, like other high-end machines with large memory capacity, have buffer chips interspersed between the memory controller on the processor and the DRAM on the DIMMs. The bandwidth between the sockets is lower on the E5-4600 than it is on the E7-4800, with the former having only two QuickPath Interconnect (QPI) ports per socket compared to three QPI ports for the latter.

In essence, the E5-4600 is a variant of the E5-2600 that has a slightly different NUMA configuration. With the E5-2600, the two QPI links on the processor are cross-coupled, providing high bandwidth and low latency between the two sockets, and offering near linear scaling across the compute and memory. For all intents and purposes, the two-socket machine looks like a massive single processor to the systems software. With higher levels of NUMA, it can take two, three, or more hops between sockets for data to be moved. With the E5-4600s, the four chips are linked in a ring, with each processor directly linked to its two adjacent processors but needing two hops to reach the fourth. This adds some latency in memory access across the NUMA cluster, which is important for some (but certainly not all) workloads. With the Xeon E7-4800s, each chip has three QPI links, so the four chips in a quad-socket box again are tightly linked and it only takes one hop to get from one socket to any other. (With the E7-8800, two rings of quad sockets are cross-connected and a quarter of the time again it takes two hops to get from one socket to the other, but three quarters of the time it only takes one hop or no hop at all because you hit the target right on the first try.)
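The hop counts for the two four-socket layouts can be checked with a small breadth-first search. This is only a sketch: the socket numbering and the `ring` and `full` adjacency maps are illustrative assumptions that follow the description above (two QPI links per socket in a ring, three per socket when fully connected), not a dump of any real system topology.

```python
from collections import deque


def hop_counts(links, start):
    """Breadth-first search: minimum number of QPI hops from `start` to each socket."""
    hops = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in links[node]:
            if neighbor not in hops:
                hops[neighbor] = hops[node] + 1
                queue.append(neighbor)
    return hops


def average_remote_hops(links):
    """Mean hop count over all ordered pairs of distinct sockets."""
    total = 0
    pairs = 0
    for source in links:
        for target, hops in hop_counts(links, source).items():
            if target != source:
                total += hops
                pairs += 1
    return total / pairs


# Assumed layout: four sockets in a ring, two QPI links each (E5-4600 style).
ring = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}

# Assumed layout: four sockets fully connected, three QPI links each (E7-4800 style).
full = {s: [t for t in range(4) if t != s] for s in range(4)}

print("ring, from socket 0:", hop_counts(ring, 0))
print("full, from socket 0:", hop_counts(full, 0))
print("average remote hops (ring):", average_remote_hops(ring))
print("average remote hops (full):", average_remote_hops(full))
```

In the ring, one of the three remote sockets is always two hops away, so the average remote access costs about 1.33 hops, against exactly one hop in the fully connected layout.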

All this hopping around is what makes NUMA systems work – and we will not get into the quasi-NUMA functionality going on in the rings of cores on a single processor, but this is all getting very fractal as we scale with cores instead of clocks to boost throughput performance.

The interesting bit is that Intel is delivering more aggregate compute in the E5-4600s than it is doing in the E7-4800s, and it is charging a lot more for it than it does for the E7-4800s. We are not sure what the overall system price would be for systems using one or the other processor, but once those fat memory cards with their buffer chips are taken into account with the E7-4800s, it could be that the overall cost for the E5-4600 system is still lower. We know for sure that the compute density is higher with the E5-4600 because it does not need those fat memory cards but just the same kind of normal DDR4 memory sticks for the Xeon E5-2600 processors that are used in two-socket machines.

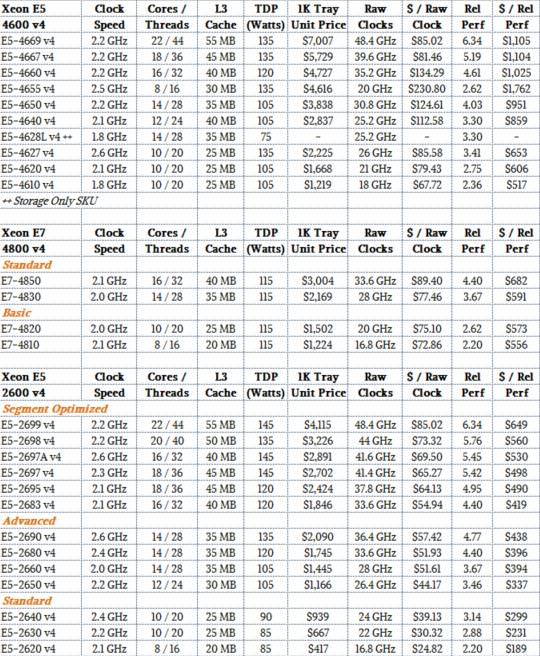

Take a look at how the Broadwell E5-4600 chips stack up against their E7-4800 and E5-2600 brethren:

This comparison above only shows the relevant parts of the E5-2600 and E7-4800 lines, but you can see our full analysis of the Broadwell Xeon E5-2600s at this link and for the Broadwell E7-4800s and E7-8800s here. Our point is that Intel is charging a pretty hefty premium, at the CPU level, for the E5-4600 processors. Some of this higher cost is probably due to the relatively low demand (and therefore low manufacturing volumes), but some of it is because customers who want four-socket machines that can scale up to 48 memory slots and up to 3 TB using 64 GB memory sticks are willing to pay a premium for the extra compute and therefore memory scalability compared to the Xeon E5-2600 workhorses.

In the table above, relative performance is reckoned against the top-bin standard part in the “Nehalem” Xeon 5500 processor that launched in March 2009, marking the beginning of the current Xeon server chip architecture. That Nehalem Xeon E5540 processor had four cores and eight threads running at 2.53 GHz with 8 MB of L3 cache memory – a relatively puny processor by today’s standards. To gauge relative performance within generations, we multiply the core count by the clock speed, and then adjust this by the instruction per clock (IPC) improvements that Intel has said it has attained for each generation of Xeon cores. The top-bin Broadwell Xeon E5-4669 v4 chip has 22 cores and 44 threads running at 2.2 GHz, which is roughly five times as much raw throughput in terms of clocks, and when you add in the IPC improvements, the performance per socket is on the order of 6.3X compared to that Nehalem E5540. (Interestingly, the L3 cache is 6.9X as fat, scaling more or less with our relative performance metric, which stands to reason.)
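As a rough illustration of that metric, the sketch below computes the raw clock ratio from the core counts and clock speeds quoted above, then backs out the cumulative IPC factor implied by the 6.3X figure. The implied factor is derived from this article's numbers only; it is an assumption for illustration, not a figure published by Intel, and the function name is made up here.

```python
def raw_clocks(cores, ghz):
    """Aggregate raw clocks: core count multiplied by clock speed in GHz."""
    return cores * ghz


baseline = raw_clocks(4, 2.53)   # Nehalem Xeon E5540: 4 cores at 2.53 GHz
top_bin = raw_clocks(22, 2.2)    # Broadwell Xeon E5-4669 v4: 22 cores at 2.2 GHz

raw_ratio = top_bin / baseline

# Relative performance per socket as quoted in the text.
REPORTED_RELATIVE_PERF = 6.3

# Cumulative IPC uplift implied by the two figures (derived, not an Intel number).
implied_ipc_uplift = REPORTED_RELATIVE_PERF / raw_ratio

print(f"raw clock ratio:    {raw_ratio:.2f}x")
print(f"implied IPC uplift: {implied_ipc_uplift:.2f}x")
```

The raw ratio works out to about 4.78, which is the "roughly five times" above, and the gap between that and 6.3X corresponds to a cumulative IPC gain of roughly 1.32X across the generations.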

There are no easy like-for-like comparisons between the Xeon E5-4600 and E7-4800 processors, as you can see from the table above. But if you do the price/performance math, the E5-4660 v4 with 16 cores running at 2.2 GHz costs $1,025 per unit of relative performance, a 50 percent premium over the E7-4850 v4, which has 16 cores running at a similar 2.1 GHz and costs a mere $682 per unit of relative performance. The 14-core E5-4650 v4 carries a 61 percent premium over its closest cousin in the E7 line, which is the E7-4830 v4. Interestingly, the bang for the buck of the E5-4620 v4 and the E7-4820 v4, which have ten cores each and about the same performance, is about the same. (There is a slight premium for the E5-4600 variant, around 6 percent.)
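For readers who want to reproduce the premium figure, here is a minimal sketch using only the two cost-per-unit-of-performance numbers quoted above. The helper name `premium_pct` is ours, chosen for illustration.

```python
def premium_pct(cost, reference_cost):
    """Percentage by which `cost` exceeds `reference_cost`."""
    return (cost - reference_cost) / reference_cost * 100


# Dollars per unit of relative performance, as quoted in the text.
e5_4660_v4 = 1025
e7_4850_v4 = 682

print(f"E5-4660 v4 premium over E7-4850 v4: {premium_pct(e5_4660_v4, e7_4850_v4):.1f}%")
```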

In general, the E5-4600 v4 processors carry a bigger premium over the E5-2600 processors used in two-socket machines, and in a sense, that premium is solely for the higher NUMA scalability (and potentially higher memory capacity) engendered in the former. For some workloads, having more memory capacity and bandwidth is more important than the absolute highest possible core count, and obviously you can get twice as much memory (both capacity and bandwidth) in a four-socket E5-4600 server compared to an E5-2600 system. To be specific, that is 48 memory slots with the quad compared to 24 memory slots with the duo, and with relatively cheap 32 GB sticks, that is 1.5 TB versus 768 GB; 64 GB memory sticks are more expensive and not widely deployed, and the newly shipping 128 GB sticks are as pricey as gold and not even remotely a volume product. Our point is, sometimes it makes more sense to have a four-socket server for memory expansion, not compute. This has certainly been the case for quite some time in the HPC market, and is increasingly the case with in-memory workloads like Spark or SAP HANA.
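The capacity arithmetic is simple enough to sketch. The slot counts and stick sizes below are the ones quoted in this article; the function and dictionary names are illustrative.

```python
def capacity_gb(slots, stick_gb):
    """Total memory capacity in GB for a fully populated machine."""
    return slots * stick_gb


SLOTS = {"two-socket E5-2600": 24, "four-socket E5-4600": 48}

for stick_gb in (32, 64):
    for system, slots in SLOTS.items():
        total = capacity_gb(slots, stick_gb)
        print(f"{system} with {stick_gb} GB sticks: {total} GB ({total / 1024:g} TB)")
```

With 32 GB sticks this gives 768 GB and 1.5 TB, and with 64 GB sticks the four-socket machine reaches 3 TB.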

That memory expansion carries a pretty steep price, however, depending on how you look at it.

Take the top-bin parts as the extreme example, where a user wants to scale up compute and memory capacity uniformly. The 22-core E5-2699 v4 costs $4,115 each when bought in 1,000-unit trays from Intel, and two of them cost $8,230. To have double the compute and memory scale, you need to buy four E5-4669 v4 processors, which would cost $28,028 at list price of $7,007 a pop. The doubling of the processors would cost $16,460 if pricing were consistent across the Xeon E5 v4 chips, which means the remaining $11,568 – 41.3 percent of the cost – is embodied solely in the potential for more memory capacity and bandwidth.

The other way to look at this is to hold the core counts constant (or relatively so, as the Xeon E5 v4 product line permits) and see what the cost of adding sockets and therefore memory capacity comes to. In this case we will take a pair of 20-core Xeon E5-2698 v4 chips, which cost $3,226 each for a total of 40 cores for $6,452. To get the same core count across four (rather than two) sockets, and therefore be able to have twice the memory capacity and bandwidth, at roughly the same clock speed and L3 cache in aggregate, you would pick a quad of ten-core E5-4620 v4 chips, which cost $1,668 each for a total of $6,672. That’s a memory premium of only $220, or 3.4 percent.
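Both comparisons can be reproduced from the list prices quoted above. The sketch below assumes those 1,000-unit tray prices and nothing else, and its variable names are illustrative. Note that the two percentages use different bases, as in the text: the first is a share of the four-socket bill, the second is relative to the two-socket bill.

```python
# List prices per chip as quoted in the text (1,000-unit tray pricing).
E5_2699_V4 = 4115   # 22 cores, two-socket part
E5_4669_V4 = 7007   # 22 cores, four-socket part
E5_2698_V4 = 3226   # 20 cores, two-socket part
E5_4620_V4 = 1668   # 10 cores, four-socket part

# Case 1: double both compute and memory (four top-bin chips instead of two).
four_socket_cost = 4 * E5_4669_V4
consistent_cost = 4 * E5_2699_V4   # what four chips would cost at E5-2600 pricing
scaling_premium = four_socket_cost - consistent_cost
scaling_share = scaling_premium / four_socket_cost * 100

# Case 2: hold the core count at 40 and spread it over four sockets.
two_socket_cost = 2 * E5_2698_V4
quad_cost = 4 * E5_4620_V4
memory_premium = quad_cost - two_socket_cost
memory_premium_pct = memory_premium / two_socket_cost * 100

print(f"case 1: ${scaling_premium:,} premium, {scaling_share:.1f}% of the four-socket cost")
print(f"case 2: ${memory_premium:,} premium, {memory_premium_pct:.1f}% over the two-socket cost")
```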

Clearly, the socket and memory scalability of the E5-4600s depends on which chips you compare. So do the math before you buy.

If you want to compare the Broadwell Xeon E5-4600 v4 family to the three prior generations of E5-4600s, we have a detailed analysis of these earlier chips here. Generally speaking, the Broadwell versions of these processors have the same price, SKU for SKU, and run at a slightly lower clock speed (100 MHz or 200 MHz) but with a few more cores that provide somewhere in the neighborhood of 25 percent more throughput once the IPC improvements moving from Haswell to Broadwell cores are thrown in.

As we have pointed out before, we think the Broadwells will be the last generation of Xeon processors where Intel makes distinctions between Xeon E5-2600, E5-4600, E7-4800, and E7-8800 processors. From the looks of things, Intel is fixing to have a unified Xeon line that allows scaling from two to four to eight processors in a single family, with the future “Skylake” Xeons coming next year, radically simplifying its products. Intel may not roll out these devices all at once mind you. Or, then again, maybe it will.

All we know is 2017 is going to be a lot of fun for Xeon systems, with a modified architecture that includes 3D XPoint memory and integrated Omni-Path interconnects, too, thanks to the future “Purley” platform.

Raw clock metric is meaningless. Why don’t you tell us CPI/core?

Thanks.

How is $ / Raw Clock the same for the 2699 and the 4669, when everything used to compute it is the same except the price, which is quite different? It appears your table contains a copy-and-paste fail.