The past decade or so has seen some really phenomenal capacity growth and similarly remarkable software technology in support of distributed-memory systems. When work can be spread out across a lot of processors and/or a lot of disjoint memory, life has been good.

Pity, though, the poor application that needs access to a lot of shared memory or that could use the specialized, and so faster, resources of local accelerators. For such, distributed memory just does not cut it, and having to send work out to an IO-attached accelerator chews into much of what would otherwise be an accelerator’s advantages. With this in mind, a number of companies – AMD, ARM Holdings, Huawei Technologies, IBM, Mellanox Technologies, Qualcomm, and Xilinx – have thrown their lot together and created a consortium to work the architecture of a Cache Coherent Interconnect for Accelerators (CCIX).

Yawn. How esoteric can we get, right? No, really. This is an important step forward in systems design. Rather than having scads of identical (a.k.a. homogeneous) processors sharing the memory of traditional cache-coherent shared-memory symmetric multi-processor (SMP) and non-uniform memory access (NUMA) systems, that same system becomes a mix of different types of processors (a.k.a. heterogeneous) – call some of them accelerators if you like – all with access to the common memory.

With the CCIX architecture being worked, rather than farming out the work to IO-attached accelerators, these same accelerators become peers with the processors. Being a peer in this sense does not require that all processors share the same instruction set; they only need to speak the same language when accessing the shared memory. The rapid data sharing found in SMPs becomes available to local accelerators as well.

And, better, if those same processors are capable of understanding the higher level addressing model of the others, complex objects residing in memory, objects containing those addresses, can be produced by one type of processor and directly consumed by another. Fast. Fast and easy.

More gobbledygook? Yeah, maybe, but hear me out. I’ll try to explain. Allow me to dig down a tad and outline the advantages while also outlining what this really means.

If you have read some of my articles in The Next Platform, you know that I tend to start by pulling together the pieces. The place to start with the CCIX architecture is with an outline of the notion of a processor’s cache, and then into the meaning and support of cache coherence.

But I will preface this by observing that the essence of cache coherence is that, no matter how many copies of data might reside in processor caches, from the point of view of some application accessing that data, and no matter from what processor the access is occurring, there appears to be just one instance of that data. If your application wants some data, no matter the location – whether in an SMP’s DRAM or in a processor’s cache, or now in some notion of an accelerator – the system will provide your application the current version of that data and provide it quickly. Although it is not physically the case, cache coherence allows a lot of processors to share a common memory, allowing applications to perceive data as though every access were from this shared memory. Shared “memory” here is not just the system’s DRAM, but also the whole of the system’s cache.

A Tad Bit Of Cache Theory

For most of us, our mental picture of a processor is that it executes instructions found in memory, accesses data in that same memory, and occasionally changes that same data. Good enough for a lot of stuff. It happens also to be the basic storage model used by most programming languages.

But also true is that the processor cores don’t really access the system’s DRAM. What? Yup, the processor cores access the data and instructions in their cache, not the DRAM. The processor’s sub-nanosecond cycle times would be of no particular benefit if that were not the case. The cache, which temporarily holds data blocks originally residing in the DRAM, can be accessed at processor speeds; the DRAM can take hundreds of times longer. We see the core’s multi-gigahertz frequency benefit because the data needed by these cores really is frequently found in the local cache. If the data being accessed by a core did not happen to reside in its cache, a cache controller initiates a memory access to feed the cache and only then does the processor tend to wait.
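To put rough numbers on why the hit rate matters so much, here is a small sketch. The 0.5 ns cache time and 100 ns DRAM time are assumptions chosen only to match the “hundreds of times longer” ratio above; they are not measurements of any particular system, and `average_access_ns` is a name made up for this illustration.

```python
# Assumed latencies, for illustration only (not measured on any real system).
CACHE_NS = 0.5    # a cache hit at roughly processor speed
DRAM_NS = 100.0   # a DRAM access, about 200 times slower


def average_access_ns(hit_rate, cache_ns=CACHE_NS, dram_ns=DRAM_NS):
    """Average time per access when a fraction hit_rate is served by the cache."""
    return hit_rate * cache_ns + (1.0 - hit_rate) * dram_ns


for hit_rate in (0.90, 0.99, 0.999):
    print(f"hit rate {hit_rate:.1%}: {average_access_ns(hit_rate):.2f} ns per access")
```

Even under these made-up numbers, a small drop in hit rate quickly pushes the average toward DRAM speed, which is the point of the paragraph above.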

That data block mentioned above happens to be, as examples, 32 or 128 bytes in size and aligned in memory on that same boundary. Practically every read or write access of DRAM memory is of such a block size. It does not matter that a program is changing, for example, only a 4-byte integer; if that data block is not in the processor core’s cache, the entire data block is read into the cache and later – perhaps much later – written back from cache into memory, all without a program’s involvement. Again, the processor cores access their data primitives – like 4-byte integers – from the cache; the cache controllers access the memory using larger data blocks.
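As a minimal sketch of what “aligned on that same boundary” means, the following splits a byte address into the address of its containing block and the offset within that block. The 128-byte block size is one of the sizes mentioned above; the address `0x1234` and the function names are hypothetical.

```python
BLOCK_SIZE = 128  # bytes; assumed here, and must be a power of two


def block_base(addr, block_size=BLOCK_SIZE):
    """Real address of the first byte of the block containing addr."""
    return addr & ~(block_size - 1)


def block_offset(addr, block_size=BLOCK_SIZE):
    """Position of addr inside its block."""
    return addr & (block_size - 1)


addr = 0x1234  # hypothetical address of a 4-byte integer
print(hex(block_base(addr)), block_offset(addr))
```

Touching the 4-byte integer at that address pulls in the whole 128-byte block starting at `block_base(addr)`.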

For example, consider this short MIT Scratch animation in which the same data block – originally residing in DRAM – is modified multiple times before it later ages out of the cache and back into the DRAM:

As this progresses, the current data – here a simple 4-byte integer within a block – is residing in the cache, not in memory, until the block containing this integer is flushed out of the cache at hardware’s discretion.

The processor, at the time that it begins the access, does not know the location of the data it intends to access. All the core knows is that it has a real address representing the data, a real address representing the location of the data in the DRAM. But because each cached data block is tagged with a real address, the cache is able to respond first saying to the core “Hey, I’ve got the data that you need.” If not, the cache controller tells the core “Hey, hold on a moment, I’ll find and access your data for you using your real address.”
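The hit-or-miss exchange just described can be sketched as a toy model. This assumes a 128-byte block, a cache that never runs out of room, and a write-back that only happens on an explicit `flush`; real hardware decides eviction on its own, and the `Cache` class and its method names are invented for this illustration.

```python
BLOCK_SIZE = 128  # bytes; assumed block size


class Cache:
    """Toy write-back cache whose lines are tagged with real block addresses."""

    def __init__(self, dram):
        self.dram = dram   # block address -> bytes, standing in for DRAM
        self.lines = {}    # tag (block address) -> bytearray held in cache
        self.hits = 0
        self.misses = 0

    def _line(self, addr):
        tag = addr & ~(BLOCK_SIZE - 1)
        if tag in self.lines:
            self.hits += 1      # "Hey, I've got the data that you need."
        else:
            self.misses += 1    # "Hold on, I'll fetch the whole block."
            self.lines[tag] = bytearray(self.dram.get(tag, bytes(BLOCK_SIZE)))
        return self.lines[tag], addr - tag

    def read_u32(self, addr):
        line, off = self._line(addr)
        return int.from_bytes(line[off:off + 4], "little")

    def write_u32(self, addr, value):
        line, off = self._line(addr)
        line[off:off + 4] = value.to_bytes(4, "little")

    def flush(self):
        """Write every cached block back to DRAM and empty the cache."""
        for tag, line in self.lines.items():
            self.dram[tag] = bytes(line)
        self.lines.clear()


dram = {}
cache = Cache(dram)
cache.write_u32(0x1234, 1)   # miss: block 0x1200 is brought into the cache
cache.write_u32(0x1234, 2)   # hit: modified in cache only
print(cache.read_u32(0x1234), 0x1200 in dram)
cache.flush()                # only now does DRAM see the change
```

Until the flush, the current value of the integer exists only in the cache, which is the situation the single-core animation illustrates.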

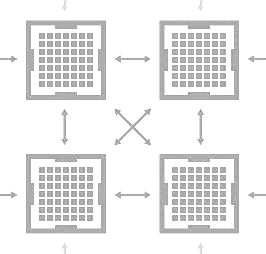

You know, though, that multi-core systems are pretty much the norm now. So in this next animation, we see two processors, each with their own cache, both sharing the same memory. Both processors are changing the same memory block, in this case a single 4-byte integer of that larger block, both processors using the same real address. Because an application, executing anywhere, expects to see a single coherent view of that data, as each change is made, the data block effectively moves – it gets pulled – from cache to cache. No DRAM access is needed since the hardware determines that the current version of that data is in a cache, not at the real-addressed location in the DRAM:

As each core wants to store its data, it realizes that it does not have the needed data block in its own cache. And at that same time, the cores have no idea where the current version of the data is residing. It could be in the DRAM, or – in a larger system – in any of many more cache(s). Completely unbeknownst to the application doing the data store, the hardware finds the current version of the data. This is part of keeping the cache coherent. The cache controller wanting the data may well need to ask every other cache and DRAM in the system “Who has the current version of the real-addressed data block that I’m looking for?”
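A rough sketch of that “who has the current version?” question follows. It is a deliberate simplification: each block is treated as a single value, only one cache may hold a block at a time, and the lookup simply polls every other cache before falling back to DRAM. The names `Core`, `find_current`, and `store` are invented here and do not describe any vendor’s protocol.

```python
class Core:
    def __init__(self, name):
        self.name = name
        self.cache = {}  # block address -> current value held by this core


def find_current(block, requester, cores, dram):
    """Ask every other cache, then DRAM; return (value, where it came from)."""
    for core in cores:
        if core is not requester and block in core.cache:
            # The block is pulled out of the other cache: cache-to-cache move.
            return core.cache.pop(block), core.name
    return dram[block], "DRAM"


def store(core, block, update, cores, dram):
    """Apply update() to the block, fetching the current copy first if needed."""
    source = core.name
    if block not in core.cache:
        core.cache[block], source = find_current(block, core, cores, dram)
    core.cache[block] = update(core.cache[block])
    return source


dram = {0x1200: 0}
a, b = Core("A"), Core("B")
sources = [store(c, 0x1200, lambda v: v + 1, [a, b], dram) for c in (a, b, a, b)]
print(sources, b.cache[0x1200], dram[0x1200])
```

After the first access, every fetch is satisfied from the other core’s cache, and the copy in DRAM stays stale throughout.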

What it takes to do this is an architecture in its own right. Today that architecture is private, proprietary, the intellectual property of the company owning any given homogeneous SMP’s design. And it can get very complex; I’ve shown just a couple variations. Consider another, as in the following animation.

Here we show two chips of a potentially much larger cache-coherent NUMA SMP. Each chip has multiple cores, each core with its own cache. Each chip directly links to some part of the system’s DRAM. All memory, memory attached to any chip, is accessible from any core, no matter the chip.

This animation starts with three of these cores accessing (reading) that same data block – all using the same real address – into their own cache. The data block, now residing identically in multiple cores’ caches, is considered by all to be shared. One core subsequently needs to store into this data block. The result is that the otherwise shared versions associated with the other cores get invalidated so that the remaining core has an exclusive copy of the data block, now with updated data. (You don’t want both new and stale data being subsequently concurrently accessed.) In the fullness of time, that now changed data block ages out of that core’s cache and returns to memory, returning per the real address associated with the data block. Take a look:
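The shared, invalidated, and exclusive states in that sequence can be sketched with a textbook-style three-state (modified/shared/invalid) model. This is a generic classroom simplification, not the proprietary protocol of any actual SMP and not CCIX; each block is again treated as one value, and `CoherentSystem` and its methods are hypothetical names.

```python
from enum import Enum


class State(Enum):
    INVALID = "I"
    SHARED = "S"
    MODIFIED = "M"


class CoherentSystem:
    def __init__(self, n_cores, dram):
        self.dram = dram                                # block -> value
        self.state = [dict() for _ in range(n_cores)]   # per core: block -> State
        self.data = [dict() for _ in range(n_cores)]    # per core: block -> value

    def _owner(self, block):
        """Index of the core holding a modified copy, if any."""
        for i, states in enumerate(self.state):
            if states.get(block) is State.MODIFIED:
                return i
        return None

    def read(self, core, block):
        if self.state[core].get(block, State.INVALID) is State.INVALID:
            owner = self._owner(block)
            if owner is not None:
                # The modified copy is written back and becomes shared.
                self.dram[block] = self.data[owner][block]
                self.state[owner][block] = State.SHARED
            self.data[core][block] = self.dram[block]
            self.state[core][block] = State.SHARED
        return self.data[core][block]

    def write(self, core, block, value):
        self.read(core, block)  # obtain the current block first
        for other in range(len(self.state)):
            if other != core and block in self.state[other]:
                self.state[other][block] = State.INVALID   # drop stale copies
                self.data[other].pop(block, None)
        self.state[core][block] = State.MODIFIED
        self.data[core][block] = value

    def evict(self, core, block):
        """Age the block out of a core's cache, writing it back if modified."""
        if self.state[core].get(block) is State.MODIFIED:
            self.dram[block] = self.data[core][block]
        self.state[core].pop(block, None)
        self.data[core].pop(block, None)


smp = CoherentSystem(3, {0x1200: 7})
for core in range(3):
    smp.read(core, 0x1200)      # three cores now share the block
smp.write(0, 0x1200, 8)         # cores 1 and 2 are invalidated
print([s[0x1200].value for s in smp.state], smp.dram[0x1200])
smp.evict(0, 0x1200)            # the changed block returns to memory
```

The walk-through mirrors the animation: three readers share the block, one writer gains the only valid copy, and DRAM catches up only when that copy ages out.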

Again, making this happen requires a lot of communications between the cores and chips over what is called here the SMP fabric. Managing all of this is also part of this cache coherence architecture.

But I/O Devices Can Already Access An SMP’s Memory

That outline of cache coherence is interesting, but even today I/O-attached accelerators can access an SMP’s memory. Most (all?) will even be able to read the contents of the SMP’s cache when pulling data into the accelerator’s own memory. And, aside from the overhead of initiating such operations, the I/O links are fast as well. So what’s the big deal? After all, an I/O-attached accelerator can see the contents of a processor’s cache.

To explain, we next take a look at a variation on our theme. This time we add an I/O-attached accelerator to our animation, using some I/O interconnect. This accelerator, via a traditional I/O-based architecture, can access the data of the SMP. In doing so, in reading from the SMP, the data blocks it reads can also come from some core’s cache. All good:

But notice that the cache-coherent SMP can subsequently continue changing that same data block and the I/O-attached accelerator is completely unaware that this is going on. This data is not coherent and there is no hardware means of ensuring it. It is not really part of the cache-coherent SMP. The data pulled is a copy of the state of the data from some moment in the past. Not only does this accelerator need to be driven via the overhead of a traditional I/O protocol, but keeping its data in sync, keeping it coherent with that of the SMP, takes still more software design and overhead.
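The stale-copy problem can be shown with a deliberately tiny sketch. The dictionary standing in for the SMP’s coherent view and the `io_copy` helper are both hypothetical; the only point is that a copy taken over an I/O path is a snapshot, and nothing updates it when the original changes.

```python
import copy

smp_memory = {"counter": 1}   # stand-in for the coherent SMP's view of some data


def io_copy(source):
    """Hypothetical I/O transfer: a snapshot of the source at this moment."""
    return copy.deepcopy(source)


accel_memory = io_copy(smp_memory)   # the accelerator pulls the data
smp_memory["counter"] += 1           # the SMP keeps changing it afterwards

is_stale = accel_memory["counter"] != smp_memory["counter"]
print("accelerator copy is stale:", is_stale)

accel_memory = io_copy(smp_memory)   # software has to drive a second copy
```

Keeping the two in step means software must notice the change and repeat the copy, which is the extra design work and overhead described above.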

Again, this overhead chews into the performance advantages of accelerators.

Such copying is completely normal to all of today’s I/O. If you want to send data out of your SMP, that data is being copied out by some asynchronously executing I/O adapter. Same words for data flowing into your SMP’s memory; some asynchronously executing I/O adapter is being told by the processors, via some considerable software overhead, to copy such inbound data into the SMP’s DRAM. We are not at all saying that this model is not completely reasonable; it has been around for like forever. We are, though, saying that fast accelerators want their data flowing into and out of them to match their own speed. The high bandwidth of the I/O links driving such data flow may be great, but bandwidth is only one component of the desired short data latency.

In the second part of this series, we will drill into how cache coherence needs to evolve for accelerators, and why something like CCIX is absolutely necessary.

Time Traveling Coherence Algorithm for Distributed Shared Memory

http://people.csail.mit.edu/devadas/pubs/tardis.pdf

Parallel programming made easy

http://www.csail.mit.edu/parallel_programming_made_easy

Timothy,

I am still waiting for nice articles on the above mentioned research.

Nice article. I like the effort that has gone into explaining coherency to the lay person. CCIX is a reflection of the consolidation that is active in the industry. Companies have to collaborate a lot more now than before. Intel would benefit immensely by adopting CCIX as well. Any clue on whether they are part of CCIX, or are considering it?

No, Intel is not among the CCIX members. The CCIX members are listed here: http://www.ccixconsortium.com/members