There is a long-running joke in high performance computing that for any question that can be asked, the answer is probably going to be “it depends.” This maxim persists because there is an incredible amount of diversity of hardware, software, middleware, applications, and other factors that make coming up with a universally true statement about any tuning or optimization impossible.

This same sentiment might also be applied in warehouse scale datacenters, although the number of parameters and complexity for most homogenous workloads might not come close to HPC datacenters running multiple or complex applications. The point is, although there are default parameters in every nook inside the HPC hardware and software stack, most of these are left at the standard and if not, tuning one parameter might make one application sing and another fall flat. There are no sure bets, so how does one design a truly “smart” way of understanding and applying parameter tweaks on both hardware and software?

This is a question that Dr. Tomer Morad from Cornell University has been toying with for a number of years. A few years ago his research team built a dynamic scheduler that would make energy-efficient decisions by predicting energy per instruction in different scenarios. For instance, one of the things it would do was expand the number of threads only when the throughput increase justified the extra power. This was great in theory and for the applications where it worked, there was up to a 32% energy reduction. But for others, sometimes it meant a 3% increase in energy consumption. There were just too many parameters, tunables, and differences in the application alone that when applying that against other differences (software, hardware, different compilers) the generalizability of the tool broke down.

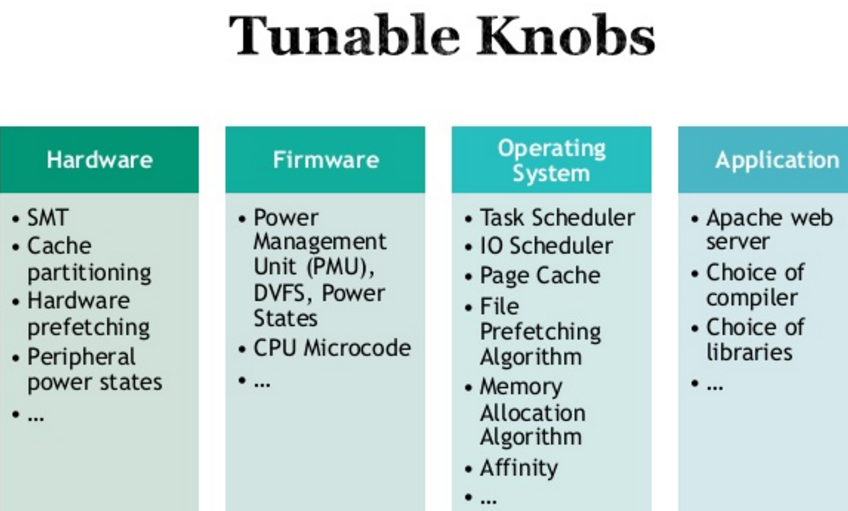

“There are literally hundreds of tunable parameters in today’s systems,” Morad described last week during the HPC Advisory Council Stanford Workshop. “And let’s be practical too—we cannot reasonably expect system engineers to understand or know about all of these knobs and to understand the effect of each of these knobs on a specific application. So what happens is we sweep through a small number of knobs and leave the rest on default and thus miss a lot of opportunities for tuning.”

When important workloads hit an HPC datacenter, admins immediately set about tuning it, usually manually sweeping some key parameters to find the best configuration for a benchmark—and often using the default settings. This is all labor intensive, and while there are a lot of “knobs” only so many can be turned. And even if there was intimate knowledge of all of these tunables, workloads themselves are dynamic, changing in phases at different phases. The goal is to create dynamic tuning, which responds to the individual circumstances of each application, Morad argues.

The above parameter issues only scratch the surface of tuning, which reveals an opportunity—one Morad saw firsthand when building the dynamic energy efficiency scheduler. That lack of generalizability can be overcome in some areas, he argues, and in an automatic way. “If we’re looking at even 20 binary knobs or parameters, that’s a million states because of so many dependencies. What is needed is something that is smarter and can take shortcuts to test these and yet also adapt to changing workloads.” The other goal, of course, is for that adaptability to target different goals. For instance, to keep workloads running within a certain power budget or with a certain level of performance.

Realistically speaking, all of this cannot be automated to deliver a best of all worlds approach every time for changing workloads. But in his work in Cornell’s incubator program, Morad and team have developed the closest thing they can get to that goal via the DatArcs Optimizer.

At the high level, the process of whittling down the tuning begins with a workload classifier, which places into “buckets” similar workloads that have similar parameters. A metrics estimator tracks system metrics and feeds these to a dynamic tuner that further refines within those buckets. Once an optimal setting is found, it gets carried over to new and other similar workloads. As one might imagine, this is more challenging that it sounds. Even for mere classification, the number of buckets can’t be too numerous or few and since workloads have different behaviors at each phase, defining these accurately is an ongoing challenge.

The project is still in development and while Morad openly defined what the challenges are of “tuning the tuner” the team is currently in beta and looking for people from the HPC community to give the tuning capabilities a whirl against their real-world workloads.

“Cornell’s incubator program” refers to the ‘Runway Startup Postdoc Program at the Jacobs Technion-Cornell Institute at CornellTech’ in NYC.