Some parts of the platform stack are so ubiquitous that they are almost transparent. Commercialized web servers were the first part of the Internet to make the jump from service providers to enterprises, and they made companies like Netscape, IBM, Microsoft, and Red Hat rich in those early days.

The open source Apache web server rose to prominence along with the open source and Linux software movements that coincided with the rise of Internet-style networking and computing, and for several decades the Apache web server has dominated the installed base. But the NGINX web serving and load balancing platform, which is also open source, has been making steady gains in recent years, and it looks like it won’t be too long before it surpasses Apache in the installed base.

What is going on here?

To figure that out, The Next Platform had a chat with Owen Garrett, who is head of products at NGINX and who cut his teeth back in the dot-com era as a senior software engineer for the line of web servers and load balancers from Zeus Technology that were the speed demons of the early days of the commercial Internet. (WAN optimization appliance maker Riverbed Technology bought Zeus back in 2011.) And the first thing to understand, says Garrett, is that the web server is not something that just hangs off to the side of the infrastructure, and web server performance matters more than ever because of this.

“The web server is a critical part of the stack, and its job is to take HTTP requests that are coming in and somehow connect those to an application,” Garrett explains. “If the response is just static content, the web server can deal with that directly, but if it requires running some code, then generally there is some intermediary between that web server and the application code, which is an application server. The app server could be as simple as a process manager for PHP or as complex as a big chunk of Java code for a servlet runner for an enterprise Java application. The thing is, the web server is always in the data path. The application never sees the network traffic, it just gets presented with a web request, and the network never sees the application because the web server bridges the two. The important thing is that web servers are pretty much everywhere, and you can regard the web server as the operating system for a modern application.”
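The split Garrett describes, with static content answered directly and everything else handed to an application tier, can be sketched in a few lines of Python. This is only an illustration: `make_web_server`, `demo_app`, and the paths are hypothetical names invented here, and a real web server does far more (connection handling, parsing, buffering, TLS).

```python
from typing import Callable, Dict, Tuple

# A response is modeled as (status code, body); a deliberate simplification.
Response = Tuple[int, bytes]
App = Callable[[str, str], Response]


def make_web_server(static_files: Dict[str, bytes], app: App) -> App:
    """Return a request handler that sits between the network and the app."""

    def handle(method: str, path: str) -> Response:
        # Static content: the web server answers directly.
        if method == "GET" and path in static_files:
            return 200, static_files[path]
        # Anything else needs code to run, so pass it to the application tier.
        return app(method, path)

    return handle


def demo_app(method: str, path: str) -> Response:
    """Hypothetical application code; it sees only a parsed request, never sockets."""
    if path == "/hello":
        return 200, b"hello from the app"
    return 404, b"not found"


server = make_web_server({"/index.html": b"<h1>static</h1>"}, demo_app)
print(server("GET", "/index.html"))  # served by the web server itself
print(server("GET", "/hello"))       # forwarded to the application
```

The point of the sketch is the data path: the application function is only ever called through `handle`, which mirrors the observation that the web server is always between the network and the application.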

We would observe that everyone wants to be the operating system in the platform stack, but that is another story for another day. To Garrett’s point, applications are written for and served by this particular piece of software, and no one would argue that it is not integral to a modern platform – including the next one that people are building, perhaps with a mix of bare metal and containers. Here is why. Even if an application did not have a web server front end (which is hard to imagine, but stay with us here), the infrastructure and microservices application components that run on top of the clustered servers are fundamentally dependent on web servers because they are driven, by and large, by APIs that use REST interfaces and HTTP protocols.

So, in effect, the web server is not just the face of the application, it is also the nerve center that moves the infrastructure muscles of the entire body that supports that face.

Given this, performance matters more than ever and, as is the case with a lot of new systems, the use of open source software is often a prerequisite. NGINX, which Igor Sysoev began developing in 2002 and which was first released publicly two years later, is known for performance and is also open source, so it can compete against the well-established and well-loved Apache web server. And increasingly, the company’s software is competing against various network appliances from Cisco Systems, Citrix Systems, and F5 Networks as service providers, cloud builders, and enterprises shift from hardware-based appliances to software running in virtual machines on standard X86 clusters to perform other network functions.

Some data is in order, and Garrett can point to lots of it to show the shift that is underway out there on the Internet.

The Big Web Server Shift

If you buy into the Windows Server platform, as many enterprise customers have for a large portion of their workloads and as Microsoft does with its own Azure public cloud, then you tend to buy into the complete stack including Microsoft’s own Internet Information Services (IIS) web server. While there is an unofficial port of the NGINX web server for Windows, most companies deploying it do so on Linux. There are NGINX ports for various Unix variants as well, and in fact, the software is licensed under a very permissive BSD license that comes from the open source Unix movement decades ago.

That permissive license is one of the reasons, in fact, that most of the hyperscalers use NGINX as their web server these days, with the big outlier being Google, which has created its own web server and load balancer stack. The Google Web Server is itself a fork of Apache, and some of the modifications Google has made were contributed back to the Apache community to boost its performance; last month, Google open sourced its Seesaw load balancer, no doubt in reaction to the popularity of the NGINX alternative. All of the hyperscalers open source their tools to get people acquainted with them and to help improve them. It is a kind of recruiting.

For the past several years, the rise of NGINX has been steady and predictable, and so has the decline of Apache. It is hard not to conclude that service providers, cloud builders, web hosters, and enterprises are following in the footsteps of the hyperscalers who put NGINX on the map and are replacing Apache with NGINX.

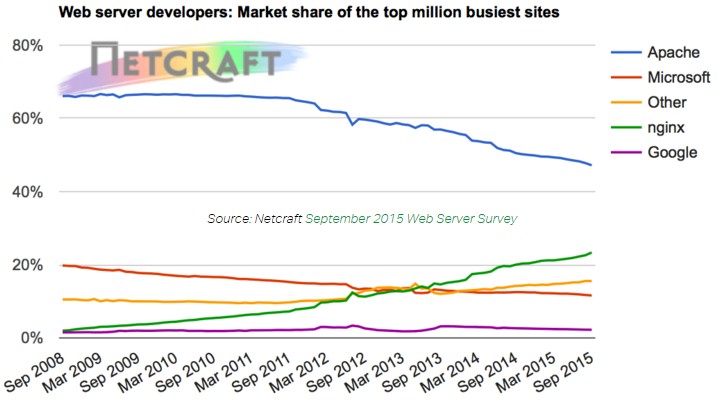

The chart above is from Netcraft, which has counted web server instances by domain name since there was a commercial Internet, and it shows the data for the top 1 million web sites in the world as ranked by Alexa traffic rankings. (There are more than 1 billion web sites, so this is just the top slice.)

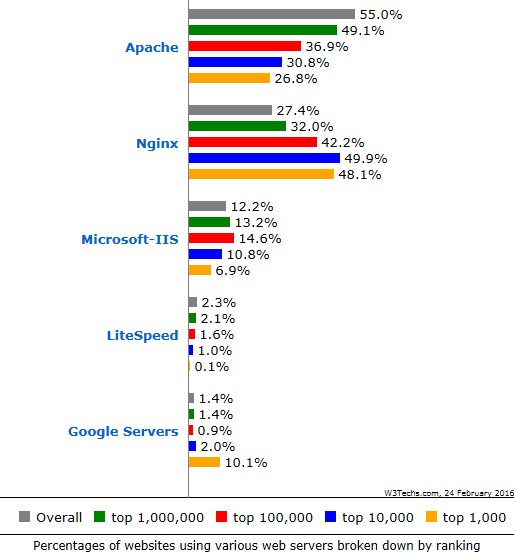

Here is an interesting bit of data collection that was done by W3Techs, which also tracks web server usage. In 2013, NGINX was the most popular web server in use by the top 1,000 busiest sites in the world (ranked by Alexa again). In 2014, NGINX was the top web server for the 10,000 sites with the heaviest traffic, and last year, it was the most popular with the 100,000 busiest sites. (The NGINX share is just shy of 50 percent in the top 1,000 and top 10,000 rankings.) Here is the latest data from W3Techs if you want to see it for yourself:

The W3Techs data, which looks pretty linear to our eyes, would seem to suggest that as 2016 comes to a close, NGINX will be the most popular web server in use on the top 1 million busiest sites, but the Netcraft data suggests to our eye that a crossover between Apache and NGINX will happen sometime around the middle of 2017 or so.

But that is not the real metric to consider, says Garrett. “The way that I interpret this growth is that 70 percent, 80 percent, or maybe even 90 percent of new applications are being built on top of NGINX, and as traffic gradually moves from the old incumbents to the new platforms, this is driving that 50 percent figure for all of the top 10,000 and 100,000 web sites.” (Another interesting tidbit that is a kind of leading indicator: according to customer surveys, about half of the companies deploying NGINX are doing so on a public cloud.)

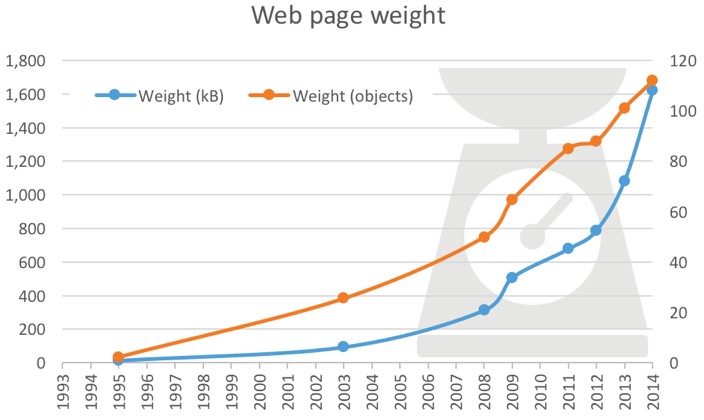

The drivers pushing web performance to the fore are simple. Pages are getting more complex and larger, and end users or the API stacks that are based on web technologies want lower and lower latency in providing richer and deeper data. Take a look at this chart showing web page weight over time:

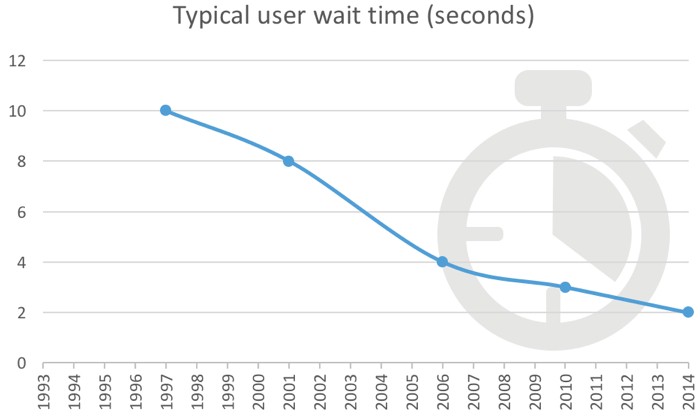

That chart is from Web Optimization and it shows a 49X increase in the number of objects per page over two decades, and a 115X increase in the average page size, from 14.1 KB in 1994 to 1.6 MB in 2014. This has to be balanced against the performance of those web pages. We are increasingly impatient, and application developers know that and have been pushing response time down:

That is only a factor of 5X improvement in average response time, and it is hard to believe that response times of 2 seconds in 2014 were acceptable. What we have heard is that people get impatient at 200 milliseconds, and that 100 milliseconds is better. And we also presume that for the web servers that are at the heart of infrastructure, the lowest possible latency for that REST control stack that is hitting the web servers is absolutely critical. Presumably – we do not have any performance data comparing Apache, IIS, and NGINX for this – the need for speed is what is driving adoption of NGINX and what is transforming the LAMP stack – Linux, Apache, MySQL, and PHP, Perl, and Python – into the LEMP stack – the E is for “Engine X” of course.

NGINX is not just about replacing Apache, by the way. It can act as a front-end proxy for Apache and other web servers, turning a collection of badly behaving Internet user connections into a more orderly stream of requests that helps Apache do a better job serving.
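One way to picture the front-end proxy role is as a gate that accepts many client connections but lets only a bounded number through to the back-end server at once. The Python sketch below is a toy simulation of that idea only. It is not how NGINX is implemented, and `backend`, `run_proxy`, and the limit of four upstream slots are assumptions made for illustration.

```python
import asyncio


async def backend(stats: dict) -> str:
    """Hypothetical stand-in for a back-end worker; records overlapping calls."""
    stats["active"] += 1
    stats["peak"] = max(stats["peak"], stats["active"])
    await asyncio.sleep(0.001)  # pretend to do some work
    stats["active"] -= 1
    return "ok"


async def run_proxy(n_clients: int, max_upstream: int):
    """Accept n_clients requests but forward at most max_upstream at a time."""
    stats = {"active": 0, "peak": 0}
    slots = asyncio.Semaphore(max_upstream)  # the "orderly stream" gate

    async def handle_client() -> str:
        # Each client waits here until an upstream slot is free.
        async with slots:
            return await backend(stats)

    replies = await asyncio.gather(*(handle_client() for _ in range(n_clients)))
    return replies, stats["peak"]


replies, peak = asyncio.run(run_proxy(n_clients=50, max_upstream=4))
print(len(replies), "replies; peak concurrent backend calls:", peak)
```

All 50 simulated clients get a reply, but the back end never sees more than four requests in flight, which is the sense in which a proxy can shield a server from a burst of connections.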

But the issue is larger than this, says Garrett. “The traditional LAMP and LEMP approaches are rooted in the three-tier, Java 2 Enterprise Edition architecture. Modern organizations are moving away from that and toward microservices, and from my perspective, microservices is just a second generation of services-oriented architecture. But it is SOA using more modern and more lightweight protocols and virtualization such as containers, and it is open source at its roots. The original SOA approach was a little bit strangled by the large vendors that were driving the direction. Microservices needs some sort of HTTP receiver and gateway at the front of that turbulent, chaotic environment where the application is deployed and continuously changing, but itself does not change at such a rapid rate.”

NGINX would very much like for its eponymous stack to be that gateway as well as the nerve center of the platform. And it learns from the hyperscalers to help build its products to stay out ahead of the needs of enterprises.

“Our job is to learn what these large users are doing with NGINX and the changes that they are making and then feed it back into the open source and commercial versions of the product,” says Garrett. “There is only a relatively small number of innovators in the world – the Googles, Facebooks, and Amazons – but there is a huge number of fast followers. Enterprises want to do what these innovators have done, but they don’t have the expertise or the desire to do the R&D themselves.”

NGINX has a support model that funds the development of the open source versions of its web server and load balancer. The service is called NGINX Plus, and it has been in the market for about two and a half years now. When it launched, NGINX quickly attracted about 100 customers, and as 2015 came to a close, it had in excess of 500 customers paying for its two flavors of support.

The NGINX web server is free and open source and exactly the same as the commercial version, while NGINX Plus adds in the load balancer and comes with two levels of support. An annual contract for 8×5 business support costs $1,900 per server per year, while a contract with 24×7 support and 30 minute response time on calls runs $4,500 per server per year. That is considerably more expensive than a Linux license from Red Hat, SUSE Linux, or Canonical, which all bundle in NGINX as well as Apache. But that is what it costs to have access to the creators of the code.

NGINX has about 80 employees, with 30 of them in Moscow and 50 of them in its San Francisco headquarters. The company has raised $33 million in three rounds of venture funding, with Runa Capital, New Enterprise Associates, and Index Ventures kicking in funds. So has the venture arm of none other than Michael Dell, who is in the process of buying EMC for $67 billion and who might want to own his own open source web server and load balancer, too.

I am certainly biased, but the real reason is due to 2 factors that I can see:

1. Continuing FUD about how Apache doesn’t scale, it’s not as fast as nginx, etc, (all of which are no longer true and haven’t been true for years), while ignoring the capabilities that Apache has (and has had) that nginx doesn’t (or just gained, like dynamic modules).

2. Intense marketing and PR designed to push nginx.

The very fact that no one from Apache was contacted to address this is kinda evidence of both.

Of course, when an “open source” project is dependent upon a single company, and one that has strictly controlled and limited who has real influence on the project, and lives or dies by the Open Core business model, one can see how critical it is to put extreme resources into advocating nginx, no matter the actual harm to the true open source ecosystem.

My take is just that NGINX is much easier to configure from a fresh perspective.

As for the performance of NGINX vs Apache, I have never seen anything conclusive over the years. Feature wise, what does NGINX have that Apache doesn’t have?

Anyways, from the article :-

“NGINX would very much like for its eponymous stack to be that gateway as well as the nerve center of the platform. And it learns from the hyperscalers to help build its products to stay out ahead of the needs of enterprises.”

What does that even mean? Corporate speak much?

I disagree. Look at:

http://www.laymance.com/blog/apache-load-balancers-and-log-files/

– Juanita
