The story for the adoption of new technologies follows a familiar narrative arc: The innovators create something, and it takes some time for the hype to die down and the software to mature and harden before it is appropriate for the rest of the market to consume. The innovators get the advantage of advanced technology, which they pay handsomely for in terms of the talent it takes to create and maintain it, and enterprises mitigate risk by waiting a bit.

So it is, perhaps, with Docker containers this year.

Docker, the company behind the popular software container stack, is working with Microsoft to embed its technology in Windows Server 2016, which will come later this year, and for those who are running Linux environments, the company has put together its first complete stack of tools for creating, deploying, and running containerized applications both on premises and in the public cloud. Appropriately enough, this stack is called Docker Datacenter, and it fulfills the goals that the company that has become synonymous with containers set for itself years ago when the container craze first started in earnest.

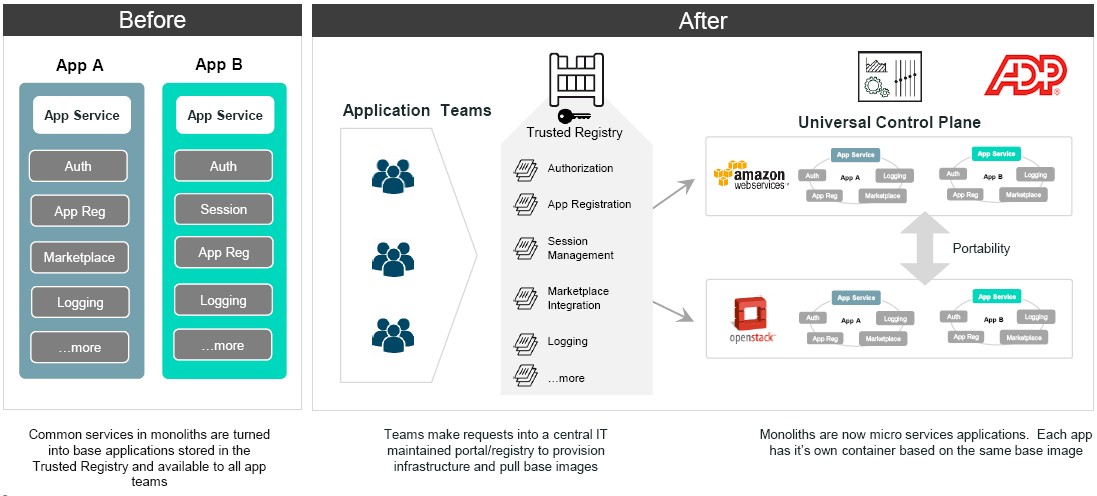

The big public cloud providers – Amazon Web Services, Microsoft Azure, and Google Cloud Platform – have all created their own container services for those who want to deploy Docker on their infrastructure rather than on premises. The idea is for the container to become the shrink-wrapped package for the delivery of software while at the same time companies are seeking to break down relatively monolithic applications into smaller bits, called microservices, that are woven together into a new application that is easier to update continuously. This is the way that hyperscalers like Google and Facebook have long since learned to deploy applications, and even the HPC centers are getting into the act with projects like Shifter from the National Energy Research Scientific Computing Center or extensions to existing job schedulers like Univa’s Navops.

This is a future for software development and deployment that, in hindsight, seems inevitable and precisely aligns with the founding theme of The Next Platform, which is that cutting edge technologies developed to deal with scale and scope eventually trickle down to the enterprise or jump between clouds, hyperscalers, and HPC centers. Lather, rinse, and repeat.

While there are some very large enterprises with sophisticated IT people who can consume a new technology (generally these days it is open source) in its relatively raw state, this is not the case for many large enterprises, which by necessity have to be more cautious. Sometimes they do not have the same level of skills in their organization, and in many cases they have a different and much harsher regulatory environment that is very sensitive about who has access to data and applications and how these two flit around the world in private datacenters and on public clouds. Think financial services, big pharma, healthcare, and such.

As we have pointed out before, in many ways, Google has it a lot easier than General Mills or Goldman Sachs or General Motors.

With the advent of Docker Datacenter, Docker, the company, wants to make it easier for companies to start creating microservices and running them in containerized environments. And, unlike the previous revolution in server virtualization, which was a necessary transition that made VMware rich precisely in a manner consistent with its wildest dreams (and Microsoft and Red Hat to a certain extent, too), the container revolution is based on open source technologies and companies like Docker are going to make a good living. The datacenter has been virtualized where it makes sense – bare metal is still suitable for some things – and now the pressure is on for the next, more efficient phase change in infrastructure, and even VMware knows we have hit peak server virtualization.

The Docker Datacenter stack brings together all of the key pieces needed for a containerized environment and, importantly for enterprise customers, leaves room for all kinds of external systems used to manage code or systems to be integrated into it. This last bit is important because enterprises do not have the luxury of throwing out old applications and data or infrastructure tools to manage these.

“The reality is that the journey that we have been on with customers as they are increasingly running Docker applications in production is to formalize the role of their operations teams in this whole containerization movement,” says David Messina, senior vice president of marketing at Docker. “It is a platform that speaks first to Ops but makes no compromises for Dev, and I think that is a very essential part of the design goal and it integrates elements of the open source projects that are Docker with the commercial stack that we have.”

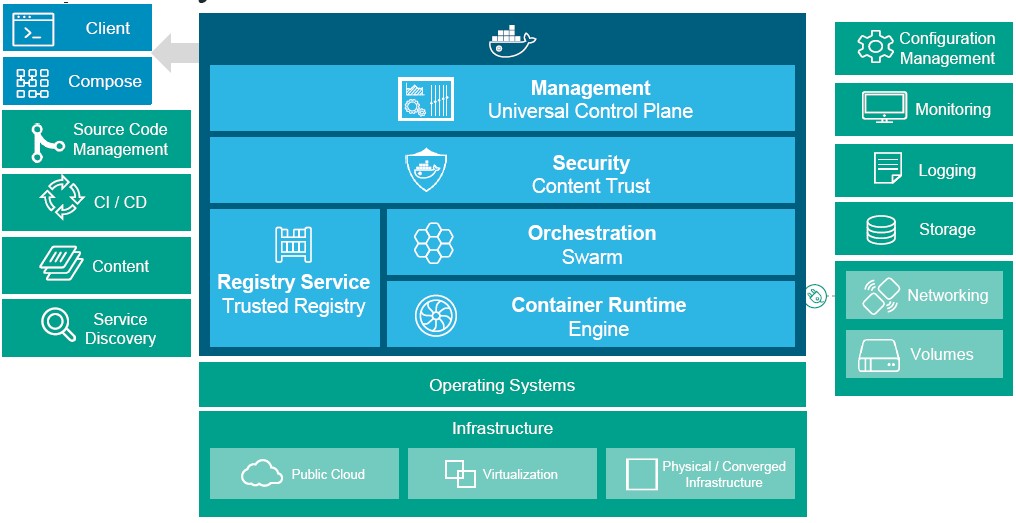

In the stack above, the Docker Toolbox is what is used to compose containers, and the bits in the green below the Docker Compose and Client tools come from third party tool makers or other open source projects that can integrate into these development tools. The blue boxes in the center are the key elements of the Docker Datacenter stack, including the Engine container runtime, the Swarm container orchestration tool, the Content Trust security layer, the Trusted Registry (formerly known as Docker Hub Enterprise), and the new Universal Control Plane management layer that was in preview late last year. The parts that control the access to the data and containers are key for enterprises. The management layer has hooks into it using REST APIs to link to other configuration management, monitoring, and logging tools as well as hooks out to persistent storage systems such as ClusterHQ’s Flocker or Red Hat’s GlusterFS that have been tweaked to work with Docker.

The Docker Datacenter stack, as it turns out, is similar to the kind of Docker control system that music streaming service Spotify created for itself, according to Messina, and there are probably numerous other examples of homegrown container management systems besides Google’s Borg and Facebook’s Tupperware, just to name two. No one expects the hyperscalers to ditch their own tools for Docker Datacenter, either, although smaller organizations that have cobbled together some early Docker tools might make the leap from homegrown to commercial.

The interesting bit about Docker Datacenter is not only that it is designed to be deployed inside of enterprise datacenters for local use on applications running behind the corporate firewall, but also that it can be used as a substrate to provide a compatibility layer on raw infrastructure clouds and, in a sense, compete against the container services that AWS, Google, and Microsoft have created. This kind of cross-platform compatibility was not possible at the server virtualization level, given that these companies standardized on different hypervisors in their public facing clouds (Xen, KVM, and Hyper-V, to be specific) and use very different sets of APIs and tools to control that virtual infrastructure.

This is precisely what payroll processor ADP is doing, according to Messina, who says that the company was an early tester of the Docker Datacenter stack and now has it running across 762 server nodes on top of its OpenStack cloud and will also be using the stack to provide a compatibility layer running on the AWS cloud.

ADP is extremely secretive about precisely what its infrastructure is, and is by no means dumping its fleet of IBM mainframes and replacing them with containerized clusters running new-fangled applications. But we suspect that new applications created by ADP will run atop Docker Datacenter going forward and that over time, this infrastructure could grow very large and dwarf the mainframe footprint. This is certainly what happened during the Unix revolution and then the Linux evolution at many mainframe shops, but then again, it is hard to move those mainframe apps because of the risk and cost involved. That is why they persist, after all.

While ADP is using Docker Datacenter to provide hybrid computing across private and public cloud infrastructure, an unnamed top five pharmaceutical company that was also an early tester for the tool is using the stack so it can run its applications on both Azure and AWS and play the two off against each other. It will be interesting to see how such a strategy plays out in terms of complexity and economics, and hopefully there will be use cases soon that we can talk about.

Docker Datacenter is available now, and it is priced based on the number of server nodes under management with two tiers of support. With standard business (8×5) support, it costs $150 per node, and for 24×7 support it costs $300 per node. This is orders of magnitude less expensive than an ESXi hypervisor, vSphere add-ons, and vCenter management console, and enterprises will be doing that math for sure as they map out their next platforms.
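To make that math concrete, here is a minimal sketch of the per-node arithmetic. It uses only the two list prices quoted above; the tier labels and the `datacenter_cost` helper are hypothetical names chosen for illustration, the billing period is left unspecified because the pricing above does not state one, and the 762-node figure is borrowed from the ADP deployment purely as a sample size, not as a claim about what ADP pays.

```python
# Per-node list prices quoted in the article (dollars).
# The tier names are illustrative labels, not official Docker product names,
# and the billing period these prices cover is not stated in the article.
PRICE_PER_NODE = {
    "business_8x5": 150,   # standard business-hours support
    "premium_24x7": 300,   # around-the-clock support
}


def datacenter_cost(nodes: int, tier: str) -> int:
    """Return the total list price in dollars for `nodes` servers at `tier`."""
    if nodes < 0:
        raise ValueError("nodes must be non-negative")
    if tier not in PRICE_PER_NODE:
        raise ValueError(f"unknown support tier: {tier!r}")
    return nodes * PRICE_PER_NODE[tier]


if __name__ == "__main__":
    sample_nodes = 762  # sample cluster size only, taken from the ADP example
    for tier in PRICE_PER_NODE:
        print(f"{tier}: ${datacenter_cost(sample_nodes, tier):,}")
```

Because the cost scales linearly with node count, doubling the support tier simply doubles the bill; any volume discounts or negotiated terms would sit outside this sketch.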
