Bringing the Azure platform from Microsoft’s own public cloud down into the datacenters of enterprises and service providers means more than just giving these shops the same tools to manage raw virtualized compute, storage, and networking.

The Azure Stack platform, which will ship later this year and which Microsoft announced earlier this week, means literally bringing down as many of the suite of Azure services as is technically feasible and desirable to the corporate datacenter.

The end result of Azure Stack for customers will be something that looks and feels like the real Azure, although it will be running on their own hardware and under their own management. In a sense, this is the natural progression of Windows Server as the center of gravity of operating systems has shifted from a particular runtime to creating a cluster-wide management system with many runtimes and allowing for many different styles of compute and storage.

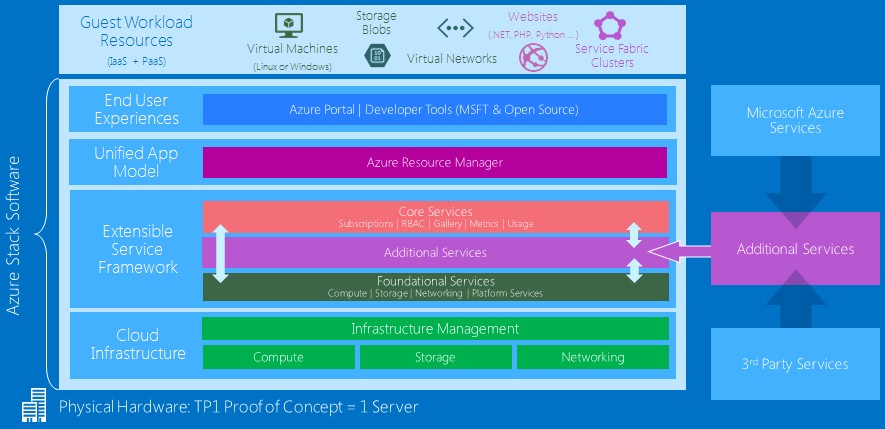

The Azure Stack is comprised of layers of software, just like the real Azure that Microsoft has running distributed around 22 regions on earth, making it one of the largest sets of infrastructure on the planet and rivalling (if not exceeding) the infrastructure that Google and Amazon have built for their internal operations and their clouds. The interesting bit for us here at The Next Platform is to contemplate how many tens of millions of organizations have Windows Server running a big chunk of their workloads and how many of these will want to move to the Azure model of creating, consuming, and managing infrastructure and applications. The amount of compute and storage capacity in enterprise datacenters is vast, at least an order of magnitude larger than the infrastructure that Microsoft has assembled to underpin Azure. Microsoft has well over 1 million servers in its cloud platform, but much of this runs Office 365, Xbox Live, Bing, and other services that are not, strictly speaking, running on Azure. They no doubt will in the future, and like other enterprises, Microsoft is carefully orchestrating those moves.

It is still early days in infrastructure and platform services, and while it may not feel like it, there is plenty of time for enterprises to learn the new way of building and consuming services on clouds. As we discussed in our previous article concerning Azure Stack, neither Amazon Web Services nor Google Cloud Platform seems inclined to offer a private cloud implementation of its platform, and we do not expect that to change. This gives Microsoft an advantage over its public cloud rivals among the many, many enterprises that say they plan to run in hybrid fashion on their cloudy infrastructure, at least when it comes to hooking private datacenters with public clouds. If companies mean cross-cloud portability when they say hybrid, then none of the cloud builders are promising this, and it would be exceedingly difficult for any company to deliver. The services on the big public clouds are changing all the time, they use radically different APIs to control their infrastructure and services, and they have different metaphors and organizational elements to describe their clouds. You end up with a least common denominator hybrid public cloud, which may not be particularly useful.

To our thinking, the competition between public cloud operators should be sufficient to keep pricing, performance, and features in rough parity over the long haul, much as the intense competition among Linux, Windows Server, and Unix has kept them more or less in line across different server classes and different workloads. You can pick a platform and then benefit from the fact that these platform providers are trying to win new customers and keep existing ones. So while cross-cloud hybrid compute and storage would be wonderful, they may not be economically practical or technically feasible except for the most rudimentary of services like a raw VM or a gigabyte of storage. In the same way, we don’t actually expect Windows Server to be a clone of Linux, or vice versa.

So what services are actually going to come in Azure Stack? What hardware will it run on and how far will it scale? Microsoft is not yet providing full roadmaps and reference architectures, given that Azure Stack Technical Preview 1 is just shipping today, but executives speaking to The Next Platform did provide some guidance on what to initially expect in these areas.

The Two Azure Stacks

Back in the day, one rudimentary way of measuring the sophistication of an operating system was to track the number of lines of code that comprised its stack over time. The more features and functions the operating system had, the more its code swelled. We asked how much code was in the Azure Stack code base, which caused a certain amount of amusement, and Mark Russinovich, chief technology officer at Azure, quipped, “Tons.” When pressed, he said that he didn’t know the precise number, but that it was much larger than an operating system.

There is obviously a lot of code sharing not only between the Windows Server and Azure public cloud teams these days, but also between the Azure and the Azure Stack teams. In some cases, Azure is driving the addition of features in Windows Server – nested virtualization was one of them that was requested and is coming out with Windows Server 2016 later this year, for instance – and in others, customers are requesting features in Windows Server that may benefit the two Azures.

“The higher up in the stack you go, the more code sharing there is,” explained Russinovich, referring to the differences between the code used for the Azure public cloud and Azure Stack. “At the bottom, the fabric underneath is very different, but when we get into services like Virtual Machines, Virtual Machine Extension, or networking services, a lot of that code is shared between public Azure and on-prem. The Azure Resource Manager is exactly the same and the Azure Portal is exactly the same.”

Microsoft had long since figured out how to scale out compute, storage, and networking to build a hyperscale public cloud, but three years ago, it figured out that it needed to rearchitect the application model at the heart of its public cloud so it could be brought down into the enterprise and be used on private clouds. Azure Resource Manager is the result of those efforts. That this layer is common across the two Azures makes perfect sense.

But Microsoft’s Azure software for the public cloud is very tightly tied to the Clos network that links servers and storage together and to its proprietary networking stack, which we have discussed recently, and it is similarly designed to run on fairly homogeneous infrastructure.

“Taking a copy of Azure and putting it into the enterprise doesn’t make sense,” Ryan O’Hara, director of program management for the enterprise cloud division at Microsoft, tells The Next Platform. “While Azure Stack should be just another region as far as enterprise customers are concerned and we should maximize the number of services that can be deployed locally, these services have to be things that can scale down to the enterprise level. There are some services, such as the Azure Data Lake, data ingestion, or data encoding, where customers will benefit from the scale of the Azure public cloud.”

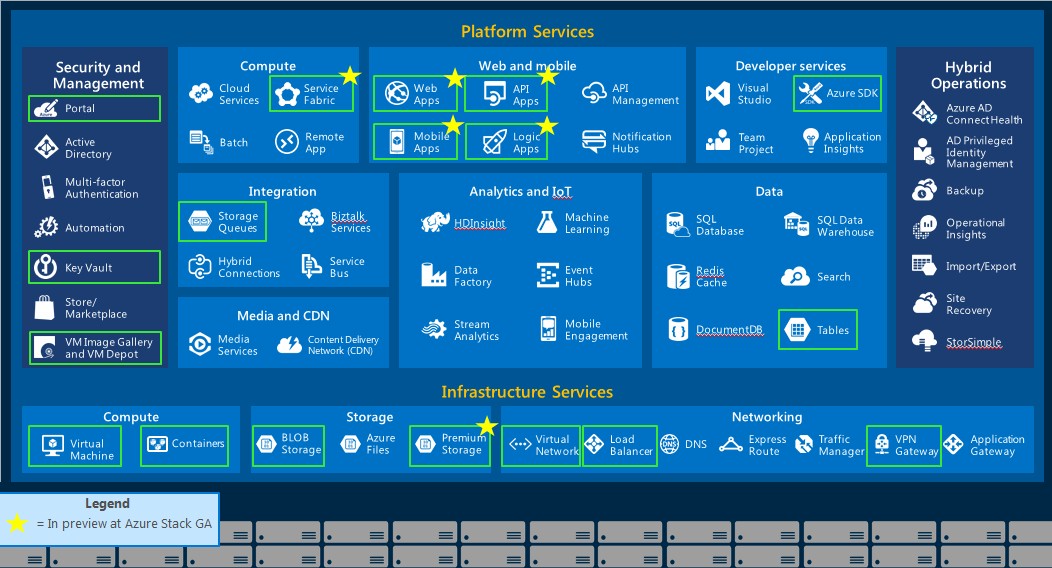

To give a sense of what services to expect with Azure Stack, Microsoft provided this handy overview of the services currently on the Azure cloud and listed the ones that will be available in Azure Stack, which are boxed in yellow in the chart above.

Those Azure services with stars after them will be in preview as Azure Stack becomes generally available in the fourth quarter and will presumably come to market in 2017. Other services, as appropriate, will make the jump from Azure to Azure Stack. Site Recovery, for instance, will be an interesting one in that it will provide failover capability between Azure Stack on-premises clouds and the actual Azure, and will also allow for Azure Stacks that are distributed geographically to provide backup for each other. Azure Stack will eventually support multiple zones, obviously, and Microsoft is working to provide VM-to-VM failover in a future Azure Stack release.

Importantly, the Azure Resource Manager templates that customers are building and sharing to help automatically deploy applications on the Azure framework, which you can see here, will also work seamlessly on Azure Stack private clouds.

It is also likely that services provided by third parties, such as ticketing systems for managing hardware and software, could be added to Azure Stack even though they are not part of Azure itself. And while Microsoft has no plans to offer supported versions of Azure Stack where its own techies and its own Autopilot cloud controller for Azure reach out and manage a private Azure Stack cloud, Mike Neil, corporate vice president in charge of Enterprise Cloud at Microsoft, says that the company will consider this if enough customers ask for it and expects third-party service providers to do it in any event.

The Hardware Foundation For Azure Stack

A pod of compute capacity on the Azure cloud is 960 nodes of Microsoft’s own Open Cloud Server design housed in 20 racks. By design, this pod has enough spare capacity to hold some in reserve for the failovers that will inevitably occur. In the enterprise, says O’Hara, companies might have some reserve servers, but not on the same order of magnitude as Microsoft, and they will use overcommit and quality of service protocols to deal with failures and keep critical workloads humming on the infrastructure.

The underlying storage on the two Azures is different, too, which made it a challenge to scale it down, explained Russinovich. On the real Azure, the storage service runs initially on dozens of servers in the pod, and on an initial deployment of 960 nodes, this is no big deal. But when Microsoft is trying to get the initial deployment size of Azure Stack down to a few nodes, this can’t work. Moreover, the erasure coding techniques that Microsoft uses in the real Azure also assume a very large set of storage servers over which to run the data protection algorithms and to spread the data making up objects.

So to bring Azure Stack into the enterprise, Microsoft has to tweak the network topology and storage a bit and also make it available on commercial-grade servers and switching from the likes of Hewlett Packard Enterprise and Dell, its two key systems partners. It seems likely that Cisco Systems, Lenovo, Fujitsu, and NEC will also sell Azure Stack hardware-software bundles.

In terms of the scale of Azure Stack infrastructure, Microsoft is not being specific at the moment, but clearly Microsoft knows how to scale Azure up and has demonstrated that to customers through its own public cloud. The question is not how far Microsoft can push it, but how far it will certify configurations to give large enterprises building private Azures enough headroom so they are comfortable that they will not hit any scale ceilings.

The Cloud Platform System that Microsoft stacked up with Dell last year to build a cloud based on Windows Server 2012 R2, its Hyper-V hypervisor, plus Windows Server SMB 3.0 and Storage Spaces storage is probably a good indicator of what the initial scale might be for an Azure Stack cluster.

With this Cloud Platform System setup, which used the portal from the Azure Pack (inspired by the real Azure but distinct from it) and System Center 2012 R2 to manage it, a rack of machines had 32 nodes with a total of 512 cores, 8 TB of memory, and 282 TB of usable tiered storage comprised of disk and flash. (About 8 percent of that capacity was configured as flash to goose the performance of the underlying file systems.) The whole shebang is linked together with 10 Gb/sec Ethernet switching.

Assuming that an average virtual machine needs 1.75 GB of virtual memory and 50 GB of storage, then such a rack could host around 2,000 VMs, according to Microsoft and Dell. The Cloud Platform System was designed to scale up to four racks, and importantly, was designed to scale down as small as three nodes to start. So the scale starts at 144 VMs (taking out the cloud management overhead on the three nodes) and rises to around 8,000 VMs across four racks. For Microsoft Azure, these 8,000 VMs represent one fifth of the capacity of only one of its pods. For a single enterprise, those four racks provide a lot of VMs.
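For readers who want to check the rack math, here is a rough sketch of the arithmetic in Python. The per-rack and per-VM figures are the ones quoted above. Treating memory as binary terabytes and storage as decimal terabytes is our assumption, as is ignoring all management overhead. The point is only that the quoted 2,000 VMs per rack sits well below what raw memory or raw storage alone would allow, so some other limit or safety margin must be in play.

```python
# Figures quoted for one Cloud Platform System rack.
RACK_MEMORY_GB = 8 * 1024       # 8 TB of memory (assumed binary terabytes)
RACK_STORAGE_GB = 282 * 1000    # 282 TB usable storage (assumed decimal terabytes)
VM_MEMORY_GB = 1.75             # average VM memory, as quoted
VM_STORAGE_GB = 50              # average VM storage, as quoted
QUOTED_VMS_PER_RACK = 2000      # Microsoft and Dell's per-rack figure
MAX_RACKS = 4                   # maximum Cloud Platform System size


def resource_ceilings():
    """Return (memory-bound, storage-bound) VM counts for one rack.

    These are upper bounds that ignore any overhead for management
    software, so real capacity would be lower.
    """
    by_memory = int(RACK_MEMORY_GB // VM_MEMORY_GB)
    by_storage = int(RACK_STORAGE_GB // VM_STORAGE_GB)
    return by_memory, by_storage


by_memory, by_storage = resource_ceilings()
full_system_vms = QUOTED_VMS_PER_RACK * MAX_RACKS

print(f"Memory-bound ceiling per rack:  {by_memory} VMs")
print(f"Storage-bound ceiling per rack: {by_storage} VMs")
print(f"Quoted capacity per rack:       {QUOTED_VMS_PER_RACK} VMs")
print(f"Quoted capacity at {MAX_RACKS} racks:     {full_system_vms} VMs")
```

Under these assumptions, memory would cap a rack at roughly 4,700 VMs and storage at roughly 5,600, so the 2,000 figure leaves plenty of headroom on both.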

Vijay Tewari, the principal group program manager for Microsoft’s virtualization management tools, is in charge of the Cloud Platform System and will no doubt be helping build the reference architectures for Azure Stack with Microsoft’s hardware partners. He hints to us that the scale will be a bit larger for Azure Stack than for Cloud Platform System.

Our guess is somewhere around 32,000 or 64,000 virtual machines, which is plenty of headroom and which, using future “Broadwell” Xeon E5 v4 processors from Intel and pushing the compute density up quite a bit – the Cloud Platform System was using eight-core Xeons, which is not all that dense – could fit in maybe eight, twelve, or sixteen racks, depending on the processor chosen. As we know from reading The Next Platform, the Broadwell Xeon E5 chips will top out at 22 cores, so let’s pick a processor with 16 cores as a mid-level option in terms of cores, performance, and cost to base a hypothetical Azure Stack upon. Assuming the same 32 nodes per rack, that will give 1,024 cores and 4,000 VMs per rack. So eight racks gets you to 32,000 VMs and sixteen racks gets you to 64,000 VMs.
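The back-of-the-envelope extrapolation above can be sketched in a few lines of Python. This is our own guesswork made explicit, not a Microsoft sizing tool. It assumes two-socket nodes (implied by 512 cores across 32 nodes of eight-core Xeons) and assumes that VM density scales linearly with core count from the Cloud Platform System ratio of 2,000 VMs per 512 cores. The function names are ours.

```python
import math

NODES_PER_RACK = 32
SOCKETS_PER_NODE = 2        # assumption: 512 cores / 32 nodes / 8-core chips
CPS_CORES_PER_RACK = 512    # Cloud Platform System rack, as quoted
CPS_VMS_PER_RACK = 2000     # Cloud Platform System rack, as quoted


def vms_per_rack(cores_per_socket):
    """Return (cores, VMs) for one rack, scaling VMs linearly with cores."""
    cores = NODES_PER_RACK * SOCKETS_PER_NODE * cores_per_socket
    vms = cores * CPS_VMS_PER_RACK // CPS_CORES_PER_RACK
    return cores, vms


def racks_needed(target_vms, cores_per_socket):
    """Return the whole number of racks needed to host target_vms."""
    _, vms = vms_per_rack(cores_per_socket)
    return math.ceil(target_vms / vms)


for cores_per_socket in (8, 16, 22):
    cores, vms = vms_per_rack(cores_per_socket)
    print(f"{cores_per_socket}-core chips: {cores} cores and {vms} VMs per rack; "
          f"{racks_needed(32000, cores_per_socket)} racks for 32,000 VMs, "
          f"{racks_needed(64000, cores_per_socket)} racks for 64,000 VMs")
```

With 16-core parts this reproduces the eight and sixteen racks cited above, and with the top-bin 22-core part the same linear scaling would put 64,000 VMs in twelve racks.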

Interestingly, Tewari says that the infrastructure services that comprise Azure Stack will run on the stripped-down Nano Server implementation of Windows Server, which comes out with Windows Server 2016 and which is a key element of Microsoft’s container strategy for the platform. And instead of patching the underlying VMs that run the Azure Stack code itself (as distinct from the VMs that run customer code), Microsoft will do complete fresh installs of the hypervisor and Nano Server. (Customers could decide they want the same approach for their application VMs.)

One of the other issues that Microsoft has to work out is how frequently Azure Stack itself will be updated. “Right now, we are working out with customers what the cadence should be,” explains Neil. “Azure deploys hourly and daily, which is probably too frequently, and an annual cadence is too slow.” We suggested that Azure Stack could be updated on the April-October cadence of some commercial Linux operating systems and the OpenStack controller that is tied to it, and Neil said that Microsoft wanted to keep Azure and Azure Stack in synch as much as possible and that this cadence “is probably about right.”

Azure Stack will be a priced software product, but Microsoft has not divulged how it will be packaged or what it will cost and it will likely not do so until it is just about to ship or is shipping.