For someone like Steve Pawlowski, who spent well over thirty years at Intel working on a wide range of processors for an even more striking array of platforms, it seems only natural to take a cautious view of entirely new approaches to data processing that require a fundamental rethink of computing hardware and software.

While this generalization applies to those in the computing world who are suspicious about the actual future of any number of emerging technologies (quantum computers, for instance), there are other processor paradigms on the horizon that are meeting with similarly raised eyebrows. This is not so much because they are limited or faulty, but because with a slowing Moore’s Law around the corner and a bevy of new processor alternatives and ecosystems on the horizon, it is simply too hard to say which will win—and what will fall by the wayside as “interesting experiment” that may (or may not) crop up again in future years as applications themselves evolve.

Pawlowski, now VP of Advanced Computing at Micron Technology, represents two sides of the processor futures argument. On the one hand, he is seeing a shift in how future systems will be designed to achieve workload and efficiency targets—one that necessitates a move away from how we have traditionally considered things like performance, power consumption, and scalability. This has opened his eyes to the capabilities of the Automata processor, Micron’s attempt to bring a unique compute engine to market for a specialized set of select workloads, enough so that he is helping undertake that broader effort in his role at Micron following his 33-year career at Intel developing a host of server and processor platforms.

On the other side, however, these experiences over the years at Intel (he made the move from Intel Fellow to Micron in 2014) have informed the way he looks at practical market adoption of new approaches to processing—and how a potential user base might adopt and embrace something new.

Pawlowski started at Intel developing a single-board computer based on the 8086 processor, then had a hand in almost every other processor that shipped, ending with the Haswell Xeon chips. As anyone who has watched Intel over the years knows, some things worked. Some things did not. In short, Pawlowski is not jaded, but is balanced in the way that three decades in the chip business would likely make one. With that said, he does see potential for Automata given the power, performance, and other challenges ahead and, most important, in light of the bevy of forthcoming workloads that require deep memory pockets and fast solving of tremendously data-rich problems.

“When I was at Intel, and especially as we were looking at exascale computing as a set of problems, the focus was at first, how to get memory closer to the processor. Now it’s shifted to how to get the processor closer to memory.” With Automata, however, he says Micron is bringing those lessons together but then asking, “What about the role of memory for doing some of the processing?”

And herein lies the key. The memory becomes the compute.

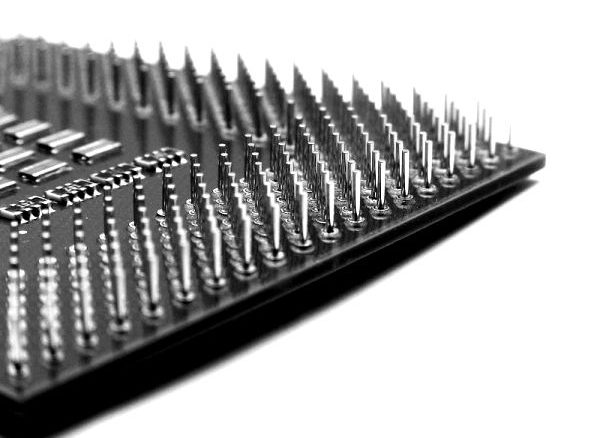

That question leads us back to the Automata processor. At its most basic, it is a programmable silicon device that taps into the parallelism inherent to computer memory, Micron’s bread and butter, for its computational thrust. These are programmable devices that leverage that parallelism with a novel interconnect network to do very rapid pattern matching and search across enormous volumes of data elements.

As Micron describes, “Unlike a conventional CPU, the Automata processor is a scalable, two-dimensional fabric comprised of thousands to millions of interconnected processing elements, each programmed to perform a targeted task or operation. Whereas conventional parallelism consists of a single instruction applied to many chunks of data, the AP focuses a vast number of instructions at a targeted problem.” These problems include anything with search and large-scale pattern matching at the base, which means cyber security, genomics, and other large-scale analytics problems that are confined by the available memory on traditional CPU-based systems.
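To make the "many instructions aimed at one problem" idea concrete, here is a minimal software sketch in Python. It is only a toy model, not Micron's programming model or SDK: the function name `scan` and the one-chain-of-states-per-pattern layout are assumptions made for illustration. Each pattern is treated as a small chain of state elements, and every chain is offered each input symbol as it arrives. On a CPU the inner loop over patterns runs sequentially; the premise of a device like the AP is that all of those elements would react to the symbol at the same time.

```python
def scan(patterns, stream):
    """Toy model of parallel pattern matching over a symbol stream.

    Hypothetical helper for illustration only. Returns a list of
    (start_offset, pattern) tuples, one per match found.
    """
    patterns = list(patterns)
    if any(len(p) == 0 for p in patterns):
        raise ValueError("patterns must be non-empty")

    # active[i] holds the positions in patterns[i] that are currently "lit",
    # i.e. how far each partial match of that pattern has progressed.
    active = [set() for _ in patterns]
    hits = []

    for offset, symbol in enumerate(stream):
        # In hardware, every pattern would see this symbol simultaneously;
        # here we simply loop over them.
        for i, pat in enumerate(patterns):
            # Position 0 is always eligible, so a match may begin at any offset.
            candidates = active[i] | {0}
            advanced = {pos + 1 for pos in candidates if pat[pos] == symbol}
            if len(pat) in advanced:
                # The final state element fired: report where the match began.
                hits.append((offset - len(pat) + 1, pat))
                advanced.discard(len(pat))
            active[i] = advanced
    return hits


if __name__ == "__main__":
    print(scan(["GATT", "ACA", "TTA"], "GATTACA"))
```

The stream is read exactly once regardless of how many patterns are loaded, which is the property that matters for the search-heavy workloads described above. In this software version the per-symbol cost still grows with the number of patterns, which is the part a memory-based fabric is meant to remove.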

One might imagine that this could be the right time for Automata to hit the market, given the wave of new genomics requirements, not to mention the momentum behind large-scale machine learning projects at the hyperscale companies and, soon, at large enterprises. As we already know, neural networks involve a computationally hefty training phase, one that is often re-run with new data to augment existing models. Unfortunately, the Automata processor is not a floating point device, and since training is a matrix multiply-heavy set of calculations, this is not where it will shine for neural networks. What it does do well and efficiently, at least in theory at this point, is operate very quickly against an already trained model, meaning that it could have a place in the two-system arrangement that is taking shape for neural network and machine learning workloads (one system, often with GPUs, for training, and another system for inference, or actually running the trained model).

On that note, GPU companies like Nvidia are targeting that two-phase approach with two separate GPUs: the Tesla M40 for compute-intensive training, and the lower-power Tesla M4, which takes a strength-in-numbers approach to inference. Others, including FPGA maker Xilinx, whom we spoke with in November, are seeing the value of FPGAs in this training and inference space as well. Both have programmability stories that have been told (learning CUDA, working with reconfigurable devices via OpenCL or other approaches), but for Automata, that tale is more complex because the programming model for such a device is still in development. That is something Micron is seeking to fix with its Automata computing center at the University of Virginia, where a lot of the early ecosystem and software development is taking place.

Other potential workloads outside of neural networks and machine learning involve very large search and optimization workloads. For instance, in one of the few published details about what Automata might be a fit for, a team showed how a proteomics application could scour through over 46,000 protein signatures in a single clock cycle—something that took a standard CPU-based approach far, far longer to do. While this was important as an early use case to showcase, Pawlowski says that the value of a project like this was understanding firsthand what the challenges are when bringing compute inside memory. As one example, he cites the hurdle of trying to do synchronous operations of compute with asynchronous ones in something basic like refresh.

Pawlowski says there are early stage efforts underway to add more computational complexity to the Automata effort, but he was not able to share details. This brings us back to the question of investment on Micron’s side, given the limited application domains (not to mention the increasingly rich ecosystem of processor options these devices will enter). Building further compilers, tooling, application front-ends, and other moving parts takes money, although he notes that the Automata development is not so far out of range from that of any startup with between 100 and 200 people working on a project.

“In my 33 years at Intel, and of course now, there have been many new capabilities that started with a good idea, but the technology was not good enough at the time. However, if the idea had come along ten years later, the technology would have been far enough along that it would have been a success.” It was interesting to hear him say this, since he is lumping the processor program into a broad camp of technologies he’s seen come and go, but he was not disparaging Automata, either. Recognizing the relative limitations in terms of programmability and application areas, at least for now, he knows there is still an ecosystem to build out. But even a rich ecosystem is no guarantee of success.

Still, he contends, “a lot of experiments don’t need billions of dollars until you see the path of where you want to go. It was a modest investment for Automata in terms of people and technology…the strategy is to make small bets to see if you can build a larger and richer portfolio.”

Interestingly, Automata has another advantage in terms of its modest investment strategy: Micron can produce the devices using the same process, techniques, and facility it uses for DRAM today, with only minor adjustments. This was, if one remembers, the key value proposition of Automata and its potential for market adoption when it was announced in 2013. Pawlowski says this doesn’t mean the devices will be priced at DRAM prices, but it is one possible bright spot.

Ultimately, the story here is more about a rethink in computing strategies for very large and memory-intensive problems. And it is also a story about future architectures since, as has been established, no one is expecting a massive influx of Automata-based systems to storm the Top 500 in the next couple of years, or even to be positioned as competitors to existing accelerators, co-processors, or “quantum” systems.

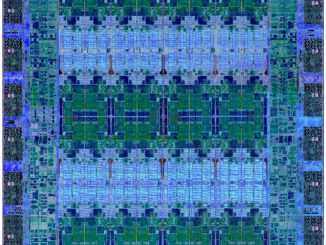

Pawlowski’s view of the system architecture of the future (in the 2020 to 2025 timeframe) is an interesting one, and while Automata is a part of that, the combined themes of heterogeneity and balance are key as well. Such a system would feature a logic device with a certain number of cores, a number that is balanced with memory stacked on top or close by so the bandwidth and capacity per core remain constant. These would have the requisite configurability nested (via something like an FPGA), and the system would be able to spin off parts of the workload to these devices. The host processors would not be the beefy “big silicon” featured on forthcoming supercomputers, however. “Cell phone chips and no boutique network or memory solutions” are key here, he argues. The goal would be to have that balanced capacity and bandwidth in an efficient package, all with the ability to handle the heavy message passing of MPI environments and large shared memory capabilities. These would be modular units of compute that could operate as single nodes or as a cluster of elements.

As for Automata in this scenario, Pawlowski says “the network will inform the best way to be able to map the algorithms on top of the machine. If the network has the configurability of the Automata processor, but at system scale, the efficiency of those algorithms will go up far more than today.”

There will be a few thousand such Automata processors shipped to key development entities in the early part of 2016, mostly in an effort to build the software ecosystem.

Seems to me the AP will excel for ‘connect the dots’ situations, particularly when the order or sequence of the respective ‘dots’ is meaningful, e.g., combinatorial, conditional graphs — a classic problem in intelligence analysis, military, commercial, medical, etc.

One such application we are investigating is the ability to assess a suite of computer programs for logic, arithmetic and semantic integrity in seconds. We think the AP will allow assessing the potentially millions of paths as each byte of code is considered (as text) with respect to all previous bytes.

First, thank you for writing this article, even though I had wished for a slightly more detailed technical look at the Automata processor. Probably such information is simply not available, but it is still interesting to read about it. I see Micron’s problem, though: without mass market appeal, especially in silicon production, it is hard to justify the initial investment. Low volume, high margin products are definitely more and more eroded due to the high cost of staying in the latest fab tech game. A problem certain other chip manufacturers have definitely found themselves in over the last decade.

I have already written about how it works, or rather, all there is that they could tell us a few years ago. I should have linked to it http://www.hpcwire.com/2013/11/22/micron-exposes-memorys-double-life-automata-processor/