It’s funny how Hadoop continues to crop up as the prime example of an open source platform that finds an early niche as an experiment in enterprise IT shops then suddenly explodes into its own vibrant ecosystem. In fact, the same thing might be happening with Spark, at least as an engine for the types of workloads that Hadoop was not so skilled at tackling.

The next big platform push from a range of companies will be around machine learning. Tuning and optimizing the hardware and software stack, cobbling it together with other core open source pieces (including Spark) and rolling it out in a nice, neat vertically integrated package. We are seeing this happening in the open source machine learning software stack for certain with companies like H2O and others trying to meld together disparate pieces to form a cogent, generally applicable foundation for a broad range of machine learning tasks, and now the big players are getting board, including one of the biggest—Big Blue.

IBM has seen this story play out already. Going back to the Hadoop example above, they saw the opportunity forming around the “big data” movement (if it can be called that) and snapped up bits of the Hadoop stack to form BigInsights—a product that five years in, has become a sticking point for new analytical tools and frameworks. A platform, as it were, for the broadest possible set of enterprise analytics applications.

It’s hard to tell what lessons IBM learned from that experience, but according to Rob Thomas, VP of Analytics at IBM, there are similarities in what is happening with machine learning now and what fed the move to build BigInsights. Thomas was there at the beginning of the BigInsights rollout, but tells The Next Platform that the difference now is that they are more actively engaging with the open source ecosystem through new platforms, including their SystemML project, which was just slated as an Apache Incubator project in November. Similar community-driven efforts within the Apache frame, including their work on Spark, are integrated into this very “open” strategy. Of course, this is all enlightened self-interest. But as we saw with companies like Cloudera, for instance, that is not necessarily a critical statement. There is value on both sides—for the user and the supporting company—that is, if it’s done right and the user demand is great enough to sustain the investment.

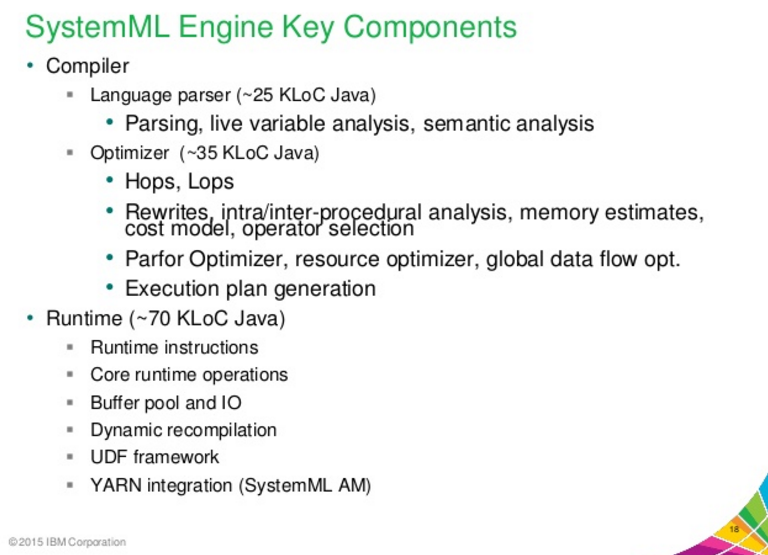

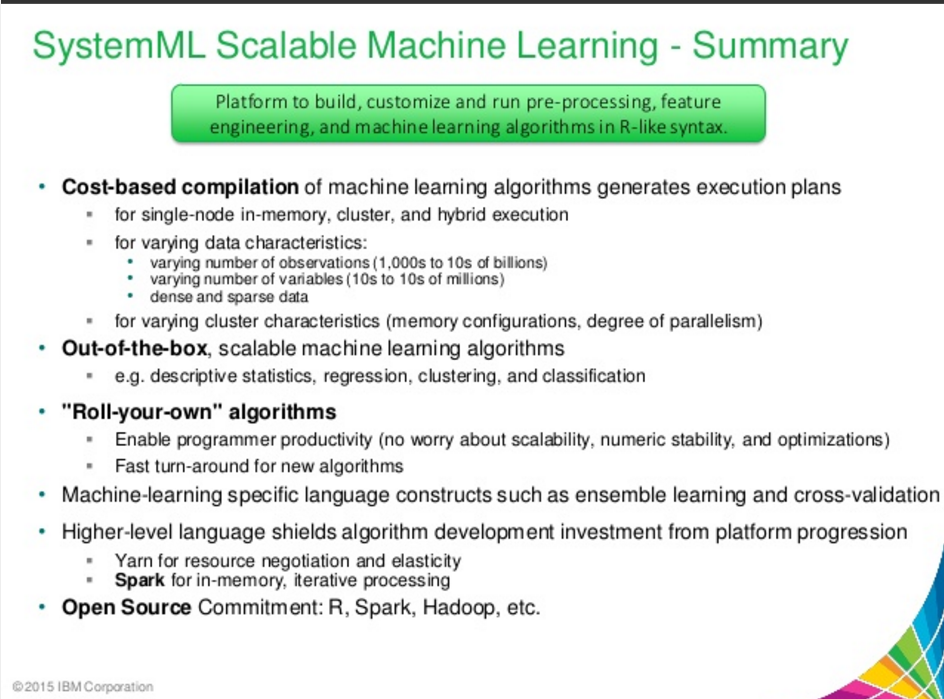

Unlike building a single monolithic framework to support current and future machine learning workloads, Thomas says the goal is to build an “analytics operating system” that has Spark at its base to handle the streaming and real-time processing of data while other components and capabilities, including SystemML, can sit on top to power new ways of looking at those datasets. At its core, SystemML is an engine for running algorithms that fit in the broad machine learning camp and includes an optimizer to prevent constant rewriting of algorithms once new data comes in—a persistent (and pressing) challenge that is keeping broader use of machine learning for real-time purposes at bay.

SystemML, by the way, is nothing new. IBM has been working on the capabilities for a few years and it already exists at the heart of the IBM BigInsights text analytics tooling. Whereas common language and API frameworks for machine learning like the Apache MLLib form the basis of such workloads, SystemML optimizes these algorithms on the fly as data streams in from Spark and other sources. It is optimized for data access, ingestion, and running models and is designed to stretch across a wide range of algorithms under the MLlib banner.

We will be digging into this in more depth in a future article because the “how it works” question is interesting, but the larger story is that IBM is working toward developing what Thomas calls a “next generation data stack.”

“We put things like Hadoop and NoSQL stores at the bottom of this stack, Spark the next layer up, the whole area of machine learning and advanced analytics on top of all of that, then APIs and a programming layer above, and at the top, data and database applications.”

Thomas tells us that they are seeing how this new data stack is putting different strains on infrastructure. He says they, in conjunction with their OpenPower Foundation partners, Nviida, are closely watching GPUs because they shine in these sorts of conditions. “We’ve been evaluating GPUs for machine learning and Spark because with CPUs these things won’t work at scale and for SoC’s it’s too expensive.” There is a much broader interest in GPUs in larger enterprise circles as well, he notes because of the limitations of traditional infrastructure.

But Thomas contends that the infrastructure, at least from an end user perspective, is mattering less. His view of that next generation data stack is rooted primarily in the cloud—IBM’s cloud, of course. With SoftLayer at the base and all that stack neatly bundled where users can pick and pull the required elements, he says the real adoption can begin because the path to getting up and running with machine learning requires a steep uphill climb.

IBM is set to have stiff competition if it wants to own whatever that real next-generation machine learning stack will be. A handful of big vendors, including chipmakers like Intel and Nvidia, already have footing in these markets with their own work on optimizing machine learning libraries for their own architectures. One can imagine how the OpenPower effort will, as we have already seen, step in to grab mind share away, but we are still at the dawn of a new era for general use of machine learning.

For the curious, here are some recent slides showing off all the neat tricks SystemML can do. As promised, we’ll circle around on this again this winter to pick apart the inner workings.

Hmm sounds a lot like Intel’s DAAL just wrapped in a language. Same as Intel’s DAAL doesn’t cover any state of the art ML algorithms.