Petar Radokjovic, who leads the memory systems division at the Barcelona Supercomputing Center, describes his desk as messy with the latest research on next-generation memory systems for high performance computing, and says that for a time, the sight of those papers was enough to give him an ulcer.

Specifically, it has been the relatively recent string of published research (not to mention a wave of vendor material) espousing the tremendous “memory performance gains” that will come from the emerging series of stacked memory technologies, including the Hybrid Memory Cube and High Bandwidth Memory. It’s not that these are not potentially game-changing technologies, Radokjovic explains. It is that the very term “memory performance” is problematic. In fact, he says, there is no such thing.

And to be fair, he has a point, even though the term “memory performance” has been bandied about with increasing frequency in the wake of announcements featuring stacked DRAM.

“There is also no such thing as fast memory or slow memory. It is memory and that means the speed comes from two completely different dimensions. Bandwidth and latency. And from what I could see, there is no one that really understands the two are different and cannot add up to a single version of memory performance or memory speed.”

The point is that while many in the field understand the difference in principle, there is no basis for describing memory performance as a single quantifiable figure or metric. That gap, Radokjovic notes, led at least one of the memory vendors his team at BSC worked with on its assessment of actual stacked memory capabilities to “apologize for their marketing departments.” The vendors do in fact understand that determining performance for these future devices is going to be an application-specific challenge, and one that requires considering the entire architecture the memory is hooked into to make a real assessment.

“You hear a lot about memory speed, memory performance, and these big performance gains from fast memory. But no one is ever clear whether that is bandwidth or latency. Even though they might know there is a difference, it makes no sense to talk about memory performance without talking about each of those two dimensions.”
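To make the two dimensions concrete, here is a minimal sketch in Python. It assumes a deliberately simple model in which one independent memory request costs a fixed latency plus its size divided by bandwidth. The two devices, `MEM_A` and `MEM_B`, and all of their figures are hypothetical and chosen only for illustration; they are not measurements of any real part.

```python
def access_time(n_bytes, latency_s, bandwidth_bytes_per_s):
    """Time for one independent request: fixed latency plus transfer time."""
    return latency_s + n_bytes / bandwidth_bytes_per_s

# Hypothetical devices (illustrative numbers, not vendor data).
MEM_A = {"latency_s": 50e-9, "bandwidth": 20e9}   # lower latency, lower bandwidth
MEM_B = {"latency_s": 80e-9, "bandwidth": 200e9}  # higher latency, higher bandwidth

def faster_device(n_bytes):
    """Return which hypothetical device finishes a single request first."""
    t_a = access_time(n_bytes, MEM_A["latency_s"], MEM_A["bandwidth"])
    t_b = access_time(n_bytes, MEM_B["latency_s"], MEM_B["bandwidth"])
    return "A" if t_a < t_b else "B"

if __name__ == "__main__":
    # A cache-line-sized request, a page-sized request, and a large stream.
    for size in (64, 4096, 1_000_000):
        print(f"{size:>9} bytes -> device {faster_device(size)} finishes first")
```

Under these assumed numbers the answer flips with request size: the low-latency device wins for a 64-byte request, the high-bandwidth device wins for 4 KB and larger. Neither device is simply “faster,” which is the point Radokjovic is making.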

And to back up for a moment, this is an issue that infuriates Radokjovic immensely. He passionately described the turmoil that led to the writing of a recently released research paper, “Another Trip to the Wall: How Much Will Stacked DRAM Benefit HPC?” At the beginning of his quest, he reached out to Dr. Sally McKee, one of the more well-known voices in memory research. “Like the initial memory wall paper, this study points out something that most of computer architects ‘knew’ without really understanding. And in order to fully exploit the potential of 3D-stacked DRAMs, we have to really understand what we can and what we cannot expect from these devices.”

What is interesting is that in most material about the role of stacked memory in large-scale HPC systems in particular, the emphasis is on scaling the memory wall by having a combination of fast and slow memory, with the fast memory being the stacked DRAM. (The Next Platform went into detail on the memory architecture of Intel’s impending “Knights Landing” Xeon Phi processors back in March and discussed how this memory architecture might work for applications and how it might evolve – the latter from a presentation at the ISC 2015 supercomputing conference).

“But memory is not slow or fast, it has latency and bandwidth, it doesn’t have performance. The application has performance when it runs in the system, but memory does not,” Radokjovic explains. And, he says, this has to stop, especially since supercomputing centers are being told that if they rush out to swap their DDRX for stacked memory they’ll see massive “memory performance gains.” There will be some, he notes, but certainly not for all applications. Rather, he says, stacked memory is far more likely to be a driving force for large-scale analytics and data-intensive problems. For LINPACK (as an example) and a wealth of other scientific computing codes (which echo the LINPACK benchmark’s measurements in terms of best architecture), there needs to be a cautious view going forward.

There will be an important place for stacked memory, but it sits at the border of high performance computing and big data, Radokjovic says. Applications with irregular access patterns, such as graph algorithms, will benefit because the emphasis is on structured data across sparse matrices, where there are many irregular accesses to memory and where far more of the data sits in memory than in cache. But LINPACK and the large host of other scientific computing problems that mirror it are CPU bound: the data comes into the caches and the crunching is done from there.
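The CPU-bound versus memory-bound distinction can be sketched with a roofline-style estimate. This is a standard modeling technique brought in here for illustration, not the method used in the BSC study. Attainable throughput is taken as the smaller of the processor's peak rate and the kernel's arithmetic intensity multiplied by memory bandwidth. The peak rate, both bandwidth figures, and the two kernel intensities below are hypothetical, and the model covers only the bandwidth dimension, so it says nothing about latency-bound irregular accesses.

```python
def attainable_gflops(intensity_flops_per_byte, peak_gflops, bandwidth_gb_per_s):
    """Roofline estimate: capped by compute peak or by intensity * bandwidth."""
    return min(peak_gflops, intensity_flops_per_byte * bandwidth_gb_per_s)

# Hypothetical machine parameters (illustrative only).
PEAK_GFLOPS = 1000.0   # assumed processor peak
DDR_BW = 100.0         # assumed conventional DRAM bandwidth, GB/s
STACKED_BW = 400.0     # assumed stacked DRAM bandwidth, GB/s

# Hypothetical arithmetic intensities in flops per byte moved from memory.
KERNELS = {
    "dense, LINPACK-like": 50.0,   # works mostly out of cache
    "sparse or graph-like": 0.25,  # streams data from memory
}

def speedup(intensity):
    """Estimated gain from moving to the higher-bandwidth memory."""
    before = attainable_gflops(intensity, PEAK_GFLOPS, DDR_BW)
    after = attainable_gflops(intensity, PEAK_GFLOPS, STACKED_BW)
    return after / before

if __name__ == "__main__":
    for name, intensity in KERNELS.items():
        print(f"{name}: estimated speedup {speedup(intensity):.1f}x")
```

With these assumed figures, the dense kernel is already capped by the compute peak and gains nothing from four times the bandwidth, while the low-intensity kernel scales with it. The same memory change yields very different application results, which is why the application, not the memory, is where performance is measured.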

Again, this is not to say that there won’t be “gains” but it is important to start thinking about those gains in raw bandwidth and raw latency figures. And further, to separate those two with a more balanced view into how those will fit into next-generation CPU architectures where supposedly the memory wall is somewhat lessened by closer, denser memory. But don’t say it’s faster. . . .

The frustrating part for Radokjovic and his colleagues is that even though it is common knowledge that bandwidth and latency are separate elements in understanding memory perfo… (doh!), none of the recent literature and talk about future supercomputers and data-intensive machines reflects the fact that there is no single way to think about performance without the application context. It is a marketing issue, it is a vendor issue, and it is a research issue, Radokjovic says.

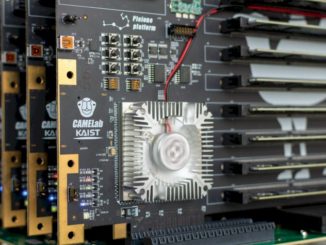

The BSC team has run a few rough benchmarks to showcase the relative performance gains that might be expected, but since there are no pre-production parts on the memory or chip side, a great deal remains speculative beyond the fact that the application is the true metric of performance, and the only one. But this is going to be a “game changer” nonetheless. “If you look at processors now, most of what we see is there because memory was far away; caches, pipelines, prefetchers. There was a lot of work required to get things around. So the question now becomes, how will CPU design change with memory systems like this? You can’t take your BMW and put a truck engine in it, after all.”

What Radokjovic’s team wants to make clear first, however, is that well before that BMW hits the road, understanding where the fast lanes are for one’s specific destination is a good starting point.

Burton Smith had much to say about this topic before his company, Tera, bought Cray in 2000. The Tera architecture was essentially the Gatling gun approach, with many outstanding memory references and an ability to switch processes on a per-clock-cycle basis.