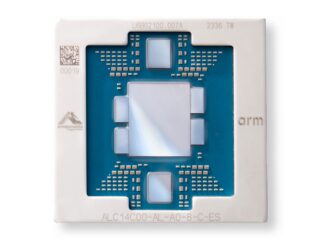

In the first part of this series, we talked about the nature of processor virtualization on different systems and how this affects the underlying capacity and performance of compute in cloud infrastructure. It is important to understand the nature of the capacity that you are buying out on a cloud because not all systems and hypervisors virtualize compute in precisely the same manner. This has important ramifications for the applications that run on these clouds.

You will recall that AWS provides at least two metrics for control: ECU (short for EC2 Compute Unit) and vCPU (meaning virtual Central Processing Unit). While researching for this article, I found that a number of web pages on AWS claim that the vCPU notion has replaced that of ECU. That didn’t seem right to me, since there are good reasons for both. Checking, I do find AWS contradicting such assertions; both are supported. Indeed, you might have seen earlier that you really do want ECU or some such capacity-based metric for control in addition to the count of the number of “processors.” ECU controls – or is your guarantee of – the usage of compute capacity.

We start this second part of the CPU virtualization on the cloud series by observing that for a given full SMP system, there is some maximum capacity available within that system. For some given workload, you can drive that SMP’s processors as hard as possible and you will get to a point where it is producing some maximum throughput value. If we install on that same SMP some number of operating system instances, and then run the same workload on all, we’ll find that it is still very unlikely that the sum of their throughput will exceed this capacity maximum. This is the basis for AWS’ notion of the multi-workload mix called ECU; other providers have a similar concept. Whatever the SMP systems used in building the cloud, there exists such a maximum capacity for that system.

So, whatever capacity you rent from the cloud for an operating system instance – in terms of AWS ECUs, for example – it is always some fraction of this maximum. Although we do not know this with certainty for AWS, with PowerVM it is true that the sum of the capacities specified over all active OSes is not allowed to exceed this maximum. You specify for each OS how much capacity is desired/required, and PowerVM works to meet/guarantee that expectation. At some level PowerVM knows that it can provide every operating system instance its own capacity because each instance’s capacity needs are declared up front; the sum of the fractional capacities does not exceed the whole.
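The "sum of the fractions does not exceed the whole" rule can be sketched as a simple admission check. This is only an illustration of the idea, not how PowerVM or any cloud provider implements it; the function name `can_admit` and the entitlement values are made up for the example.

```python
def can_admit(entitlements, requested, total_cores):
    """True if the requested entitlement still fits within the SMP's capacity.

    entitlements: capacities (in cores) already promised to active OS instances.
    requested:    capacity (in cores) the new OS instance asks for.
    total_cores:  the SMP's maximum capacity, expressed in cores.
    """
    return sum(entitlements) + requested <= total_cores


active = [1.25, 2.0, 0.5]          # hypothetical entitlements on a 4-core SMP
print(can_admit(active, 0.25, total_cores=4))   # exactly fills the system
print(can_admit(active, 0.5, total_cores=4))    # would exceed the whole
```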

The trick for the cloud providers is to take the parameters that you provide, and find – for at least a while – a location where those resources are available. Of course, that’s not enough. What stops some operating system from taking over the entire SMP once assigned there? To avoid that, to ensure every instance gets its share, the hypervisor on each system tracks for each instance some notion of capacity consumption. One way to do that is to track how much time your vCPU(s) have been on a core within some time slice. If your usage in that time slice exceeds the capacity you are paying for, your vCPU(s) may be forced to stop executing for a short while. That is true for all; if the others had reached their maximum capacity consumption, your OS instance is assured time as well. The intent is to ensure all vCPUs get their fair use of the available hardware capacity.

PowerVM offers an interesting variation on this theme, capped versus uncapped capacity, so I suspect others do as well. Capped capacity limits capacity consumption to nothing more than your rented capacity limit. For example, suppose that you are using two VPs and have arranged for the capacity implied by 1.25 cores. That allows your applications to assign their threads to up to two VPs at a time. But once, within a time slice, their combined capacity usage adds up to that of 1.25 cores, the hypervisor stops their execution. If, for example, one VP was being used all of the time and the other was being used on and off for a quarter of the time, the capacity limit would not be exceeded and your applications can continue executing like that indefinitely. This is capped mode; you use up to your limit and then you stop, at least until the next time slice begins.
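To make the capped accounting concrete, here is a minimal simulation of a single time slice. It is a sketch under stated assumptions, not PowerVM's actual algorithm: the 10 ms slice length, the microsecond tick, and the function name `capped_slice` are all chosen for illustration, and every VP is assumed to start running at the beginning of the slice.

```python
def capped_slice(demands_us, entitlement_cores, slice_us=10_000):
    """Return the core time (in microseconds) each VP gets in one capped slice.

    demands_us:        how long each VP would like to run during the slice.
    entitlement_cores: rented capacity, e.g. 1.25 cores.
    slice_us:          length of the accounting slice (assumed value).
    """
    budget_us = int(entitlement_cores * slice_us)   # total core time allowed
    ran_us = [0] * len(demands_us)
    for _ in range(slice_us):                       # advance 1 microsecond per step
        for vp, want in enumerate(demands_us):
            if ran_us[vp] < want and budget_us > 0:
                ran_us[vp] += 1                     # VP runs for this microsecond
                budget_us -= 1                      # and consumes entitlement
        if budget_us == 0:
            break                                   # limit reached: everyone stops
    return ran_us


# One VP busy all the time, the other busy a quarter of the time: fits in 1.25 cores.
print(capped_slice([10_000, 2_500], 1.25))
# Both VPs busy all the time: the budget runs out partway through the slice.
print(capped_slice([10_000, 10_000], 1.25))
```

In the second case both VPs are cut off well before the slice ends, which is the "you stop until the next time slice begins" behavior described above.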

For uncapped capacity, compute consumption is still being measured, but an additional question gets asked when the capacity limit is reached: Does any other operating system VP need this otherwise available capacity? If not, if no other operating system VP is waiting, your VPs get to continue executing. But if so, whether another VP is already waiting or shows up later, that waiting VP takes over and you cease execution for a moment. If that VP quickly ends its need for a processor, your VP – which is then in a waiting/dispatchable state – might be provided the opportunity to resume executing and continue exceeding its capacity consumption limit.
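The extra question asked in uncapped mode boils down to a small decision rule. The following is a hedged sketch of that rule only; the name `may_keep_running` and its parameters are hypothetical, and a real hypervisor weighs many more factors (priorities, weights, affinity) than this.

```python
def may_keep_running(used_us, budget_us, capped, other_vp_waiting):
    """Decide whether a VP stays on its core at this instant."""
    if used_us < budget_us:
        return True                  # still inside the paid-for capacity
    if capped:
        return False                 # capped: limit reached, wait for the next slice
    return not other_vp_waiting      # uncapped: continue only if nobody else needs the core


# At the capacity limit (12.5 ms used of a 12.5 ms budget), try all four combinations.
for capped in (True, False):
    for waiting in (True, False):
        print(f"capped={capped!s:5} other_vp_waiting={waiting!s:5} ->",
              may_keep_running(12_500, 12_500, capped, waiting))
```

Only the uncapped instance with no competing VP keeps its core once the limit is reached.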

Seems good, right? Using our example, that could mean that your uncapped, 2-VP and 1.25-core capacity PowerVM OS instance could continue executing indefinitely on two cores. You see the throughput of 2 full cores rather than the capacity of 1.25 cores. Nice. Nice as long as the SMP system’s usage remains low enough to allow your vCPUs to continue. Folks get used to that extra throughput, the throughput and response time of 2 versus 1.25. Tomorrow, though, a less kindly OS moves into your digs (i.e., the same SMP) and it intends to stay busy. No more available capacity; capacity becomes limited to 1.25 and throughput slows to what you paid for. Notice that this is not so much a performance problem as it is a concern with managing expectations. There is nothing you did to harm performance; another OS merely got what it deserved.
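A back-of-the-envelope version of this example follows. It assumes both VPs are busy all the time and collapses "what the rest of the SMP is not using" into a single number, `spare_cores`. Both are simplifications introduced here, and `effective_cores` is a hypothetical helper, not a PowerVM interface.

```python
def effective_cores(vps, entitlement, capped, spare_cores):
    """Cores' worth of throughput an always-busy OS instance can actually use."""
    demand = float(vps)                       # each busy VP can use at most one core
    if capped:
        return min(demand, entitlement)       # never more than what was rented
    # Uncapped: entitlement plus whatever nobody else currently wants.
    return min(demand, entitlement + max(spare_cores, 0.0))


print(effective_cores(2, 1.25, capped=False, spare_cores=4.0))  # quiet neighbors
print(effective_cores(2, 1.25, capped=False, spare_cores=0.0))  # busy neighbor moves in
print(effective_cores(2, 1.25, capped=True, spare_cores=4.0))   # capped, regardless
```

The drop from the first line to the second is the swing in expectations described above: nothing about your instance changed, only the neighbors.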

The Capacity Is What It Is Because Of Cache

We wrote of how there is a maximum capacity available in any single SMP. You can successfully argue that there are a lot of ways to measure that capacity, but what is true is that most such measures count on much of the data accessed being found in the processor’s cache. Because a cache access is so much faster than an access to DRAM, the maximum capacity measured counts on some fairly significant cache hit rate. If the cache were not there, the maximum capacity would be very much less. It follows that if the cache hit rate were somehow considerably lessened, so would the maximum capacity. That last point is the lead-in to the remainder of this section.
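The dependence on hit rate can be seen with the standard average-access-time formula: a weighted mix of cache and DRAM latency. The latencies below (2 ns for cache, 100 ns for DRAM) are round illustrative numbers, not measurements of any particular system, and the single-level cache is a deliberate simplification.

```python
def avg_access_ns(hit_rate, cache_ns, dram_ns):
    """Average memory access time for a single-level cache model."""
    return hit_rate * cache_ns + (1.0 - hit_rate) * dram_ns


CACHE_NS = 2.0     # assumed cache hit latency
DRAM_NS = 100.0    # assumed DRAM access latency

for hit_rate in (0.99, 0.95, 0.80):
    t = avg_access_ns(hit_rate, CACHE_NS, DRAM_NS)
    print(f"hit rate {hit_rate:.0%}: about {t:.1f} ns per access")
```

Even a modest drop in hit rate multiplies the average access time, which is why a capacity measured with warm caches cannot be sustained when the caches stay cold.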

Recall that even though your OS is sharing the SMP with a number of others, you are still expecting your fraction of the SMP’s total capacity. The reason that processor virtualization exists in the first place is to allow them all to share the SMP’s capacity. And as long as that sharing is “nice”, then the performance of your OS and every other is fine; they are sharing the measured capacity. So let’s have something happen – and happen for quite a while – that is not “nice.”

Before we do, let’s drive home a related concept. We assumed that the sum of the capacity provided to each OS instance will be less than the capacity available in the SMP in which they reside. We also know that each OS instance can have some number of VPs. Although the total capacity is fixed, it is not a requirement that the sum of the VPs across all the active OSes be equal to the number of physical processor cores (or SMT threads). This means that the VP count can be over-subscribed, potentially well in excess of the number of processors. In fact, it is sometimes preferred that this is the case; at low to moderate levels of CPU utilization, having more VPs available to your OS might result in better response times; the parallel execution – rather than being queued up behind a single VP – allows some of the work to be perceived as completing sooner.

But what this means at high utilization is that there can be many more active VPs than there are physical processors to assign them to. And what this means is that, to ensure fair use of the capacity, the hypervisor will be time slicing the use of the physical processors by the excessively many VPs. For example, let’s assume 32 physical processors and 256 active VPs. This is not unreasonable; there can be hundreds of OS instances sharing this system. To play fair, the hypervisor must roll all 256 VPs through the 32 processors and do so quickly in order to provide the illusion of making ongoing progress by all.
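The arithmetic behind that example is worth spelling out. The sketch below assumes the simplest possible policy: every VP is always runnable, all are treated equally, and the hypervisor rotates them round-robin with a fixed dispatch quantum. The 1 ms quantum and the function name `timeslice_view` are assumptions for illustration, not values from any real hypervisor.

```python
def timeslice_view(physical, active_vps, quantum_ms):
    """Return (VPs per processor, each VP's share of a processor, wait between turns)."""
    vps_per_proc = active_vps / physical
    share = min(1.0, physical / active_vps)                 # fraction of time on a core
    wait_ms = max(0.0, (vps_per_proc - 1) * quantum_ms)     # time spent off the core per rotation
    return vps_per_proc, share, wait_ms


vps_per_proc, share, wait_ms = timeslice_view(physical=32, active_vps=256, quantum_ms=1.0)
print(f"{vps_per_proc:.0f} VPs per processor, "
      f"each on a core {share:.1%} of the time, "
      f"waiting {wait_ms:.0f} ms between turns")
```

With eight VPs rotating through each processor, every VP spends most of its time waiting, and each return to a core is a fresh chance to find the cache cold.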

So, now, back to the cache. When a task begins execution on a core, the task’s data state does not reside in the cache. It takes time for that state to be pulled into the cache from the DRAM or from other caches. Although it is normal to have some number of subsequent cache fills during normal execution, some of the task’s state tends to stay in the core’s cache for reuse while the task is executing on the core. With any luck, that task tends to stay on that core, having its performance benefitted by its still cache-resident data (and instruction) state; keeping data quickly accessible is why cache exists after all.

Even in a virtualized environment, a task can still stay on a processor for long enough to keep its cache state hot, the cache hit rate high, and the resulting throughput similarly high. This is especially true at lower utilization and in uncapped partitions. If competition for a processor and its cache is low, the task’s cache state remains that of just that task.

But now let’s increase the virtualized SMP’s utilization by adding more work (and more VPs). We may significantly over-subscribe the number of active VPs as a result. Your task’s state, state which took time to load into the cache when its VP was assigned to a processor, gets wiped out of the cache when the hypervisor switches your VP off of the processor, replacing it with another VP. When your task’s VP subsequently switches back onto a processor, it might not even be the same processor. As a result, when your VP is restored to execution, time is again spent restoring its state to the cache.

Picture this as occurring with every VP switch. The cache hit rate drops. Less work is being accomplished. Throughput is down. Tasks take longer to complete their work, stay on their VP rather than getting off on their own, and the number of active VPs remains higher longer. Sooner or later this effect may clear, but during this time what you had perceived as acceptable response times might not be.
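A crude way to picture the cost is to treat the cache refill after every VP switch as dead time taken out of each dispatch. This overstates things, since real work does proceed (slowly) while the cache warms, and the refill time of 100 microseconds is purely an assumed figure. The helper `useful_fraction` is hypothetical.

```python
def useful_fraction(quantum_us, refill_us):
    """Fraction of each dispatch left for useful work after re-warming the cache."""
    return max(0.0, quantum_us - refill_us) / quantum_us


REFILL_US = 100.0   # assumed time to pull a task's working set back into cache

# As VPs get switched more often, each dispatch gets shorter
# while the refill cost per dispatch stays the same.
for quantum_us in (10_000.0, 1_000.0, 200.0):
    frac = useful_fraction(quantum_us, REFILL_US)
    print(f"dispatch of {quantum_us:>7.0f} us: {frac:.0%} useful")
```

The shorter the time each VP gets on a core, the larger the share of it that goes to reloading state instead of doing work.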

The Cloud Providers Know Their Stuff

You need to be aware of these effects with processor virtualization. But it is fair to assume that those bringing you the cloud know – or should know – these effects as well. Appropriate management of their systems is a big deal to them and these are just a few of the factors involved.

It is also fair to assume that they have more capacity throughout their entire environment to meet all of the performance needs of their customers, even when all of the OS instances are busy. They own systems kept on active standby, available for when capacity demands increase or when the inevitable failure occurs. Some of these are powered off because they wisely don’t want to spend any more energy than they really need.

They also have the ability to move OS instances from one SMP to another. As one system’s utilization increases into a dangerous zone, they may decide to move even your OS to a less used system. The converse is also true; having many OS instances spread across many underutilized systems naturally suggests coalescing these OSes onto fewer systems, at the very least to save the energy of the now unused systems. Knowing when to move OS instances and when to shut down or reactivate systems tells you that they are monitoring their own environment closely. Like I mentioned earlier, these folks often have load balancing down to a science; there is a financial benefit to them to do so.

As with most companies, though, there is also a financial benefit to them in keeping their customers and potential new customers happy. Allowing easily measurable swings in what would otherwise be constant and reasonable performance is no more to their benefit than it is to yours. So although there might also seem to be a financial benefit to them to cram as many OS instances into as few active SMP systems as possible, most know the performance side effects of having done so. Excessive overuse of their systems is not good business.

Still, even single OS instance performance swings being what they are, occasionally things just don’t work out and a resource gets overutilized, at least for a while.
