The “Tomahawk” switch chips from Broadcom, which are based on the new 25G Ethernet standard crafted by hyperscalers, are starting to appear in more and more devices. This week, it is Dell’s turn to roll out some iron.

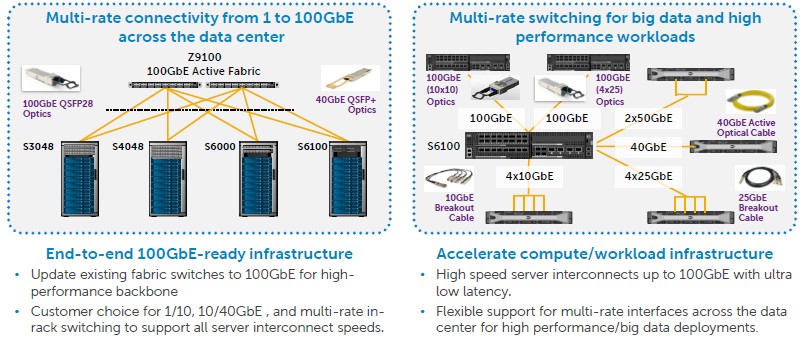

Back in April, Dell was the first vendor out the door with switches based on the Tomahawk chips, which support port speeds of 25 Gb/sec, 50 Gb/sec, and 100 Gb/sec (with one, two, or four lanes per port) and which also can be downshifted to run at 10 Gb/sec or 40 Gb/sec speeds to be compatible with Ethernet server adapter cards and other switching gear in the network that might be based on these lower speeds.

At the time, Arpit Joshipura, vice president of product management and strategy at the Dell Networking division, told The Next Platform that it cost around $5,000 per port for a 100 Gb/sec switch based on earlier generations of ASICs that support 10 Gb/sec lanes. The new Tomahawk ASICs, as well as the Spectrum chips from Mellanox Technologies and the XPliant chips from Cavium Networks, support lanes running at 25 Gb/sec, so the bandwidth per port can be much higher and the cost per bit transferred much lower, even though a port costs maybe 1.3X to 1.5X more, comparing 10 Gb/sec to 25 Gb/sec or 40 Gb/sec to the new 100 Gb/sec. The plan with the Z9100-ON fixed switch, which has 32 ports running at 100 Gb/sec and which can use cable splitters to add ports at lower speeds, was to get the cost of the switch plus its optics and cabling down to under $2,000 per port.
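To put rough numbers on that cost-per-bit claim, here is a minimal Python sketch. It uses only the ratios quoted above (a port that costs 1.3X to 1.5X more while carrying 2.5X the bandwidth); the function name is ours, and the result is a back-of-the-envelope ratio, not vendor pricing.

```python
def relative_cost_per_bit(port_cost_ratio: float, old_gbps: float, new_gbps: float) -> float:
    """Cost per bit of the new port relative to the old one (1.0 means no change).

    port_cost_ratio is how much more the new port costs (e.g. 1.3 for 1.3X).
    """
    bandwidth_ratio = new_gbps / old_gbps
    return port_cost_ratio / bandwidth_ratio


# The two generational jumps mentioned in the text, at both ends of the
# quoted 1.3X to 1.5X port price premium.
for old_gbps, new_gbps in [(10, 25), (40, 100)]:
    for premium in (1.3, 1.5):
        rel = relative_cost_per_bit(premium, old_gbps, new_gbps)
        print(f"{old_gbps} -> {new_gbps} Gb/sec at {premium}X port cost: "
              f"{rel:.2f}X cost per bit")
```

Under those assumptions, each bit moved costs somewhere between roughly half and six-tenths of what it did on the older generation, before optics and cabling are counted.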

Mellanox, as The Next Platform reported last week when Arista Networks launched its 25G products, is being very aggressive in its pricing, with a Spectrum SN2700 switch initially priced at $1,531 per port when it was launched in June and now selling for $590 per port with four 100 Gb/sec ConnectX-4 server adapters and eight copper cables thrown in. Arista’s most similar product, the 7060CX-32, has a list price of under $1,000 per port, not including cables.

The cost of the cabling usually exceeds the cost of the switches in hyperscale and HPC datacenters, so you have to factor in that price when you are making comparisons. As you can see, the vendors do not make it easy to make comparisons, and this is intentional.

With its latest datacenter switch, the S6100-ON, Dell is going modular, much as it has done with its PowerEdge-FX2 servers aimed at cloud builders, hyperscalers, and large enterprises. The S6100-ON splits the difference between the giant core switches that have many pluggable switch modules for adding capacity or upgrading speeds and fixed function 1U or 2U rack switches that cannot be altered. A modular rack switch like the S6100-ON offers some flexibility but also cuts out some of the cost compared to monster switches.

Like other Tomahawk switches, the new S6100-ON top-of-rack switch offers 3.2 Tb/sec of aggregate switching bandwidth – that’s one-way, with 6.4 Tb/sec bi-directional. This is 2.5X the bandwidth of prior switches based on Broadcom’s popular “Trident-II” ASICs, which stands to reason given the 2.5X boost in port speeds on the switches. The S6100-ON has four modules, and customers can mix and match different modules in the same 2U enclosure. One module has four 100 Gb/sec CXP ports and four 100 Gb/sec QSFP28 ports; the QSFP28 ports can have splitter cables that break each port down into four ports running at 25 Gb/sec or two ports running at 50 Gb/sec. Another module just offers eight QSFP28 ports, and a third module offers sixteen QSFP+ ports running at 40 Gb/sec with optional breakout cables to split each port into four 10 Gb/sec ports.
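The port math behind those figures is easy to check. The sketch below is a rough illustration in Python that uses only the port counts and speeds quoted above; the module labels and function names are ours, not Dell's, and it counts front-panel port capacity only.

```python
# Port groups per module as (port_count, gbps_per_port), taken from the text.
# The dictionary keys are descriptive labels, not Dell part names.
MODULES = {
    "4x CXP + 4x QSFP28": [(4, 100), (4, 100)],
    "8x QSFP28": [(8, 100)],
    "16x QSFP+": [(16, 40)],
}
MODULES_PER_CHASSIS = 4


def module_gbps(port_groups):
    """One-way front-panel capacity of a module in Gb/sec."""
    return sum(count * speed for count, speed in port_groups)


def breakout_ports(port_count, port_gbps, child_gbps):
    """Number of lower-speed ports when every port is split with a breakout cable."""
    if port_gbps % child_gbps != 0:
        raise ValueError("child speed must divide the port speed evenly")
    return port_count * (port_gbps // child_gbps)


for label, groups in MODULES.items():
    per_module = module_gbps(groups)
    chassis_tbps = MODULES_PER_CHASSIS * per_module / 1000
    print(f"{label}: {per_module} Gb/sec per module, "
          f"{chassis_tbps} Tb/sec with four of them")

# Breakout options described in the text.
print(breakout_ports(8, 100, 25), "ports at 25 Gb/sec from eight QSFP28 ports")
print(breakout_ports(8, 100, 50), "ports at 50 Gb/sec from eight QSFP28 ports")
print(breakout_ports(16, 40, 10), "ports at 10 Gb/sec from sixteen QSFP+ ports")
```

Four of the 100 Gb/sec modules add up to the 3.2 Tb/sec quoted for the switch, while a chassis filled with the 40 Gb/sec modules tops out at 2.56 Tb/sec of port capacity.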

The modules supporting 40 Gb/sec ports will be available in January 2016 and the ones supporting 100 Gb/sec will be available at the end of the first quarter, so Dell is giving customers a long time to plan and to comparison shop with the emerging 25G competition. Pricing has not been announced yet, but Amit Thakkar, director of product management for the networking division at Dell, tells The Next Platform that it will be competitive with Arista when it comes to price.

The ON in the name of the switch conveys the fact that the switch does not just run Dell’s own OS9 network operating system (formerly known as FTOS), but can also be equipped with the ONIE open network operating system installer and load up Cumulus Linux from Cumulus Networks, Switch Light from Big Switch Networks, Netvisor from Pluribus Networks, and OcNOS from IP Infusion. (The latter company has been shipping a routing and MPLS stack longer than the others have been shipping their switching operating systems, although it is newer to the Dell open networking party.)

So who is interested in these 25G switches? When the 25G standard was announced, the story line was that these switches were really aimed at hyperscalers and cloud builders and their unique needs for high bandwidth, high port count, low power consumption, and low cost per unit of bandwidth. To which The Next Platform has been thinking, doesn’t everyone want that? Or, to be even more precise, those companies who have not yet upgraded from 10 Gb/sec on their networks to 40 Gb/sec are going to be much happier to move to 100 Gb/sec (with splitters down to 25 Gb/sec or 50 Gb/sec possibly to make the most of the ports today and save the higher bandwidth for tomorrow).

“We have had a pretty extensive beta program, and interest has been from all over the spectrum,” says Thakkar, who agrees with our assessment that 100 Gb/sec networking should have been available four years ago when 40 Gb/sec switching became more common, but the 25 Gb/sec SERDES circuits were not ready for primetime, as they are now. “We could not use 25G SERDES to expose 32 ports running at 100 Gb/sec inside of a 1U chassis,” Thakkar explained. The other issue is that the current generation of 100 Gb/sec switches can support both low power and high power cabling, which means they can be used for short range across a few racks and long range across the datacenter, unlike many 40 Gb/sec devices and early 100 Gb/sec devices based on 10 Gb/sec SERDES.

The question now is whether there is pent-up demand for bandwidth that 40 Gb/sec and prior 100 Gb/sec switches did not satisfy – regardless of the customer segment, but not regardless of the breadth of the infrastructure any organization is supporting – that the new 25G products will. The answer is yes, and particularly for those customers that were using link aggregation to make ten 10 Gb/sec ports look like a 100 Gb/sec port in their networks. (In effect, they do outside of the switch what switch vendors were doing inside the switch.) Among the hyperscalers like Google and Microsoft, who spearheaded the 25G spec and who are seeing their bandwidth needs go up dramatically, there is absolutely pent-up demand for 100 Gb/sec switches using chips like the Tomahawks. Thakkar says that once the price of the optics comes down and 25 Gb/sec ports are added to servers, this will drive even more demand, and across customers other than hyperscalers. The economics for server adapters and switches will make 10 Gb/sec the new 1 Gb/sec, as Mellanox has said, with 25 Gb/sec being the new 10 Gb/sec.

Of course, predicting the future is hard. But Tom Burns, general manager of Dell Networking, added this: “Our customer demand for beta and test units for 100 Gb/sec platforms has far exceeded that for our first 40 Gb/sec products.”

Sounds like the datacenter is going to get the bandwidth it craves, and perhaps at a price it can afford, especially if the 25G price war keeps heating up.
