Those of us who focus on the infrastructure layer of the next platforms that companies are building sometimes forget about the applications that ride on those platforms and give them a reason to be. There is a transformation underway in one of the key enterprise software markets in the world, and system makers worldwide are trying to figure out how to ride what is arguably the third major wave to lift German software giant SAP. That wave is the HANA in-memory database and the application stack and development platform that has been steadily building around it.

Both Dell, which is the number two server maker in the world behind Hewlett-Packard, and SGI, which is the number two high-end supercomputer maker behind Cray, want a piece of this action, and they have forged an alliance to provide systems to customers who need much larger memory footprints than can be provided by a standard two-socket or four-socket Xeon server.

The market opportunity for HANA is immense, and we are just at the beginning of what most people expect will be a vast migration wave that will sweep across the SAP customer base, which numbers 291,000 customers across 190 countries. It is hard to say how much money is up for grabs, but it is easily tens of billions of dollars over the next several years for hardware and systems software as customers transition from data warehouses and online transaction processing based on disk-based relational databases from Oracle, IBM, Microsoft, and SAP to HANA in-memory databases from SAP. To be sure, not all of those customers at SAP will ditch their Oracle, DB2, or SQL Server databases, since databases tend to be the stickiest part of the datacenter. All of those databases have some in-memory features as well, and these will work with SAP’s Business Suite applications. But SAP is tightly (although not monopolistically) coupling HANA to Business Suite, and wants to undercut its application rivals (which Oracle, IBM, and Microsoft all are to varying degrees) with an integrated offering, called Business Suite 4 HANA, shortened to S/4HANA in the SAP lingo, that will give SAP a much larger portion of the revenue pie at its customers while presumably saving them money and radically boosting the performance of their applications.

In many cases – including at SAP itself, which uses HANA to underpin its data warehouse as well as its transaction processing systems – the performance on HANA is so good that customers run ad hoc queries against live operational data instead of first offloading and consolidating that information into a data warehouse. This means customers get better answers faster, with less data massaging. Data warehouses do not go away, however; they are still used for long-term trend analysis and to support legacy applications.

But here’s the problem: Standard workhorse servers only have so much memory, and even with the sophisticated data compression techniques used with HANA to cram more data into main memory in the systems that run it, some companies are running out of gas, even on machines that have eight processor sockets and can support 12 TB of main memory, as the most recent “Haswell” Xeon E7s do. But SGI’s UltraViolet 300H systems, which are shared memory systems based on SGI’s NUMALink 7 interconnect, can scale well above that for both processing and main memory.

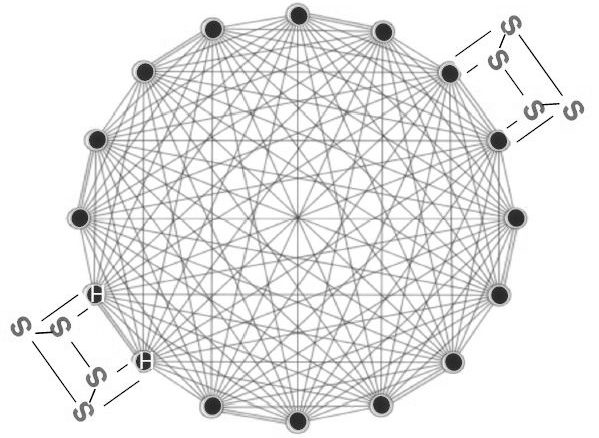

As The Next Platform has previously reported, the UV 300H machines (and the plain vanilla UV 300 systems that are not tuned specifically for HANA) trade off system scalability for very tight coupling of processors and memory in a shared memory system. The UV 300 family has an all-to-all topology, meaning all nodes in the shared memory system are equidistant, in terms of memory latency, from all other nodes. For all intents and purposes, it looks like a big symmetric multiprocessing (SMP) system, and it offers a much easier programming model and more consistent memory access latencies than the non-uniform memory access (NUMA) clustering approach used on the more scalable UV 2000 systems, which employ the NUMALink 6 interconnect, and on the future UV 3000 systems, which will also deploy SGI's NUMALink 7 interconnect at some point. The UV 300 system and its UV 300H HANA variant support up to 32 processor sockets in a single system image and can span up to 24 TB of memory now, with 48 TB possible once denser 64 GB memory sticks become reasonably affordable.
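The memory ceilings quoted here hang together with simple arithmetic. The check below assumes 24 memory slots per Haswell Xeon E7 socket, a figure inferred from the 12 TB ceiling for eight-socket machines with 64 GB sticks rather than taken from SGI's specifications, and uses 1 TB = 1,024 GB:

```latex
\[
\frac{12\,\text{TB}}{8\ \text{sockets}} = 1.5\,\text{TB per socket} = 24 \times 64\,\text{GB}
\]
\[
32 \times 24 \times 32\,\text{GB} = 24{,}576\,\text{GB} = 24\,\text{TB},
\qquad
32 \times 24 \times 64\,\text{GB} = 49{,}152\,\text{GB} = 48\,\text{TB}
\]
```

In other words, under that assumption the 24 TB figure corresponds to 32 GB sticks in every slot of a 32-socket UV 300H, and doubling the stick size is what gets it to 48 TB.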

While Dell has plenty of engineering chops, it has shown no interest in developing its own interconnects, and it has not even been particularly interested in building eight-socket PowerEdge servers from Intel's Xeon E7 processors and chipsets. We talked to Ashley Gorakhpurwalla, general manager of servers at Dell, back in May ahead of the Haswell Xeon E7 launch, and he told The Next Platform that Dell had no plans to make an eight-socket server of its own, even though building one with Intel's chips and chipsets does not take much effort.

Now we know why, and it was not that Dell was leaving the opportunity for Hewlett-Packard, Lenovo, Oracle, and SGI to chase alone. It was that Dell was cooking up a deal to resell SGI's UV 300H systems to support HANA workloads.

Chasing A $1 Billion Opportunity

As we have talked about in our detailed analysis of SGI earlier this year, SGI has spent quite a bit of time casing the SAP HANA market and trying to figure out how to capture the high-end chunk of it. SGI has said in the past that it expects anywhere from 5 percent to 10 percent of the customers who migrate to HANA, whether they run Business Suite, homegrown applications, or a mix of the two, to need big scale-up systems, and that this slice of the market will come to something north of $1 billion in server sales in 2018.

"For us, that is a nice sized market," says Jorge Titinger, SGI's president and CEO, and that is true enough, particularly if SGI can capture a large portion of it because its machines scale further than plain vanilla Xeon E7 servers. Titinger tells The Next Platform that the market for big HANA boxes with more than four sockets is around $350 million in 2015, up from a projection of $230 million made late last year that we saw when we put our SGI analysis together back in early May. SGI's forward-looking financial models, under which it wants the UV family of machines to account for around 10 percent of revenues this fiscal year, assume that about 6 percent of SAP HANA customers will need a big box. Other UV 300 sales for more generic database and analytics workloads are also in that model, and we presume there is a revenue bump as well from the UV 3000 supercomputer launch, which we expect sometime around September but which Titinger did not discuss.

As we have said before, the advent of in-memory architectures could drive a resurgence of large scale NUMA systems, although we do believe that Power and Sparc architectures will face adoption challenges outside of their existing bases and that scalable Xeon E7 iron from Hewlett-Packard, whose “Kraken” Superdome X machine scales to 16 sockets, and SGI’s UV 300H, which scales to 32 sockets, will probably have a much easier time in the enterprise than regular eight-socket Xeon machines from the likes of HP, Lenovo, Fujitsu, and so on. Enterprise customers like headroom, and they have never been shy about paying a premium for systems that give them that. There is nothing worse than running out of headroom on a mission critical system. SGI and Dell – as well as HP – are counting on this.

“Our biggest challenge is getting these machines in the hands of a much bigger sales force than we have,” explains Titinger. “The partnership with Dell is great in that we have zero product overlap – they don’t have anything over four sockets, and something like thirty times the sales force that is enterprise savvy. We complete their capability to offer the largest configurations for HANA.”

The issue that Dell is facing, and that SGI solves for the company, is that while partitioning HANA instances is fine for in-memory data warehouses (SAP itself does this), it does not work well for OLTP workloads like the S/4HANA Business Suite stack. There is no way around it: you need a scale-up system to make it run right. And that also means, by the way, that Intel will be under pressure from SAP and its hardware partners to keep expanding the memory footprint of its processors and maybe even to add memory compression accelerators to its hardware. (Such advances would perhaps cut into the scale-up advantages that SGI has with the UV 300H and HP has with the Kraken variant of the "DragonHawk" Superdome X machines, so there will be pressure the other way not to do this. It will be interesting to see who wins.)

The financial terms of the reseller agreement that SGI has inked with Dell were not disclosed, but Titinger did confirm that it is not an exclusive deal. That means SGI is free to pursue partnerships with others, the obvious candidates being Cisco Systems and Lenovo, which do not have high-end machines. Fujitsu, Inspur, and Hitachi, which also lack such machines, are possibilities as well. Oracle will probably prefer to focus on selling its Sparc M NUMA systems, which can scale to 32 sockets and 32 TB of memory, running its own applications. And NEC has long been a partner of HP, so that would be its obvious partnership for big Xeon iron running HANA or, for that matter, any other workload.

Dell and SGI have already worked on a half dozen UV 300H deals together, according to Titinger.

Looking ahead, Dell could end up peddling SGI gear for more than just HANA workloads. Titinger confirmed that the Dell reseller agreement covers all of SGI's hardware, including its InfiniteStorage arrays. But for now, both companies are focusing like a laser on the HANA opportunity and will branch out from there. Brian Freed, vice president and general manager of high performance data analytics at SGI, tells us that MemSQL and Spark in-memory datastores are also well suited to running in scale-up fashion on NUMALink-connected shared memory systems, and that dynamic graph analytics databases (not just the read-only ones that run sort of acceptably well on x86 server clusters) are other possible places to peddle UV shared memory iron. Looking out even further, Oracle 12c and SQL Server databases could also be boosted to run faster on UV machines, but SGI is making no promises of a big push, even though it already has Oracle and SQL Server databases running on some UV machines.

For now, the SGI UV 300H systems are certified to run HANA SPS8 on machines with 8 sockets, and Freed says that with HANA SPS9, machines were certified with up to 16 sockets. SGI has certified the UV 300H systems up to 16 sockets and is working on 20 sockets now. With the future HANA SPS10 release, expect another scalability bump, and the code that SAP and SGI are working on now to push scalability higher will come out in SPS11 and SPS12. Eventually, the HANA code will catch up with the hardware, and when it does, SGI's machines will be more scalable than any other HANA box out there.
